OpenAI's enterprise AI stack gets specific

OpenAI is packaging Frontier, ChatGPT Enterprise, Codex, and AWS runtime as one enterprise stack, with CyberAgent as the clearest proof point yet.


OpenAI picked a very specific way to say it is done selling enterprise AI as a loose pile of assistants.

In “The next phase of enterprise AI,” published on Apr. 8, the company said enterprise now makes up more than 40% of revenue and is on track to reach parity with consumer by the end of 2026. In the same post, it said Codex has hit 3 million weekly active users and its APIs now process more than 15 billion tokens per minute. Those are not “interesting pilot” numbers. Those are platform numbers.

A day later, OpenAI followed with a CyberAgent deployment story. It says ChatGPT Enterprise has reached a 93% monthly active usage rate across nearly all departments at the Japanese internet company, and that Codex is being used not just for code generation but also for design review, knowledge documents, and implementation planning. Put the two posts together and the message gets much sharper.

OpenAI is no longer pitching enterprise AI as a bag of copilots. It is packaging Frontier as the operating layer, ChatGPT Enterprise as the daily work surface, Codex as the execution wedge, AWS and systems partners as the deployment rail, and a coming AI superapp as the umbrella experience that keeps the whole thing coherent. If our earlier look at OpenAI's $122 billion infrastructure flywheel argued that compute, product reach, and enterprise revenue were starting to feed the same loop, this week's announcement sequence makes the packaging strategy explicit.

That matters because enterprise software does not break loose on vibes alone. Someone has to approve the contract, wire up permissions, explain governance to security, and decide whether this will become one company system or twenty disconnected bots muttering into Slack.

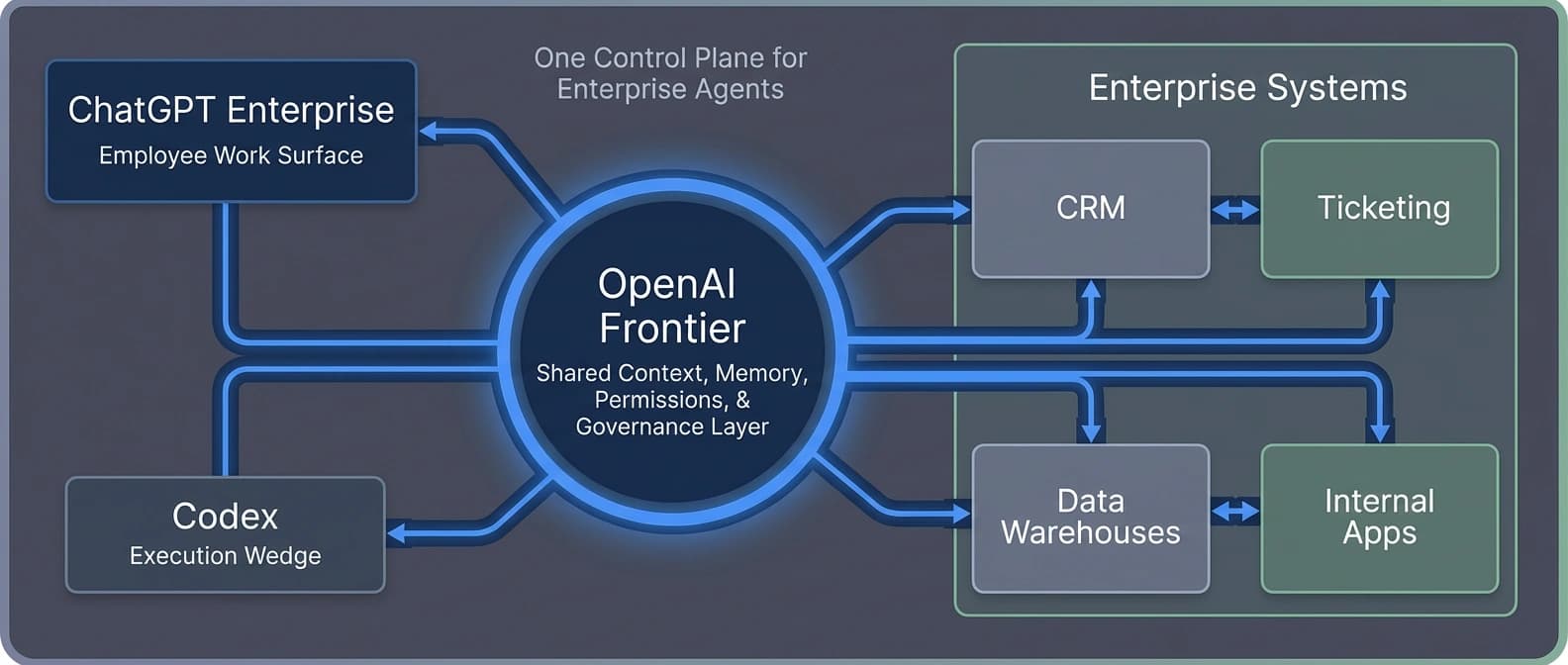

Four layers, one sales story

| Layer | What OpenAI says it does | What it means in practice | Why buyers should care |
|---|---|---|---|
| Frontier | Shared context, memory, permissions, evaluation, open execution | A company-wide control plane for agents across systems | Lets OpenAI sell governance and deployment, not just model access |
| ChatGPT Enterprise | Secure everyday interface for employees | The habit-forming work surface for research, drafting, and action | Reduces rollout friction because workers already know ChatGPT |
| Codex | Agentic execution for engineering and adjacent tasks | The wedge that turns AI from chat into measurable workflow compression | Gives teams a reason to budget for AI beyond generic productivity talk |
| AWS runtime | Stateful environment, cloud distribution, infrastructure scale | The rail that keeps memory, tools, identity, and compute attached | Makes the stack easier to buy as production software rather than a demo |

The shift is political as much as technical. OpenAI is telling enterprises they do not need a different AI tool for every department. They need one governed layer across systems, one familiar surface where employees interact with it, and one execution product that proves the stack can do more than summarize meeting notes.

Frontier is the spine, not the shiny demo

The Frontier launch page is where the stack stops sounding metaphorical. OpenAI says Frontier helps enterprises build, deploy, and manage AI agents with shared context, onboarding, memory, permissions, evaluation loops, and execution across existing systems. In plain English, it is the layer that decides what an agent can see, what it remembers, what “good” looks like, and where it is allowed to act.

That is much more ambitious than “here is another agent builder.” Frontier is a bid to own the semantic and governance layer for enterprise AI, connecting data warehouses, CRMs, ticketing systems, and internal apps so agents do not operate like smart interns who lost the office map.

I keep coming back to one phrase in that pitch, shared business context, because it tells you what OpenAI thinks enterprises are actually missing. Not model intelligence. Not another prompt box. They are missing a common layer that keeps agents from turning into isolated little kingdoms.

This is also why the announcement lines up so neatly with our earlier argument in “OpenAI's agent stack is a distribution play, not a demo.” Frontier takes the same logic one level higher. The company is not just trying to provide models and tools. It wants to sit in the middle of the workflow, where context, permissions, memory, and evaluation get standardized.

There is a useful catch here. OpenAI says Frontier works with open standards and can support agents built in house, acquired from OpenAI, or integrated from other vendors. That is the politically necessary sentence. The commercially important sentence sits right next to it: OpenAI still wants the shared context layer to be its layer. If that happens, the company gains leverage even when someone else's app or agent sits on top.

ChatGPT Enterprise handles habit, Codex handles proof

Every enterprise AI pitch has two jobs: become easy enough for ordinary employees to use, then become concrete enough that finance and operations stop treating it like a morale accessory.

ChatGPT Enterprise is doing the first job. OpenAI's argument is simple: because ChatGPT already has 900 million weekly users on the consumer side, employees do not need much retraining to start using the work version. That bridge between personal familiarity and enterprise rollout is one of the company's strongest cards.

Codex is doing the second job. In the enterprise strategy post, OpenAI said Codex has grown more than 5x since the start of the year and now sits at 3 million weekly active users. That matters because Codex gives OpenAI a route into engineering work where time, rework, and throughput are easier to talk about in adult language. Nobody schedules a serious budget meeting because a chatbot wrote a cheerful summary. They do schedule one when an agent starts compressing design review, code review, implementation planning, and documentation into one lane.

That is why our recent coverage of Codex pay-as-you-go team pricing and Codex plugins as a workflow market matters here. Those stories looked like product tweaks on their own. Inside this week's stack framing, they read more like preparation. OpenAI has been turning Codex from a clever capability into a budgetable, installable, enterprise-shaped surface.

And that surface is getting wider than coding. CyberAgent says teams use Codex to pressure-test design proposals, generate improvement suggestions in code review, and build or maintain knowledge documents such as AGENTS.md. It also says non-developers are starting to use it for specifications and mockups. That is a quiet but important shift. Codex is being positioned less as “the coding model” and more as the execution engine for structured work that happens near software, product, and operations.

The superapp line sounds goofy. The strategy doesn't.

OpenAI says it is building toward a unified AI superapp, one place where employees can work with agents throughout the day and complete tasks across the tools they already use. The phrase will make some people roll their eyes, and fair enough.

A superapp is not just a feature bundle. It is a claim about where work starts. If OpenAI can make ChatGPT Enterprise the place where employees research, draft, browse, code, delegate, and trigger actions across other systems, then the company stops being a helpful vendor inside the workflow and starts being the front door to the workflow.

That gives OpenAI more daily attention, a cleaner path to cross-sell new agent products, and one identity-and-permission fabric enterprises can manage instead of a dozen partial ones. It also makes the stack stickier. Once the same vendor controls the shared context layer, the everyday work surface, the coding wedge, and part of the runtime rail, replacing any single piece gets harder.

CyberAgent gives the story its first decent proof point

OpenAI needed a customer example immediately after the strategy post, and CyberAgent's case study is not subtle about the role it is meant to play.

CyberAgent says ChatGPT Enterprise is used across nearly all departments and has reached a 93% monthly active usage rate. That is the kind of adoption figure enterprise vendors love because it makes AI look less like a side project and more like plumbing. The company also says it did not get there through a blunt mandate. Teams adopted the tools based on their own objectives, OpenAI ran training sessions and workshops, and CyberAgent built internal follow-up loops through Slack when usage dropped.

The more interesting part is what CyberAgent says about Codex. The case study points to three concrete jobs:

- pressure-testing design proposals from multiple perspectives

- generating improvement suggestions during code review

- building and maintaining knowledge documents so agents can work with richer context

That is a much better proof point than “developers used AI to write some code faster.” It says OpenAI wants enterprises to think of Codex as a judgment aid and a workflow accelerator upstream of implementation, not just a code autocomplete with nicer manners.

There is an obvious caveat. This is still a vendor-curated customer story, not an independent deployment audit. Buyers should treat it as evidence of what OpenAI wants the market to picture, not as the final word on enterprise outcomes. But even curated proof points tell you something. In this case, they tell you OpenAI knows the stack pitch only works if it can show adoption outside a toy engineering sandbox.

That is also where the contrast with Mistral Forge's enterprise model ownership pitch gets interesting. Mistral is telling enterprises they should want more control over their own model layer. OpenAI is telling them they should want a cleaner operating layer and faster deployment. One story sells ownership. The other sells coordinated convenience.

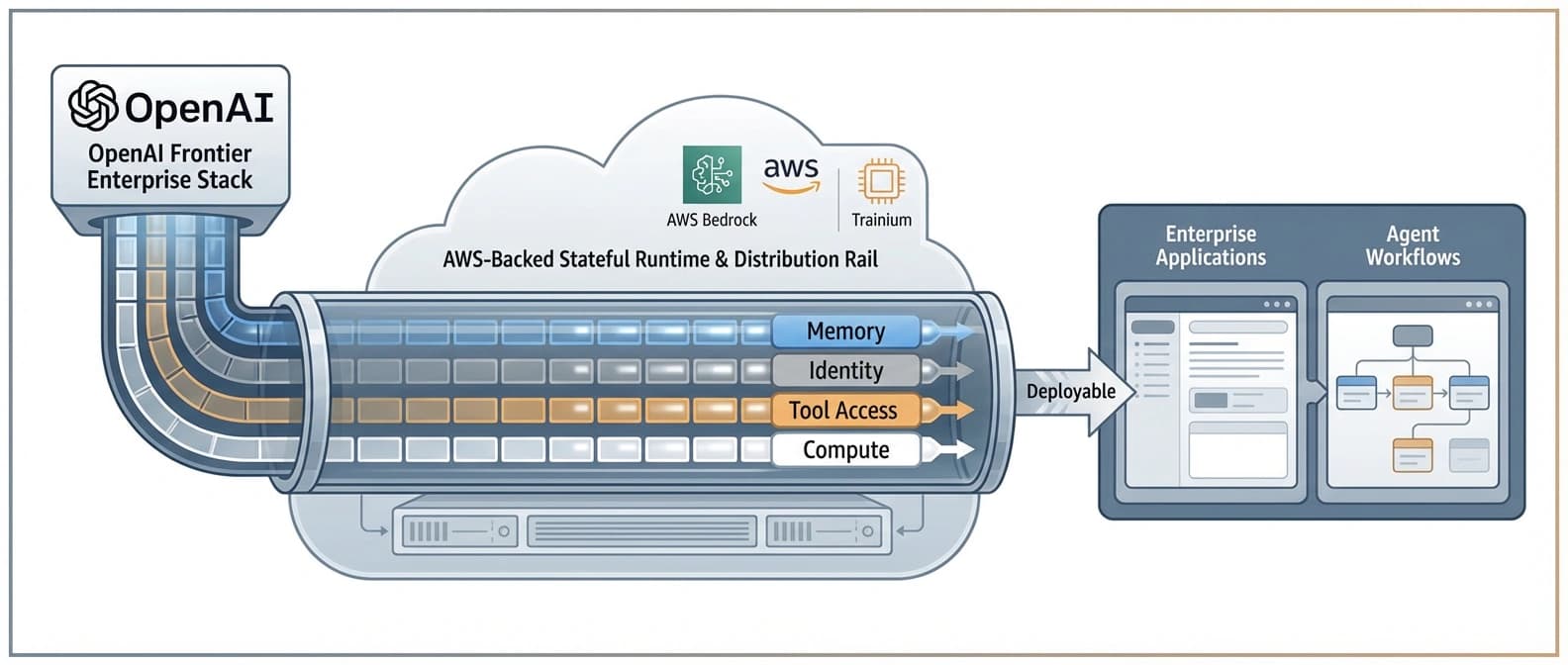

AWS and the partner layer turn a product pitch into a buying path

In the Amazon partnership announcement, OpenAI says AWS will be the exclusive third-party cloud distribution provider for Frontier and that the two companies are co-creating a Stateful Runtime Environment, available through Amazon Bedrock, so customers can build agents and generative AI applications at production scale. OpenAI also says it will consume about 2 gigawatts of Trainium capacity through AWS infrastructure to support demand for the runtime, Frontier, and related workloads.

That gives OpenAI a credible answer to the enterprise question that always arrives right after the demo: where does this actually run, and how does it fit the systems we already have? Stateful runtime, memory, identity, tool access, and Bedrock distribution are not glamorous phrases, but they are exactly the phrases procurement teams and architecture groups expect to hear before anything real gets deployed.

It also turns OpenAI's partner ecosystem into more than decorative logos. In the enterprise strategy post, the company names McKinsey, BCG, Accenture, Capgemini, Databricks, Snowflake, and AWS. Read together, that list tells you OpenAI is trying to cover the whole chain: strategy consultants to sell the program, data and cloud partners to wire the systems, and OpenAI's own layer in the middle to collect the long-term value.

It is a familiar enterprise move, just with more agent language attached to it. And it reinforces the point from the infrastructure flywheel piece: compute scale, product bundling, and enterprise packaging are no longer separate stories for OpenAI. They are becoming one story.

One stack is convenient. One stack is also lock-in with better copy.

The bottom line is not that OpenAI suddenly invented enterprise AI. The bottom line is that it finally stopped describing enterprise AI as a set of adjacent features.

Now the company is describing a hierarchy. Frontier governs. ChatGPT Enterprise distributes. Codex executes. AWS helps run the stateful rail. Partners help wire the buyer's world into the system. The proposed superapp ties the experience together so employees do not have to think about where one layer ends and another begins.

That is a far more serious enterprise pitch than “here are some assistants.” It is also a much stickier one.

OpenAI clearly thinks large companies are tired of point solutions that do not talk to each other and do not share permissions, context, or memory. It is probably right. The harder question is whether those same companies want relief badly enough to let one vendor sit in the middle of the semantic layer, the daily work surface, the coding workflow, the runtime environment, and part of the cloud distribution path.

CyberAgent gives OpenAI its first clean answer: some buyers do, especially when adoption is already broad and the workflow gains look tangible. But one case study is not market closure. OpenAI still has to prove the stack holds up across more companies, more compliance environments, and more departments that do not live anywhere near engineering.

For now, though, the strategic shift is hard to miss. OpenAI is no longer selling enterprise AI as a bag of copilots.

It is selling the company layer where the agents live.

Source file

Public source trail

These sources anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

- OpenAI, “The next phase of enterprise AI” (Apr. 8): primary source for OpenAI's new enterprise framing, the revenue mix claim, Codex weekly usage, API token volume, and the AI superapp thesis.

- OpenAI, Frontier launch page: primary source for Frontier's platform claims around shared context, memory, permissions, execution environment, and open enterprise deployment.

- OpenAI, Amazon partnership announcement: primary source for the AWS runtime, Bedrock distribution path, Trainium capacity commitment, and exclusive third-party cloud distribution language around Frontier.

- OpenAI, CyberAgent case study: primary source for the CyberAgent deployment narrative, 93% monthly active usage figure, and Codex use cases beyond straightforward code generation.

About the author

Idris Vale

Idris writes about the institutional machinery around AI, but the lens is broader than policy alone: procurement frameworks, public-sector buying rules, platform leverage, compliance burdens, workflow risk, and the market structure hiding beneath product or infrastructure headlines. The through-line is practical power, not abstract theater.

- 23

- Apr 10, 2026

- Brussels · London corridor

Archive signal

Reporting lens: Follow the buying process, not just the bill text. Signature: Policy turns real when someone has to buy the system.

Article details

- Category: AI Products

- Last updated: April 11, 2026

- Public sources: 4 linked source notes

Byline

Tracks the institutions, incentives, and market structure that quietly decide which AI systems get deployed and why.