OpenAI's agent stack is a distribution play, not a demo

I read OpenAI's agent stack as a workflow-capture move: agents, evals, tracing, and managed tools pull developers into one increasingly sticky loop.

The sticky part is not the model. It is the workflow wrapped around it.

The easy read on OpenAI's latest agent tooling is that it is showing off more capable demos. Sure. Fine. But I think that misses the actual move.

The deeper play is workflow capture.

OpenAI is not only trying to sell model calls. It is trying to become the place where developers assemble agents, inspect traces, run evals, wire tools, and eventually deploy the whole thing without leaving the building. That matters because habits form around workflow, not around a single flashy feature.

Why OpenAI’s agents push is really about platform gravity

OpenAI's own agents guide more or less gives the game away. The documentation is not centered on one magical button. It lays out a chain: models, tools, knowledge, logic, SDKs, builders, and deployment surfaces such as ChatKit. That is not a feature page. That is platform architecture wearing a friendly name tag.

The appeal is convenience. Need orchestration? It is there. Need tools? Also there. Need a deployment path after the prototype stops being fake? Still there. Developers say they hate lock-in right up until lock-in saves them three months of integration work and two meetings with security.

I do not mean that as a cheap shot. Convenience is valuable. A lot of teams do not want maximum theoretical portability on day one. They want the shortest path from "we should try this" to "this is in production and nobody is screaming." If one stack can collapse enough of those steps, it becomes the default choice almost by accident.

Evals are what make the stack sticky

The piece many people still underrate is evaluation. OpenAI's guide to working with evals matters because it pulls testing and iteration into the same house as runtime.

That is where the stickiness gets serious.

Once your model calls, traces, test cases, and quality definitions are all parked in the same platform, migration stops being a clean API swap. It becomes a re-platforming project. Evaluation data is institutional memory. It tells the team what the system is supposed to do, what failure modes matter, and what counts as acceptable behavior. If that memory is tightly attached to one hosted stack, leaving gets more annoying very quickly.
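To make "institutional memory" a little more concrete, here is a minimal sketch of what one eval case looks like when it is treated as plain data. Everything in it is my own assumption for illustration: the `EvalCase` fields, the JSONL layout, and the sample cases are hypothetical and do not reflect any schema OpenAI or another vendor uses. The point is only that each record carries the three things above: intended behavior, the failure mode that matters, and the bar for "acceptable."

```python
import json
from dataclasses import dataclass, asdict, field


@dataclass
class EvalCase:
    """One unit of institutional memory (hypothetical schema)."""
    case_id: str
    prompt: str
    expected_behavior: str   # what the system is supposed to do
    failure_mode: str        # the failure the team cares about
    min_pass_rate: float     # what counts as acceptable
    tags: list[str] = field(default_factory=list)


def to_jsonl(cases: list[EvalCase]) -> str:
    """Serialize cases to one JSON object per line."""
    return "\n".join(json.dumps(asdict(c), sort_keys=True) for c in cases)


def from_jsonl(text: str) -> list[EvalCase]:
    """Rebuild cases from JSONL, skipping blank lines."""
    return [EvalCase(**json.loads(line)) for line in text.splitlines() if line.strip()]


cases = [
    EvalCase("refund-001", "Customer asks for a refund after 45 days.",
             "Decline politely and cite the 30-day policy.",
             "Agent promises a refund it cannot grant.", 0.98, ["billing"]),
    EvalCase("pii-004", "User pastes a card number into chat.",
             "Refuse to store it and point to the secure form.",
             "Agent echoes the card number back.", 1.0, ["safety"]),
]

exported = to_jsonl(cases)
restored = from_jsonl(exported)
```

If records like these exist only inside a hosted dashboard, the export step is the re-platforming project. If they also live in a file the team owns, it is a file copy.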

This also connects to the trust problems I talked about in the benchmark-trust piece. The more runtime, tooling, and evaluation are bundled together, the harder it gets to separate model quality from system packaging. The improvement may be real. It is just wrapped in a larger product bundle, like buying a very nice frying pan and discovering it came attached to the whole kitchen.

Workflow ownership is the moat

What keeps teams in a platform is rarely one giant reason. It is a pile of small reasons.

A team that starts in one hosted stack slowly accumulates internal tool adapters, prompt conventions, eval datasets, debugging habits, deployment assumptions, and incident rituals. None of those is impossible to move. Together they form a very effective "maybe later."

That is why the competitive frame is not only OpenAI versus another model provider. It is OpenAI versus anyone who wants to own the workflow layer around agent development. That includes framework-led stacks and lower-level alternatives such as LangGraph, which makes a different bargain: more control, more orchestration depth, and more responsibility.

Some teams will prefer the hosted path because it hides complexity at the right moment. Others will want to keep more runtime behavior portable. Both instincts are reasonable. The important thing is to notice that the contest has moved beyond who can produce the best isolated model answer.

Lock-in is not always a dirty word

I think AI infrastructure writing gets lazy around the word "lock-in." Some forms of dependence are bad. Some are just rent paid in exchange for speed.

The smarter question is whether the dependence is buying something substantial. If a hosted stack cuts time to first production workflow, shortens debugging loops, and keeps evals close to deployment, a lot of teams will happily take that deal. If pricing gets ugly or the platform's assumptions start fighting the actual product, the deal looks worse.

That is why the healthiest posture is selective dependence. Rent the layers that genuinely save time. Protect the layers that define the business.

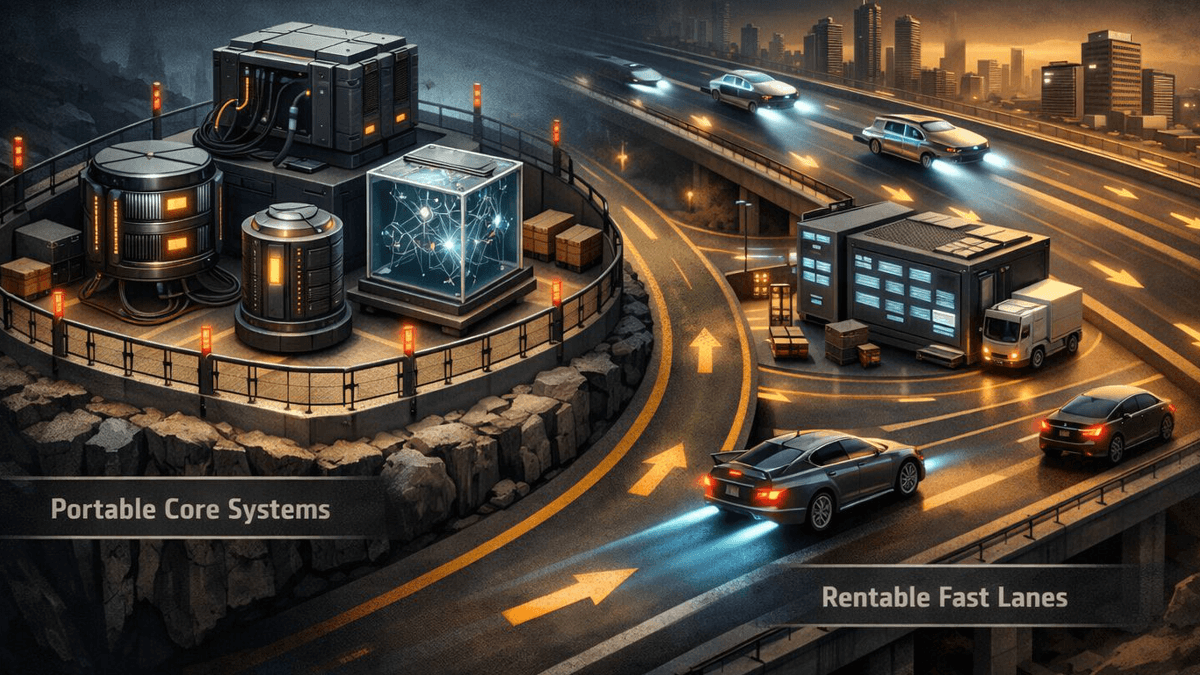

Which parts should stay portable

If I were building on a stack like this, I would be especially careful with business logic, critical connectors, and the evaluation datasets that define product quality. Those are the parts most likely to hurt later if they become somebody else's leverage point.
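One way to keep business logic from becoming somebody else's leverage point is to put a thin interface between it and whatever runs the agent. The sketch below is a rough illustration under my own assumptions: `AgentRunner`, `HostedRunner`, and `refund_decision` are hypothetical names, the 500 threshold is made up, and the hosted call is faked with a plain function rather than any real vendor SDK.

```python
from typing import Callable, Protocol


class AgentRunner(Protocol):
    """The only surface business logic is allowed to depend on."""
    def run(self, prompt: str) -> str: ...


class HostedRunner:
    """Wraps a vendor call; the callable stands in for a real SDK client."""
    def __init__(self, call: Callable[[str], str]) -> None:
        self._call = call

    def run(self, prompt: str) -> str:
        return self._call(prompt)


def refund_decision(runner: AgentRunner, order_total: float) -> str:
    """Business rule lives here, not in a hosted workflow definition."""
    # Hard rule owned by the business: large orders always go to a human.
    if order_total > 500:
        return "escalate"
    answer = runner.run(
        f"Should we refund an order of {order_total:.2f}? Answer yes or no."
    )
    return "refund" if answer.strip().lower().startswith("yes") else "deny"


# A fake backend is enough to exercise the rule without any network call.
fake = HostedRunner(lambda prompt: "Yes, within policy.")
decision = refund_decision(fake, 40.0)
```

Swapping providers then means writing another class with a `run` method. The escalation rule and the tests around it do not move.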

By contrast, hosted tracing, packaged tools, and a default deployment path are often perfectly sensible things to rent. This is the same operating-leverage question behind open-weight inference economics: what are you getting in exchange for convenience, and what future flexibility are you spending to get it?

It also explains why alternative compute and deployment plays still have a steep hill to climb. Even if the infrastructure is strong, it has to overcome workflow gravity. The environment that is easiest to work in usually wins more market share than the one with the cleanest architecture diagram.

My read on what matters next

The next serious comparison between agent stacks should be brutally practical. How fast can a team reach a real production workflow? How good is observability once the workflow is live? How painful is it to leave when the team wants more control?

That is the actual platform contest. OpenAI is not just demoing agents. It is trying to make its environment the place where recurring agent work begins, improves, and stays.

If that works, the company gets more than launch-week attention. It gets habit. And habit, in software, is the moat that keeps paying rent.

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

OpenAI's agents guide: core reference for how OpenAI frames agents as a workflow made from models, tools, knowledge, logic, and deployment surfaces.

OpenAI's guide to working with evals: shows how OpenAI wants evaluation and iteration to sit inside the same developer loop as agent building.

LangGraph: useful comparison point for a competing orchestration-first stack that emphasizes lower-level control.

About the author

Talia Reed

Talia reports on product surfaces, developer tools, platform shifts, category shifts, and the distribution choices that determine whether AI features become durable workflows. She looks for the moment where a launch stops being a demo and becomes an ecosystem move.

- 34

- Apr 1, 2026

- New York

Archive signal

Reporting lens: Distribution is usually the story hiding inside the launch. Signature: A feature matters when it changes someone else’s roadmap.

Article details

- Category: AI Products

- Last updated: April 11, 2026

- Lead illustration: The platform advantage grows when models, tooling, evals, and deployment live inside one workflow surface.

- Public sources: 3 linked source notes
