Mistral Forge turns enterprises into model owners

Mistral Forge is a pitch for buyers that want models they can shape and control, not just rented APIs with a nicer enterprise wrapper.

Forge is not really selling access to a model. It is selling the chance to turn company knowledge into a model asset the buyer can control.

Mistral did not launch Forge as just another enterprise wrapper with a nicer dashboard. The more interesting read is that it wants to change what enterprise buyers think they are buying in the first place.

A generic hosted API makes a company an AI customer. Forge is pitched to make that same company feel like a model owner.

That is a much bigger ambition. In Mistral's launch post, the company describes Forge as a system for building frontier-grade models grounded in proprietary enterprise knowledge. The product page makes the commercial pitch clearer: domain alignment, end-to-end training, enterprise evaluation, governance, and deployment flexibility. None of those ingredients is new on its own. Put together, they add up to a pitch about ownership.

Why Mistral Forge is selling ownership instead of rented intelligence

A lot of enterprise AI spending still works like a rental market. Companies pay for someone else's model, attach retrieval, add a long system prompt, and hope the whole bundle behaves. That can be perfectly rational. It is also why so many enterprise agents still feel like temps wearing the wrong badge.

Mistral is making the opposite bet. Instead of treating proprietary knowledge as something attached at query time, Forge treats it as something that can be encoded into the model across pre-training, post-training, and reinforcement learning stages. The company says customers can train on internal documentation, codebases, structured data, and operational records so the resulting model learns domain vocabulary, reasoning patterns, and organizational constraints.

That shifts the enterprise question from "which API should we rent?" to "which parts of our company should become model behavior?" That is a more serious conversation, especially for agents that need to navigate internal tools, follow policy, and make decisions inside a company's actual workflow instead of a demo environment.

Enterprise evals are what make the ownership pitch believable

This is where Forge gets more interesting than a plain fine-tuning service. Mistral is not only selling customization. It is selling a loop: tune the model, test it against internal criteria, improve it again, and then deploy it where the risk profile makes sense.

That evaluation layer matters because enterprise buyers do not need a model that sounds impressive in a conference clip. They need a model that clears their own acceptance tests. Forge's product page explicitly emphasizes evaluation frameworks tied to enterprise KPIs rather than generic public benchmarks.

That is smart. It is also necessary.

This part connects directly to our benchmark-trust recession explainer. If a vendor cannot show how the model behaves against the buyer's own failure cases, leaderboard bragging does not buy much confidence. Mistral seems to understand that. It is pitching customization and measurement together, which is the only version that feels real.

The company also says Forge supports continuous improvement and reinforcement learning, not just one-off tuning. That matters because an owned asset gets iterated. A rented API gets swapped the second procurement gets itchy.
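The loop described in this section hinges on an acceptance gate the buyer defines. As a rough illustration only, the Python sketch below scores a model against internal test suites and passes it only if every suite meets its own threshold. Everything in it is hypothetical: `EvalCase`, `pass_rate`, `clears_gate`, the stub model, and the sample policy cases are invented for this example and are not part of Forge or any Mistral API.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class EvalCase:
    """One internal test case: a prompt plus a check on the model's answer."""
    name: str
    prompt: str
    passes: Callable[[str], bool]


def pass_rate(model: Callable[[str], str], cases: List[EvalCase]) -> float:
    """Fraction of cases whose check accepts the model's answer."""
    if not cases:
        raise ValueError("an acceptance suite needs at least one case")
    passed = sum(1 for case in cases if case.passes(model(case.prompt)))
    return passed / len(cases)


def clears_gate(
    model: Callable[[str], str],
    suites: Dict[str, List[EvalCase]],
    thresholds: Dict[str, float],
) -> bool:
    """True only if every suite meets its own minimum pass rate."""
    return all(
        pass_rate(model, suites[name]) >= minimum
        for name, minimum in thresholds.items()
    )


# Hypothetical stand-in for a tuned model; a real one would call an endpoint.
def stub_model(prompt: str) -> str:
    if "refund" in prompt.lower():
        return "Refunds over 500 EUR need manager approval."
    return "I don't know."


# Hypothetical internal suite: the buyer's own failure cases, not a public benchmark.
suites = {
    "policy": [
        EvalCase("refund-limit", "What is the refund approval rule?",
                 lambda answer: "manager approval" in answer),
        EvalCase("no-guessing", "What is our Q7 revenue?",
                 lambda answer: "don't know" in answer),
    ],
}

print(clears_gate(stub_model, suites, {"policy": 1.0}))
```

The design choice worth noticing is that thresholds live with the buyer, per suite. A model that improves on average but regresses on one policy suite still fails the gate, which is closer to how an acceptance test behaves than a single leaderboard number.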

Deployment choice is what turns customization into control

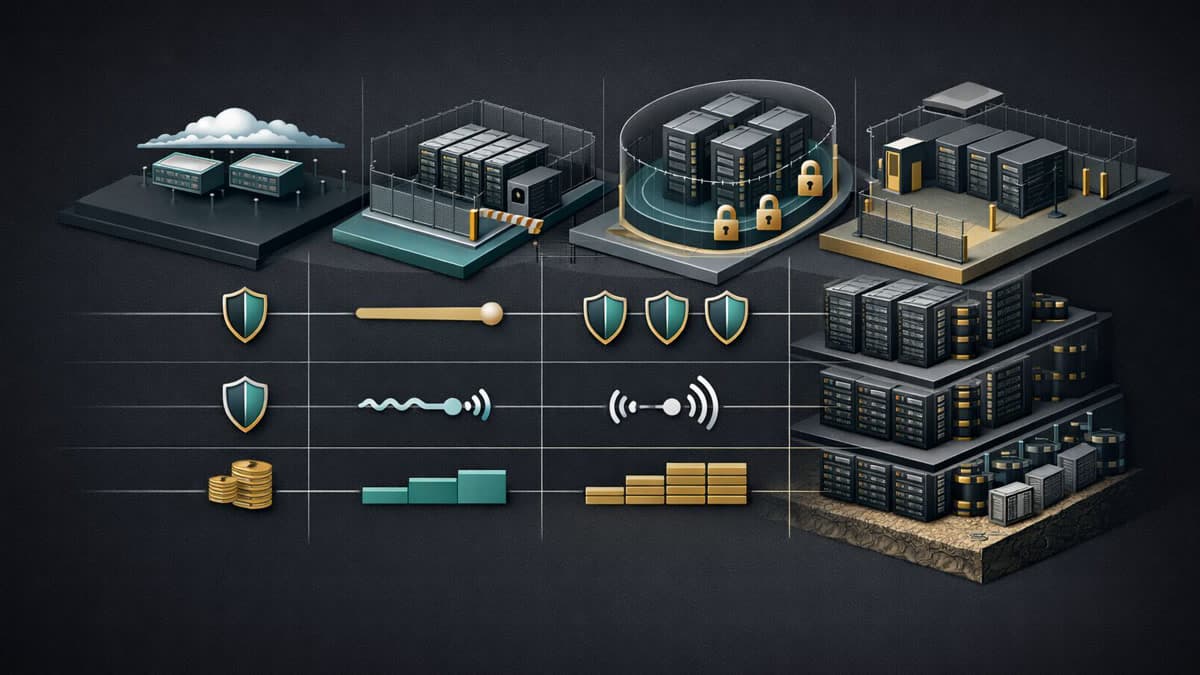

The other reason Forge feels strategic is that Mistral keeps pairing customization with deployment flexibility. Its self-deployment documentation says Mistral models can run through vLLM, TensorRT-LLM, or TGI, and it pitches self-hosted paths for fuller enterprise setups.

That matters because customization without deployment choice can still leave the buyer stuck. A company that trains a better model but can only consume it through one vendor-controlled hosted stack has gained performance, but not much sovereignty.

A company that can choose among managed deployment, private cloud, or self-hosted infrastructure has more room to match the model to compliance, latency, residency, and cost requirements. That ties directly into open-weight inference economics: control only becomes meaningful when it can change how and where inference runs.
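One way to picture that portability, as a sketch rather than a description of Forge itself: if the serving layer exposes an OpenAI-compatible chat endpoint, which vLLM's built-in server does, then moving between environments can come down to changing a base URL. The helper below only builds the request and never sends it. The function name, both URLs, and the model name are hypothetical.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, user_message: str) -> urllib.request.Request:
    """Build (but do not send) a POST to an OpenAI-compatible chat endpoint."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Hypothetical endpoints and model name, for illustration only:
# a local self-hosted server and an internal private-cloud gateway.
for base_url in ("http://localhost:8000", "https://llm.internal.example"):
    req = build_chat_request(base_url, "acme-forge-model", "Summarize policy 12.")
    print(req.get_method(), req.full_url)
```

The client code stays the same across both targets. Only the base URL changes, which is the practical meaning of being able to decide where inference runs.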

It also makes Forge feel different from the workflow-capture logic behind OpenAI's agent-platform shift or Google AI Studio's full-stack push. Those companies want to own the workflow around hosted intelligence. Mistral is trying to sell ownership of the intelligence asset itself, or at least a credible path toward it.

Forge fits Mistral’s broader open-model story

Forge did not land out of nowhere. Mistral's Small 4 announcement already leaned hard on openness, efficiency, fine-tunability, and day-zero deployment across common serving stacks. NVIDIA's Nemotron Coalition announcement pushed the same broader thesis by describing Mistral as part of a wider push toward open frontier models that organizations can post-train and specialize.

That context matters because Forge is not just a product SKU. It is Mistral trying to turn its open-and-customizable identity into an enterprise buying thesis.

My read on what enterprises are really buying

If Forge works, the customer is not mainly buying model training. The customer is buying a different posture toward AI.

Instead of renting a model and wrapping policy around it afterward, the company gets to push more of its proprietary knowledge, evaluation logic, and deployment requirements into the model lifecycle itself. That should make enterprise agents more legible inside real workflows and less dependent on generic assumptions.

There is still a tension here. Forge sells autonomy, but it is autonomy mediated by Mistral's tooling, recipes, and services. So this is not pure self-reliance. It is more like buying your own kitchen inside somebody else's building.

Still, the strategic direction is clear. Mistral wants enterprise buyers to stop thinking like API renters and start thinking like owners of a model asset shaped by their own institutional knowledge. That is a more serious pitch than most launch-week coverage gave it credit for.

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Mistral's Forge launch post: primary announcement defining Forge as a system for building frontier-grade models grounded in proprietary enterprise knowledge.

Forge product page: details the custom training, enterprise evaluation, governance, and deployment-flexibility pitch.

Mistral's self-deployment documentation: confirms Mistral’s support for self-deployment paths and its recommendation of vLLM and other inference engines.

Mistral's Small 4 announcement: shows the broader Mistral strategy around open, customizable, efficient models that enterprises can fine-tune or deploy directly.

NVIDIA's Nemotron Coalition announcement: provides broader context on Mistral’s role in open frontier model development and specialization as an enterprise platform argument.

About the author

Talia Reed

Talia reports on product surfaces, developer tools, platform shifts, category shifts, and the distribution choices that determine whether AI features become durable workflows. She looks for the moment where a launch stops being a demo and becomes an ecosystem move.

- 34

- Apr 1, 2026

- New York

Archive signal

Reporting lens: Distribution is usually the story hiding inside the launch. Signature: A feature matters when it changes someone else’s roadmap.

Article details

- Category: AI Products

- Last updated: April 11, 2026

- Lead illustration: Forge packages training, evaluation, and deployment choice into a bid for enterprise model ownership rather than pure API consumption.

- Public sources: 5 linked source notes

Byline

Covers product surfaces, tools, and the adoption moves that turn AI features into durable habits.