OpenAI Codex Gets Pay-as-You-Go Team Pricing

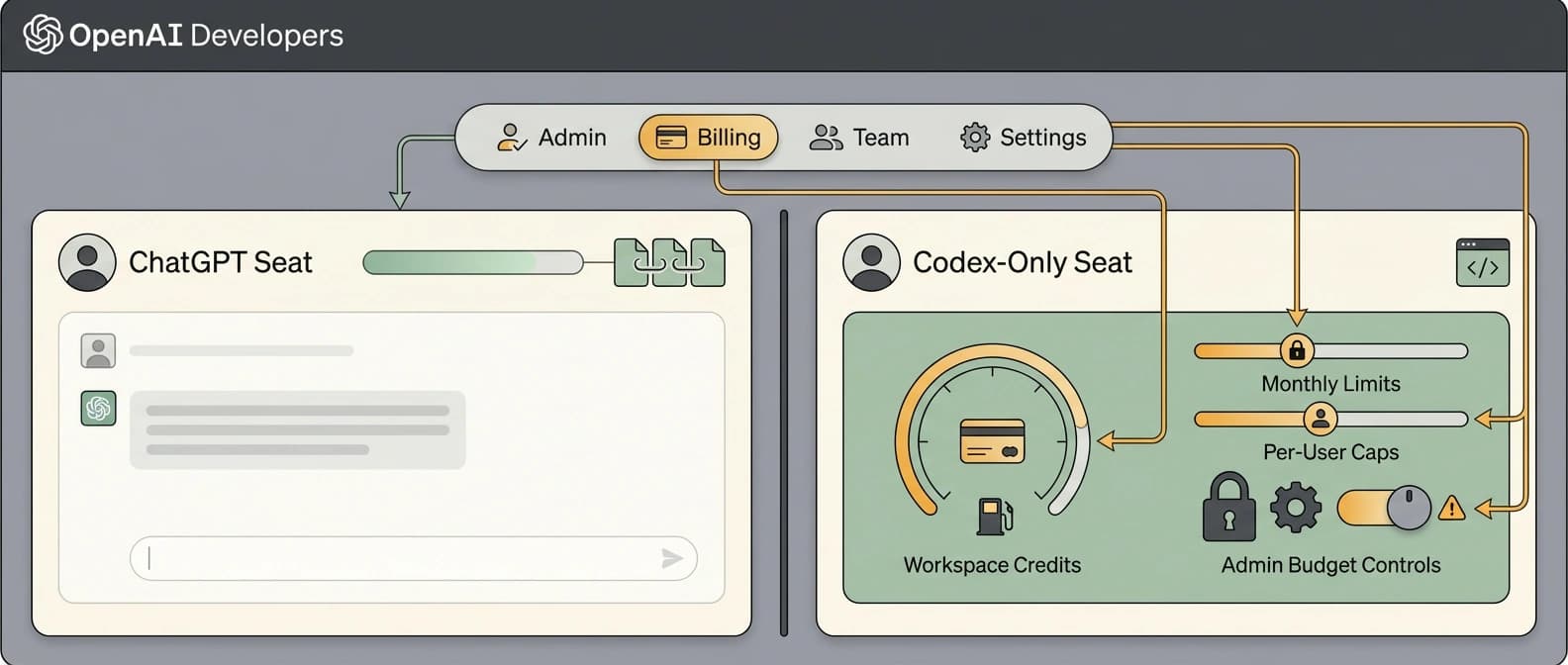

OpenAI split Codex from the bundled ChatGPT seat, adding Codex-only seats, token billing, credits, and spend controls that make small team pilots easier to approve.

The product story is not that Codex got cheaper. The real shift is that OpenAI turned “no rate limits” into “please see budget settings.”

OpenAI just changed what a Codex rollout looks like inside a company. In its April 2 launch post, the company said ChatGPT Business and Enterprise workspaces can now add Codex-only seats with pay-as-you-go pricing, giving teams full Codex access without paying a fixed seat fee for a full ChatGPT subscription. That may sound like a small packaging tweak. It is not. It is OpenAI taking the coding agent out of the buffet line and putting it on a meter.

That shift matters because bundled products are easy to demo and annoying to budget. Metered products are the opposite. Nobody throws a party for token accounting, but finance teams love a receipt. I do not say that with malice. I say it as someone who has watched enough “strategic AI adoption” decks die the moment somebody asked, very reasonably, what the thing would cost after week two.

OpenAI Codex pay-as-you-go pricing changes the seat model

The launch post says Business and Enterprise customers can add Codex-only seats, while standard ChatGPT Business seats still exist and still include Codex usage limits. OpenAI also lowered the annually billed price of ChatGPT Business from $25 to $20 per seat per month, which is a neat bit of pricing stagecraft: unbundle the specialist product, then sweeten the general one so the room does not get tense.

The bigger change is operational. OpenAI says Codex-only seats have no rate limits, but that phrase now comes with an asterisk the size of a billing console. Usage is billed on token consumption, and teams can track spend across budgets, workflows, and teams. In other words, “no rate limits” now means “you may continue, but accounting has entered the chat.”

That is a real improvement for companies that wanted to test Codex without handing every prospective user a full ChatGPT seat. A team can now start with a few Codex users, run focused pilots, and see whether the agent actually saves time on code review, implementation, or workflow automation before expanding access. For adoption, this is much more useful than another round of vague “contact sales” mist.

The new Codex rate card turns usage into math instead of vibes

The pricing page and the Codex rate card help article make the billing change explicit. OpenAI is moving from approximate per-message credit estimates to a token-based rate card that charges different credit amounts for input tokens, cached input tokens, and output tokens.

For example, the current token-based card lists GPT-5.4 at 62.50 credits per million input tokens, 6.25 credits per million cached input tokens, and 375 credits per million output tokens. GPT-5.3-Codex is listed at 43.75 / 4.375 / 350 credits per million input, cached input, and output tokens respectively, and GPT-5.1-Codex-mini comes in much lower at 6.25 / 0.625 / 50. Fast mode consumes 2x as many credits. Code review runs on GPT-5.3-Codex.
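To make that arithmetic concrete, here is a minimal Python sketch that turns the three listed rates into a credit estimate for a single task. The rates and the 2x fast-mode multiplier come from the figures quoted above; the function name, the dictionary layout, and the assumption that cached input tokens are billed separately from uncached input tokens are illustrative, not OpenAI's billing code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rate:
    """Credits charged per one million tokens of each kind."""
    input: float
    cached_input: float
    output: float


# Rates as quoted in the article; check the live rate card before relying on them.
RATE_CARD = {
    "GPT-5.4": Rate(62.50, 6.25, 375.0),
    "GPT-5.3-Codex": Rate(43.75, 4.375, 350.0),
    "GPT-5.1-Codex-mini": Rate(6.25, 0.625, 50.0),
}

FAST_MODE_MULTIPLIER = 2.0  # "Fast mode consumes 2x as many credits."


def estimate_credits(model, input_tokens, cached_input_tokens, output_tokens,
                     fast_mode=False):
    """Estimate credits for one task.

    Assumption: input_tokens counts only uncached input, and cached input
    is billed on its own line at the cached rate.
    """
    rate = RATE_CARD[model]
    credits = (
        input_tokens * rate.input
        + cached_input_tokens * rate.cached_input
        + output_tokens * rate.output
    ) / 1_000_000
    return credits * FAST_MODE_MULTIPLIER if fast_mode else credits


if __name__ == "__main__":
    # Hypothetical task: 200k fresh input, 800k cached input, 50k output tokens.
    print(estimate_credits("GPT-5.3-Codex", 200_000, 800_000, 50_000))
    print(estimate_credits("GPT-5.3-Codex", 200_000, 800_000, 50_000, fast_mode=True))
```

In that hypothetical task the 50k output tokens account for more credits than the one million input tokens combined, which is why output-heavy work deserves its own line in any pilot estimate.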

That may sound painfully granular if you miss the old world where software pricing could hide behind the phrase “generous limits.” I get it. Nobody wakes up hoping to compare cached-input pricing tables. Still, this is the kind of boring that makes a product easier to buy. Teams can estimate heavy-output tasks differently from light prompt-and-edit work, and they can model what happens if developers switch models or lean harder on cached context.

OpenAI also says the new token-based rate card currently applies to new and existing Business customers and to new Enterprise customers. That nuance matters. The launch post frames Codex-only seats as available to Business and Enterprise workspaces, but the rate-card docs are more specific about who is already on the new metered model. So the clean way to say it is this: the seat option reaches more customers than the new rate card currently does, and some existing Enterprise or Edu customers may still be on the legacy message-based card while migration continues.

Codex-only seats and spend controls make pilots easier to approve

This is where the story gets more interesting than a spreadsheet. OpenAI’s help docs say ChatGPT Business now supports two seat types: standard ChatGPT seats with fixed monthly pricing and usage-based Codex seats. A workspace can mix both. That is not just a billing detail. It is product segmentation with a hard hat on.

Admins can add workspace credits, turn on automatic recharge, and set monthly credit usage limits by seat type or even by specific user. By default, OpenAI says seats and users have no limits specified, but admins can set caps for Codex seats while keeping different limits for standard ChatGPT seats.
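One way to picture how those caps might stack is the small Python sketch below. It assumes a per-user limit overrides the seat-type limit and that an unset limit means unlimited, which matches the description above, but the function names, data shapes, and example users are hypothetical, and what OpenAI actually does when a cap is reached is not covered by the source.

```python
from typing import Dict, Optional


def effective_limit(user: str, seat_type: str,
                    seat_limits: Dict[str, float],
                    user_limits: Dict[str, float]) -> Optional[float]:
    """Return the monthly credit cap for a user, or None if uncapped.

    Assumption: a per-user override wins over the seat-type limit.
    """
    if user in user_limits:
        return user_limits[user]
    return seat_limits.get(seat_type)  # None when no limit is specified


def within_limit(used: float, requested: float, limit: Optional[float]) -> bool:
    """True if spending `requested` more credits stays inside the cap."""
    return limit is None or used + requested <= limit


if __name__ == "__main__":
    seat_limits = {"codex": 2_000.0}      # hypothetical cap for Codex seats
    user_limits = {"infra-lead": 10_000.0}  # hypothetical higher per-user cap

    for user in ("infra-lead", "occasional-user"):
        cap = effective_limit(user, "codex", seat_limits, user_limits)
        print(user, cap, within_limit(1_900.0, 200.0, cap))
```

The point of the sketch is the precedence order, not the numbers: a default of "no limit" only stays safe if someone actually sets the seat-type cap.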

That changes the internal politics of agent adoption. Instead of approving a broader seat rollout up front, a company can say: give the infra team a higher Codex budget, give the occasional users a lower cap, and stop the whole thing from turning into an accidental hobby farm. This is a much easier sentence to take into a budgeting meeting than “trust us, the agent is powerful.”

There is also a limited-time incentive attached. OpenAI says eligible ChatGPT Business workspaces can receive $100 in credits for each new Codex-only team member who joins and starts using Codex, up to $500 per team. Which is very thoughtful. Nothing says “enterprise readiness” quite like a coupon, but to be fair, coupons work.

Why this pricing shift matters for coding-agent adoption

The practical story is not that Codex suddenly became cheap for every workload. Some teams will spend less. Some will spend more. Output-heavy tasks, fast mode, and long-running jobs can burn credits faster than people expect. OpenAI says average Codex cost runs around $100 to $200 per developer per month, with wide variance depending on models, number of running instances, automations, and fast mode usage.

What changed is that Codex is now easier to pilot, easier to govern, and easier to treat as its own product line inside a company. That lines up with the broader Codex distribution push we just saw through the Codex plugin directory, and it fits the same adoption pattern we have been tracking in pieces on the coding-agent orchestration bottleneck, Claude Code’s growing aftermarket, and the wider OpenAI agents platform shift.

The old bundled-seat story treated Codex as one more perk inside a bigger subscription. The new story treats it like a team product with its own meter, its own controls, and its own approval path. That is less glamorous, but far more usable. If you are a manager trying to get a coding agent through procurement without sounding like you swallowed a keynote, this is good news.

And that, to me, is the real April 2 update. OpenAI did not merely tweak Codex pricing. It gave companies a way to buy the coding agent separately, test it in smaller doses, and keep one eye on the usage dashboard while pretending that was always the plan.

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Launch post announcing Codex-only seats, token billing, spend controls, and the lower annual ChatGPT Business seat price.

Pricing page showing the token-based rate card, model-specific credit rates, and which Business and Enterprise customers are currently on the new billing model.

Help article detailing the token-based rate card, legacy rate card, and migration nuance across plan types.

Explains workspace credits, automatic recharge, seat-type limits, and per-user overrides for Codex usage in Business.

Useful context because the pricing shift lands right after OpenAI broadened Codex distribution through plugins and connected workflow tooling.

About the author

Idris Vale

Idris writes about the institutional machinery around AI, but the lens is broader than policy alone: procurement frameworks, public-sector buying rules, platform leverage, compliance burdens, workflow risk, and the market structure hiding beneath product or infrastructure headlines. The through-line is practical power, not abstract theater.

- 23

- Apr 10, 2026

- Brussels · London corridor

Archive signal

Reporting lens: Follow the buying process, not just the bill text. Signature: Policy turns real when someone has to buy the system.

Article details

- Category: AI Tools

- Last updated: April 11, 2026

- Public sources: 5 linked source notes

Byline

Tracks the institutions, incentives, and market structure that quietly decide which AI systems get deployed and why.