Claude Opus 4.6 Fast Mode is now routable on OpenRouter

OpenRouter has publicly listed Anthropic's new Claude Opus 4.6 Fast Mode SKU, exposing the same model in a faster beta lane with 6x pricing, separate limits, and a very practical routing decision for operators.

Fast Mode is not a new Claude brain. It is the same Opus 4.6 sold in a much faster lane at a much less democratic price.

Anthropic did not release a brand-new supermodel here. It released a faster lane for the same one, and OpenRouter has now made that lane publicly visible as a separate thing you can route to.

That is why this story matters.

On Anthropic's side, Fast Mode is explicitly a beta research preview for Claude Opus 4.6. The company says it uses the same model with a faster inference configuration, delivers up to 2.5x higher output tokens per second, and does not promise faster time to first token. On OpenRouter's side, the public models API now lists anthropic/claude-opus-4.6-fast with a created timestamp of 2026-04-07T20:07:52Z, and the public model page describes it as the same Opus 4.6 with higher output speed at premium 6x pricing.

Put those two facts together and the operator story snaps into focus. This is not a new intelligence tier. It is latency sold as a SKU.

That may sound like a small distinction, but it is the kind of small distinction that ends up changing routing tables, budget conversations, and product defaults. We already saw Google move in a similar direction when it started selling Flex and Priority inference lanes in the Gemini API. Anthropic's version is narrower and pricier. Instead of a broad service-tier menu, it has taken one premium model and added an even more premium speed setting.

If you run coding agents, browser agents, or long multi-step workflows, that is a real decision. Not every delay matters. Some delays matter a lot. The annoying part, as usual, is that the invoice suddenly has opinions.

Claude Opus 4.6 Fast Mode OpenRouter news: what actually changed

Anthropic shipped the product definition first. The docs show how to call standard Opus 4.6 with speed: "fast" plus a beta header. In other words, Fast Mode exists in Anthropic's API as a service option layered onto the existing model.
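As a rough sketch of what "speed: fast plus a beta header" means on the wire, the snippet below only builds the headers and body for a Messages API call and sends nothing. The model string and the beta flag value are assumptions for illustration (the flag is left as an obvious placeholder), so take the real values from Anthropic's Fast Mode docs before using anything like this.

```python
import json

# Assumptions for illustration: neither the exact API model string nor the
# beta flag value is given in this article. Copy both from Anthropic's docs.
MODEL_ID = "claude-opus-4-6"              # assumed API model string
FAST_MODE_BETA = "<fast-mode-beta-flag>"  # placeholder, not a real flag value


def build_messages_request(prompt: str, fast: bool, api_key: str = "YOUR_API_KEY"):
    """Return (headers, body) for a Messages API call, optionally in Fast Mode."""
    headers = {
        "x-api-key": api_key,
        "anthropic-version": "2023-06-01",
        "content-type": "application/json",
    }
    body = {
        "model": MODEL_ID,
        "max_tokens": 1024,
        "messages": [{"role": "user", "content": prompt}],
    }
    if fast:
        # Same model either way: Fast Mode only adds a speed option and a beta header.
        headers["anthropic-beta"] = FAST_MODE_BETA
        body["speed"] = "fast"
    return headers, body


if __name__ == "__main__":
    headers, body = build_messages_request("Summarize this diff.", fast=True)
    print(json.dumps(body, indent=2))
```

The point of the sketch is the shape: the model field is identical in both cases, and the fast lane is expressed entirely through one body field and one header.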

OpenRouter changes the packaging.

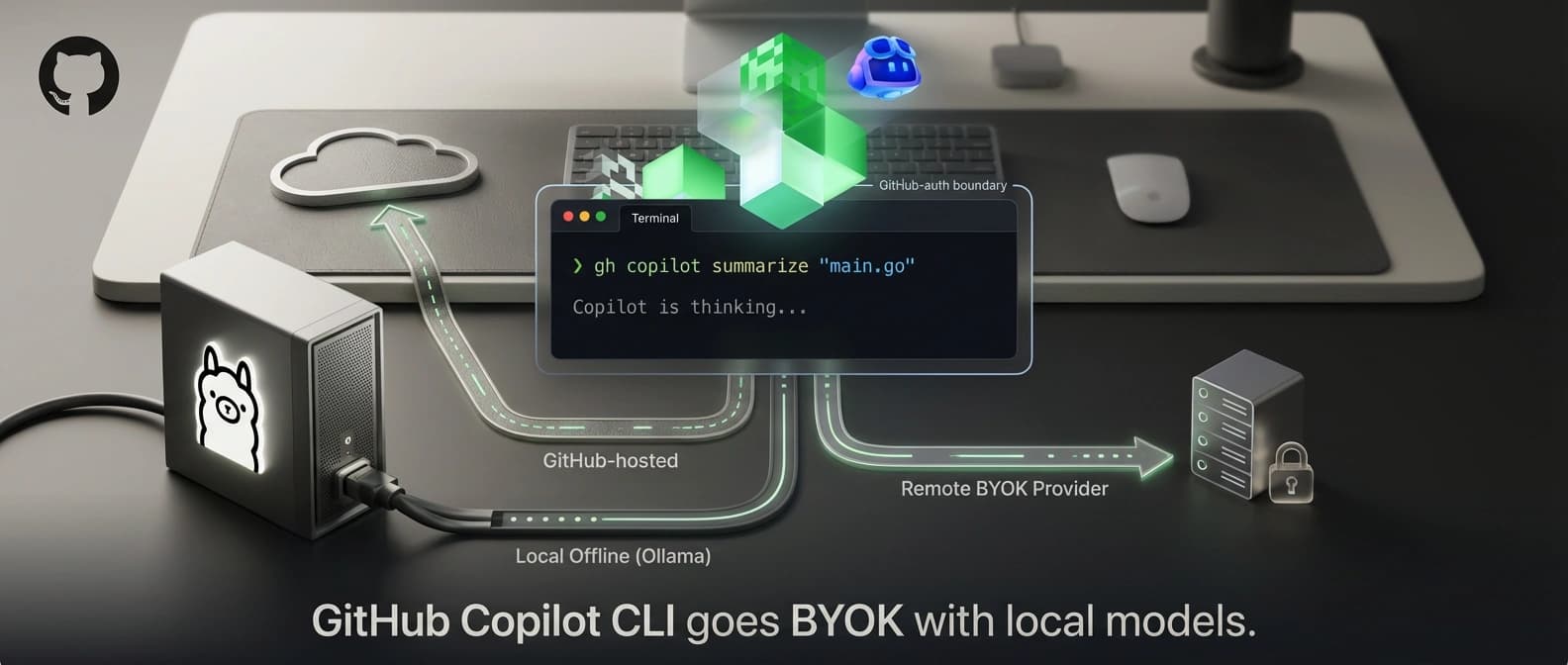

By exposing anthropic/claude-opus-4.6-fast as its own public model ID, OpenRouter turns a beta speed flag into a distinct route target. That matters for two reasons.

First, it makes discovery easier. A feature hidden in docs is something infra teams discuss in a Slack thread. A separate model listing is something product teams, procurement people, and orchestration layers can actually see, compare, and wire up.

Second, it changes the mental model. Anthropic says, correctly, that Fast Mode is not a different model. OpenRouter is not contradicting that. But a router still has to name the route somehow, and once a route gets its own listing, it starts behaving like a SKU whether the underlying weights changed or not.

That distinction sounds nerdy because it is nerdy. It is also commercially important. Operators do not buy abstract purity. They buy things they can route, meter, and fall back from.

Here is the short version.

| Question | Standard Claude Opus 4.6 | Claude Opus 4.6 Fast Mode | OpenRouter exposure |
|---|---|---|---|
| New model weights? | No | No | No |
| Intelligence upgrade? | Baseline | Same model, same behavior | Same as Anthropic claim |
| Speed benefit | Standard | Up to 2.5x higher output tokens/sec | Routed as separate target |
| TTFT improvement promised? | No special claim | No, docs say benefit is OTPS not TTFT | No separate claim needed |
| Standard pricing | $5 in / $25 out per MTok | N/A | Mirrors source pricing |
| Fast pricing | N/A | $30 in / $150 out per MTok | Mirrors source pricing |
| Access model | Broadly available Opus 4.6 | Beta research preview, waitlist-limited | Publicly listed and routable |
| Limits | Standard Opus controls | Separate fast-mode limits | Depends on router plus upstream availability |

The big story is in the last two rows. Anthropic treats Fast Mode as a limited beta configuration. OpenRouter treats it as a publicly surfaced route. That turns documentation into distribution.

What Claude Opus 4.6 Fast Mode actually is, and what it is not

Anthropic's docs are unusually clear here, which is refreshing. Fast Mode runs the same model with a faster inference configuration. The docs spell out three points that should kill most of the confusion before it breeds.

- It is the same model weights and behavior.

- The gain is in output tokens per second.

- The gain is not necessarily time to first token.

That means Fast Mode is not a smarter Opus, not a hidden Opus 4.7, and not some surprise reasoning leap wearing sunglasses. It is Opus 4.6 with more aggressive serving.

Why does that distinction matter so much? Because too many buyers hear "faster" and mentally translate it to "better." Sometimes faster really is better, especially when a user is staring at a live coding session or a long-running agent that needs to show visible progress. But faster does not change the model's judgment, domain knowledge, or ceiling on difficult reasoning. It just gets the same brain talking faster.

That is still valuable. For agent operators, the bottleneck often is not whether the model can solve the task. It is whether the workflow stays tolerable while it solves it. Our recent piece on the AI coding agent orchestration bottleneck made a similar point from the workflow side: a lot of agent pain is waiting, handoff, and tool churn rather than pure raw capability.

So yes, speed can matter a lot. Just do not let anyone sell you new intelligence when the vendor documentation is plainly describing a new service lane.

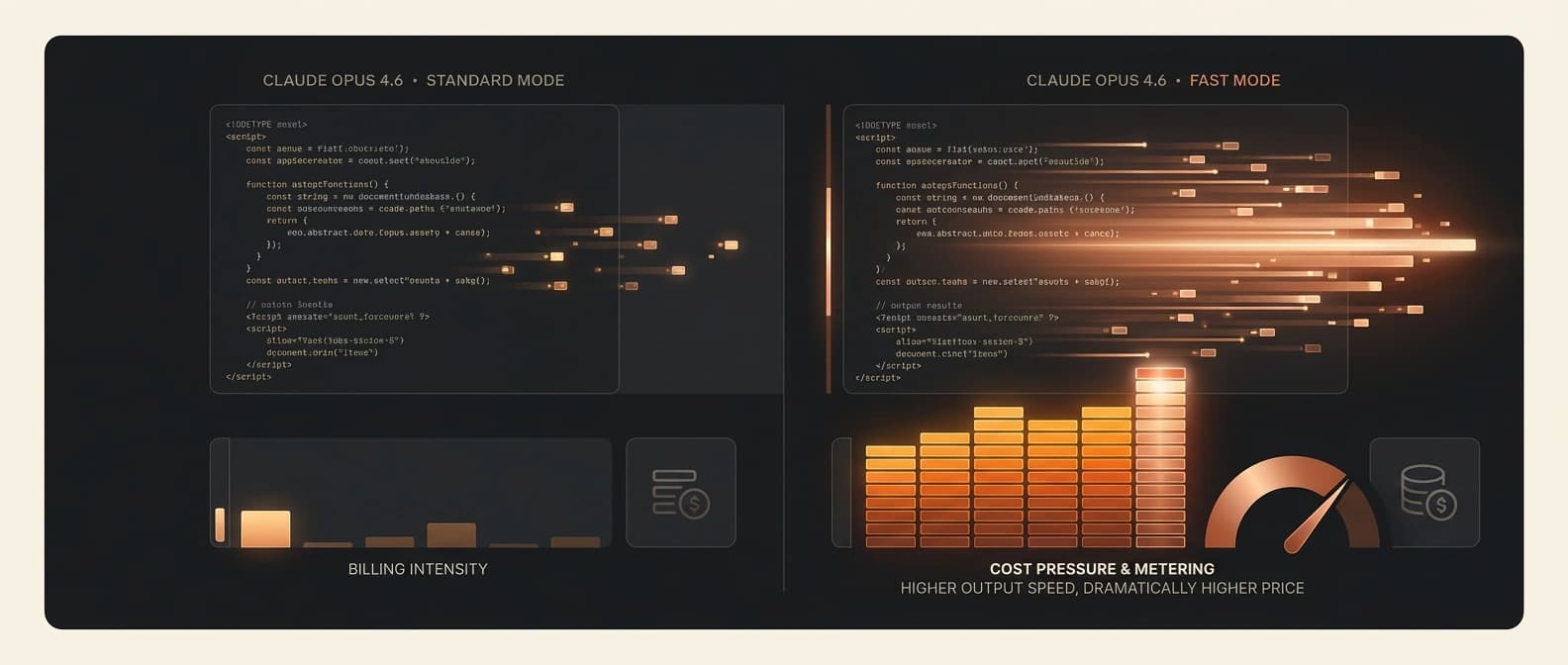

Claude Opus 4.6 Fast Mode pricing: the premium is the whole plot

Anthropic prices standard Claude Opus 4.6 at $5 per million input tokens and $25 per million output tokens. Fast Mode comes in at $30 input and $150 output per million tokens.

That is exactly 6x standard Opus pricing.

This is the part where the story stops being technical and becomes managerial. Plenty of teams can talk themselves into paying more for lower latency. Far fewer can do it once the multiplier is six and the model is not actually changing.

| Pricing lane | Input price | Output price | Multiplier vs standard Opus 4.6 |
|---|---|---|---|
| Standard Opus 4.6 | $5 / MTok | $25 / MTok | 1x |
| Opus 4.6 Fast Mode | $30 / MTok | $150 / MTok | 6x |

Anthropic also says Fast Mode pricing applies across the full 1M-token context window, including requests above 200k input tokens. That is a subtle but important detail. There is no discounted "only the big prompt is standard-priced" loophole here. If you choose Fast Mode, you are paying premium rates across the request.
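To make the multiplier concrete, here is a small sketch that prices one request in each lane using the per-MTok rates quoted above. The `Lane` class, the function name, and the 20,000-in / 4,000-out request size are illustrative assumptions, not anything from Anthropic's docs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Lane:
    name: str
    input_per_mtok: float   # USD per million input tokens
    output_per_mtok: float  # USD per million output tokens


STANDARD = Lane("standard", 5.0, 25.0)
FAST = Lane("fast", 30.0, 150.0)


def request_cost(lane: Lane, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request, priced flat across the whole context window."""
    return (input_tokens * lane.input_per_mtok
            + output_tokens * lane.output_per_mtok) / 1_000_000


if __name__ == "__main__":
    # Hypothetical request size chosen only to keep the arithmetic readable.
    for lane in (STANDARD, FAST):
        print(f"{lane.name}: ${request_cost(lane, 20_000, 4_000):.2f}")
```

For that hypothetical request, the standard lane comes to $0.20 and the fast lane to $1.20. Because both rates scale by the same factor, the ratio stays at 6x whatever the input/output mix.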

So when is that worth it? Usually when output speed itself is the product experience.

Think live coding help, long tool-rich responses, or agent flows where the model emits a lot of visible output and the human is actively waiting. If the main pain is watching a rich answer stream out too slowly, Fast Mode can make economic sense. If the main pain is that your app spends too long before the first token arrives, the docs are basically warning you not to expect magic.

There is a funny little honesty in that. Anthropic is not saying, "Everything gets faster." It is saying, "The talking part gets faster." That is much less glamorous, and much more useful.
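A back-of-the-envelope model shows why the "talking part" framing matters. The sketch below treats a response as time to first token plus streaming time, and applies the documented "up to 2.5x" figure only to the streaming part. The baseline of 40 output tokens per second and the 2-second TTFT are made-up numbers for illustration, not measurements.

```python
def response_seconds(ttft_s: float, output_tokens: int, otps: float) -> float:
    """Wall-clock time for one response: wait for the first token, then stream."""
    return ttft_s + output_tokens / otps


BASELINE_OTPS = 40.0  # assumed standard output tokens/sec, illustration only
TTFT_S = 2.0          # assumed time to first token, left unchanged by Fast Mode
SPEEDUP = 2.5         # the documented "up to" figure, so this is a best case

for tokens in (80, 4000):
    std = response_seconds(TTFT_S, tokens, BASELINE_OTPS)
    fast = response_seconds(TTFT_S, tokens, BASELINE_OTPS * SPEEDUP)
    print(f"{tokens} tokens: standard {std:.1f}s, fast {fast:.1f}s, saved {std - fast:.1f}s")
```

Under those assumed numbers, an 80-token reply saves about a second, while a 4,000-token reply saves about a minute. The premium is the same 6x in both cases, which is why long visible outputs are where the lane earns its price.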

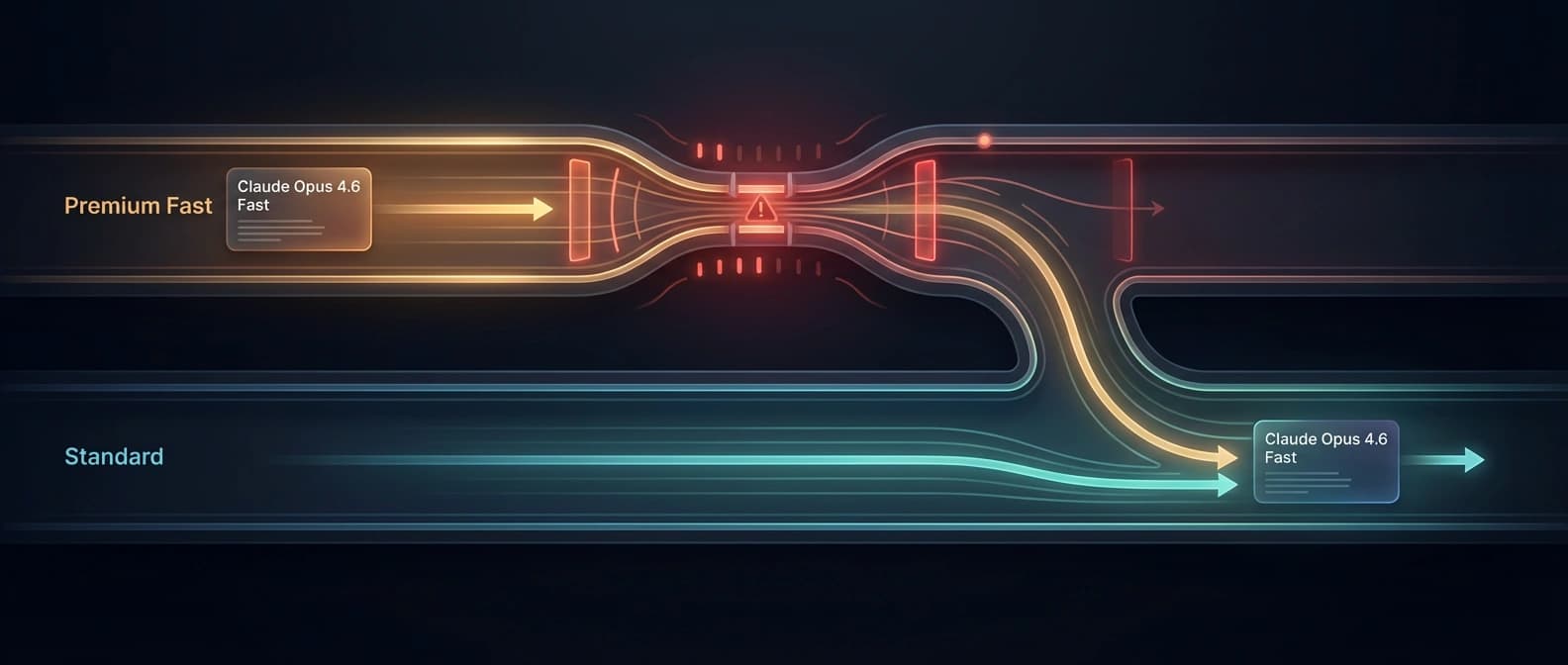

Claude Fast Mode rate limits and fallback are not footnotes

This is another place where the docs matter more than the launch gloss.

Anthropic says Fast Mode has dedicated rate limits separate from standard Opus rate limits. If you exceed them, the API returns a 429 with a retry-after header. The docs also describe two ways to handle that moment:

- let the SDK retry and wait for fast capacity

- catch the rate-limit error and retry without speed: "fast"

That second option is the practical one for many teams. In plain English, Fast Mode is meant to be something you can fall back from. Anthropic even notes that if you fall back from fast to standard speed, you will take a prompt-cache miss because different speeds do not share cached prefixes.
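The two options can be combined into one small policy: wait once if the retry-after value is short, otherwise drop to standard speed. The sketch below is deliberately SDK-agnostic. `RateLimited`, the `send` callable, and the `max_wait_s` threshold are hypothetical stand-ins, not Anthropic SDK names, and a real client would also need to drop the beta header on the standard retry if the API requires that.

```python
import time
from typing import Callable, Optional


class RateLimited(Exception):
    """Hypothetical stand-in for an SDK's 429 error, carrying retry-after."""

    def __init__(self, retry_after_s: Optional[float] = None):
        super().__init__("429 rate limited")
        self.retry_after_s = retry_after_s


def call_with_fallback(send: Callable[[dict], dict], body: dict,
                       max_wait_s: float = 2.0,
                       sleep: Callable[[float], None] = time.sleep) -> dict:
    """Try Fast Mode; on a 429 either wait briefly once or drop to standard speed."""
    fast_body = {**body, "speed": "fast"}
    try:
        return send(fast_body)
    except RateLimited as err:
        wait = err.retry_after_s
        if wait is not None and wait <= max_wait_s:
            sleep(wait)  # short wait: fast capacity is worth one more try
            try:
                return send(fast_body)
            except RateLimited:
                pass  # still limited, fall through to standard speed
    # Standard speed: same model, no speed option. Expect a prompt-cache miss
    # here, since cached prefixes are not shared across speeds.
    standard_body = {k: v for k, v in body.items() if k != "speed"}
    return send(standard_body)
```

Keeping `max_wait_s` explicit turns "how long is fast capacity worth waiting for" into a tunable number rather than an accident of SDK retry defaults.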

That is not a cute implementation detail. It affects real costs and latency.

A serious deployment therefore needs a policy, not just a toggle. Do you wait for fast capacity because the user experience is worth it? Do you fall back immediately because spending time in retry purgatory is worse than losing the speed boost? Do you reserve Fast Mode only for certain turns? These are routing questions, not model-philosophy questions.

And they sound a lot like the service-tier conversations now happening across the market. Google's Flex and Priority split sells that choice more explicitly. Anthropic is doing it inside one flagship model. Either way, the industry keeps converging on the same awkward truth: one inference lane is not enough anymore.

There is also an access caveat. Anthropic calls Fast Mode a beta research preview and points users to a waitlist. So direct first-party access is not being framed as universally open. That is another reason the OpenRouter listing is interesting. It does not prove universal availability in practice, and I am not treating it that way. But it does make the SKU publicly visible and selectable in a way that feels more operational than a hidden beta doc.

That alone will get the attention of the people who manage cross-provider routing. They already spend their days deciding when to use a cheaper model, a faster lane, or a fallback stack. Fast Mode gives them one more expensive little lever to argue about.

Why OpenRouter makes Claude Opus 4.6 Fast Mode feel bigger than a beta flag

A lot of model features stay tucked inside provider docs and never become real market objects. OpenRouter has a habit of changing that by making them legible as routable inventory.

That is what seems to be happening here.

The OpenRouter models API does more than acknowledge Fast Mode exists. It gives the route a stable ID, public metadata, pricing, context length, and a plain-English description that says it is the fast-mode variant of Opus 4.6 with identical capabilities and premium 6x pricing. In other words, OpenRouter is not selling a mystery box. It is packaging Anthropic's own framing into a router-native object.
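For teams that want to check a listing like this programmatically, a minimal sketch follows. It works on an inline sample shaped like a models-feed response rather than calling the live API. The field names (`data`, `id`, `created`, `context_length`, `pricing`) and the per-token price strings are assumptions for illustration, so verify them against the real feed before relying on them.

```python
from datetime import datetime, timezone
from decimal import Decimal
from typing import Optional

# Illustrative payload shaped like a models-feed response. Field names and
# values are assumptions for this sketch, not a capture of the live API.
SAMPLE_FEED = {
    "data": [
        {
            "id": "anthropic/claude-opus-4.6-fast",
            "created": int(datetime(2026, 4, 7, 20, 7, 52, tzinfo=timezone.utc).timestamp()),
            "context_length": 1_000_000,
            "pricing": {"prompt": "0.00003", "completion": "0.00015"},  # USD per token
        }
    ]
}


def find_model(feed: dict, model_id: str) -> Optional[dict]:
    """Return the feed entry with the given ID, or None if it is not listed."""
    return next((m for m in feed.get("data", []) if m.get("id") == model_id), None)


def usd_per_mtok(per_token: str) -> Decimal:
    """Convert a per-token price string into USD per million tokens."""
    return Decimal(per_token) * 1_000_000


entry = find_model(SAMPLE_FEED, "anthropic/claude-opus-4.6-fast")
if entry is not None:
    created = datetime.fromtimestamp(entry["created"], tz=timezone.utc)
    print(entry["id"], created.isoformat())
    print("input $/MTok:", usd_per_mtok(entry["pricing"]["prompt"]))
    print("output $/MTok:", usd_per_mtok(entry["pricing"]["completion"]))
```

Using `Decimal` for the price strings avoids float rounding when the numbers later feed a budget report.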

That matters most for teams already building provider-agnostic or mixed-provider stacks. If your orchestration layer already switches between vendors, a separate route target is cleaner than special-casing an Anthropic-specific speed parameter deep in application logic. The router abstraction is the product.
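In code, the difference is roughly this: with a separate route target, the lane becomes a lookup in a route table instead of a provider-specific parameter threaded through application logic. The sketch below assumes a chat-completions style request body. The lane names are hypothetical, and the standard model ID is assumed by dropping the "-fast" suffix from the listed one.

```python
# Hypothetical lane names mapped to router model IDs. The fast ID is the one
# listed publicly; the standard ID is assumed by dropping the "-fast" suffix.
ROUTES = {
    "standard": "anthropic/claude-opus-4.6",
    "fast": "anthropic/claude-opus-4.6-fast",
}


def build_router_request(lane: str, messages: list) -> dict:
    """Build a chat-completions style body where the lane is just the model ID."""
    if lane not in ROUTES:
        raise ValueError(f"unknown lane: {lane!r}")
    return {"model": ROUTES[lane], "messages": messages}


if __name__ == "__main__":
    msgs = [{"role": "user", "content": "Explain this stack trace."}]
    print(build_router_request("fast", msgs)["model"])
```

Swapping lanes, or adding a cheaper fallback model from another vendor, then becomes a one-line change to the table.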

We have seen that routing layer become more strategic in other contexts too, including the mixed-stack dynamics behind GLM-5.1, Claude Code, and OpenClaw. Once teams stop thinking in terms of one sacred provider and start thinking in terms of workflows, every routable performance tier starts to look like infrastructure.

There is also a broader supply-side backdrop here. Anthropic's premium lanes only make sense if the company is increasingly serious about where scarce compute goes, which is hard to separate from the bigger compute story around its multi-gigawatt infrastructure push with Google and Broadcom. When compute is expensive and demand is lumpy, selling access classes becomes a very rational habit.

Again, not glamorous. Extremely real.

When Claude Opus 4.6 Fast Mode is worth paying for, and when it probably is not

The simplest way to think about Fast Mode is to ask one brutally boring question: Is output speed itself worth 6x for this turn?

If yes, maybe buy it. If no, absolutely do not let the words "premium lane" hypnotize you.

| Use case | Fast Mode verdict | Why |
|---|---|---|
| Live coding or troubleshooting with long visible outputs | Strong candidate | The user sees output streaming and feels the difference directly |
| Agent turns with big tool-rich writeups shown to a human | Strong candidate | OTPS matters when the answer itself is the waiting experience |
| Background research or enrichment | Usually no | No human is watching, so the premium mostly burns money |
| Short prompts with tiny outputs | Usually no | Faster output on 80 tokens is not much of a business model |
| Heavy workflows where TTFT is the main pain | Weak candidate | Anthropic does not position Fast Mode as a TTFT fix |
| Reliability-sensitive flows with clear standard fallback | Maybe | Can work if your fallback logic is disciplined and measured |

That last row matters. A lot of teams will not run Fast Mode everywhere. They will run it selectively, then fall back to standard speed when limits hit or when the customer simply does not justify luxury inference.

And that is probably the healthy way to think about it. Not as a new default, but as a premium operator tool for the moments that earn it.
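One way to encode that selectivity is a per-turn gate that mirrors the table's verdicts. Everything in this sketch is an assumption for illustration: the `Turn` fields, the 1,500-token threshold, and the lane names are placeholders a team would replace with its own measurements.

```python
from dataclasses import dataclass


@dataclass
class Turn:
    human_waiting: bool          # is someone watching the output stream?
    expected_output_tokens: int  # rough estimate for this turn
    ttft_bound: bool             # is the delay mostly before the first token?


# Illustrative threshold, not a recommendation from any vendor.
MIN_OUTPUT_TOKENS_FOR_FAST = 1500


def choose_lane(turn: Turn) -> str:
    """Return "fast" only when output speed is the waiting experience."""
    if not turn.human_waiting:
        return "standard"  # background work: the premium mostly burns money
    if turn.ttft_bound:
        return "standard"  # Fast Mode is not positioned as a TTFT fix
    if turn.expected_output_tokens < MIN_OUTPUT_TOKENS_FOR_FAST:
        return "standard"  # tiny outputs gain little from higher OTPS
    return "fast"
```

A live coding turn with a long visible answer would come back as "fast", and most other turns would stay on the standard lane.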

This is especially relevant for developers already annoyed by opaque limits and traffic shaping around Anthropic products, something we covered in our piece on Claude Code session limits becoming a trust issue. Once users are already sensitive to how access, throughput, and hidden constraints behave, a premium speed tier is not just a performance story. It is a trust and routing story too.

Claude Opus 4.6 Fast Mode OpenRouter: the real takeaway

The cleanest read is also the least dramatic one.

Anthropic has created a faster premium serving lane for the same Opus 4.6 model. OpenRouter has now surfaced that lane as a separate public route. That does not mean a new model arrived. It means latency, limits, and pricing just got a little more modular.

I think that is the real headline worth keeping.

Not "Anthropic launched a new frontier model." Not "OpenRouter unlocked a secret Claude." Just this: the market is getting better at selling service characteristics as distinct products. Same weights. Different lane. New routing decision.

That will look increasingly normal from here.

Because once one provider can charge more for faster output on the same model, every operator has to decide whether speed belongs in the model selector, the routing layer, or the finance team's nightmares. Usually all three.

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Primary source for the product definition: Fast Mode is a beta research preview for Claude Opus 4.6, uses the same model, targets higher output tokens per second rather than time to first token, carries separate fast-mode rate limits, and documents fallback behavior.

Primary source for standard Opus 4.6 pricing and the Fast Mode pricing table showing $30 input and $150 output per MTok, or 6x standard rates.

Confirms baseline Opus 4.6 pricing, 1M-token context window, and that Opus 4.6 remains Anthropic's broadly available flagship model while Fast Mode is framed as a configuration on top of it rather than a separate intelligence tier.

Primary listing source showing anthropic/claude-opus-4.6-fast in the public models feed with created timestamp 2026-04-07T20:07:52Z, 1M context, and a description pointing back to Anthropic's Fast Mode docs.

Near-primary public model page confirming OpenRouter is surfacing Fast Mode as a separate routable model page with premium 6x pricing and explicit same-capability framing.

About the author

Maya Halberg

Maya writes across the AI field, from research claims and benchmark narratives to tools, products, institutional decisions, and market shifts. Her reporting stays focused on what changes once hype meets deployment, procurement, workflow reality, and human skepticism.

- Apr 11, 2026

- Stockholm · Remote

Archive signal

Reporting lens: Methodology over launch theater. Signature: A result only matters after the setup becomes legible.

Article details

- Category: AI Tools

- Last updated: April 11, 2026

- Public sources: 5 linked source notes
