GPT-5.2 Codex pushes Codex into cloud work

GPT-5.2 Codex matters less as a model bump than as a shift to cloud software work, with fresh environments, network controls, and reviewable diffs.

The interesting part of GPT-5.2 Codex is not the decimal point. It is the job description.

OpenAI has already spent the last few weeks widening Codex’s reach through pay-as-you-go team pricing, a plugin push that reached into Claude Code, and a broader plugin directory built to distribute workflows. Those were important moves, but they still left one question hanging in the air: what exactly is Codex becoming?

GPT-5.2 Codex gives the clearest answer yet. The product is no longer easiest to understand as fancy autocomplete, or even as a chatty coding helper that happens to know how to use a terminal. The live Codex docs now describe something more operational: delegated cloud tasks, fresh environments, controlled internet access, progress tracking, and reviewable diffs that can turn into pull requests. That is a different shape of product.

There is one awkward sourcing wrinkle here, and it is worth saying plainly. OpenAI’s sitemap shows the introducing-gpt-5-2-codex launch URL was freshly updated on April 7, but mirrored fetches of that same URL still surface overlapping older copy. So the cleanest current picture of what Codex is becoming comes from OpenAI’s own Codex docs and its new guide on how OpenAI uses Codex internally. Thankfully, those sources are unusually revealing. They make the cloud-work story hard to miss.

GPT-5.2 Codex matters because Codex now behaves like remote software work

The current Codex product page says Codex can read, edit, and run code. That verb set matters. Plenty of tools can explain a file or draft a patch. Fewer are being framed as a place where work runs.

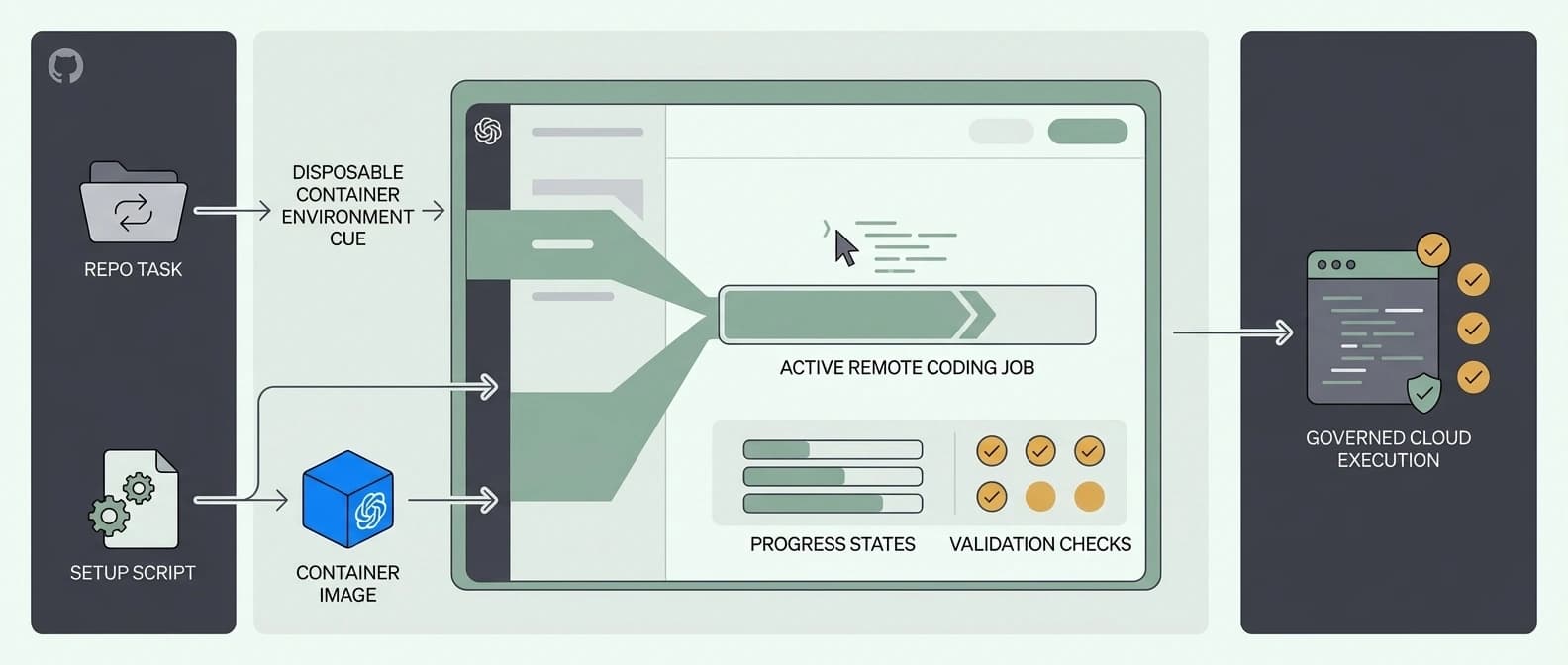

The Codex cloud docs push that framing much further. OpenAI describes cloud tasks as a way to delegate software engineering work to Codex in the cloud, choose the repository and environment, monitor progress, review changes, and turn results into pull requests. That is not a tiny UX tweak. That is the language of a remote job system.

I keep coming back to the contrast with earlier Codex stories on this site:

| Codex phase | What changed | Why it mattered |
|---|---|---|
| Plugin directory | Codex got a curated install surface for workflows | Distribution |
| Pay-as-you-go team pricing | Codex became easier to budget and pilot | Procurement |
| GPT-5.2 Codex plus current cloud docs | Codex now looks like a place to send real repo work | Execution |

That last shift is the big one. Software teams do not buy coding agents only because they answer questions. They buy them because they might take actual work off the critical path. If the agent can pick up a bounded task, run it in a prepared environment, use tools, return a work log, and hand back diffs for review, the conversation changes from “is the model smart?” to “does this slot into the way our team already works?”

That is a much better product question. It is also much harder for rivals to shrug off.

Fresh cloud environments make GPT-5.2 Codex feel less like autocomplete with a passport

The most important detail in the cloud environments docs is simple: each task runs in a fresh cloud environment. OpenAI says teams can shape that environment with setup scripts, environment variables, and container-image choices. The agent then runs terminal commands in a loop, edits files, and validates its own work.

That sounds dry. It is not.

This is the difference between asking a model for code in a chat window and giving an agent a room to work in. One is a suggestion engine. The other is a supervised remote labor surface. Same family, different utility bill.

It also explains why GPT-5.2 Codex is more important as product architecture than as model branding. A better model helps, obviously. But the thing teams will actually feel is the environment contract around it. Fresh task environments reduce local-machine weirdness. Setup scripts make repeated work less brittle. Container-backed customization makes the job less dependent on whatever one heroic engineer happened to configure two months ago and forgot to document.

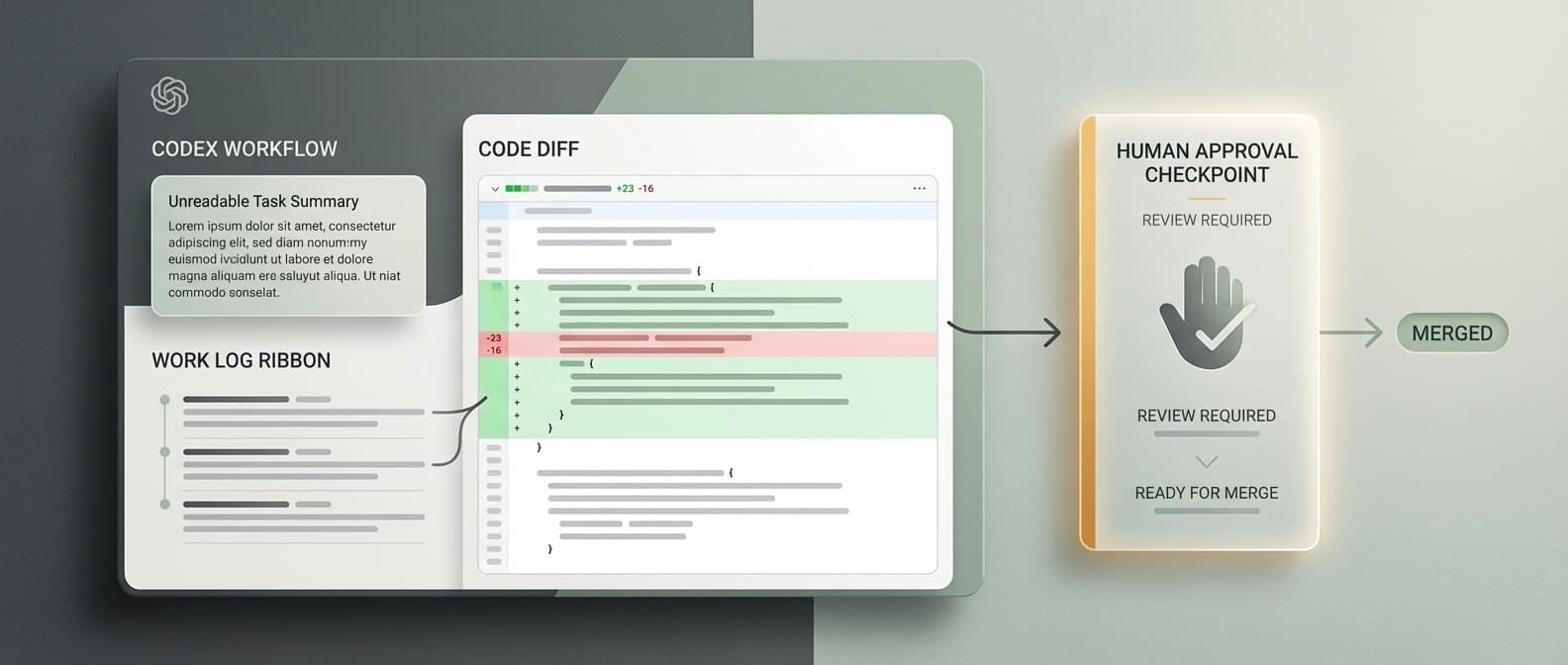

And when the agent is done, OpenAI says Codex returns a summary of its changes, a work log, and a diff you can inspect before applying. That is the real-time feedback story in practical form. Not floating sparkles. Receipts.
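To make that loop concrete, here is a minimal Python sketch of the pattern, not Codex's implementation. Everything in it is hypothetical: `run_task`, `TaskResult`, and the step functions are illustrative names, a temporary directory stands in for a fresh cloud container, and plain callables stand in for setup scripts and the agent's edits. What it shows is the contract: each task starts from a clean copy of the repo, setup runs first, and the only things that survive are a summary, a work log, and a diff.

```python
import difflib
import tempfile
from dataclasses import dataclass, field
from pathlib import Path
from typing import Callable, Dict, List


@dataclass
class TaskResult:
    summary: str
    work_log: List[str] = field(default_factory=list)
    diff: str = ""


def run_task(
    repo: Dict[str, str],
    setup_steps: List[Callable[[Path], str]],
    edit: Callable[[Path], str],
) -> TaskResult:
    """Run one task in a throwaway workspace and return reviewable artifacts.

    Hypothetical helper: a temp directory stands in for a fresh cloud
    container, and plain Python callables stand in for setup scripts.
    """
    log: List[str] = []
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        # Fresh environment: copy the repo snapshot into an empty directory.
        for name, text in repo.items():
            path = root / name
            path.parent.mkdir(parents=True, exist_ok=True)
            path.write_text(text)
        log.append(f"checked out {len(repo)} file(s)")

        # Setup steps run before the agent does any work.
        for step in setup_steps:
            log.append(step(root))

        # The agent's edit (here a plain function) mutates the workspace.
        summary = edit(root)
        log.append("edit applied")

        # Unified diff for each file in the original snapshot, for review.
        chunks: List[str] = []
        for name, before in sorted(repo.items()):
            after = (root / name).read_text()
            chunks.extend(
                difflib.unified_diff(
                    before.splitlines(keepends=True),
                    after.splitlines(keepends=True),
                    fromfile=f"a/{name}",
                    tofile=f"b/{name}",
                )
            )
    # The temp directory is deleted here; only the artifacts survive.
    return TaskResult(summary=summary, work_log=log, diff="".join(chunks))


def pretend_install_deps(root: Path) -> str:
    # Hypothetical setup step; a real one might install packages.
    return "setup: dependencies assumed installed"


def fix_typo(root: Path) -> str:
    # Hypothetical agent edit: fix a misspelled keyword in app.py.
    p = root / "app.py"
    p.write_text(p.read_text().replace("retrun", "return"))
    return "Fixed a typo in app.py"


result = run_task({"app.py": "def f():\n    retrun 1\n"}, [pretend_install_deps], fix_typo)
print(result.summary)
print(result.diff)
```

The design choice worth noticing is that the workspace is thrown away when the task ends, so a reviewer judges the diff rather than trusting whatever state the environment was left in.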

This is also where the broader OpenAI agents platform shift we have covered on this site starts to connect. The power move is not just model access. It is owning the task surface around the model, from the repo handoff to the review loop.

OpenAI Codex internet access turns into a policy knob instead of a scary yes-or-no bet

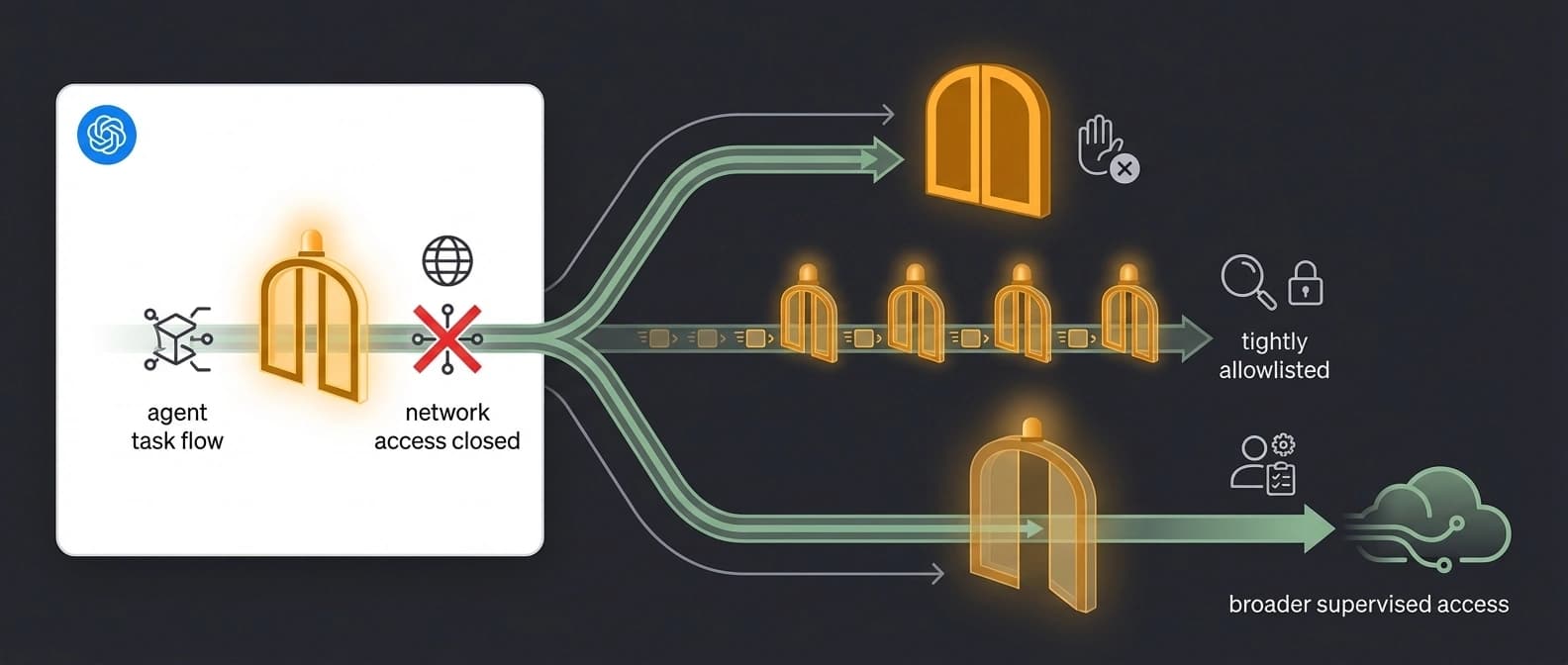

The next meaningful detail is network control. The internet access docs say Codex blocks internet access by default during the agent phase. That one line does a lot of work. It tells enterprise buyers, security teams, and nervous managers that OpenAI understands the obvious objection.

Then the docs get more interesting. Internet access is not treated as one giant on switch. OpenAI frames it as a configurable policy surface. Teams can keep tasks offline, open limited access through host allowlists or a middleware proxy, or broaden access when a task truly needs it.

That matters because real software work does sometimes need the network. Package installs exist. External docs exist. APIs exist. Dependency registries exist. Pretending serious agent work can live forever in a sealed jar is how you end up with great demos and miserable production behavior.

But giving an agent unrestricted network access by default would also be a superb way to terrify everyone in procurement before lunch.

So the actual design choice is more grown-up than the market’s usual drama. Codex can stay blocked when that is safer, then open in controlled ways when the task requires it. That is much closer to how competent operators think about infrastructure. Guardrails first. Exceptions second. Nobody gets to wear the “YOLO” hoodie to the change-review meeting.
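The guardrails-first shape can be sketched as a small default-deny policy check. This is a hypothetical Python illustration, not OpenAI's configuration format: `Mode`, `NetworkPolicy`, and `permits` are invented names, and the three modes only mirror the offline, allowlisted, and broader options the docs describe. The middleware-proxy option is left out.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import FrozenSet
from urllib.parse import urlsplit

# Hypothetical policy model; not Codex's actual configuration.


class Mode(Enum):
    OFF = "off"              # default: no outbound network during the agent phase
    ALLOWLIST = "allowlist"  # only named hosts are reachable
    ALL = "all"              # unrestricted; reserved for tasks that truly need it


@dataclass(frozen=True)
class NetworkPolicy:
    mode: Mode = Mode.OFF
    # urlsplit lowercases hostnames, so list allowed hosts in lowercase.
    allowed_hosts: FrozenSet[str] = field(default_factory=frozenset)

    def permits(self, url: str) -> bool:
        host = urlsplit(url).hostname or ""
        if self.mode is Mode.ALL:
            return True
        if self.mode is Mode.ALLOWLIST:
            return host in self.allowed_hosts
        return False  # Mode.OFF fails closed


default = NetworkPolicy()
registries = NetworkPolicy(Mode.ALLOWLIST, frozenset({"pypi.org", "files.pythonhosted.org"}))

print(default.permits("https://pypi.org/simple/requests/"))     # False
print(registries.permits("https://pypi.org/simple/requests/"))  # True
print(registries.permits("https://example.com/exfil"))          # False
```

Failing closed on the default path is the point: a task gets network access only because someone chose a policy that grants it.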

The source that really locks this point in is OpenAI’s own How OpenAI uses Codex guide. The company says Codex is used daily across multiple technical teams, and it explicitly notes that startup scripts, environment variables, and internet access significantly reduce Codex’s error rate. That is not just marketing language. It is operational advice from the people trying to make the tool work on real codebases.

How OpenAI uses Codex tells you the product strategy faster than the launch language does

The most revealing first-party document in this whole bundle is not the fresh sitemap entry. It is the internal-usage guide.

OpenAI says Codex is used daily across teams such as Security, Product Engineering, and other technical groups. The company describes using it for tasks like understanding unfamiliar code, reducing code-review backlog, debugging issues, writing tests, and handling cleanup work that would otherwise chew up focused engineer time. It also highlights Best-of-N, which lets users generate multiple responses in parallel. That is a subtle but important tell. OpenAI is not positioning Codex as one oracle answer. It is positioning Codex as managed parallel work.

That is exactly how a serious team would want a cloud coding agent to behave.
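Best-of-N is easy to picture as fan-out plus review. The sketch below is hypothetical Python, not how Codex schedules attempts: `attempt` stands in for one agent run, and ranking candidates by how many checks they pass is an assumption made for illustration. It keeps every candidate, so the choice stays with a human reviewer.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Candidate:
    index: int
    patch: str
    checks_passed: int


def best_of_n(attempt: Callable[[int], Candidate], n: int) -> List[Candidate]:
    """Run n independent attempts concurrently and rank them for review.

    `attempt` is a hypothetical stand-in for one agent run; ranking by
    passed checks is an illustrative choice, not Codex's actual behavior.
    """
    with ThreadPoolExecutor(max_workers=max(1, n)) as pool:
        candidates = list(pool.map(attempt, range(n)))
    # Keep every candidate: a reviewer picks one, nothing is auto-merged.
    return sorted(candidates, key=lambda c: (-c.checks_passed, c.index))


def fake_attempt(i: int) -> Candidate:
    # Deterministic toy results so the example is reproducible.
    return Candidate(index=i, patch=f"patch-{i}", checks_passed=(i * 7) % 4)


ranked = best_of_n(fake_attempt, 4)
for c in ranked:
    print(c.index, c.patch, c.checks_passed)
```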

When I read that guide next to the live Codex docs, the product strategy gets much clearer. Codex is being framed as a software coworker you delegate bounded jobs to, not a novelty demo you keep open in a side tab for vibes. The docs talk about environments, task setup, internet rules, progress monitoring, diffs, and pull requests because that is the layer where adoption either becomes real or dies in a pilot.

This is why GPT-5.2 Codex feels like a bigger deal than another model bump. It is arriving alongside a more explicit contract for how Codex should be used:

- send it real repo tasks

- give it a prepared environment

- choose its network policy deliberately

- let it work in the cloud

- inspect the logs and diff before merge

That is not editor autocomplete. That is queueable software work.

The older frame for coding agents was “look, it can write code.” The stronger frame is “look, it can take a ticket-shaped job, do the boring parts in a controlled workspace, and hand back something reviewable.” Those are not the same market.

Why GPT-5.2 Codex changes the coding-agent market even without benchmark theater

I am intentionally not making this a benchmark story, partly because OpenAI’s live public surfaces are giving a cleaner signal elsewhere. The strategic shift is already visible without another leaderboard screenshot.

The coding-agent market has been drifting toward repo-scale work for a while. We have seen the pressure build through install surfaces, workflow packaging, budget controls, and attempts to become the tool developers do not bother switching away from. Our piece on OpenAI’s Astral Python workflow power grab made the same broader point from a different angle: the fight is moving toward workflow ownership.

GPT-5.2 Codex sharpens that fight because it makes Codex look less like an assistant perched beside the editor and more like a remote execution layer with a polite UI. If you are a competitor still selling “strong code generation” as the whole story, that is a problem. Strong generation is table stakes now. The durable question is whether the product can carry work from instruction to environment to validation to review.

That is also why the container-backed environment detail matters more than it may seem. It is the scaffolding that lets the model stop being a one-shot output machine. Fresh environments, setup scripts, network policies, and reviewable artifacts are what turn intelligence into a workflow someone can govern.

In plain English, this is the moment Codex starts looking like a cloud software worker.

Not a magical one. Not an autonomous CTO in a browser tab. Let us all stay hydrated.

But a real one, in the very practical sense that teams can hand it bounded tasks, shape its workspace, control its access, watch its progress, and inspect what it did before anything lands.

The real question now is whether teams treat Codex like a worker or a widget

The live Codex product page currently says Codex is included across ChatGPT Plus, Pro, Business, Edu, and Enterprise plans. That suggests the surface is broad. What is still less tidy is the exact GPT-5.2-specific rollout language across OpenAI properties, which is why this piece avoids claiming much beyond the docs that are plainly live now.

Even with that caveat, the important move is already visible. OpenAI is making Codex legible as a cloud work surface.

If teams treat it like a widget, they will keep asking whether it writes prettier snippets than the last model. That conversation will get stale fast.

If teams treat it like a worker, the better questions appear immediately. Which jobs are bounded enough to delegate? What setup script makes the environment stable? When should network access stay blocked? When should it be narrowly opened? Which diffs can be trusted after review, and which still need a human to do the hard thinking?

That is a more serious workflow. It is also a more defensible product.

So yes, GPT-5.2 Codex is a model launch. But the reason it matters now is that Codex is starting to look like the place where OpenAI wants software tasks to go run. Once you see that, the rest of the recent Codex moves line up neatly: pricing made it buyable, plugins made it distributable, and this shift makes it operational.

That is the story. The decimal point is just the nametag.

Source file

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Confirms that the GPT-5.2 Codex launch URL was freshly updated on April 7, 2026, even though mirrored fetches currently surface overlapping older copy for that path.

Best first-party evidence for how OpenAI wants Codex used in practice, including daily internal usage, parallel work, and the importance of startup scripts, environment variables, and internet access.

Provides the broad current Codex product frame: Codex can read, edit, and run code, and the live product surface now spans major ChatGPT plans.

Defines cloud tasks as delegated software engineering work in the cloud, with progress monitoring, environment selection, and PR-oriented review flow.

Explains that each task runs in a fresh cloud environment, how setup scripts and container images shape that environment, and what artifact set comes back when the agent finishes.

Shows that internet access is blocked by default during the agent phase and can be opened in limited or broader ways when a task truly needs it.

About the author

Maya Halberg

Maya writes across the AI field, from research claims and benchmark narratives to tools, products, institutional decisions, and market shifts. Her reporting stays focused on what changes once hype meets deployment, procurement, workflow reality, and human skepticism.

- Apr 11, 2026

- Stockholm · Remote

Archive signal

Reporting lens: Methodology over launch theater. Signature: A result only matters after the setup becomes legible.

Article details

- Category: AI Tools
- Last updated: April 11, 2026
- Public sources: 6 linked source notes

Byline

Writes across the AI field with an eye for what survives contact with real users, real budgets, and real operating constraints.