AI coding's new bottleneck: agent orchestration

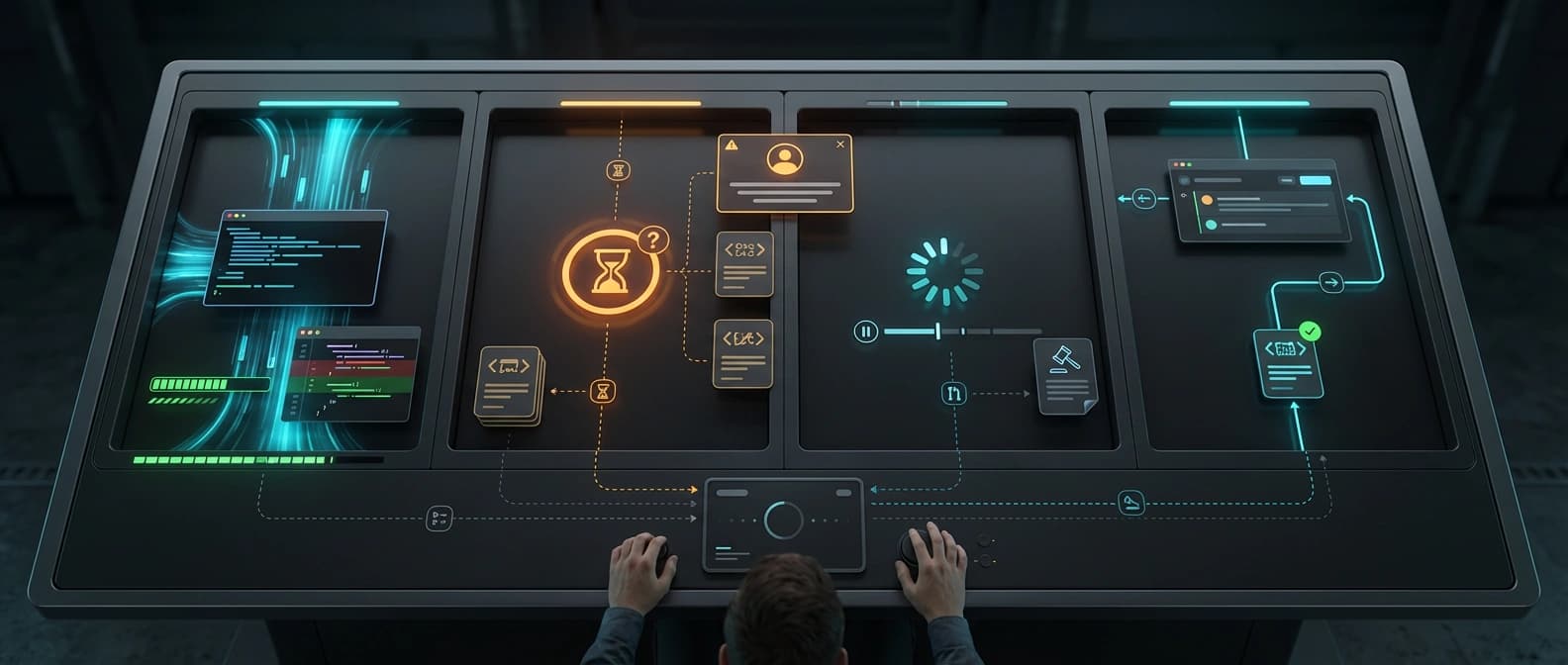

Teams can spin up AI coders easily now. The harder part is routing, supervising, and rescuing several agents before the workflow turns into terminal soup.

The model can already write another function. The harder problem is knowing which agent should touch which branch, when to interrupt it, and how to keep the whole thing from turning into terminal karaoke.

A few months ago the flashy demo was one AI agent writing code for one developer. This week the more interesting story is what people are building around five of them.

The strongest signals all point the same way. Addy Osmani is writing about the jump from “conductor” mode to “orchestrator” mode. Show HN this week has projects like Optio, Paseo, and Orca. Product Hunt snippets over the last few days surfaced Maestri, Agentation, and SuperTurtle, though I am deliberately not treating those snippets as traction proof because direct Product Hunt fetches were blocked in this reporting run. Reddit is full of developers building graph views, kanban boards, and browser dashboards just to keep multiple coding agents from dissolving into terminal soup.

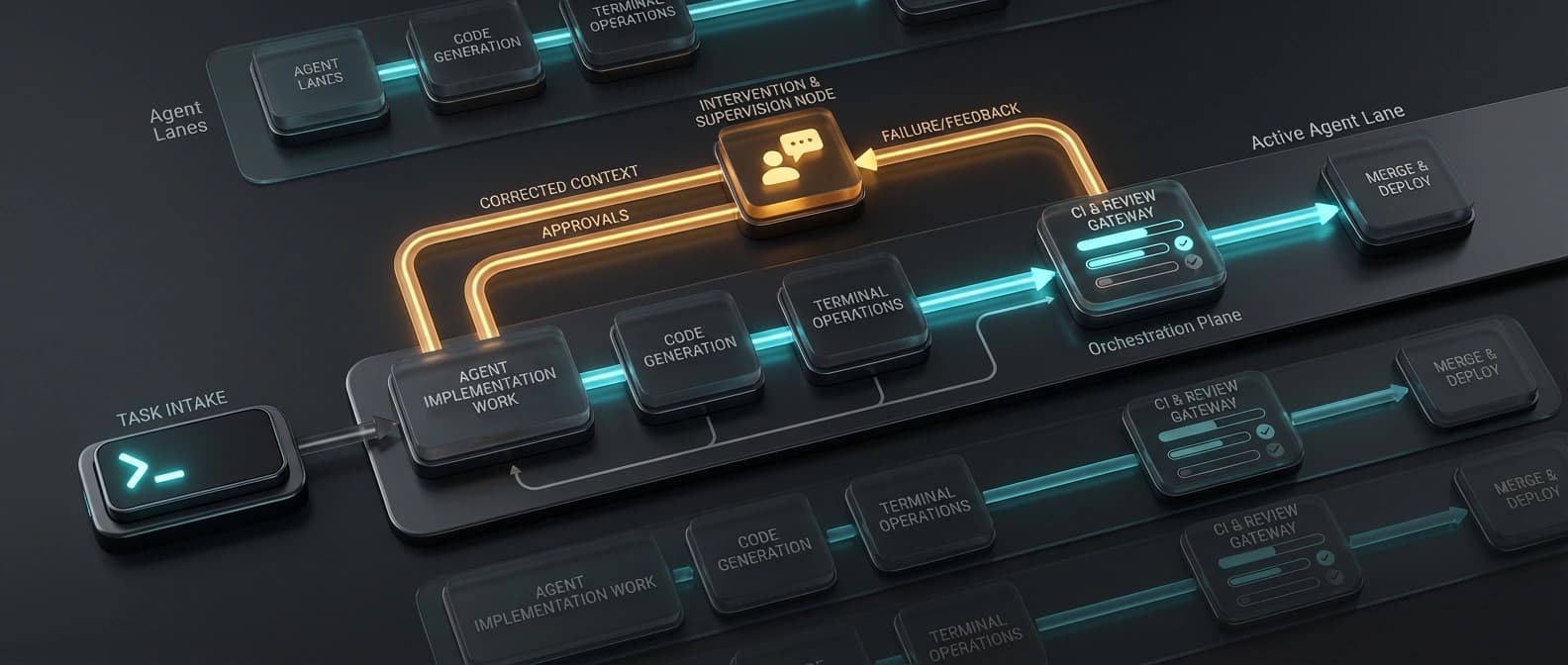

That convergence matters. It suggests the hot subcategory right now is not “another agent that writes code.” It is AI coding agent orchestration: the boring-sounding control layer that decides which agent works where, how parallel AI coding agents stay out of each other’s way, who gets pinged when something stalls, and how failures get fed back into the loop.

The glamorous part of AI coding was never going to stay glamorous for long. Once the model can already write another route handler, another test, another migration, the limiting reagent becomes supervision. Or, more bluntly, the human trying to remember which agent owns auth, which Claude Code worktree is drifting, and which tab has been politely waiting for input for ten minutes like a Victorian child.

The new tools are solving different pieces of the same headache

It is tempting to flatten all these launches into one bucket. That would miss the interesting part.

Optio is not selling the same thing as Paseo, and neither one looks much like Maestri. Optio is a closed-loop automation system. Its pitch is ticket to merged PR: take intake from GitHub or Linear, provision an isolated environment, run an agent in a worktree, watch CI, resume the agent when tests fail or review comments arrive, then merge when the loop closes. That is orchestration as workflow engine.
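To make "closed loop" less abstract, here is a minimal Python sketch of a ticket-to-merge loop written as a state machine. The state names, event names, and the `next_state` and `run` helpers are hypothetical illustrations of the pattern described above, not Optio's actual design or API.

```python
from enum import Enum


class State(Enum):
    QUEUED = "queued"
    RUNNING = "running"          # agent is working in its worktree
    WAITING_CI = "waiting_ci"    # changes pushed, CI is running
    IN_REVIEW = "in_review"      # CI is green, waiting on a reviewer
    MERGED = "merged"


# Hypothetical transition table: (current state, event) -> next state.
TRANSITIONS = {
    (State.QUEUED, "environment_ready"): State.RUNNING,
    (State.RUNNING, "pushed"): State.WAITING_CI,
    (State.WAITING_CI, "ci_failed"): State.RUNNING,         # resume the agent
    (State.WAITING_CI, "ci_passed"): State.IN_REVIEW,
    (State.IN_REVIEW, "changes_requested"): State.RUNNING,  # resume the agent
    (State.IN_REVIEW, "approved"): State.MERGED,
}


def next_state(state: State, event: str) -> State:
    """Return the next state, or stay put if the event does not apply."""
    return TRANSITIONS.get((state, event), state)


def run(events: list[str]) -> State:
    """Replay a sequence of events starting from a freshly queued ticket."""
    state = State.QUEUED
    for event in events:
        state = next_state(state, event)
    return state
```

The two arrows that point back to `RUNNING` are the whole pitch: a failed CI run or a review comment becomes an input to the agent rather than a dead end waiting for a human to notice.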

Paseo is more like orchestration as control surface. It wraps Claude Code, Codex, and OpenCode in one interface that spans desktop, mobile, web, and CLI. The pitch is not “walk away forever.” It is “run agents on your own machines, check them from your phone, switch providers, attach to a task, send a follow-up, keep moving.”

Maestri and Orca push a third angle: orchestration as a multi-agent coding workspace. Their selling point is spatial visibility. Multiple worktrees. Multiple terminals. Notifications. Notes. Git status. One canvas instead of thirty tabs and a rising sense of personal failure. At a certain point, “just open another terminal” stops sounding pragmatic and starts sounding like a dare.

Then you have adjacent tools that sit on the edges of the same problem. SuperTurtle is remote and phone-first, with parallel worker supervision and milestone updates instead of a log firehose. Agentation is not a multi-agent runtime at all. It turns visual UI feedback into structured context that coding agents can actually act on. Anvil leans into proof and adversarial verification. Different products, same big realization: once agents multiply, the workflow layer becomes the real product.

That is the common thread. Some tools are building the automation loop. Some are building the operator cockpit. Some are building the human feedback and verification rails around the agents. But they are all reacting to the same new bottleneck: coordination.

Why this is happening now

The simple answer is that single-agent coding has started to hit its ceiling.

Osmani puts it cleanly. One agent eventually runs into context overload, weak specialization, and coordination limits. The first fix was subagents. The next fix is systems that can manage teams of agents with task lists, dependencies, file boundaries, and quality gates. In other words, the answer to “my coding agent needs help” quickly became “congratulations, you are now doing lightweight engineering management.”
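A rough sketch of what "task lists, dependencies, file boundaries" can look like in code, assuming each task declares the directories it may edit and the tasks it depends on. The `Task` shape and both helper functions are invented for illustration and do not come from Osmani's writing or any of the tools named here.

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter


@dataclass
class Task:
    name: str
    paths: set[str]                                   # directories this task may edit
    depends_on: set[str] = field(default_factory=set)


def plan_waves(tasks: list[Task]) -> list[list[str]]:
    """Group tasks into waves; every task in a wave has its dependencies met."""
    sorter = TopologicalSorter({t.name: t.depends_on for t in tasks})
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)
    return waves


def boundary_conflicts(tasks: list[Task], wave: list[str]) -> list[tuple[str, str]]:
    """Return pairs of same-wave tasks whose declared directories overlap."""
    by_name = {t.name: t for t in tasks}
    return [
        (a, b)
        for i, a in enumerate(wave)
        for b in wave[i + 1:]
        if by_name[a].paths & by_name[b].paths
    ]
```

Each wave is a set of tasks that could go to agents in parallel. Any pair returned by `boundary_conflicts` is a hint to serialize those two tasks or redraw their boundaries before two agents edit the same directory.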

That sounds dramatic, but the Reddit threads make it very concrete. One builder described juggling 8 to 10 CLI sessions and having no idea which one was idle, blocked, or waiting. Another built a kanban board so agents could share ticket state and artifacts. Another built a local web UI around git worktrees so parallel Claude Code sessions could be seen in one browser panel. These are not abstract philosophical complaints. They are workflow injuries.
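The "which one is idle, blocked, or waiting" problem is small enough to sketch. The version below assumes a supervisor can see each session's last output line and how long ago it arrived. The `Session` fields, the prompt hints, and the five-minute threshold are all assumptions chosen for illustration; real agent CLIs signal these states in their own ways.

```python
from dataclasses import dataclass


@dataclass
class Session:
    name: str
    seconds_since_output: float
    last_line: str
    exited: bool = False


# Assumed heuristics, not taken from any specific agent CLI.
PROMPT_HINTS = ("?", "(y/n)", "press enter")
STALL_AFTER_SECONDS = 300


def classify(session: Session) -> str:
    """Label a session as done, waiting_for_input, stalled, or working."""
    if session.exited:
        return "done"
    last = session.last_line.strip().lower()
    if last.endswith(PROMPT_HINTS):
        return "waiting_for_input"
    if session.seconds_since_output > STALL_AFTER_SECONDS:
        return "stalled"
    return "working"


def board(sessions: list[Session]) -> dict[str, list[str]]:
    """Group session names by status, the way a kanban column would."""
    columns: dict[str, list[str]] = {}
    for s in sessions:
        columns.setdefault(classify(s), []).append(s.name)
    return columns
```

Even a crude classifier like this turns ten terminals into four columns, which is roughly what the homemade dashboards in those threads are reaching for.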

The timing also makes sense economically. The model layer keeps improving, and in some cases getting cheaper or more interchangeable, as we argued in our piece on the GLM 5.1 and Claude Code wedge. Once that happens, the competitive edge shifts upward. The question is no longer only “which model writes the nicest patch.” It is “which stack lets a developer run three or six agents without setting their attention span on fire.”

This fits the broader move we described in AI’s action-not-answers battlefront. The market is drifting from chat answers toward systems that can take action across a workflow. In the design lane, we already saw a cousin of this in Google Stitch 2.0’s handoff story: the interesting part was not the pretty mockup but the machine-readable bundle that moved work to the next step. Coding-agent orchestration is the same instinct aimed at engineering execution.

So is this real, or just wrapper theater with better screenshots?

Some of it is absolutely wrapper theater. A prettier dashboard is still just a prettier dashboard if it does not solve ownership, interruption, and feedback.

The real tests are dull. Does the tool isolate work through branches or worktrees so agents do not trample each other? Does it make task state visible without forcing you to read every line of output? Can it route failures back into the right agent with useful context? Can you interrupt, redirect, approve, or kill work without losing the thread? Does review still exist, or is the product just selling you a nicer way to be surprised?
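Two of those dull tests fit in a few lines. The sketch below builds a `git worktree add` command that gives an agent its own branch and directory, and routes a failing file back to whichever agent owns the most specific matching directory. The `agent/<name>` branch naming, the `../wt-<name>` layout, and the ownership map are hypothetical conventions, not taken from any of the tools above.

```python
from pathlib import PurePosixPath
from typing import Optional


def worktree_command(agent: str, base: str = "main") -> list[str]:
    """Build (but do not run) a `git worktree add` call for one agent."""
    return ["git", "worktree", "add", "-b", f"agent/{agent}", f"../wt-{agent}", base]


def route_failure(failing_file: str, owners: dict[str, str]) -> Optional[str]:
    """Return the agent whose owned directory most specifically contains the file."""
    path = PurePosixPath(failing_file)
    matches = [d for d in owners if path.is_relative_to(d)]
    # The longest matching directory is treated as the most specific owner.
    return owners[max(matches, key=len)] if matches else None
```

Passing the list from `worktree_command` to `subprocess.run` would create the worktree. A `None` from `route_failure` means no agent owns that file, which is a reasonable cue to ping a human instead.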

Optio clears that bar more convincingly than a generic wrapper because it tries to close the loop around CI and review. Paseo clears it when the cross-device surface actually reduces babysitting. Maestri and Orca clear it when one canvas really does beat alt-tab purgatory. Agentation and Anvil clear it when they reduce ambiguity and raise the odds that the human sees the right thing at the right time.

The weak version of this category is “we put Claude Code in a shinier box.” The strong version is “we built the missing control plane for multi-agent work.” Those are not the same business.

There is also a ceiling to how autonomous this gets. Teams still need specs, boundaries, and someone who notices when the agent is confidently renovating the wrong kitchen. Remote supervision helps. Better notifications help. Feedback loops help. None of that removes the need for oversight. It just makes oversight feel less like whack-a-mole.

Which AI coding orchestration habits will become standard?

If this really is a workflow shift, the winners will not be the loudest “AI engineer replacement” pitches. They will be the tools that make parallel work feel ordinary.

Watch for five things. First, whether worktree isolation becomes table stakes in every serious multi-agent coding workspace. Second, whether routing across providers becomes normal instead of novel. Third, whether review and CI feedback get treated as first-class agent inputs rather than annoying leftovers. Fourth, whether mobile and remote supervision become standard once people realize they do not want to sit next to the terminal all day. Fifth, whether verification layers such as review agents and evidence-first checks become part of the same stack instead of an afterthought.

My bet is that this category is real, but uneven. Some of these products will age badly because “multiple terminals, but fancier” is not enough. The broader shift still looks durable. The strongest developer signal right now is that code generation is becoming the cheap part. Coordination is becoming the expensive part.

That is not as sexy as the old demos. It is also much closer to where real software work gets stuck.

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Useful synthesis of the shift from one-agent pair programming to orchestrating multiple agents with shared task lists, quality gates, and worktree isolation.

Official positioning for an infinite-canvas control surface around coding agents, notes, roles, and on-device supervision.

Official repo describing ticket-to-PR orchestration, Kubernetes repo pods, git worktree isolation, CI watching, and review-loop auto-resume.

Discussion signal for the orchestration angle. Do not treat HN points or comments as adoption proof.

Official repo for a multi-surface interface across desktop, mobile, web, and CLI with worktree support, daemon control, and multi-provider agent routing.

Useful for the operator-surface framing and remote supervision angle. Not an adoption source.

Official repo for a cross-platform IDE built around multiple worktrees, agent terminals, notifications, and GitHub status visibility.

Useful as a current-week signal that worktree-centric control surfaces are drawing builder attention.

Official repo metadata and site files position Anvil as an evidence-first coding agent for GitHub Copilot CLI with adversarial review and rollback, which fits the oversight side of the control layer.

Official site for turning UI annotations into structured context that coding agents can act on. Useful as part of the human-to-agent feedback surface rather than pure runtime orchestration.

Official repo for phone-first remote control of local coding agents, milestone updates, and parallel worker supervision through SubTurtles.

Direct Product Hunt fetching was blocked in this reporting run. Treat this only as a discovery pointer inferred from snippets and Maestri's own site embed, never as traction proof.

Captures operator pain around juggling 8-10 CLI sessions and the demand for graph-level visibility.

Useful proof that users want task-state visibility, ticketing, and artifact sharing for parallel agent teams.

Useful user-level evidence that developers are building local multi-agent coding workspaces around worktrees and browser dashboards.

About the author

Talia Reed

Talia reports on product surfaces, developer tools, platform shifts, category shifts, and the distribution choices that determine whether AI features become durable workflows. She looks for the moment where a launch stops being a demo and becomes an ecosystem move.

- 34

- Apr 1, 2026

- New York

Archive signal

Reporting lens: Distribution is usually the story hiding inside the launch. Signature: A feature matters when it changes someone else’s roadmap.

Article details

- Category: AI Tools

- Last updated: April 11, 2026

- Public sources: 15 linked source notes
