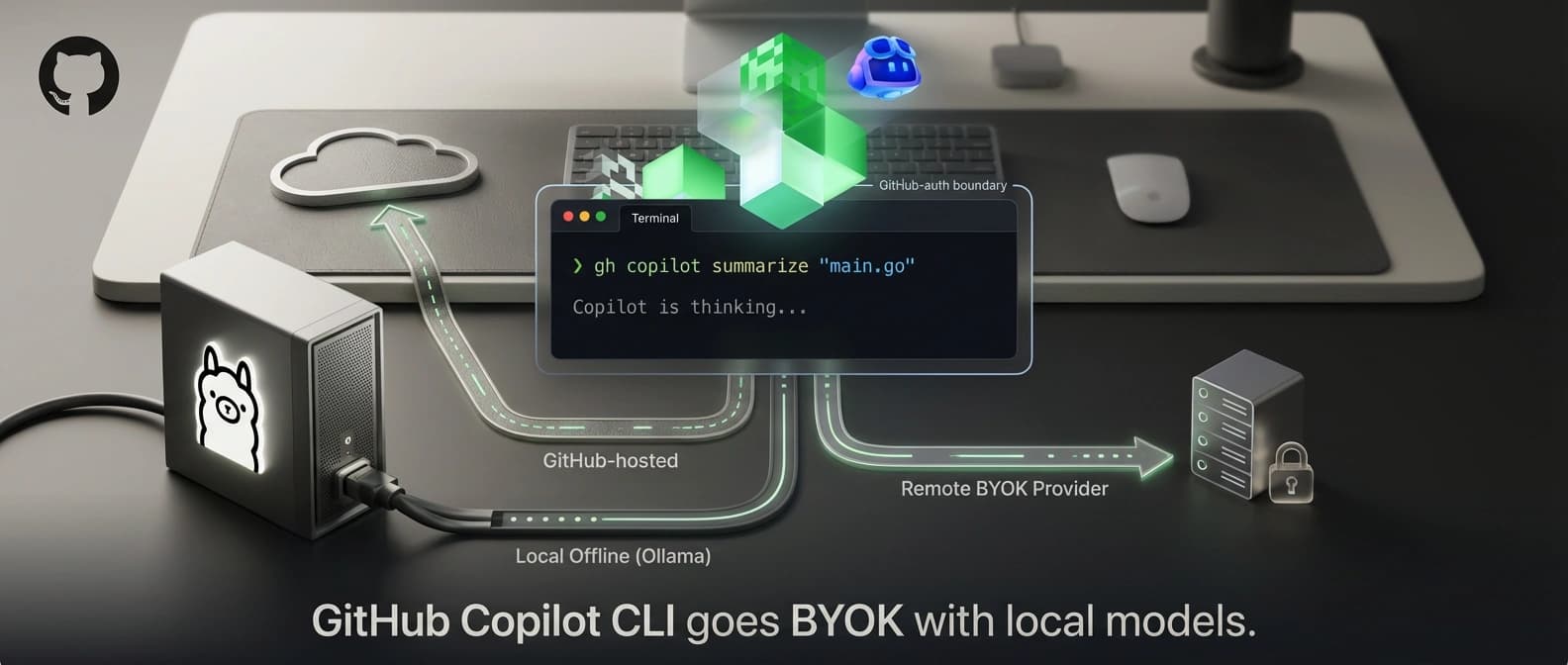

GitHub Copilot CLI goes BYOK with local models

GitHub now lets Copilot CLI use local and BYOK models through Ollama, vLLM, Azure OpenAI, Anthropic, or OpenAI, with offline mode and optional GitHub auth.

GitHub did not just add a few provider knobs. It turned Copilot CLI into a terminal agent shell that can ride on somebody else's inference stack.

When GitHub Copilot CLI went generally available in February, the sales pitch was easy to summarize: GitHub had turned Copilot into a proper terminal-native coding agent. It could plan, edit files, run commands, switch models, call tools, use built-in sub-agents, and remember work across sessions. Useful. Fast. Slightly dangerous in the way all terminal agents are slightly dangerous.

But GitHub still owned the model lane.

That is what changed on April 7. In its new changelog entry, GitHub says Copilot CLI can now use your own provider or fully local models instead of GitHub-hosted routing. The companion docs say that means OpenAI-compatible endpoints, Azure OpenAI, Anthropic, and locally running options such as Ollama, with offline mode and optional GitHub authentication layered on top. That sounds like a configuration update. It is really a product-definition update.

The useful way to read this is not, "nice, more models." The useful way to read it is that GitHub just separated Copilot CLI the agent shell from GitHub the model router.

I think that is the real story.

What changed in GitHub Copilot CLI on April 7

The April 7 release adds four things that matter more than the headline makes them sound.

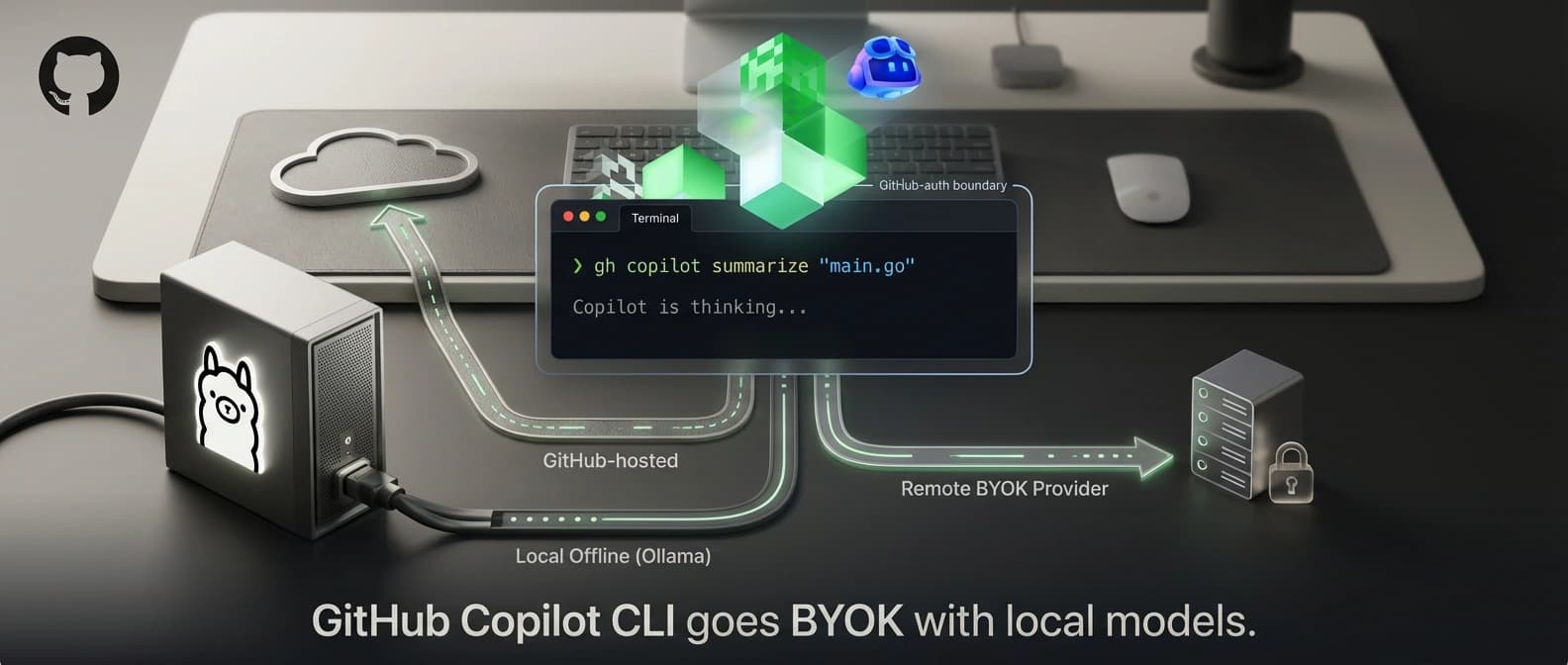

First, Copilot CLI can now point at another provider entirely. GitHub says you can configure Azure OpenAI, Anthropic, or any OpenAI-compatible endpoint. In practice that means the same terminal workflow can now sit on top of OpenAI, Azure, Ollama, vLLM, Foundry Local, or another compatible service, provided the model supports tool calling and streaming. GitHub also recommends a context window of at least 128k tokens. That recommendation is quietly important. An agent loop with a tiny context window is a bit like hiring a contractor with excellent tools and the memory of a fruit fly.

Second, fully local use is now on the table. The docs explicitly call out locally running models such as Ollama, and the changelog calls out local workflows directly. That moves Copilot CLI into the same broader trend we have been watching in pieces like "Google turns Android Studio into a local AI agent IDE" and "Microsoft Foundry's local and sovereign AI stack." The appetite is not just for better models. It is for keeping inference close to the machine, the team, or the compliance perimeter.

Third, offline mode now exists. Set COPILOT_OFFLINE=true, and GitHub says Copilot CLI stops contacting GitHub's servers, disables telemetry, and talks only to your configured provider. That is not magic. It is still a very practical shift.
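Here is a minimal sketch of what that looks like if you launch the CLI from a Python wrapper. It assumes a local Ollama server on its default OpenAI-compatible endpoint (http://localhost:11434/v1). COPILOT_OFFLINE and COPILOT_PROVIDER_BASE_URL are the variables named in GitHub's docs. The helper build_offline_env is hypothetical. Provider type, model, and credential variables are left out on purpose, because their exact names should come from GitHub's documentation rather than from a guess.

```python
import os
from typing import Dict, Mapping, Optional

# Ollama's default OpenAI-compatible endpoint on the local machine.
OLLAMA_LOCAL_URL = "http://localhost:11434/v1"


def build_offline_env(
    base_url: str = OLLAMA_LOCAL_URL,
    parent: Optional[Mapping[str, str]] = None,
) -> Dict[str, str]:
    """Return a copy of an environment set up for an offline Copilot CLI session.

    Only the two variables named in GitHub's docs are set here. Provider type,
    model, and credentials use other variables that GitHub documents; this
    sketch deliberately does not guess their names.
    """
    env = dict(os.environ if parent is None else parent)
    env["COPILOT_OFFLINE"] = "true"              # no GitHub contact, telemetry off
    env["COPILOT_PROVIDER_BASE_URL"] = base_url  # where prompts and context are sent
    return env
```

A wrapper would then pass the result to something like subprocess.run(["copilot"], env=build_offline_env()), assuming the CLI is on your PATH as copilot.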

Fourth, GitHub authentication becomes optional when you bring your own provider. That sounds small until you read the auth docs. You can now start Copilot CLI with only provider credentials and no GitHub login at all. In other words, the terminal agent no longer needs GitHub as an identity gate for the core model interaction path.

That is new.

GitHub adds two caveats that matter just as much as the launch copy. One, built-in sub-agents inherit the same provider configuration, so this is not a weird second-class mode where the main agent is local and the helpers quietly tunnel back to GitHub. Two, if your provider configuration is wrong, Copilot CLI says it will show an error and will not silently fall back to GitHub-hosted models. That last point deserves a slow clap. Surprise routing is a wonderful way to burn trust and create invoices that begin with the phrase, "well, technically."
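The no-silent-fallback rule is a fail-closed design, and it fits in a few lines. The sketch below illustrates the principle only. ProviderConfig, resolve_provider, and ProviderConfigError are hypothetical names, not Copilot CLI internals, and requiring a base URL for every provider type is a simplification that may be stricter than the real tool.

```python
from dataclasses import dataclass
from typing import Optional

# The three provider types listed in GitHub's docs.
VALID_TYPES = {"openai", "azure", "anthropic"}


class ProviderConfigError(Exception):
    """Raised when a BYOK provider configuration cannot be used."""


@dataclass
class ProviderConfig:
    provider_type: Optional[str]
    base_url: Optional[str]


def resolve_provider(cfg: Optional[ProviderConfig]) -> str:
    """Describe the model path to use, failing closed on a broken BYOK config.

    No configuration at all means the GitHub-hosted default. A configuration
    that is present but invalid raises an error; it never falls back.
    """
    if cfg is None:
        return "github-hosted"
    if cfg.provider_type not in VALID_TYPES:
        raise ProviderConfigError(f"unknown provider type: {cfg.provider_type!r}")
    if not cfg.base_url:
        raise ProviderConfigError("provider base URL is missing")
    # Only a usable BYOK configuration reaches this line.
    return f"{cfg.provider_type}@{cfg.base_url}"
```

The point is the shape of the branches: the only route to "github-hosted" is having configured nothing, so a typo in the provider type surfaces as an error instead of an unexpected bill.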

GitHub Copilot CLI local models turn Copilot into a shell, not a router

This feature makes more sense if you compare it with what GitHub was already doing a day earlier.

In GitHub's April 6 blog post about using multiple model families in Copilot CLI for a "second opinion," the company was already treating the CLI as a flexible agent surface rather than a one-model experience. The April 7 BYOK and local-model update takes that idea to its logical end. The model is now swappable at the provider level, not only at the menu level inside GitHub's own lane.

That shifts Copilot CLI closer to the position GitHub is also building with its Copilot SDK, which we covered in "GitHub Copilot SDK exports GitHub's agent runtime." In both cases, the sticky thing is not necessarily the model. It is the runtime behavior: planning, tool use, approvals, session handling, sub-agent orchestration, terminal ergonomics, and the habits developers build around that workflow.

So the business question changes.

Before April 7, using Copilot CLI meant using GitHub's agent shell and GitHub's model-routing layer together. After April 7, GitHub can keep the shell while letting the model path float underneath it. That is a more open product move than most AI assistant vendors make once they already have you in the chair.

It is also a shrewd one. Developers often like a tool's interface and workflow long before they agree on a single model vendor. Some teams want Azure because procurement already signed the papers. Some want Anthropic because that is where their coding evals look strongest. Some want OpenAI because it is the default path in half their stack. Some want Ollama or vLLM because the words "internet dependency" and "shared codebase" in the same sentence make security people start blinking in Morse code.

GitHub is now saying: fine, keep the workflow.

Which providers work with Copilot CLI BYOK and local endpoints

The docs split supported providers into three types.

- openai for OpenAI and any compatible endpoint, including Ollama, vLLM, Foundry Local, and similar OpenAI Chat Completions API surfaces

- azure for Azure OpenAI

- anthropic for Anthropic
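In other words, most local backends ride on the openai type. The mapping below sketches that, with two facts worth knowing: Ollama serves its OpenAI-compatible API at http://localhost:11434/v1 by default, and vLLM's OpenAI-compatible server listens on port 8000 by default. The backend labels and the provider_type_for helper are hypothetical, chosen for illustration.

```python
# Which of the three documented provider types each backend uses.
# Backend labels are illustrative, not names Copilot CLI recognizes.
PROVIDER_TYPE = {
    "openai": "openai",
    "ollama": "openai",         # OpenAI-compatible Chat Completions API
    "vllm": "openai",           # OpenAI-compatible server
    "foundry-local": "openai",
    "azure-openai": "azure",
    "anthropic": "anthropic",
}

# Default local endpoints, assuming default ports and no custom server config.
LOCAL_DEFAULT_BASE_URL = {
    "ollama": "http://localhost:11434/v1",
    "vllm": "http://localhost:8000/v1",
}


def provider_type_for(backend: str) -> str:
    """Return the provider type for a backend label, or raise ValueError."""
    try:
        return PROVIDER_TYPE[backend.lower()]
    except KeyError:
        raise ValueError(f"no documented provider type for {backend!r}") from None
```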

That is a tidy list, but the practical question is not only which endpoints are accepted. It is which operating mode you are actually choosing.

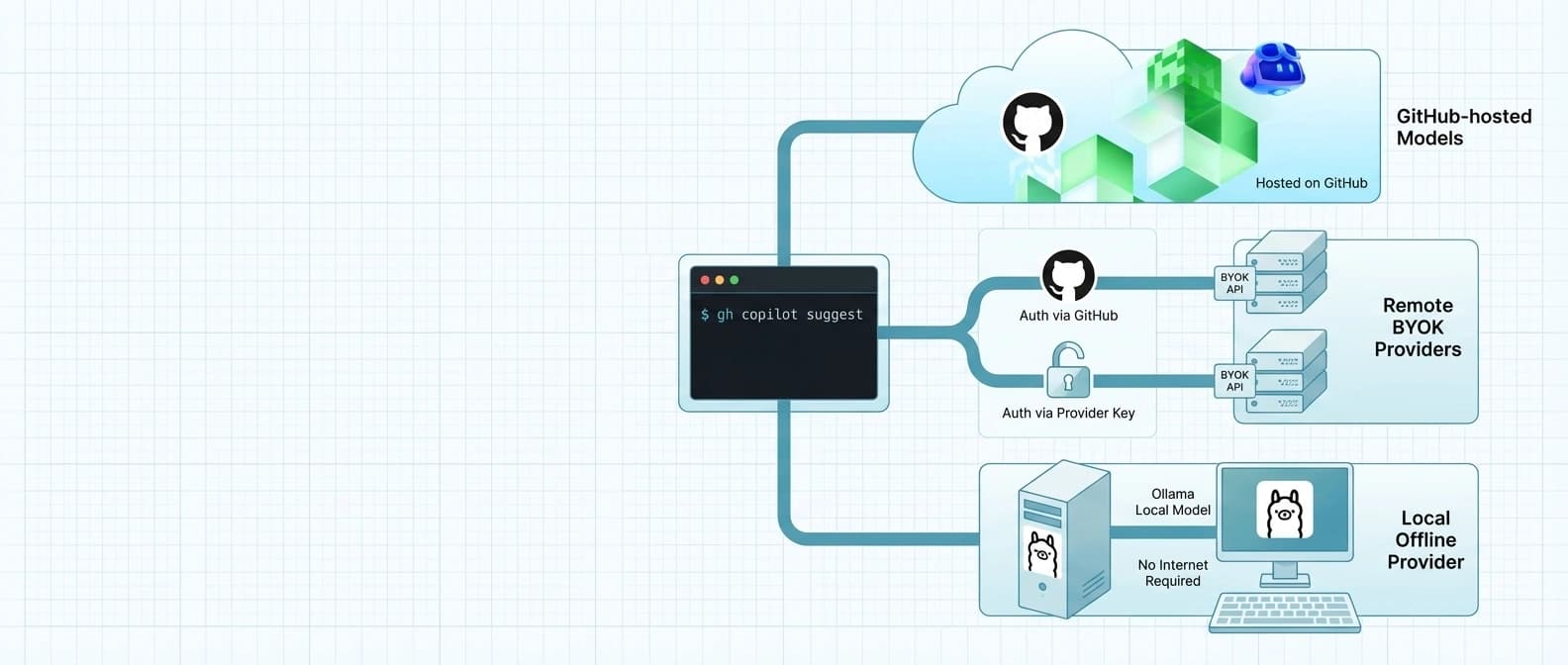

| Mode | Model path | GitHub sign-in | What you keep | What you lose | Best fit |

|---|---|---|---|---|---|

| GitHub-hosted default | GitHub-routed Copilot models | Required | Turnkey setup, GitHub-hosted features, no provider plumbing | Direct provider control, local-only routing | Developers who want the easiest default path |

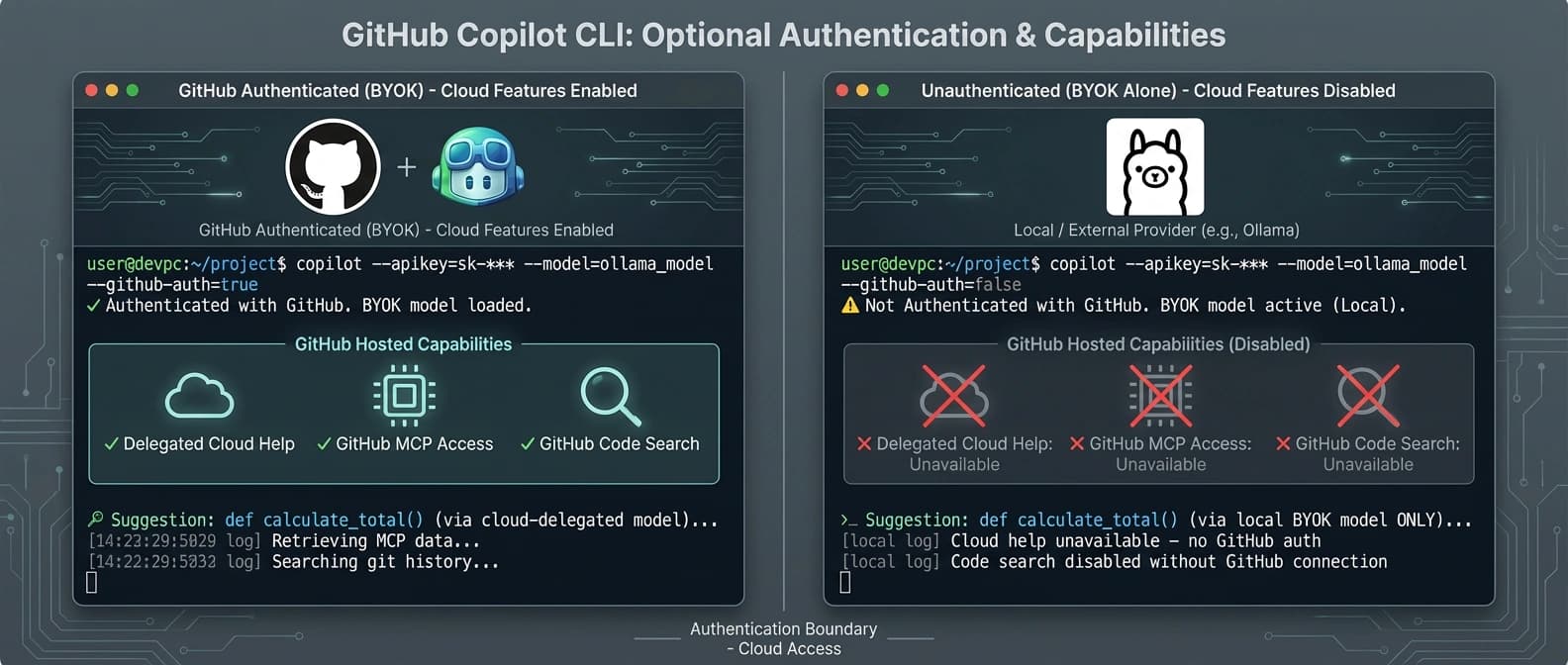

| BYOK with GitHub auth | Your provider or local endpoint | Optional, but enabled here | Copilot CLI workflow plus GitHub-hosted extras like /delegate, GitHub MCP, and Code Search | You still manage provider config and model quality | Teams that want custom models without losing GitHub's add-ons |

| BYOK without GitHub auth | Your provider or local endpoint | Not required | Direct provider control, no GitHub identity dependency for core responses | /delegate, GitHub MCP, and GitHub Code Search | Developers who want Copilot CLI mostly as a shell |

| Offline plus local or on-prem provider | Local or isolated endpoint only | Not used | No GitHub server contact, telemetry off, strongest control story | All GitHub-hosted features and any remote-provider convenience | Air-gapped, regulated, or on-prem environments |

That table is the real upgrade guide.

It also explains why GitHub's model requirements matter. Copilot CLI is not a simple prompt box. It expects tool calling and streaming because the agent loop depends on them. If you point it at a local model that cannot call tools reliably, the nice terminal shell stays nice right up until the moment it fails to do the actual job. Same workflow, worse engine.
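Before pointing the CLI at a new model, a quick preflight against GitHub's stated requirements can save an afternoon. This sketch assumes you fill in the capability fields by hand, for example from the model card; nothing here queries a provider. ModelInfo and preflight are hypothetical names. Tool calling and streaming are treated as blocking because the agent loop depends on them, while the 128k context window is only a warning because GitHub frames it as a recommendation. No flag check can catch a model that advertises tool calling but uses tools unreliably.

```python
from dataclasses import dataclass
from typing import List, Tuple

# GitHub's recommended minimum context window (a recommendation, not a hard limit).
RECOMMENDED_CONTEXT_TOKENS = 128_000


@dataclass
class ModelInfo:
    name: str
    supports_tool_calling: bool
    supports_streaming: bool
    context_window_tokens: int


def preflight(model: ModelInfo) -> Tuple[List[str], List[str]]:
    """Return (blocking problems, warnings) for using `model` as the agent's engine."""
    problems: List[str] = []
    warnings: List[str] = []
    if not model.supports_tool_calling:
        problems.append("model does not support tool calling")
    if not model.supports_streaming:
        problems.append("model does not support streaming")
    if model.context_window_tokens < RECOMMENDED_CONTEXT_TOKENS:
        warnings.append(
            f"context window of {model.context_window_tokens:,} tokens is below "
            f"the recommended {RECOMMENDED_CONTEXT_TOKENS:,}"
        )
    return problems, warnings
```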

That is why this release does not prove every local model is suddenly a drop-in replacement for GitHub's default setup. There is no cross-provider benchmark in GitHub's materials showing identical coding quality, planning quality, or tool reliability across Ollama, vLLM, Azure OpenAI, Anthropic, and OpenAI. So developers should read this as workflow portability, not as a universal quality guarantee.

Copilot CLI offline mode is useful, but air-gapped has an asterisk

GitHub's offline-mode docs are refreshingly direct about what COPILOT_OFFLINE=true does and does not do.

What it does:

- stops GitHub authentication attempts

- disables telemetry

- prevents Copilot CLI from contacting GitHub's servers

- restricts the CLI to your configured provider

What it does not do is magically make a remote provider local. GitHub spells this out in the docs: offline mode is only fully air-gapped if the provider is also local or inside the same isolated environment. If COPILOT_PROVIDER_BASE_URL points at a remote endpoint, your prompts and code context still travel over the network to that provider.
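A rough way to sanity-check that caveat is to look at where COPILOT_PROVIDER_BASE_URL actually points. The sketch below is a heuristic, not a guarantee: it only inspects the URL, treats loopback and private IP addresses as local, and treats any DNS name other than localhost as potentially remote, since resolving it would mean touching the network. A private address can still sit outside your isolated environment, so this catches obvious mistakes rather than proving isolation. Both helper names are hypothetical.

```python
import ipaddress
from typing import Mapping
from urllib.parse import urlparse


def endpoint_is_local_or_private(base_url: str) -> bool:
    """True if the URL's host is localhost, a loopback IP, or a private IP."""
    host = urlparse(base_url).hostname  # lowercased, IPv6 brackets stripped
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        return False  # a DNS name: cannot tell from the URL alone
    return addr.is_loopback or addr.is_private


def offline_looks_air_gapped(env: Mapping[str, str]) -> bool:
    """Offline mode is on and the provider endpoint looks local or private."""
    offline = env.get("COPILOT_OFFLINE", "").lower() == "true"
    url = env.get("COPILOT_PROVIDER_BASE_URL", "")
    return offline and endpoint_is_local_or_private(url)
```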

That caveat matters because "offline" is one of those words vendors love right up until somebody brings network diagrams into the room.

So the practical split looks like this.

If you run Copilot CLI against Ollama on your workstation, vLLM inside your cluster, or another on-prem provider in the same isolated environment, then the new setup opens a real path for air-gapped or sovereign workflows. If you run it against a public cloud endpoint, you still gain GitHub separation and often better cost or vendor control, but you do not get full isolation. Air-gapped means air-gapped. It does not mean "the packets are going somewhere else and we all agreed not to make eye contact."

That precision is one reason this update matters. GitHub is not only widening provider choice. It is acknowledging that local inference and controlled network boundaries are now first-class buying criteria for developer tools.

GitHub Copilot CLI unauthenticated use changes what breaks, not what works

The new auth model is simple once you strip out the ceremony.

If you use BYOK, GitHub authentication is not required for core model access. Copilot CLI can talk straight to your configured provider. But if you skip GitHub authentication, GitHub's docs say you lose three things:

- /delegate, because it relies on the Copilot cloud agent running on GitHub's servers

- the GitHub MCP server, because it needs GitHub API access

- GitHub Code Search, because that also depends on GitHub authentication

That is a clean boundary. The local or external model path handles the agent brain for terminal work, while GitHub-hosted value-add features still live behind GitHub's identity and infrastructure.
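That boundary is simple enough to write down as a function. In this sketch, available_github_features is a hypothetical helper: the three feature names come from GitHub's docs, and treating offline mode as removing them too follows from offline mode blocking GitHub authentication and all contact with GitHub's servers.

```python
from typing import Set

# Features GitHub's docs say require GitHub authentication.
GITHUB_AUTH_FEATURES = {"/delegate", "GitHub MCP server", "GitHub Code Search"}


def available_github_features(github_authenticated: bool, offline: bool) -> Set[str]:
    """GitHub-hosted extras still reachable in a BYOK session.

    Offline mode blocks GitHub sign-in and server contact, so it removes
    these features even if credentials happen to exist.
    """
    if offline or not github_authenticated:
        return set()
    return set(GITHUB_AUTH_FEATURES)
```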

This is why the sweet spot for many teams will probably be BYOK plus GitHub auth, not pure unauthenticated use. You get your preferred provider for inference, but you keep GitHub-native extras when they are helpful. It is the same logic that shows up in "GitHub Copilot cloud agent gets enterprise guardrails": GitHub increasingly looks strongest when it acts as the workflow and governance layer around model usage, not just the party that forwards tokens to a model endpoint.

There is another quiet implication here too. If you have been uneasy about GitHub's training and data-boundary posture, which we covered in "GitHub makes Copilot training opt-out for individuals," this update gives teams another lever. It does not settle every data-governance concern by itself. It does make the routing path far more negotiable.

Why GitHub giving Copilot CLI BYOK matters for developers

The immediate value is practical.

Developers who already pay for Azure OpenAI, Anthropic, or OpenAI can now use Copilot CLI without also treating GitHub's hosted routing as the mandatory center of gravity. Teams already running Ollama, vLLM, or Foundry Local can test whether they can keep a familiar agent workflow while moving inference closer to home. Security-sensitive groups finally have an official path to use the CLI in isolated setups. And because GitHub says the CLI never silently falls back to GitHub-hosted models, the control story is much cleaner than the usual "trust us, we routed it right" posture.

The strategic value is broader.

This update loosens lock-in without giving up product stickiness. GitHub can still own the terminal experience, the command surface, the GitHub-native extras, and the habits developers build around the tool. But it no longer needs to insist that all useful inference must pass through GitHub first. That is a surprisingly confident move.

It is also a sign of where the competition is going. The control point in AI developer tools keeps moving upward. Raw model access matters, but increasingly the durable value is in the agent loop, the permissions, the tool integrations, the session memory, and the governance surface. GitHub seems to understand that. So do a lot of its rivals, even when they present it with more fireworks and worse typography.

Still, restraint is important here.

This does not prove that local inference is now good enough for every serious coding workflow. This does not prove teams will save money in every case. This does not prove that skipping GitHub auth is the best default for most developers.

What it proves is narrower and more useful: you can now keep Copilot CLI's agent workflow while choosing a different model path, and in some environments you can do that without touching GitHub's servers at all.

That is a meaningful shift.

Should you run Copilot CLI with Ollama, vLLM, Azure OpenAI, or stay on GitHub's default?

If you are a solo developer who mainly wants the smoothest path, GitHub-hosted defaults are still the least fussy option. No extra provider plumbing. No wondering whether your local model will stumble over tool calls. No weekend spent debugging an endpoint that was technically OpenAI-compatible in the same way decaf is technically coffee.

If you are on a team that already standardized on Azure OpenAI, Anthropic, or OpenAI, the new BYOK route looks much more compelling. You can preserve a single terminal workflow while aligning the actual inference layer with the rest of your stack.

If you need local inference, air-gapped operation, or on-prem control, this is the first Copilot CLI update that makes the tool genuinely relevant to that conversation. For those teams, the release is not a convenience feature. It is market access.

The bottom line is simple.

GitHub Copilot CLI used to be a strong terminal agent tied tightly to GitHub's own model-routing path. As of April 7, it looks more like a model-agnostic terminal shell with optional GitHub services wrapped around it. That does not make the choice easier. It makes it yours.

And for developer tools in 2026, that is a bigger feature than one more model dropdown pretending to be destiny.

Source file

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Canonical April 7 announcement for provider choice, offline mode, optional GitHub authentication, inherited provider settings for built-in sub-agents, and the no-silent-fallback behavior.

Core setup source for provider types, supported endpoints, environment variables, model requirements, and the offline-mode caveat that only local or isolated providers are truly air-gapped.

Defines unauthenticated use and spells out which features disappear without GitHub sign-in: /delegate, GitHub MCP, and GitHub Code Search.

Baseline reference for what Copilot CLI already was before the April 7 change: a terminal-native coding agent with planning, tools, sub-agents, and repository memory.

April 6 product context showing GitHub already treating Copilot CLI as a flexible model surface, which makes the next-day BYOK and local-model shift more strategically coherent.

About the author

Maya Halberg

Maya writes across the AI field, from research claims and benchmark narratives to tools, products, institutional decisions, and market shifts. Her reporting stays focused on what changes once hype meets deployment, procurement, workflow reality, and human skepticism.

- Apr 11, 2026

- Stockholm · Remote

Archive signal

Reporting lens: Methodology over launch theater. Signature: A result only matters after the setup becomes legible.

Article details

- Category: AI Tools

- Last updated: April 11, 2026

- Public sources: 5 linked source notes

Byline

Writes across the AI field with an eye for what survives contact with real users, real budgets, and real operating constraints.