Gemini API Docs MCP turns docs into agent plumbing

Google's Gemini API Docs MCP and Gemini Agent Skills turn documentation into a first-party context layer for coding agents, with Google tightening workflow control.

Google is not just publishing docs here. It is turning docs into a managed input channel for coding agents.

Google's new Gemini API Docs MCP pitch looks small if you read it like a normal docs update. It is not a normal docs update. Google is taking current API documentation, SDK guidance, model info, and best-practice instructions and turning them into a first-party context layer for coding agents. That is a much bigger deal than "here is a nicer help page," which is roughly as exciting as being told your toaster now has opinions.

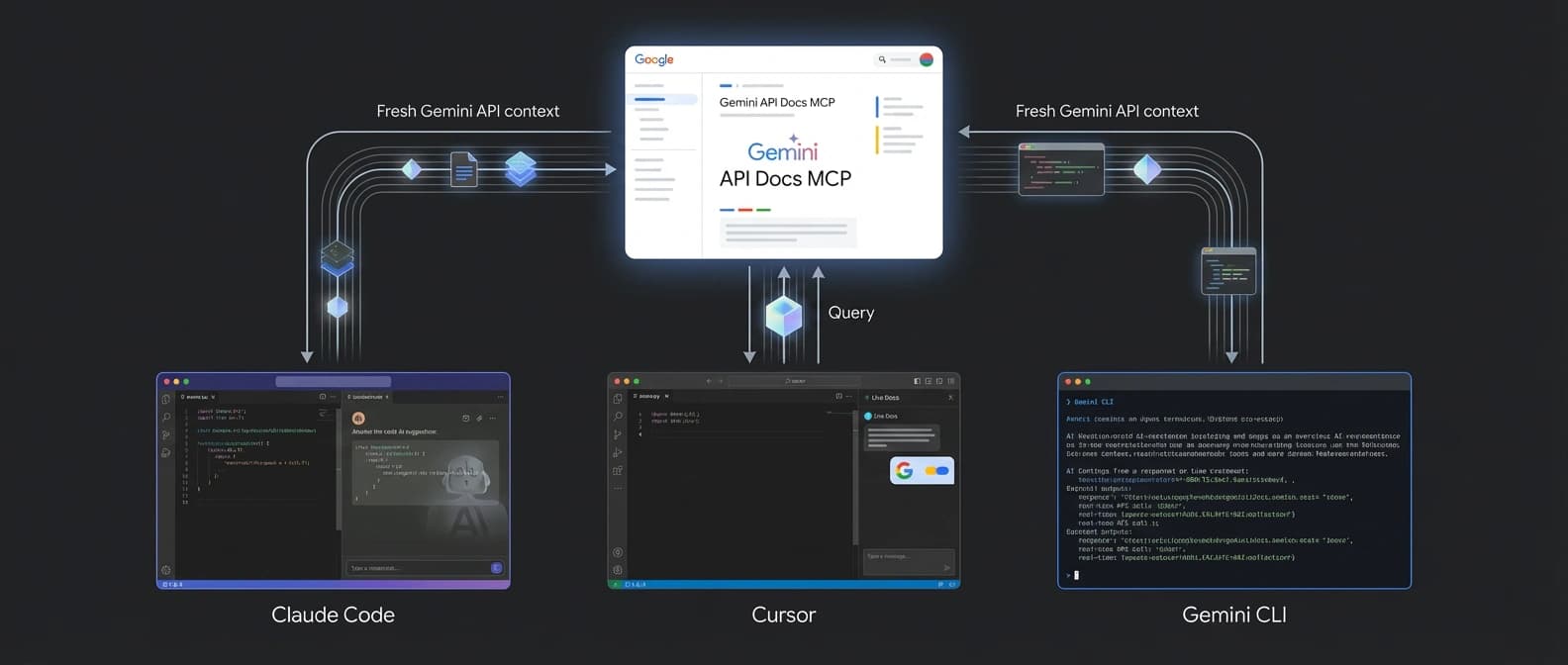

The official April 1 Google blog post says coding agents often generate outdated Gemini API code because their training data has a cutoff date. Google's fix comes in two parts. The Gemini API Docs MCP exposes current documentation through the Model Context Protocol (MCP), and Gemini Agent Skills add best-practice instructions and resource links. The companion docs page shows this workflow reaching Claude Code, Cursor, and Gemini CLI, which is the strategic tell. Google is not keeping this inside its own tooling. It wants its documentation layer sitting inside the coding tools developers already use.

Gemini API Docs MCP puts live docs inside the agent loop

That is the part I keep coming back to. The coding-agents docs do not frame MCP as a nice extra. They frame it as a way for an agent to call search_documentation and pull current API definitions and integration patterns into the job itself. The docs were last updated on April 1, 2026 UTC, which matters because freshness is the whole sales pitch here.
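For a sense of what that call looks like on the wire, here is a minimal Python sketch. MCP tool invocations are JSON-RPC 2.0 requests that use the tools/call method, and search_documentation is the tool name Google's docs give. Everything else is my assumption for illustration: the query argument name, the query text, and build_tool_call, which is a hypothetical helper rather than part of any SDK.

```python
import json
from itertools import count

# Simple incrementing request ids, as JSON-RPC expects a unique id per request.
_request_ids = count(1)


def build_tool_call(tool_name: str, arguments: dict) -> dict:
    """Build an MCP-style JSON-RPC 2.0 'tools/call' request body."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# 'query' is an assumed argument name; check the server's tool schema
# (returned by 'tools/list') for the real one.
request = build_tool_call(
    "search_documentation",
    {"query": "current way to generate text with the Python SDK"},
)

print(json.dumps(request, indent=2))
```

This only shows the request shape. A real client would also handle the initialize handshake, the transport, and the response, which the agent host normally does for you.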

A normal documentation site waits for a developer to notice they are wrong and open a tab. The Gemini API Docs MCP changes that shape. Now the agent can retrieve the up-to-date pattern while it is working. In plain English, Google is trying to stop your coding assistant from confidently writing SDK code like it just woke up from a six-month nap.

That changes the role of documentation. It stops being passive reference material and starts acting like runtime input. We have already seen Google push more of the agent loop into hosted surfaces in its Gemini API tooling update around tool combination and grounding. This move takes the same instinct and points it at the most boring thing in software, which is exactly why it matters. Boring infrastructure is where power hides.

Gemini Agent Skills fill the best-practice gap, with a support footnote

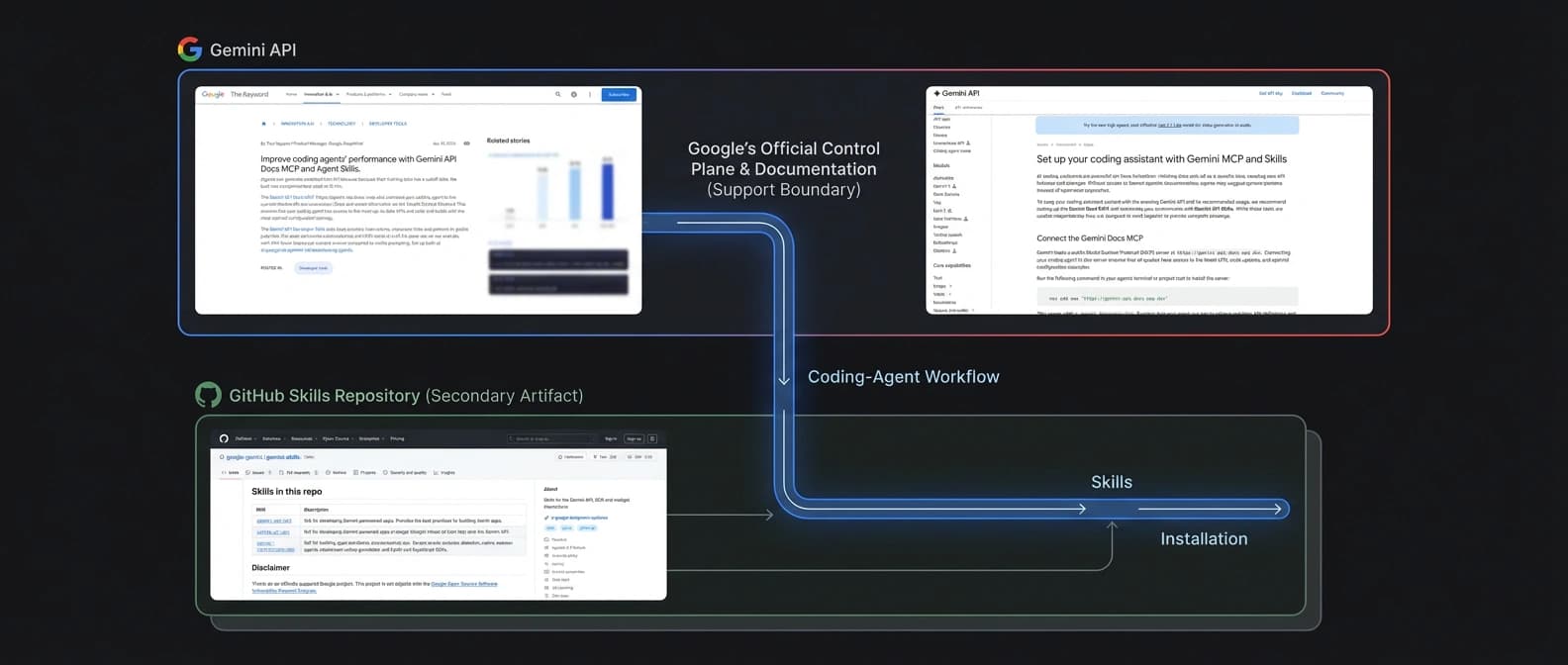

The Gemini Agent Skills side of the story is slightly messier, and that mess is informative. Google's blog and docs promote skills as the second half of the setup. The idea is simple: the MCP gives the agent fresh documentation, while the skill package repo gives it durable instructions about current SDK patterns and official entry points.

On its own, that is sensible. The interesting wrinkle is support. The public README for google-gemini/gemini-skills says the repo is not an officially supported Google product. So we have an official Google blog post and an official Google docs page actively steering people toward a workflow whose GitHub repo carries a narrower disclaimer.

I do not read that as a scandal. I read it as product strategy with legal shoes on. Google clearly wants the workflow adopted. At the same time, it is being careful about which artifact carries full support obligations. That is why the real asset here is not the repo by itself. The real asset is the vendor-controlled context channel: official docs, official best practices, and a recommended install path that plugs into outside agent surfaces.

The March 25 Google Developers Blog post makes this even clearer. Google said skills helped close the knowledge gap, but also admitted they have an update-story problem because users may leave old skill data sitting in their workspaces. It explicitly pointed toward documentation MCPs as a fresher route. In other words, skills are useful, but live docs are the sturdier control point. If skills age like milk, the MCP is the refrigerated shelf.

That fits the broader tool economy we have been watching grow around Claude Code. Once vendors start shipping more official context plumbing, the surrounding wrappers and teaching layers multiply fast.

Google's eval numbers are interesting, but they are still Google's

Google's headline claim is that using MCP and Skills together reached a 96.3 percent pass rate on its eval set, with 63 percent fewer tokens per correct answer than vanilla prompting. Those are good numbers. They are also Google's numbers, on Google's eval, for a very specific failure condition: generating outdated Gemini API code.

That does not make the results fake. It just keeps them in the right box. This is evidence that the combined workflow may help agents stay current on Gemini API usage. It is not independent proof that any coding assistant suddenly became a genius. Anyone reading it that way is grading the waiter on the soup and declaring the whole restaurant Michelin-ready.
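One reason to keep the box tight is that a metric like "tokens per correct answer" depends on how you define it. Here is a minimal sketch of one plausible definition: total tokens spent across all runs, failures included, divided by the number of passing runs. That definition is my assumption, not Google's published formula, and the run data below is invented purely to show the arithmetic.

```python
from dataclasses import dataclass


@dataclass
class EvalRun:
    passed: bool      # did the generated code pass the check?
    tokens_used: int  # tokens consumed by this attempt


def pass_rate(runs):
    """Fraction of runs that passed."""
    return sum(run.passed for run in runs) / len(runs)


def tokens_per_correct_answer(runs):
    """Total tokens spent (failed runs included) divided by passing runs.

    Assumed definition; Google's exact formula may differ.
    """
    correct = sum(run.passed for run in runs)
    if correct == 0:
        raise ValueError("no passing runs, so the metric is undefined")
    return sum(run.tokens_used for run in runs) / correct


# Made-up data for illustration only. None of these numbers come from Google.
baseline = [EvalRun(True, 1000), EvalRun(False, 1000),
            EvalRun(True, 1000), EvalRun(False, 1000)]
with_context = [EvalRun(True, 500), EvalRun(True, 500),
                EvalRun(True, 500), EvalRun(True, 500)]

base_cost = tokens_per_correct_answer(baseline)          # 4000 / 2 = 2000.0
context_cost = tokens_per_correct_answer(with_context)   # 2000 / 4 = 500.0
reduction = 1 - context_cost / base_cost                 # 0.75

print(f"pass rate: {pass_rate(baseline):.0%} -> {pass_rate(with_context):.0%}")
print(f"tokens per correct answer: {base_cost:.0f} -> {context_cost:.0f} "
      f"({reduction:.0%} fewer)")
```

Notice that under this definition the metric rewards two things at once: spending fewer tokens per attempt and failing less often. A headline reduction does not tell you which of the two did the work.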

The earlier skills post adds useful context here too. Google said the strongest gains showed up with newer reasoning-capable Gemini models, while older 2.5-series models improved less. That tells me the workflow is not magic dust. The model still has to be good enough to use the extra context well. Fresh docs help, but they do not turn a mediocre planner into a careful engineer any more than a map turns me into a competent hiker.

Why Gemini API Docs MCP matters for coding workflows now

The strategic shift is workflow control. If Google can make Gemini API Docs MCP the trusted way agents get current Gemini knowledge, then it owns more of the reasoning environment even when the user is sitting in Claude Code or Cursor. That is a subtler move than launching a new IDE. It is also probably smarter.

We have already been watching the coding-agent market move from raw model bragging toward control surfaces, install surfaces, and guardrails. That is visible in the orchestration bottleneck showing up in AI coding and in GitHub's enterprise guardrail push for Copilot agents. The fight is increasingly about who gets to shape the working context around the model, not just who sells the model call.

That is why I think this launch matters now. Google is giving developers a first-party answer to an obvious pain point: coding agents drift toward outdated code. But it is also doing something more ambitious. It is teaching developers to expect vendor documentation as a live dependency inside the agent loop. Once that habit sticks, the provider is not just publishing docs. It is operating part of the workflow.

There is a practical benefit to that. Real coding teams want fresher code suggestions and less token waste. Fair enough. Nobody enjoys paying for an agent to reinvent an API from memory like a guy explaining directions with total confidence and zero map. But the trade-off is worth noticing. The more your assistant depends on vendor-fed context, the more the vendor influences how your coding workflow stays current.

So yes, Google shipped a docs MCP and a skill package. That is the product read. The strategic read is a little sharper: Google is turning documentation into agent plumbing, then threading that plumbing into Claude Code, Cursor, Gemini CLI, and whatever other coding surfaces are willing to accept the pipe. Once docs become infrastructure, the help center stops being a library. It starts becoming part of the stack.

Public source trail

These links anchor the article to its underlying sources. They are not a substitute for judgment, but they do show where the reporting starts.

Official April 1, 2026 launch post that frames outdated training data as the problem and presents Gemini API Docs MCP plus Gemini Agent Skills as a combined fix.

Canonical setup page showing the workflow across Claude Code, Cursor, and Gemini CLI and describing the MCP retrieval tool plus available skills.

March 25, 2026 post explaining Google's earlier skill-only approach, including the update-story problem and the move toward documentation MCPs for fresher context.

Useful for the support-boundary caveat because the README says the repo is not an officially supported Google product.

About the author

Maya Halberg

Maya writes across the AI field, from research claims and benchmark narratives to tools, products, institutional decisions, and market shifts. Her reporting stays focused on what changes once hype meets deployment, procurement, workflow reality, and human skepticism.

- Apr 11, 2026

- Stockholm · Remote

Archive signal

Reporting lens: Methodology over launch theater. Signature: A result only matters after the setup becomes legible.

Article details

- Category: AI Tools

- Last updated: April 11, 2026

- Public sources: 4 linked source notes
