Google's Gemini tooling update is a control-plane play

Google's latest Gemini API release bundles tool combination, server-side state, Search, and Maps into a tighter agent stack that is easier to ship and harder to leave.

The interesting part is not that Gemini can call more tools. It is that Google wants more of the agent loop to happen inside Google's own surfaces.

I think Google's March 17 Gemini API update is more strategic than the "nice feature bundle" reading it is likely to get.

Google added tool combination, Interactions API state handling, Google Search grounding, and Google Maps grounding across the Gemini 3 family. On paper, that can look like routine platform housekeeping. In practice, it is a bid to make more of the agent loop feel native to the Gemini stack.

That matters because agent pain rarely lives in the demo. It lives in the glue: the search step, the custom function call, the state handoff, the awkward moment where one tool returns before another and your app starts to resemble a plate of dropped spaghetti.

Gemini tool combination cuts the orchestration tax

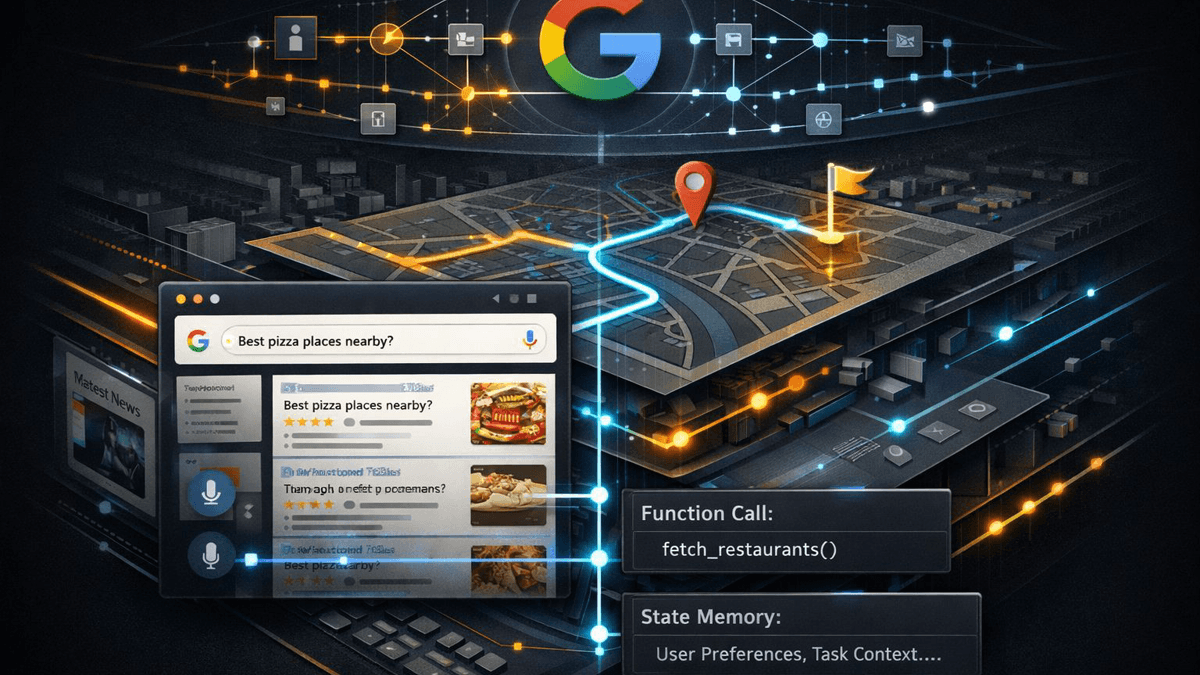

Google's tool-combination docs are revealing because they are not just about adding more tools. They are about combining built-in tools like Search and Maps with custom functions inside one generation while preserving tool context across the loop. That is the kind of detail developers care about after the keynote music stops.

The docs also introduce id fields so tool calls can be matched to the right responses in asynchronous and parallel flows, and they say those IDs and thought signatures need to travel back across turns if you want the model to keep its bearings. Again, not glamorous. But this is exactly where agent systems get brittle.
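To make the ID point concrete, here is a minimal sketch of why call IDs matter once tools run in parallel. It does not use the Gemini SDK: the record shape (`id`, `name`, `args`) and the helpers `run_tool`, `run_parallel`, and `match_responses` are hypothetical names invented for illustration, and the "tools" are just timed stubs.

```python
import asyncio

# Hypothetical tool-call records; the field names are illustrative and are
# not taken from the Gemini API schema.
TOOL_CALLS = [
    {"id": "call-1", "name": "slow_lookup", "args": {"delay": 0.02, "value": "A"}},
    {"id": "call-2", "name": "fast_lookup", "args": {"delay": 0.0, "value": "B"}},
]


async def run_tool(call):
    """Pretend to run a tool and tag the result with the originating call id."""
    await asyncio.sleep(call["args"]["delay"])
    return {"id": call["id"], "result": call["args"]["value"]}


async def run_parallel(calls):
    """Run calls concurrently and collect responses in completion order."""
    tasks = [asyncio.create_task(run_tool(c)) for c in calls]
    return [await finished for finished in asyncio.as_completed(tasks)]


def match_responses(calls, responses):
    """Pair each call with its response by id, regardless of arrival order."""
    by_id = {r["id"]: r for r in responses}
    missing = [c["id"] for c in calls if c["id"] not in by_id]
    if missing:
        raise KeyError(f"no response for call ids: {missing}")
    return [(c["name"], by_id[c["id"]]["result"]) for c in calls]


responses = asyncio.run(run_parallel(TOOL_CALLS))
matched = match_responses(TOOL_CALLS, responses)
```

Responses are collected in whatever order the stubs finish, so position alone cannot tell you which answer belongs to which call. The ID lookup is the only thing keeping the pairing honest, which is the brittleness the docs are trying to design away.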

I read this as Google trying to remove orchestration tax. If search grounding, maps grounding, and business-specific actions can run through one hosted loop, developers have to write less adapter code and fewer homemade context bridges. That is not a tiny convenience feature. That is platform gravity.

Interactions API turns memory into a hosted feature

The stronger tell is the Interactions API. Google describes both stateless history replay and a stateful path where the client can pass previous_interaction_id so the service remembers the conversation. That sounds mild. It is not.
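The difference between the two paths is easier to see in a toy model. The sketch below is an in-memory stand-in, not Google's API: `ToyInteractionService`, its `create` method, and the `history` parameter are hypothetical, and only the `previous_interaction_id` name comes from Google's description.

```python
import itertools


class ToyInteractionService:
    """In-memory stand-in for a hosted service. Not Google's API."""

    def __init__(self):
        self._store = {}               # interaction id -> full turn history
        self._ids = itertools.count(1)

    def create(self, user_input, history=None, previous_interaction_id=None):
        if previous_interaction_id is not None:
            # Stateful path: the service looks up what it already remembers.
            context = list(self._store[previous_interaction_id])
        else:
            # Stateless path: the client replays whatever history it kept.
            context = list(history or [])
        context.append(user_input)
        interaction_id = f"int-{next(self._ids)}"
        self._store[interaction_id] = context
        return {"id": interaction_id, "turns_seen": len(context)}


service = ToyInteractionService()

# Stateless: the client owns the transcript and resends it each time.
client_history = ["Find a plumber near me"]
stateless = service.create("Which one opens earliest?", history=client_history)

# Stateful: the client sends only the new input plus a pointer to the last turn.
first = service.create("Find a plumber near me")
stateful = service.create("Which one opens earliest?",
                          previous_interaction_id=first["id"])
```

Both calls end up with the same two turns of context. What changes is who holds the transcript: in the stateless path it lives in `client_history`, and in the stateful path it lives inside the service, reachable only through an ID the service issued.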

Once the provider owns more of the conversation state, tool context, and reasoning traces, the provider stops looking like a model vendor and starts looking like the place your agent runtime actually lives. I keep coming back to the same image: Google is not just selling you ingredients here. It is trying to become the kitchen.

That lines up with what I saw earlier in Google AI Studio's full-stack push and, from another angle, in OpenAI's agent platform shift. Different branding, same instinct: host more of the useful work around the model so leaving the platform feels increasingly annoying.

Maps grounding gives Google a home-field advantage

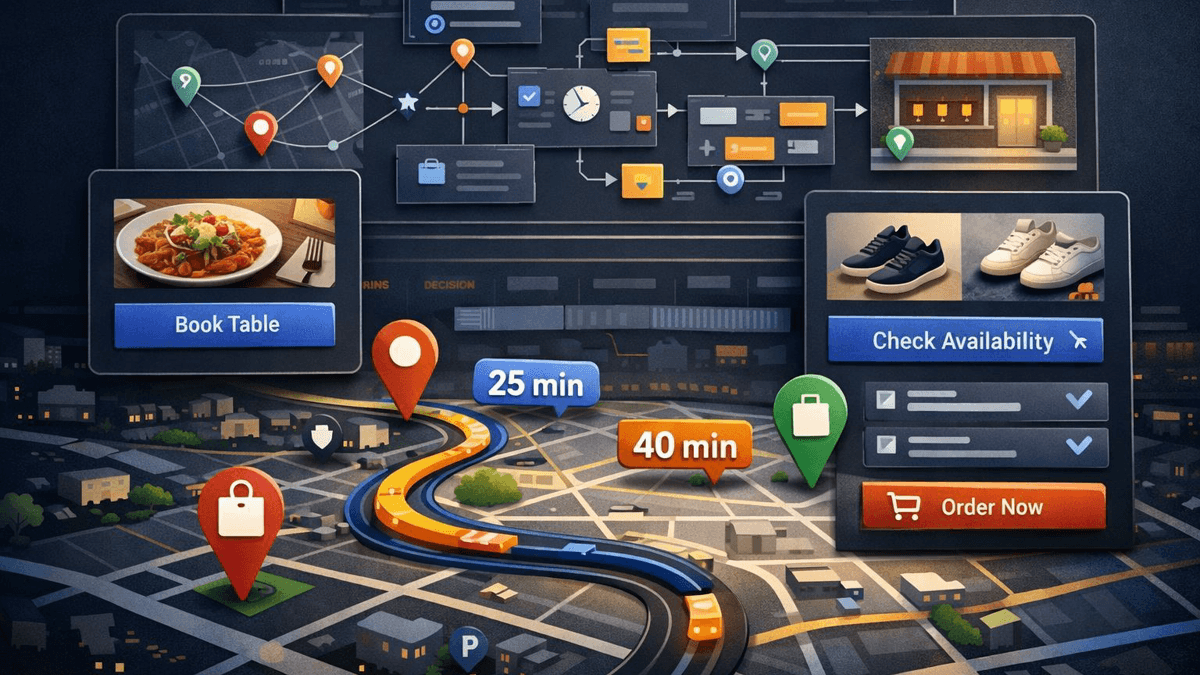

Search grounding already helped with freshness. Maps grounding adds something rivals cannot easily fake. Google's docs say Gemini can now draw on Google Maps data for local business details, geographically specific answers, routes, commute times, and other place-aware responses. That widens the set of agents that feel practical rather than merely polite.

A travel assistant can combine web freshness, place data, and internal business logic. A field-service workflow can reason about travel time before it schedules a job. A local shopping product can combine preferences, inventory, and real-world location context without treating maps as an awkward sidecar.
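The field-service case can be sketched in a few lines. Everything here is assumed for illustration: the `TRAVEL_MINUTES` table stands in for whatever a Maps-grounded answer would return, and `earliest_start` and `can_schedule` are hypothetical business-logic helpers, not part of any Google API.

```python
from datetime import datetime, timedelta

# Hypothetical travel estimates in minutes, standing in for a Maps-grounded answer.
TRAVEL_MINUTES = {("depot", "site-a"): 25, ("site-a", "site-b"): 40}


def earliest_start(prev_end, origin, destination, travel=TRAVEL_MINUTES):
    """Earliest time a technician can start at destination after leaving origin."""
    return prev_end + timedelta(minutes=travel[(origin, destination)])


def can_schedule(prev_end, origin, destination, slot_start):
    """True if travel time still lets the technician arrive by slot_start."""
    return earliest_start(prev_end, origin, destination) <= slot_start


# Illustrative times: the previous job ends at 10:00 at site-a.
job_a_end = datetime(2026, 4, 1, 10, 0)
slot_1030 = datetime(2026, 4, 1, 10, 30)
slot_1100 = datetime(2026, 4, 1, 11, 0)

fits_1030 = can_schedule(job_a_end, "site-a", "site-b", slot_1030)
fits_1100 = can_schedule(job_a_end, "site-a", "site-b", slot_1100)
```

With a 40-minute drive, the 10:30 slot is rejected and the 11:00 slot is accepted. The scheduling rule itself is trivial; the hard part has always been getting a trustworthy travel estimate into the same loop as the business logic.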

That is why I think this update matters. Search tells you what is new. Maps tells you where things are and how far away they feel. Put both inside the same tool-combination flow, then add hosted state, and suddenly Gemini looks a lot less like a chatbot API and a lot more like managed agent infrastructure.

My read on Google's API strategy

None of this means Google solved agent development. Developers still need to decide how much state they want to rent, how much portability they are willing to give up, and whether Google's hosted memory is a gift or the start of a very cozy dependency.

Still, the direction is obvious. Google is shrinking the number of off-platform decisions a developer has to make before something useful works. That is usually how platform power grows: not with one dramatic lock, but with a series of small conveniences that save you from rebuilding the same plumbing at 2 a.m.

So I would not file this under feature maintenance. I would file it under control-plane expansion. Google is trying to make orchestration feel like a native property of Gemini. Once that happens, developers are not just picking a model. They are picking where the rest of the loop lives.

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

- Launch post covering built-in and custom tool combination, context circulation, tool call IDs, Maps grounding, and Google's recommendation to use the Interactions API for these workflows.

- Documents stateful conversations through previous_interaction_id and the server-side state model Google now wants developers to use for these agent flows.

- Explains how Gemini can use built-in tools and custom functions in one request, preserve tool context, and use IDs and thought signatures across turns.

- Defines the Maps-based grounding path for location-aware responses and place-level context in Gemini 3 workflows.

- Useful supporting context for Google's broader grounding stack, including citation behavior and real-time web retrieval.

About the author

Talia Reed

Talia reports on product surfaces, developer tools, platform shifts, category shifts, and the distribution choices that determine whether AI features become durable workflows. She looks for the moment where a launch stops being a demo and becomes an ecosystem move.

- Apr 1, 2026

- New York

Archive signal

Reporting lens: Distribution is usually the story hiding inside the launch. Signature: A feature matters when it changes someone else’s roadmap.

Article details

- Category: AI Tools

- Last updated: April 11, 2026

- Public sources: 5 linked source notes

Byline

Covers product surfaces, tools, and the adoption moves that turn AI features into durable habits.