Gemini 3 is Google's agent stack, not one model

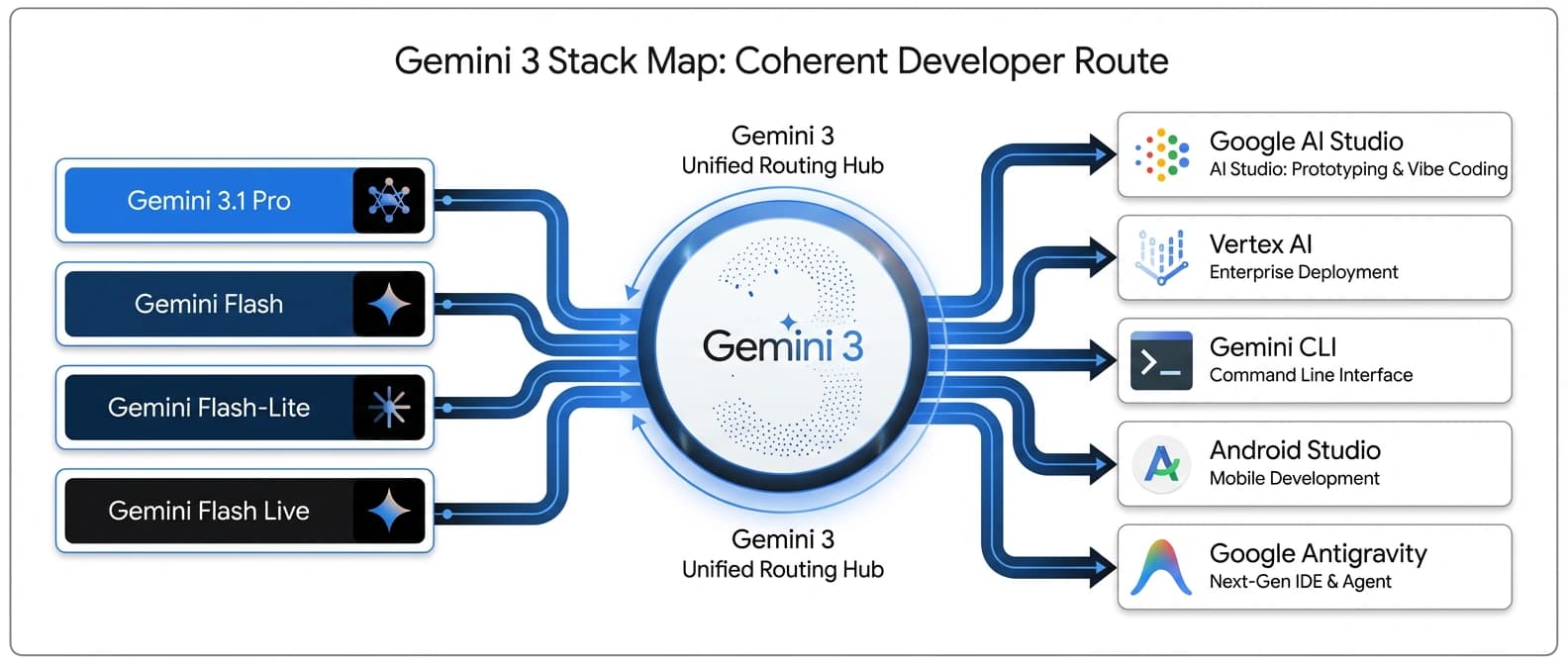

Gemini 3 matters because Google tied 3.1 Pro, Flash, Flash-Lite, Flash Live, AI Studio, and Antigravity into one app-building stack for developers.

Gemini 3 matters less as one smarter model and more as Google's attempt to make coding, live voice, and app-building feel like one route.

Google's Gemini 3 page looks like a model launch if you glance at it for five seconds. There is the smart-new-model language. There are the benchmark tables. There is the familiar promise that the system can help you learn, build, and plan almost anything. Standard issue.

But once I traced where Google actually put these pieces, the story changed. Gemini 3 stopped looking like one more frontier model release and started looking like a coordinated stack move. Google now has Gemini 3.1 Pro for the hard work, Gemini 3 Flash for fast frontier tasks, Gemini 3.1 Flash-Lite for cheap volume, Gemini 3.1 Flash Live for real-time audio, and Google Antigravity sitting in the middle as the app-building hinge.

That is the trick.

This is why the launch matters. Not because Google found a new way to say "our model is smarter now." Every lab has that slide. Gemini 3 matters because Google is finally making its models, APIs, coding surfaces, live voice layer, and app-building workflow feel like one system developers can actually route through. The company's March 2026 roundup all but says this out loud: the stack now stretches across Search Live, Gemini Live, AI Studio, Vertex AI, Android Studio, Gemini CLI, and Antigravity.

That is not a single-model story. That is a platform story wearing model-launch makeup.

Gemini 3 stops being a model story once you trace the surfaces

The cleanest way to understand Gemini 3 is to stop thinking in terms of one ladder and start thinking in terms of one shared control surface with several lanes.

Google's own family page and rollout posts now map the pieces pretty clearly:

| Layer | Google's role for it | Where it shows up | Why it matters |
|---|---|---|---|
| Gemini 3.1 Pro | Highest-reasoning tier for complex work | Gemini API, Vertex AI, Gemini app, NotebookLM, Gemini CLI, Android Studio, Antigravity | The premium brain for coding, synthesis, and harder agent tasks |
| Gemini 3 Flash | Fast frontier intelligence | DeepMind family page and Google's coding demos | The quick-response tier for interactive multimodal work |
| Gemini 3.1 Flash-Lite | Cheapest high-volume tier | AI Studio and Vertex AI | The scale lane for translation, moderation, UI generation, and bulk workloads |
| Gemini 3.1 Flash Live | Real-time audio and voice rail | Gemini Live API, Search Live, Gemini Live, enterprise CX surfaces | The live interaction layer for voice-first agents |
| Google Antigravity | Agentic development platform | AI Studio Build mode, standalone Antigravity surface, broader Google dev flow | The front door that turns model capability into app-building workflow |

The naming is a little messy because Google keeps saying "Gemini 3" while several of the key launches are branded "3.1." That part is annoying. Still, the strategic move is easy to read. Google is no longer trying to sell only a better flagship model. It is trying to sell a route.

We already saw pieces of this in our earlier take on Google AI Studio's full-stack distribution play, and again when Gemini 3.1 Flash Live started looking like Google's real-time agent rail. What changed in this wave is coordination. The DeepMind page, the March roundup, the Pro rollout, the Flash-Lite pricing post, and the AI Studio Build mode update now point in the same direction.

Names matter. Surfaces matter more.

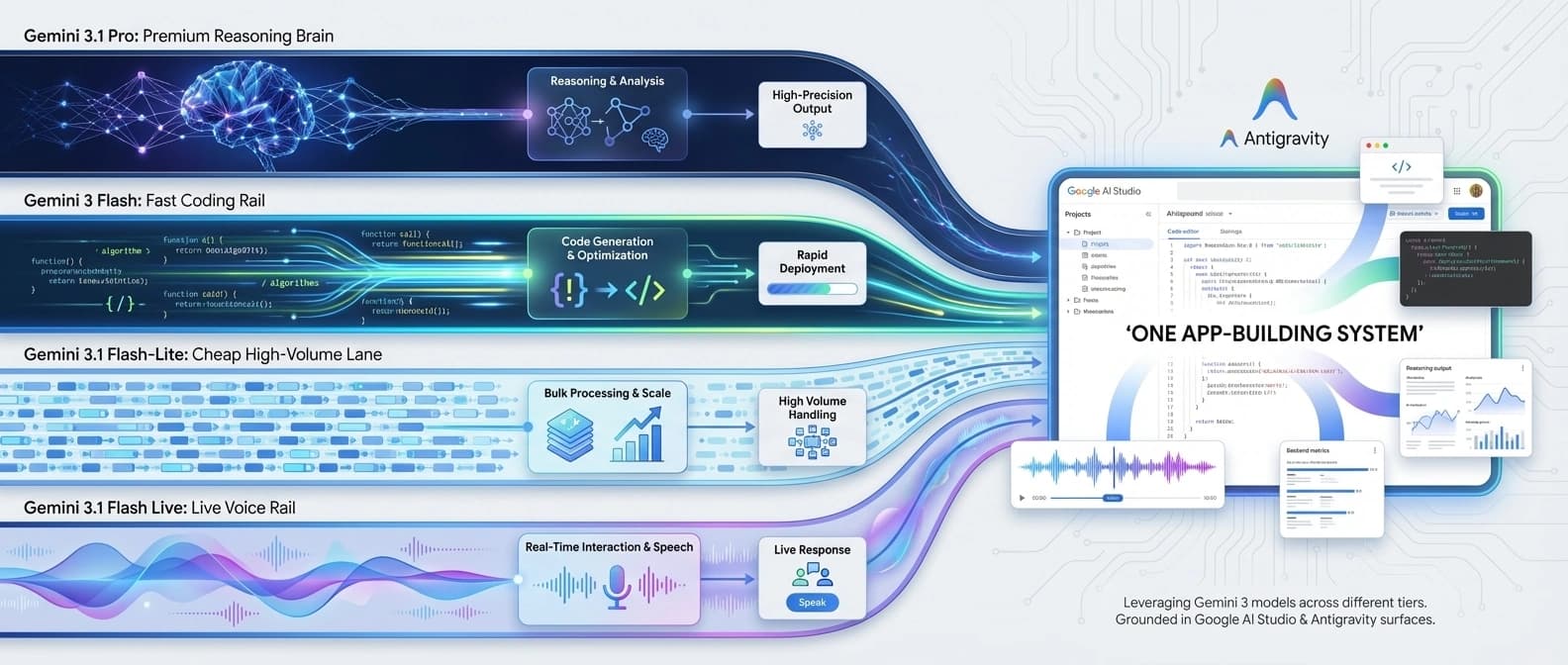

Gemini 3.1 Pro, Flash, Flash-Lite, and Flash Live each do a different job

The models are not interchangeable, and Google is being unusually direct about that.

In the Gemini 3.1 Pro launch post, Google positions Pro as the model for tasks where a simple answer is not enough. That includes complex reasoning, creative coding, system synthesis, and the sort of work where you want the model to keep several moving parts in its head at once. Google also says 3.1 Pro is rolling out in preview across the Gemini API, Vertex AI, Gemini Enterprise, Gemini CLI, Android Studio, Antigravity, the Gemini app, and NotebookLM. That rollout pattern matters more than any single benchmark number because it tells you what Google thinks the premium tier is for: shared intelligence across all of its serious surfaces.

Then there is Gemini 3 Flash, which the DeepMind page describes as best for frontier intelligence at speed and, more pointedly, as Google's best model for vibe coding and agentic coding. This is the fast lane. It is there for the moments when you want capability without dragging the whole premium stack into every turn.

Gemini 3.1 Flash-Lite is the bulk lane. Google's Flash-Lite post prices it at $0.25 per 1 million input tokens and $1.50 per 1 million output tokens, then frames it as the fastest and most cost-efficient Gemini 3-series model for high-volume developer workloads. Google also cites Artificial Analysis to claim a 2.5x faster time to first answer token and a 45% increase in output speed versus 2.5 Flash. If you are building translation, moderation, repetitive UI generation, or anything with a frightening request count, Flash-Lite is the part of the stack that keeps the finance team from developing a personality disorder.
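To make the pricing concrete, here is a minimal back-of-envelope sketch using only the two list prices quoted above. The function name and the example workload (one million requests at 800 input and 200 output tokens each) are my own illustrative assumptions, not figures from Google, and the estimate ignores anything beyond per-token list pricing.

```python
# Flash-Lite list prices quoted in Google's post (USD per 1 million tokens).
INPUT_USD_PER_MILLION = 0.25
OUTPUT_USD_PER_MILLION = 1.50


def flash_lite_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate spend from token counts at the quoted list prices only."""
    input_cost = input_tokens / 1_000_000 * INPUT_USD_PER_MILLION
    output_cost = output_tokens / 1_000_000 * OUTPUT_USD_PER_MILLION
    return input_cost + output_cost


# Hypothetical workload (an assumption for illustration):
# 1,000,000 requests, each with 800 input tokens and 200 output tokens.
requests = 1_000_000
total = flash_lite_cost_usd(requests * 800, requests * 200)
print(f"${total:,.2f}")  # $500.00
```

Note that in this made-up mix the output side costs more than the input side despite being a quarter of the tokens, because output is priced six times higher. If your workload is output-heavy, that ratio matters more than the headline input price.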

Gemini 3.1 Flash Live plays a different game. In its launch post, Google calls it the company's highest-quality audio and voice model yet and makes it available through the Gemini Live API in AI Studio, enterprise customer-experience tooling, Search Live, and Gemini Live. The company says Flash Live scored 90.8% on ComplexFuncBench Audio and 36.1% on Scale AI's Audio MultiChallenge with thinking on. More important than the numbers, though, is the placement. Google is clearly treating live audio as shared infrastructure, not as a novelty tucked away in one demo.

Put all of that together and the tiering starts to make practical sense:

| If you are doing this | Start with this layer | Why |
|---|---|---|
| Complex code generation, repo-wide reasoning, harder synthesis | Gemini 3.1 Pro | Highest-reasoning tier and broadest serious rollout |
| Fast multimodal coding or interactive UI work | Gemini 3 Flash | Google positions it as the fast frontier lane |
| Cheap large-scale request volume | Gemini 3.1 Flash-Lite | Pricing and latency story are the point |
| Real-time voice or live agent interaction | Gemini 3.1 Flash Live | Built and distributed as Google's live conversation rail |
| Prompt-to-app workflow with backend wiring | Antigravity via AI Studio | This is where the model family turns into a working dev surface |

That table is more useful than the family portrait. A lot more useful.
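If you wanted to carry the tier table above into an application, one plausible shape is a plain lookup. This is a sketch under stated assumptions: the workload keys and the `pick_tier` helper are hypothetical names I made up, and the values are the display names from the table, not API model identifiers.

```python
# Workload categories and tier names mirror the table above.
# Keys are hypothetical labels; values are display names, NOT API model IDs.
TIER_BY_WORKLOAD = {
    "complex_code_or_synthesis": "Gemini 3.1 Pro",
    "fast_multimodal_or_ui": "Gemini 3 Flash",
    "bulk_volume": "Gemini 3.1 Flash-Lite",
    "realtime_voice": "Gemini 3.1 Flash Live",
    "prompt_to_app": "Antigravity via AI Studio",
}


def pick_tier(workload: str) -> str:
    """Return the suggested starting layer for a workload category."""
    try:
        return TIER_BY_WORKLOAD[workload]
    except KeyError:
        # Fail loudly instead of silently defaulting to the priciest tier.
        raise ValueError(f"unknown workload category: {workload!r}") from None


print(pick_tier("bulk_volume"))  # Gemini 3.1 Flash-Lite
```

Raising on an unknown category is a deliberate choice in this sketch: a silent fallback to the premium tier is exactly how a routing layer ends up quietly inflating the bill.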

Google Antigravity is the hinge, even if the name sounds like a stunt

I am sorry to report that Antigravity is an important product, even if the name sounds like it was selected by a committee trying to impress a skateboard shop.

In the AI Studio Build mode launch post, Google says the upgraded experience is powered by the new Google Antigravity coding agent. This is where the stack stops being abstract.

AI Studio can now turn prompts into fuller web apps, wire in Firebase for databases and authentication, install external libraries, support React, Angular, and Next.js, store API keys in Secrets Manager, and preserve work across sessions. Google says the new experience has already been used internally to build hundreds of thousands of apps over the last few months, and the March roundup says users will be able to take apps from AI Studio to Google Antigravity with a single button click.

That is the hinge.

Models alone do not create stack coherence. Workflow does. If you have read our piece on Google's Gemini API tool-combination and grounding push, the pattern should feel familiar: Google keeps trying to reduce the number of times a developer has to leave a Google-owned surface to get useful work done. Our look at Gemini API docs becoming an MCP context layer made the same point from another angle. The company is not only improving model quality. It is trying to make surrounding context, retrieval, tooling, and app wiring happen inside the same orbit.

Antigravity matters because it turns that strategic instinct into an obvious developer entry point. It is the place where a user can go from prompt, to code edits, to backend integration, to secrets handling, to app continuity, without feeling like they have fallen through three unrelated product traps.

And yes, there is still demo theater here. Google loves a polished coding vignette. A model that builds a 3D universe or a retro game from one prompt is great marketing because it compresses a big platform story into a pretty clip. But demos without app plumbing are just stage fog. Antigravity is the part that says, "Fine, here is the database, here is the auth, here is the project memory, now try to leave."

That is much more serious.

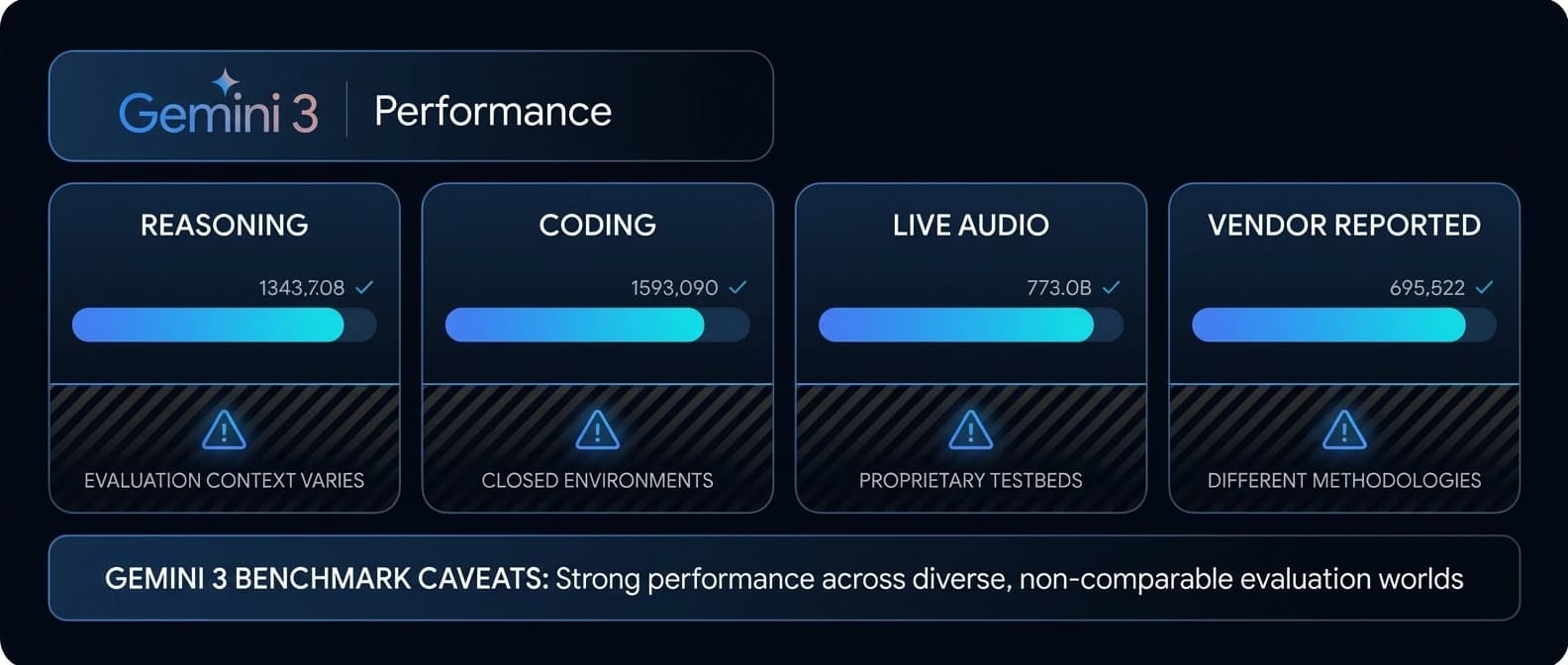

Gemini 3 benchmarks look strong, but the footnotes matter

Google wants the benchmark story to carry weight, and to be fair, the numbers are not weak.

On the DeepMind family page, Gemini 3.1 Pro posts a verified 77.1% on ARC-AGI-2, 94.3% on GPQA Diamond, 68.5% on Terminal-Bench 2.0, 80.6% on SWE-Bench Verified, 85.9% on BrowseComp, and 92.6% on MMMLU. The 3.1 Pro post also highlights that ARC-AGI-2 score as more than double the reasoning performance of 3 Pro. Flash Live and Flash-Lite each get their own flattering numbers too.

The issue is not that the benchmarks are fake. It is that they are mixed.

Some are no-tools reasoning tests. Some include search and code. Some are single-attempt coding tasks. Some are live audio benchmarks. Some competitor numbers on the DeepMind page sit beside "other best self-reported harness" notes. That means you are not looking at one clean, universally comparable league table. You are looking at a vendor-assembled dossier built from several testing worlds.

A smaller table makes the problem clearer:

| Benchmark claim | What it tells you | Why to stay cautious |
|---|---|---|
| ARC-AGI-2: 77.1% for Gemini 3.1 Pro | Google thinks its reasoning tier took a real jump | ARC-AGI-2 is interesting, but it is still a narrow proxy for product usefulness |
| Terminal-Bench 2.0: 68.5% for Gemini 3.1 Pro | Google wants a stronger agentic coding story | Terminal harnesses vary, and the same page includes self-reported competitor context |
| ComplexFuncBench Audio: 90.8% for Flash Live | Flash Live is meant for voice agents that must execute tasks, not just chat nicely | This is still a Google-selected benchmark in a product launch post |
| Flash-Lite 2.5x faster TTFAT vs. 2.5 Flash | Google is serious about a cheaper, faster scale tier | Artificial Analysis comparisons are useful, but real production stacks add other variables |

So yes, Gemini 3's benchmark story is credible enough to say Google is very much in the fight. No, it is not clean enough to settle which tier you should use. For that question, I still trust workflow and economics more than a page full of trophy numbers.
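The Flash-Lite speed claims are a good example of why headline ratios need workload context. The sketch below is a simplified model, not anything Google published: it assumes "2.5x faster time to first answer token" means that wait is divided by 2.5, that "45% increase in output speed" means tokens per second multiplied by 1.45, and that total response time is just first-token wait plus generation time. The baseline values (1.0 second, 100 tokens per second) are invented purely for illustration.

```python
TTFAT_SPEEDUP = 2.5       # quoted: 2.5x faster time to first answer token
OUTPUT_SPEED_GAIN = 1.45  # quoted: 45% increase in output speed


def end_to_end_speedup(
    base_ttfat_s: float, base_tokens_per_s: float, output_tokens: int
) -> float:
    """Ratio of baseline to improved total response time (simplified model)."""
    base_total = base_ttfat_s + output_tokens / base_tokens_per_s
    new_total = base_ttfat_s / TTFAT_SPEEDUP + output_tokens / (
        base_tokens_per_s * OUTPUT_SPEED_GAIN
    )
    return base_total / new_total


# Hypothetical baseline: 1.0 s to first answer token, 100 tokens per second.
for n in (50, 500, 5000):
    print(n, round(end_to_end_speedup(1.0, 100.0, n), 2))
```

Under these assumptions the blended speedup sits near 2.5x only for very short outputs and drifts toward 1.45x as responses get longer. The realized gain depends on the shape of your traffic, which is one more reason to weigh workload and economics over any single headline number.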

That is also why our earlier breakdown of Google's Flex and Priority inference tiers matters here. Once you have several model classes doing different jobs, the next problem is not "who won the benchmark." It is "which requests deserve the expensive lane, which ones can wait, and how much orchestration pain are you willing to tolerate?"

Which Gemini 3 tier matters most for developers right now

If you are a developer trying to decide whether this launch matters today, I think the answer depends less on raw intelligence and more on where you sit in the stack.

If you want a serious coding and app-building brain, 3.1 Pro is the part to watch. Google is clearly routing that model into the premium places where difficult work happens: CLI, Android Studio, Antigravity, Vertex, the Gemini API, and high-tier consumer plans. That distribution pattern is the tell.

If you want cheap scale, Flash-Lite is probably the most immediately practical layer in the family. It has pricing, a clear role, and no philosophical confusion. Bulk work is bulk work.

If you want real-time voice agents, Flash Live might be the most strategically important piece in the stack because it connects developer tooling to consumer distribution. Search Live and Gemini Live put the same live-audio rail in front of a giant audience. That kind of shared substrate is hard to dismiss as demo sparkle.

And if you want to understand where Google thinks the whole story comes together, watch Antigravity and AI Studio Build mode. That is the place where the company is trying to turn a family of models into a development habit.

I would be a little careful, though. Antigravity still feels in motion. A recent Google AI Developers Forum thread complains that quotas on Pro and Ultra plans were lowered enough to make all-day work harder. That is anecdotal, not a product verdict, but it is a useful reminder that access rules, rate expectations, and practical limits around these surfaces can shift while the marketing pages still look very serene.

Serene pages are easy. Reliable daily tooling is harder.

Gemini 3 looks useful now because the stack is real, not because the demos are pretty

The bottom line for me is simple: Gemini 3 is useful right now where Google has already wired the stack into real surfaces. That includes AI Studio, Gemini API, Vertex AI, Android Studio, Gemini CLI, Search Live, Gemini Live, and the Antigravity path out of Build mode. Those are product rails, not just keynote vapor.

There is still theater in the presentation. Of course there is. Google is a large company and cannot resist showing a model build a small universe before explaining the billing lane. But under the demo gloss, there is a real structural move here.

Google has a premium reasoning model. It has a fast tier. It has a cheap scale tier. It has a live audio rail. It has a coding and app-building front door. And it is placing all of them across the same developer and consumer surfaces at the same time.

That is why I would read Gemini 3 as a stack-coherence launch, not a single-model launch. Antigravity is the hinge. Flash Live is the rail. Flash-Lite is the bulk lane. 3.1 Pro is the brain. The rest of the family exists to make those pieces feel less like separate products and more like one route through Google's agent stack.

Whether developers accept that bundle is still open. But this is no longer just polished demo theater. It is Google trying to make leaving its agent workflow feel inconvenient, and that is a much more durable ambition than winning one benchmark slide.

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Canonical family page tying Gemini 3.1 Pro, Flash, Flash-Lite, Flash Live, Google AI Studio, Gemini API, Vertex AI Studio, and Antigravity into one product story, plus the benchmark table used in launch framing.

Best connective source for the rollout across Search Live, Gemini Live, Flash-Lite, Flash Live, AI Studio Build mode, and the broader March push.

Explains Antigravity's role inside AI Studio Build mode, Firebase integration, secrets storage, project memory, and framework support.

Primary source for Flash Live positioning, availability across Live API, Search Live, Gemini Live, and its benchmark claims.

Primary source for Flash-Lite pricing, speed framing, and high-volume use cases.

Primary source for 3.1 Pro rollout surfaces, ARC-AGI-2 score claim, and the preview status across developer and consumer products.

Useful only as a narrow signal that quotas and all-day usability expectations around Google surfaces may still be moving; treat forum feedback as anecdotal.

About the author

Idris Vale

Idris writes about the institutional machinery around AI, but the lens is broader than policy alone: procurement frameworks, public-sector buying rules, platform leverage, compliance burdens, workflow risk, and the market structure hiding beneath product or infrastructure headlines. The through-line is practical power, not abstract theater.

- Apr 10, 2026

- Brussels · London corridor

Archive signal

Reporting lens: Follow the buying process, not just the bill text. Signature: Policy turns real when someone has to buy the system.

Article details

- Category: AI Tools
- Last updated: April 11, 2026
- Public sources: 7 linked source notes

Byline

Tracks the institutions, incentives, and market structure that quietly decide which AI systems get deployed and why.