Anthropic’s Australia MOU is a sovereign AI wedge

Anthropic’s Australia MOU is non-binding but strategic: it links AI safety cooperation, Economic Index data, research credits, and future infrastructure alignment.

A non-binding MOU can still be a real strategic wedge when it lines up safety, workforce data, and future compute access in the same document.

If you only read the headlines, Anthropic signed a nice-sounding Australian safety pact, everybody shook hands in Canberra, and nobody had to pretend a memo is a moon landing.

The MOU text, signed on 1 April, is explicitly non-binding. It also says in plain language that it gives Anthropic no preferential treatment in Commonwealth procurement, grants, or regulation. So no, this is not Australia quietly tossing Claude the keys to the kingdom with a koala sticker on top.

But dismissing it as ceremony would miss the real move. The Anthropic Australia MOU ties four things together in one formal government relationship: cooperation with Australia’s AI Safety Institute, Economic Index data sharing on how AI is changing work, support for local research and skills, and alignment with the government’s brand-new expectations for data centers and AI infrastructure developers. That combination is why I keep coming back to the word wedge. Small in legal force, strategically sharp.

What the Anthropic Australia MOU actually commits to

Start with the text rather than the vibes. Section 2 of the MOU says Anthropic and Australia will pursue technical exchanges and collaboration with safety and security institutions, including the AI Safety Institute, to build a shared view of frontier-model capabilities, opportunities, and risks.

The same document also says Anthropic will explore expanding its presence in Australia and work with Australian research and academic institutions, including on AI safety research. Anthropic’s own announcement goes further on how it sees that relationship: the company says it will share findings on emerging model risks, participate in joint evaluations, and share Economic Index data so the government can track AI adoption and worker effects across sectors including natural resources, agriculture, healthcare, and financial services.

That matters because safety cooperation is the polite door. The bigger room behind it is industrial policy. Once a frontier lab is helping a government understand model risk, labour impact, local research capacity, and future infrastructure needs at the same time, it is no longer just doing diplomatic garnish. It is positioning itself as a trusted, early participant in how that country sets the terms for any eventual AI buildout.

There is still a limit here, and it matters. The MOU says separate arrangements would be needed for specific collaborations. In other words, the document opens lanes. It does not pave the motorway. Anyone claiming otherwise is trying to invoice you for concrete that has not been poured.

Why Australia matters in sovereign AI, not just AI safety

Australia is useful to Anthropic for reasons that go well beyond good-government optics. The ministerial release says this is the first arrangement signed under the country’s National AI Plan. That gives Anthropic something more valuable than a ribbon-cutting photo: early formal alignment with the policy framework Australia wants to use to manage AI investment.

Australia sits in an awkwardly important place in the next wave of compute politics. It is a U.S.-aligned democracy in the Indo-Pacific, it has serious research institutions, it cares about national security and data sovereignty, and it has the energy questions that every serious frontier AI footprint now drags into the room. Safety and compute are no longer separate conversations held by different people in nicer blazers. They are increasingly the same meeting.

That is why this story sits naturally beside our coverage of OpenAI’s $122 billion infrastructure flywheel and Mistral’s Paris data-center debt push. Frontier labs are learning the same lesson in different accents: if you want long-term influence, you do not just need models. You need policy clearance, energy credibility, local relationships, and a path into whatever “sovereign AI” means in that market once the PowerPoint is forced to meet a substation.

The data-center signal is the whole tell

The clause that makes this MOU more than a safety exchange sits in Section 3. Anthropic says it recognizes the importance of expanding Australia’s energy supply and transmission, especially firmed renewables, and welcomes engagement with the government on infrastructure planning. Then Section 3.3 adds the sharper point: Anthropic supports the government’s Expectations of data centres and AI infrastructure developers and will align its Australian operations with them.

Those expectations are not law. They are policy guidance, but not decorative policy guidance. The government says proposals that align most closely with them will be prioritised in Commonwealth regulatory assessments, while energy-intensive proposals that do not align will not be prioritised. The expectations also tell operators to back new clean energy and storage, cover transmission costs, use water efficiently, invest in Australian skills, and provide access to compute for local researchers and startups on favorable terms.

Policy people love the word expectations because it sounds gentler than conditions while still carrying the faint aroma of “do not test me.” In practice, the message is straightforward: if a frontier AI company wants deeper Australian roots later, it should start by sounding cooperative now.

That is the infrastructure tell. Anthropic is not committing to build a data center tomorrow, and the company’s announcement is careful on that point. It says Anthropic is exploring investments in data-center infrastructure and energy, aligned with the new expectations. That is a signal, not a shovel, and definitely not evidence of a build already under way. Still, it is a meaningful signal because it places Anthropic inside the policy grammar Australia is using to filter future compute investment.

If you have been watching Europe’s procurement fights or Microsoft’s push toward local sovereign AI stacks, the pattern is familiar. Safety language, data residency, workforce benefits, and infrastructure access are blending into one bargain. The companies that understand that early get a cleaner runway.

The research money matters, but it is not the legal force of the MOU

Anthropic also announced AUD$3 million in Claude API credits for Australian institutions including ANU, Murdoch Children’s Research Institute, the Garvan Institute of Medical Research, and Curtin University. That matters because it gives the broader pitch some actual flesh. It is hard to say you are building a domestic ecosystem if the local contribution is just a PDF and a determined smile.

It is also important not to muddle the pieces. The research-credit package sits alongside the MOU. It is not the same thing as the MOU’s legal force, because the MOU barely has legal force to begin with. Same week, same story, different instrument.

The same goes for Anthropic’s Sydney signal. The company says it will share more about its local team and leadership in coming weeks as it prepares to open a Sydney office. Readers should treat that as evidence of direction, not a finished footprint.

Why this is a wedge, not a breakthrough

So what changed? Not procurement preference. Not a binding infrastructure deal. Not a guaranteed Australian Claude campus rising heroically from the eucalyptus.

What changed is that Anthropic now has a formal, government-recognized lane into four debates that will shape Australia’s next AI cycle: frontier-model safety, worker impact, local research capacity, and the terms under which future compute investment gets welcomed or chilled. That is more than symbolism and less than a contract, which is exactly why it matters.

It also fits a broader Anthropic pattern. The company is spending more time turning safety positioning into institutional relationships rather than just branding. In one market that can mean court fights, as in our piece on Anthropic’s Pentagon blacklist injunction. In another, it means getting inside the rules before the real infrastructure money shows up.

My read is simple: Australia has given Anthropic a landing lane, and Anthropic has happily accepted the map. The MOU will not decide who wins the next compute race on its own. But it does show how that race is increasingly being run: not just through chips and models, but through safety institutes, energy policy, workforce data, and political trust.

That is why this agreement is a sovereign AI wedge. Not because it is legally huge. Because it is strategically well placed.

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Anthropic’s own framing for the MOU, the Economic Index data-sharing plan, the AUD$3 million research-credit package, and the Sydney office signal.

The controlling text for what the MOU actually says, including the non-binding clause and the explicit statement that it gives no procurement preference.

The government’s 23 March expectations document, which links data-center approvals to national interest, energy, water, workforce, and local capability.

Ministerial framing that places the MOU inside Australia’s National AI Plan and calls it the first arrangement signed under that plan.

About the author

Talia Reed

Talia reports on product surfaces, developer tools, platform and category shifts, and the distribution choices that determine whether AI features become durable workflows. She looks for the moment where a launch stops being a demo and becomes an ecosystem move.

- Apr 1, 2026

- New York

Article details

- Category: AI Policy

- Last updated: April 11, 2026

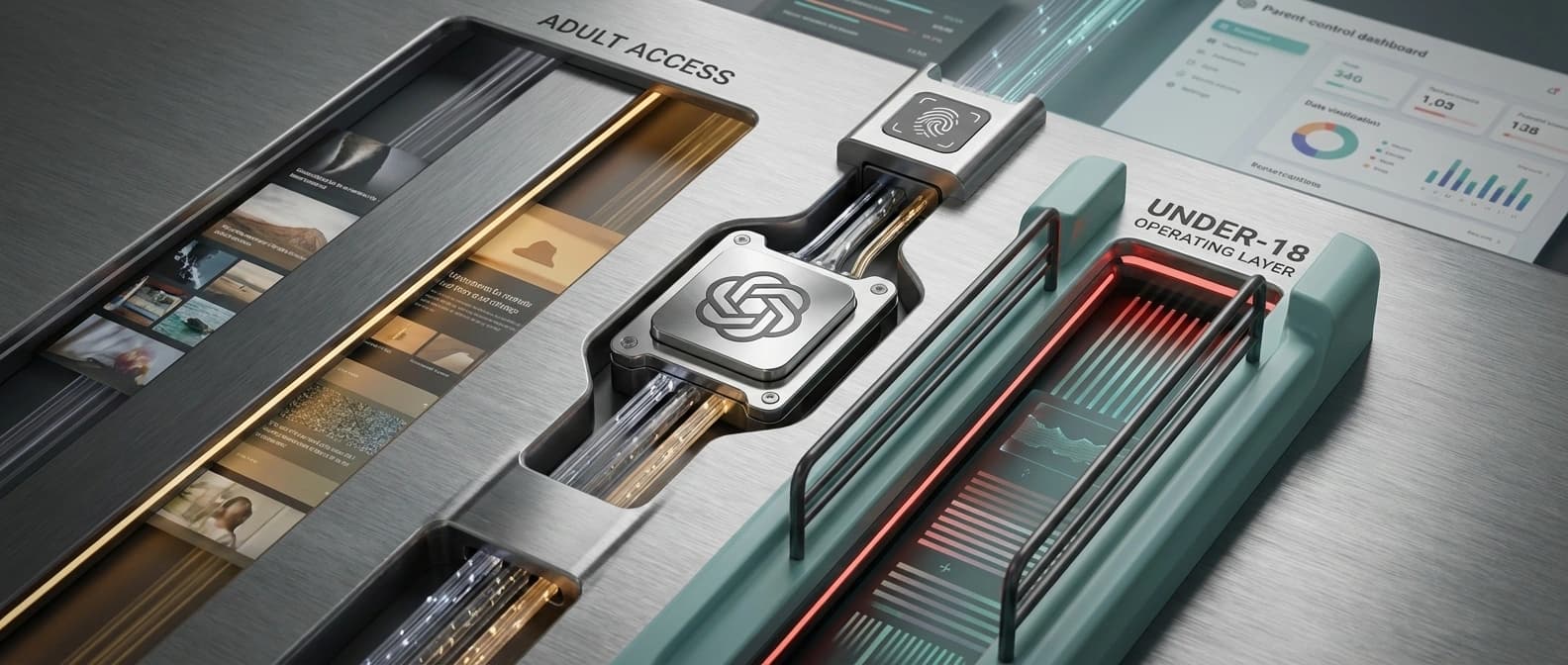

Lead illustration caption: The strategic read sits in the paperwork; the MOU now lives beside safety review and labour-impact material, while any future operating conditions stay on paper rather than on a rollout board.