Perplexity's Incognito Mode didn't hide anything

A class-action suit alleges Perplexity piped millions of AI chat transcripts to Meta and Google through hidden trackers. Incognito Mode did nothing to stop it.

Incognito Mode didn't stop the trackers. It just stopped you from seeing them.

On March 31, 2026, a class-action complaint landed in federal court alleging that Perplexity AI shared millions of user chat transcripts — including conversations about health conditions, financial decisions, and legal matters — with Meta and Google through hidden ad trackers embedded in its interface. The lawsuit filed March 31 also claims that Perplexity's "Incognito Mode," the feature users would reasonably expect to shield their queries, did absolutely nothing to stop this data from flowing to advertisers.

Ars Technica first reported the story this week. The Verge confirmed the core tracker and data-sharing allegations shortly after.

Here is what makes this different from the usual "tech company has privacy problem" story: the trackers at the center of this complaint are structural. They are not a bug. They are the same adtech infrastructure that runs across most of the commercial web, now embedded inside an AI chat tool where people type their most sensitive questions.

The trackers were always on

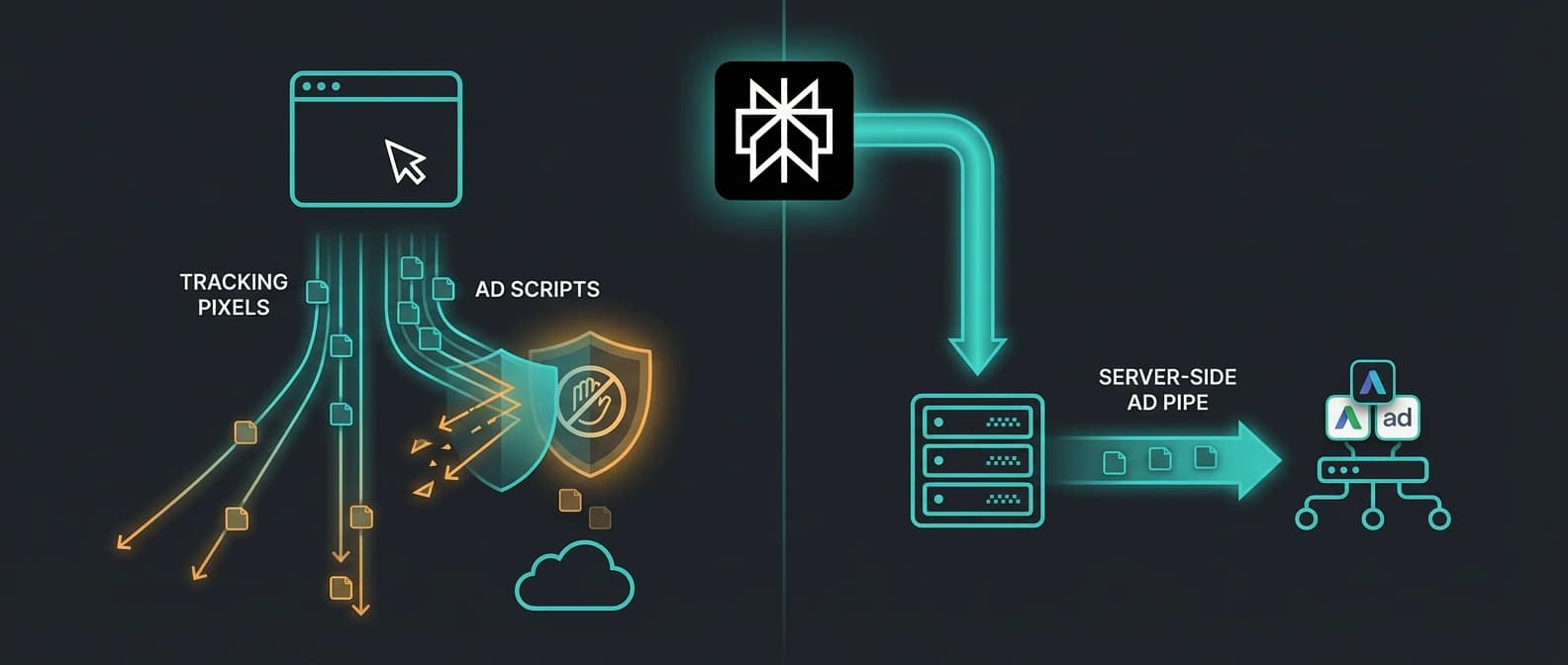

According to the complaint, Perplexity's web interface contained embedded tracking pixels and scripts from Meta (the Meta Pixel) and Google (Google Analytics and Google Ads tags). These trackers fired during active chat sessions, collecting query text, response content, and metadata that could link conversations back to individual users through device fingerprints and logged sessions.

The complaint identifies several tracker mechanisms:

| Tracker | Type | What it collects | Blockable? |

|---|---|---|---|

| Meta Pixel | Client-side | Page events, query content, user metadata | Yes (ad blockers catch it) |

| Google Analytics | Client-side | Session data, interaction events | Yes |

| Google Ads tags | Client-side | Conversion events, query metadata | Yes |

| Meta Conversions API | Server-side | Matched user data, event payloads | No |

That last row is the one that changes the stakes, and I'll get into it below. But even the client-side trackers are remarkable here. Most websites track browsing behavior. Perplexity tracks what you type into a chat box — questions about symptoms, tax questions, relationship advice, legal research. The content is categorically more sensitive than a product page view.

This is also where the parallel to other AI tools becomes hard to ignore. When we looked at how GitHub Copilot handles data usage policy, the tension was between training data and user trust. Perplexity's case is different in mechanism but similar in pattern: user data flows somewhere users did not expect, and the company's stated policy does not match the technical reality.

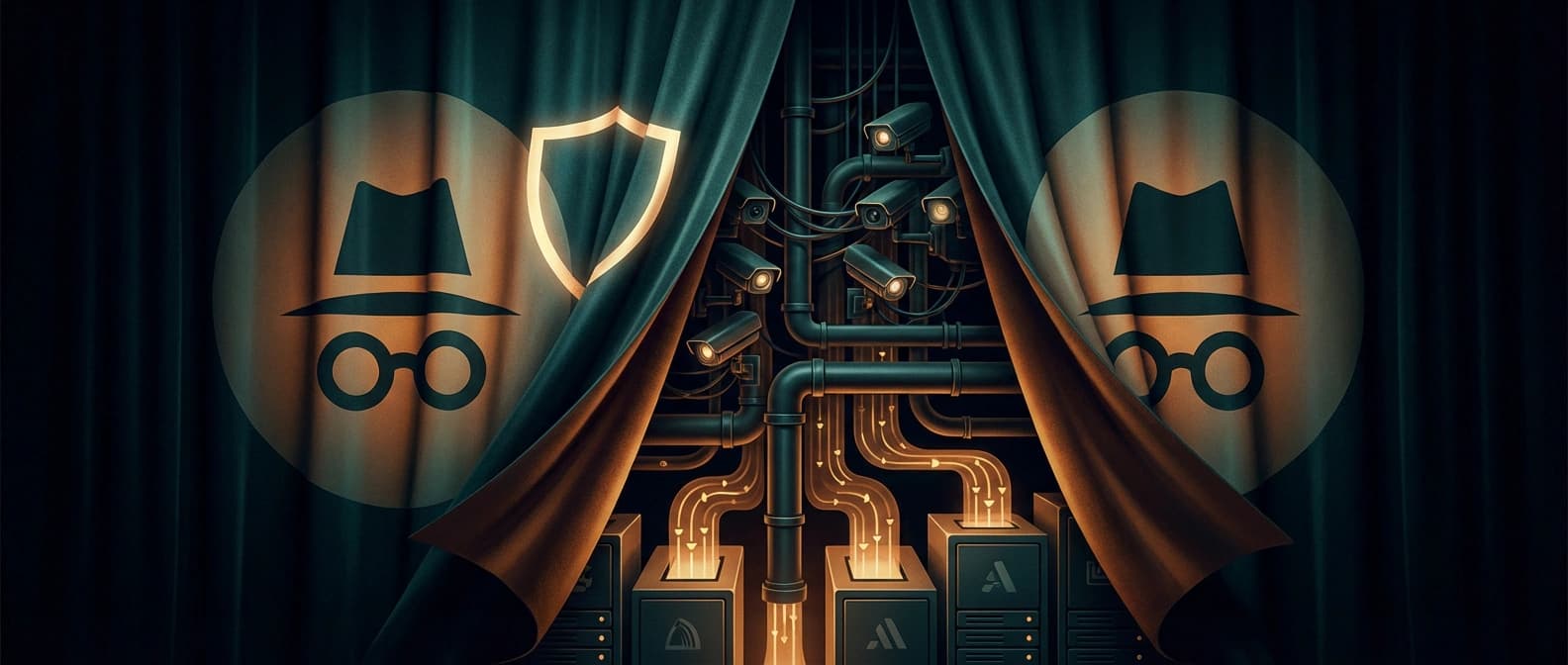

Incognito Mode was a label, not a shield

Perplexity marketed Incognito Mode as a way to search privately. The name itself invokes browser-incognito conventions — the expectation that your activity stays local and disappears when the session ends.

According to the complaint, it did neither. Incognito Mode may have stopped Perplexity from saving queries to your account history, but the trackers continued to fire. The data still reached Meta and Google's servers. The "private" session was private only from your own Perplexity account page.

I'll be honest: calling a feature "Incognito Mode" while hidden trackers pipe your health questions to Meta's ad infrastructure is a bold branding choice. It is the kind of thing that looks fine in a product roadmap and looks like a potential liability in a courtroom.

The complaint cites Perplexity's own privacy policy page, arguing that the policy's data-sharing disclosures do not adequately describe the tracker-driven sharing the lawsuit alleges. If the court agrees, that gap between stated policy and technical behavior becomes a central legal question — and potentially a precedent for how AI chat tools disclose data flows.

The server-side tracker most coverage skips

Most of the coverage so far focuses on the Meta Pixel and Google tags. Those are the visible trackers — the ones an ad blocker can catch. The complaint's more serious allegation involves Meta's Conversions API.

The Conversions API is a server-side tracking mechanism. Instead of running JavaScript in your browser (where ad blockers can intercept it), it sends data directly from Perplexity's servers to Meta's servers. Your browser never sees the request. Your ad blocker never touches it. From the user's perspective, it is invisible by design.

This matters because the Conversions API was built specifically to survive the decline of third-party cookies and the rise of ad-blocker adoption. Meta's own documentation describes it as a way to maintain ad-measurement accuracy when client-side tracking fails. It is not a side channel. It is the intended backup for the main channel.

When the complaint alleges that Perplexity used the Conversions API to share user chat data with Meta, it is describing a data pipeline that no user-facing privacy control — Incognito Mode, browser privacy settings, or ad blockers — can stop. The data leaves Perplexity's backend. The user has no visibility and no opt-out mechanism beyond not using the product at all.

This is the structural vulnerability. It is not unique to Perplexity. Any AI chat tool that embeds server-side ad trackers has the same exposure. The reason most coverage misses it is simple: you need to read the complaint carefully, understand how the Conversions API works, and connect the two. Most quick-turn news coverage stops at "company had trackers."

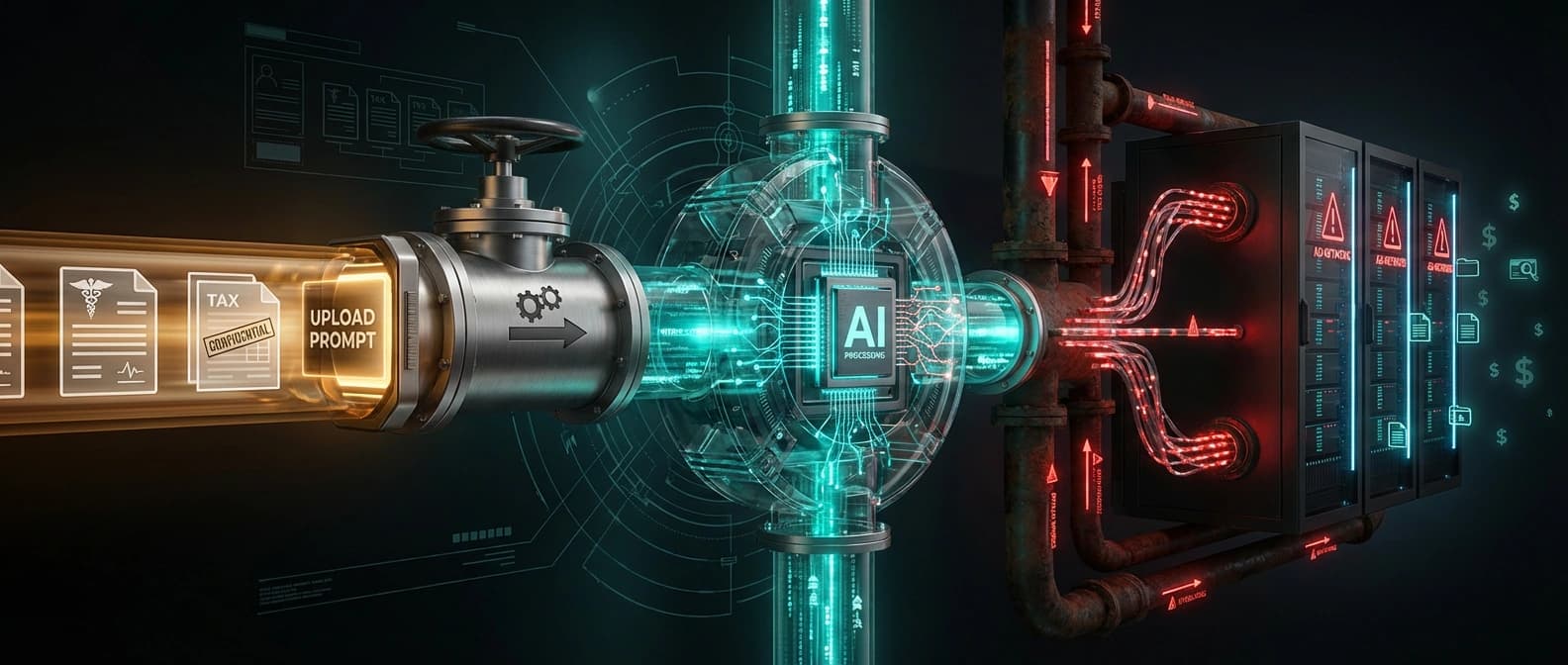

The upload pipeline makes everything worse

Perplexity actively encourages users to upload documents — medical records, financial statements, legal filings — so the AI can analyze them. The product literally prompts you to do it. That is a useful feature. It is also, if the tracker allegations hold, a mechanism for funneling some of the most sensitive personal data in existence into an ad-targeting pipeline.

Think about the chain: a user uploads a medical summary or a tax return. Perplexity processes it. The response appears on screen. The trackers fire. The data — or at minimum the metadata and interaction signals tied to that session — reaches Meta and Google's ad systems. The user never consented to this flow in any meaningful way, because the consent was buried in a privacy policy that did not accurately describe the mechanism.

This is worse than a search engine tracking queries, because search queries are self-composed. Users choose their words. Uploaded documents contain raw personal data the user did not write for the purpose of sharing. The exposure is categorically different, and the complaint makes this point directly.

If this sounds like it connects to broader questions about how AI companies handle sensitive user data, it does. Our coverage of OpenAI's teen safety blueprint and age prediction dealt with a different mechanism but the same structural question: are AI companies building safety and privacy controls that match the sensitivity of the data they collect? In Perplexity's case, the complaint argues the answer is no.

What this lawsuit could actually change

The class covers all non-Pro, non-Max Perplexity users in the United States from December 2022 through February 2026. That is a large class. The legal claims include violations of the federal Wiretap Act, the California Invasion of Privacy Act, and the California Consumer Privacy Act.

Here is why this case has precedent potential beyond Perplexity:

-

Wiretap Act claims are rare in adtech cases. The statute requires interception of communications. If the court accepts that AI chat transcripts qualify as "communications" under the Wiretap Act, every AI chat tool with embedded trackers inherits the same legal exposure. That is not a narrow ruling. That is a new category of liability.

-

CCPA claims force disclosure. California's consumer privacy law gives users the right to know what data is collected and shared. If Perplexity's privacy policy failed to accurately describe tracker-driven sharing, the CCPA violation is straightforward — and the standard applies to every company doing business in California.

-

The Incognito Mode claim tests consumer protection. If the court finds that "Incognito Mode" created a misleading impression of privacy, that finding could apply to any feature name or marketing language that implies privacy without delivering it.

This is why the "Perplexity is evil" framing is a distraction. The story is not about one company's culture or ethics. It is about whether AI chat tools — as a product category — can embed standard adtech infrastructure without triggering wiretap liability, consumer-protection claims, and CCPA disclosure requirements. The answer to that question affects every AI company operating in the United States, not just Perplexity.

Our reporting on the Anthropic Pentagon blacklist injunction covered a different legal arena, but the pattern is familiar: government and private litigation are converging on AI companies from multiple angles, and each case narrows the space for vague privacy assurances.

What to ignore — and what to actually watch

Ignore:

- "Perplexity is uniquely bad." The tracker infrastructure at issue here is standard adtech. The Conversions API exists because Meta built it to bypass privacy controls. The problem is structural, and singling out Perplexity lets every other AI chat tool off the hook.

- Competitive framing. This is not "Perplexity vs. ChatGPT vs. Claude." Every AI chat product with embedded trackers has the same vulnerability. The competitive angle is noise.

- IPO speculation. Perplexity's valuation, business model, and IPO timeline are irrelevant to whether your health questions reached Meta's servers.

- "AI is dangerous" culture-war framing. This is not a story about AI risk. It is a story about adtech surveillance inside a specific product category.

Watch:

- How the court handles the Wiretap Act claim. If AI chat transcripts qualify as protected communications, the legal ground shifts under every AI chat tool with trackers.

- Whether Perplexity changes its tracker architecture. The company may remove or restructure its trackers before the case goes further. That would be a concrete signal that the legal risk is real, not just theoretical.

- Whether other AI chat tools audit their own trackers. If the Conversions API vulnerability is as structural as the complaint suggests, other companies should be checking their own stacks right now. Silence on this front is telling.

- The CCPA disclosure standard. If the court finds Perplexity's privacy policy inadequate, every AI company operating under California jurisdiction needs to review its own disclosures. That is a compliance event, not a PR problem.

The broader governance question — who gets to see the data you type into an AI chat box, and whether you have any meaningful control over that — is exactly the kind of trust infrastructure we tracked in our piece on Europe's AI procurement battle. The jurisdictions are different. The structural question is the same.

Bottom line: If you use any AI chat tool with embedded trackers, assume your conversations may reach ad networks. Incognito modes, browser privacy settings, and ad blockers do not protect you from server-side data sharing. The lawsuit against Perplexity could change the legal framework for that reality — or it could settle quietly and change nothing. Either way, the technical vulnerability is real right now.

Source file

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Primary reporting on the class-action complaint, including tracker details and Incognito Mode claims.

The full complaint filed March 31, 2026, detailing tracker mechanisms, Conversions API allegations, and class scope.

Perplexity's own privacy policy, which the lawsuit claims contradicts its actual data-sharing practices.

Secondary reporting corroborating the tracker and data-sharing allegations.

About the author

Lena Ortiz

Lena tracks the economics and mechanics behind AI systems, from serving architecture and open-weight deployment to developer tooling, platform shifts, product decisions, and the operational tradeoffs that shape what teams actually run. Her reporting is aimed at builders and operators deciding what to trust, adopt, and maintain.

- 24

- Apr 10, 2026

- Berlin

Archive signal

Reporting lens: Operating leverage beats ideological posturing.. Signature: If the cost curve moves, the product strategy moves with it.

Article details

- Category

- AI Policy

- Last updated

- April 11, 2026

- Public sources

- 4 linked source notes

Byline

Covers the economics, tooling, and operating realities that shape how AI gets built, shipped, and run.