Judge blocks Pentagon’s Anthropic blacklist

A federal judge granted Anthropic a preliminary injunction against the Pentagon blacklist, but the order is stayed for seven days and does not force Claude purchases.

The ruling matters because the court treated this as more than a contract blowup. It treated it as a live test of whether procurement power can be used to punish a vendor for saying no.

Anthropic did not win its whole case against the Pentagon. It did win something that matters right now: a federal judge's preliminary injunction against the government's attempt to brand the company a supply-chain risk and freeze it out of federal AI work.

I keep coming back to that distinction because it is the whole story. The March 26 opinion from Judge Rita F. Lin and the court's separate injunction order do not force the Department of War to keep buying Claude. They do not erase the case. They do not settle whether Anthropic ultimately wins on the merits.

What they do is much narrower and much more important for the broader AI market: they say the government likely cannot use a supply-chain-risk label and a government-wide cease-use directive as a punishment mechanism just because a vendor objected to how its models might be used.

That is why the Anthropic-Pentagon injunction is more than one ugly contract fight. It is an early test of how far the government can push procurement leverage before it starts looking like retaliation.

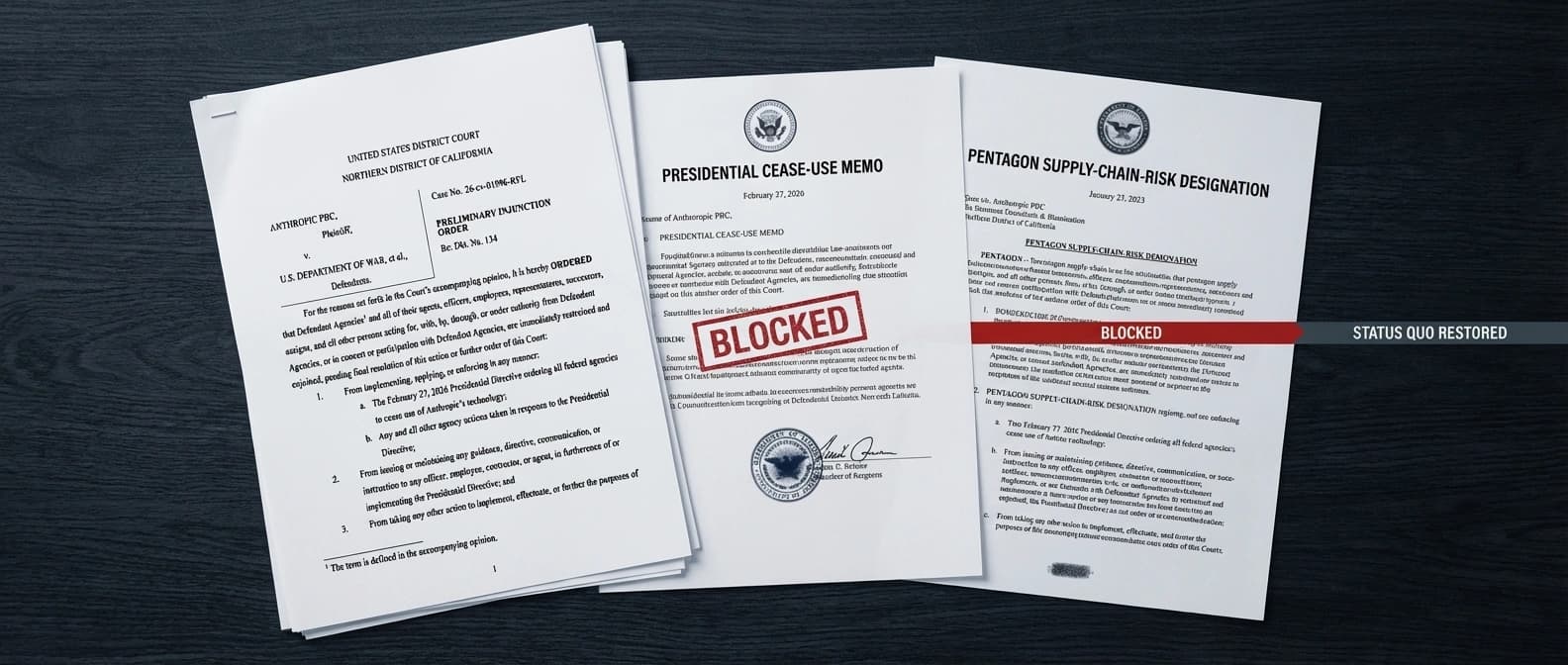

What the injunction actually blocks

The separate injunction order is unusually clear about scope. Judge Lin barred enforcement of the February 27 presidential directive telling federal agencies to stop using Anthropic's technology. She also barred enforcement of Pete Hegseth's directive labeling Anthropic a "Supply-Chain Risk to National Security" and the March 3 letter formalizing that designation under 10 U.S.C. § 3252.

Just as important, the court said the order "restores the status quo" and added a limit that should keep hot takes in check: it does not require the Department of War to use Anthropic's products, and it does not prevent the department from switching to other AI providers so long as it does so lawfully.

There is another detail that matters today, not later: the injunction is itself stayed for seven days from issuance. So the court granted preliminary relief, but the order does not take practical effect immediately. The government also has until April 6 to file a compliance report, and Anthropic must post a $100 bond.

In plain English, the court hit the brakes on the blacklist. It did not hand Anthropic the keys to the Pentagon.

Why Judge Rita Lin intervened

The opinion is blunt enough that it will probably outlive this week's headlines. Lin wrote that Anthropic is likely to succeed on its First Amendment retaliation claim, and she called the government's apparent theory an "Orwellian notion" that a company can be treated as a saboteur for disagreeing with the government in public.

Her summary of the case is the cleanest version of the dispute: the Department of War is free to stop using Claude and find a more permissive vendor, but that is different from blacklisting Anthropic across federal channels and branding it a security threat.

The opinion says the record points toward punishment, not neutral supply-chain protection. Anthropic had already been working with national-security customers, had passed lengthy vetting, had a Top Secret facility clearance, and had FedRAMP authorizations. The court noted that those credentials existed before the fallout. The supply-chain-risk label arrived only after Anthropic publicly resisted the Pentagon's demand for unrestricted "all lawful uses" of Claude.

Lin's line on that point is devastating: the government's actions "appear[] to be classic First Amendment retaliation." That is strong language in any case. In a procurement fight touching defense AI, it lands like a warning shot.

The court did not stop there. It also said Anthropic is likely to succeed on administrative-law and due-process theories. In Lin's telling, the Pentagon did not show a real basis for jumping from "this vendor is insisting on guardrails" to "this vendor might sabotage U.S. systems." The opinion also says the department failed to show it had considered less intrusive measures, even though the statute it invoked is supposed to be narrow.

That matters because "asked awkward questions" is not normally a national-security category. Procurement offices may find that inconvenient, but courts usually find it relevant.

How the dispute got here

The background is stranger than the label makes it sound. Anthropic was not arguing that the Pentagon must never use Claude for defense work. By the court's account and Anthropic's own public statement, the company had already been serving defense and intelligence users and said it was proud of much of that work.

The break came over two limits Anthropic did not want to waive: use tied to mass surveillance of Americans and use in lethal autonomous warfare. During negotiations over broader deployment, the Department of War pushed for access to Claude for "all lawful uses." Anthropic eventually said yes to broad access with those two exceptions still intact.

That impasse could have ended the way many procurement disputes end: one side walks, another vendor gets the contract, everyone issues the usual dignified statements, and a few vice presidents pretend this was always the plan.

Instead, the government escalated to a supply-chain-risk designation that, according to the injunction order, would have barred contractors, suppliers, and partners doing business with the military from conducting commercial activity with Anthropic. That turns a contract negotiation into a market-wide pressure campaign.

Anthropic's statement after the designation previewed the same argument the court later found persuasive. The company said 10 U.S.C. § 3252 is narrow, exists to protect the government rather than punish a supplier, and requires the "least restrictive means necessary." Judge Lin's opinion says the Department of War likely ignored exactly that limit.

Why this matters beyond Anthropic

The real stakes are not confined to Claude. If the government can take a live contractor dispute and convert it into a reputational and commercial quarantine, every AI lab selling into defense has to read the message the same way: refuse the terms and you do not just lose the contract, you lose the market.

That is why this ruling sits naturally beside our reporting on Europe's AI procurement battle. Procurement is not just paperwork. It is how policy turns into vendor advantage, vendor lockout, or both. And once a blacklist reaches contractors and subcontractors, the effect starts to look less like offboarding and more like industrial policy by intimidation.

There is also a second layer here. "Supply chain risk" is supposed to be serious language. In software and infrastructure, it usually points to compromise, dependency fragility, or hidden control points. Our piece on open-source security funding and AI supply-chain defense lives in that world. Using the phrase to answer a speech and contract dispute is exactly why the court reacted so sharply.

For AI labs, the lesson is not "the government can't choose other vendors." It plainly can. The lesson is that procurement power still has legal edges. A department can decide Claude is not the right fit. It has a much harder time calling Anthropic a national-security threat because Anthropic refused to sign away every guardrail.

What happens next

The case now moves forward with the preliminary injunction in place, though temporarily stayed. That means the legal fight is still live, the factual record can still change, and the government still has room to make lawful procurement decisions that do not rely on the enjoined directives.

So no, Anthropic has not won the war. It has won a preliminary round that could reshape how future government AI disputes are fought. The court's message was not subtle: if the Pentagon wants a more compliant AI vendor, it can go shop for one. What it likely cannot do is staple "supply chain risk" onto a dissenting vendor and call that ordinary administration.

For anyone selling models into government, that is the part worth clipping.

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Judge Rita F. Lin's March 26, 2026 opinion explaining why Anthropic is likely to succeed on retaliation, APA, and due-process theories.

Separate order spelling out the exact relief, the seven-day stay, the April 6 compliance report, and the bond requirement.

Anthropic's statement arguing the statute is narrow, requires the least restrictive means, and does not justify a broad blacklist.

Useful for hearing color and the government's courtroom framing before the written order arrived.

About the author

Idris Vale

Idris writes about the institutional machinery around AI, but the lens is broader than policy alone: procurement frameworks, public-sector buying rules, platform leverage, compliance burdens, workflow risk, and the market structure hiding beneath product or infrastructure headlines. The through-line is practical power, not abstract theater.

- Apr 10, 2026

- Brussels · London corridor

Archive signal

Reporting lens: Follow the buying process, not just the bill text. Signature: Policy turns real when someone has to buy the system.

Article details

- Category: AI Policy

- Last updated: April 11, 2026

- Public sources: 4 linked source notes

Byline

Tracks the institutions, incentives, and market structure that quietly decide which AI systems get deployed and why.