OpenAI teen safety blueprint becomes ChatGPT's U18 mode

OpenAI is turning its teen safety blueprint into product behavior, with a separate U18 ruleset, age prediction defaults, tighter guardrails, and parental controls.

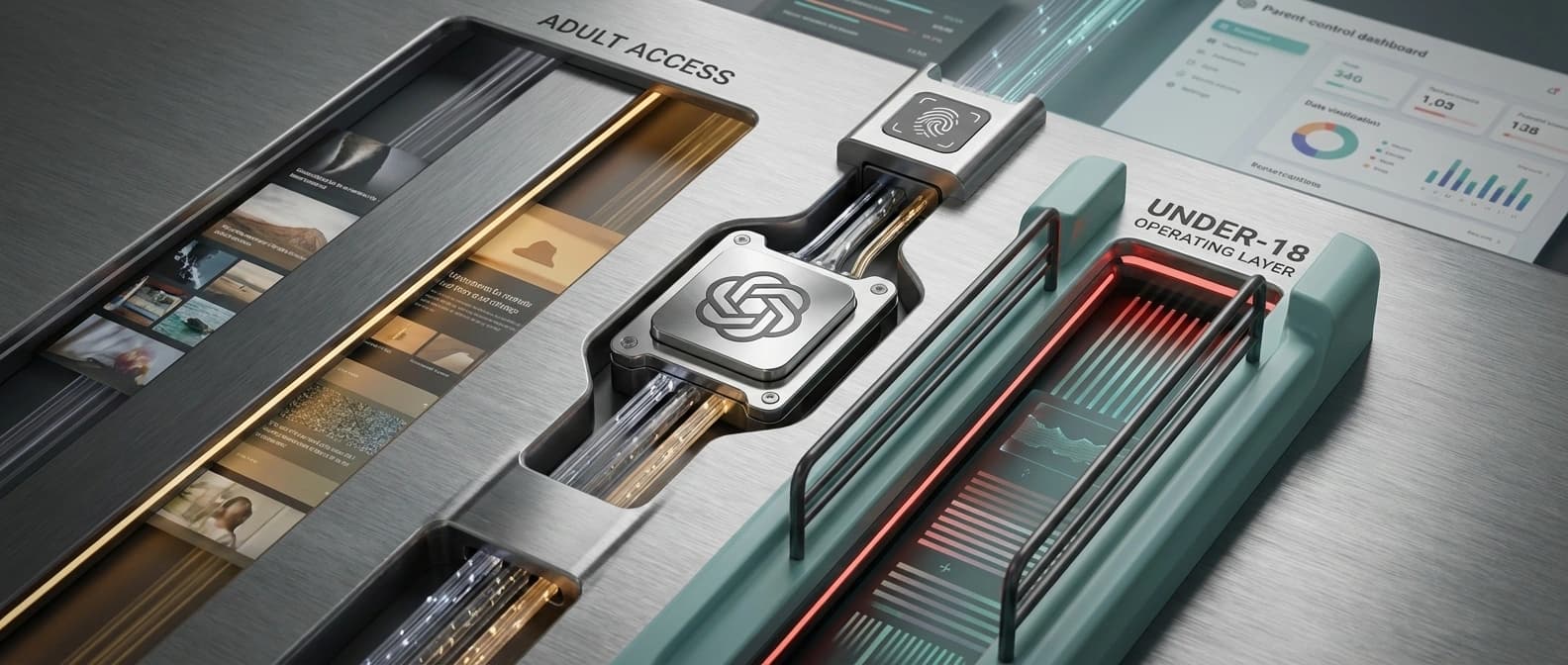

OpenAI is not just publishing teen principles. It is wiring a second operating mode into ChatGPT.

OpenAI is not treating teen safety as a side policy memo anymore. Across its Teen Safety Blueprint, the U18 Model Spec update, Sam Altman's freedom and privacy note, and its age prediction documentation, the company lays out something much more concrete: ChatGPT is getting a distinct under-18 behavior layer, and when OpenAI is unsure how old you are, it may default your account to the under-18 experience first.

That is the real shift. Plenty of companies say teens deserve safer AI. OpenAI is formalizing a separate U18 operating mode where teen safety can outrank some of the privacy and freedom defaults it wants for adults, then wiring that choice into age prediction, parental controls, feature gates, and narrower model behavior. It feels like the next step after the more operational safety posture we saw in OpenAI's recent safety bug bounty. The company keeps moving safety talk out of the PDF and into the product.

Put plainly, OpenAI says three things are true at once:

- some teen safeguards already apply when users say they are under 18

- parental controls are part of the live family-facing stack

- age prediction is rolling out, and uncertain-age accounts can default into the U18 experience

This is not a market standard. Not yet. But once one of the biggest consumer AI platforms decides uncertainty should resolve toward the teen rulebook, everybody else inherits the argument.

This is a second operating manual, not a nicer press release

The easiest way to misunderstand the Teen Safety Blueprint is to read it as a values page. It is that, but it is also a deployment plan.

OpenAI says it is already putting the framework into action across products, has strengthened safeguards for younger users, launched parental controls, and is building toward age prediction so ChatGPT can tailor behavior automatically.

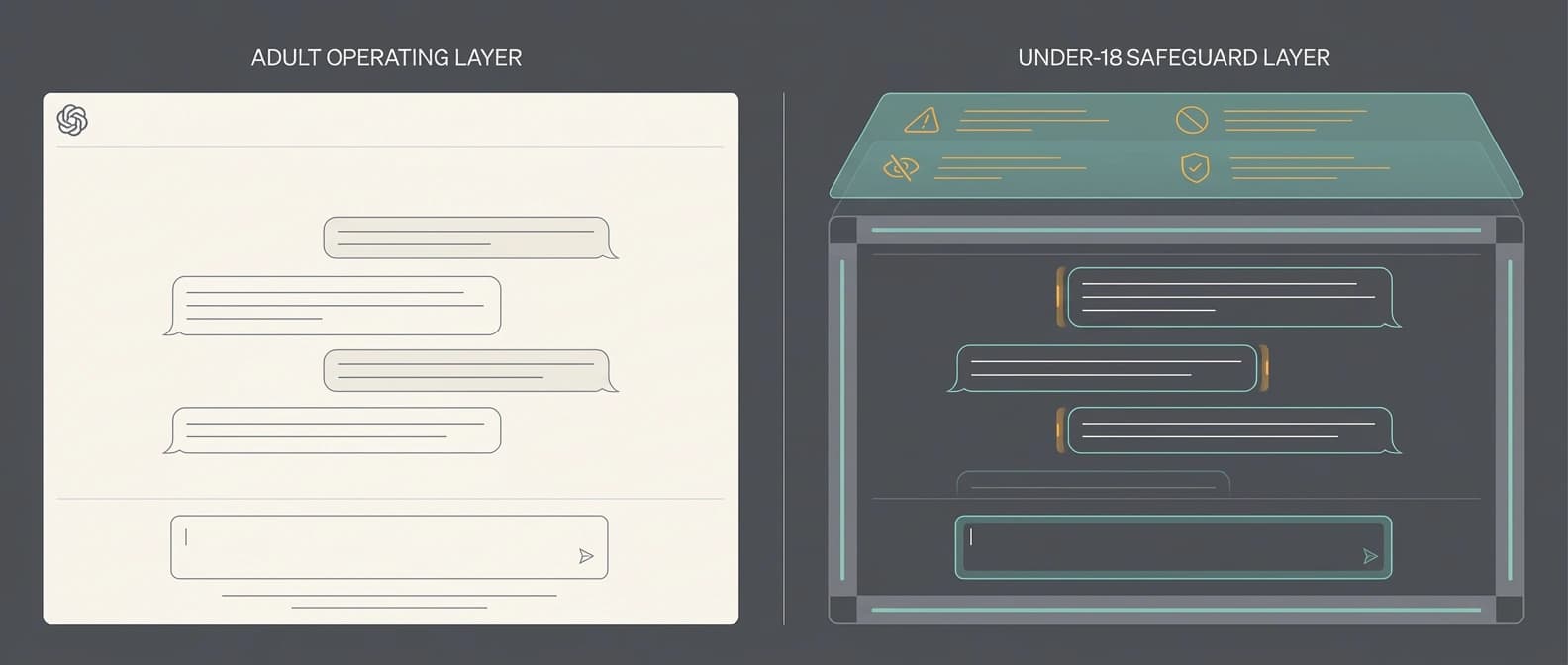

Corporate values are cheap. Default settings are expensive. OpenAI is saying ChatGPT will no longer operate as one flat policy surface. There is one experience for adults and another for users who are known or suspected to be minors. The difference reaches into what the model should say, which features parents can switch off, and when the system can push users toward offline support.

Some of this is live now. OpenAI says the U18 principles already inform protections for self-declared teens and that parental controls extend across newer surfaces including group chats, Atlas, and the Sora app. Some of it is newer and still moving, especially the age-prediction layer.

The U18 Model Spec narrows freedoms adults still get

The U18 Model Spec update is where the blueprint stops sounding abstract and starts sounding like product law. OpenAI says the model should follow four commitments in teen contexts:

- put teen safety first, even when that conflicts with other goals

- promote real-world support and trusted offline relationships

- treat teens like teens, without talking down to them or treating them as adults

- be transparent about expectations

That does not just create a gentler tone. It changes behavior in riskier territory.

| Area | Adult framing OpenAI describes | U18 framing OpenAI describes |
|---|---|---|
| Freedom | Adults should get broad latitude within safety bounds | Teen safety can override freedom when the two conflict |
| Privacy | OpenAI argues for strong AI-conversation privacy for adults, with limited serious-risk exceptions | Teen privacy remains important, but can become secondary to safety |
| Sensitive requests | Adult users may get more room in sexual or fictional-harm contexts if the request is allowed | U18 users face tighter refusals, safer alternatives, and more guardrails |
| Uncertain age | Adult experience stays open when OpenAI is confident the user is an adult | If OpenAI is unsure, it can default the account into U18 mode |
| Escalation | Serious misuse can trigger monitoring or review | Teens get stronger nudges to trusted adults and limited emergency escalation logic |

The specific high-risk areas are telling. OpenAI says teen conversations should receive extra care around self-harm and suicide, romantic or sexualized roleplay, graphic or explicit content, dangerous activities and substances, body image and disordered eating, and requests to keep secrets about unsafe behavior. That is much broader than a simple adult-content filter.

Altman's explainer gives the clearest contrast. He says an adult who wants flirtatious chat may get it, while a teen should not. He also says an adult asking for help with fictional writing about suicide may get assistance, while a teen should not be allowed to use that same creative-writing door. Same model family, different rulebook.

That is the part many headlines will miss. The teen safety blueprint is not only about refusing bad content. It is about redefining which kinds of ambiguity still count as acceptable for minors.

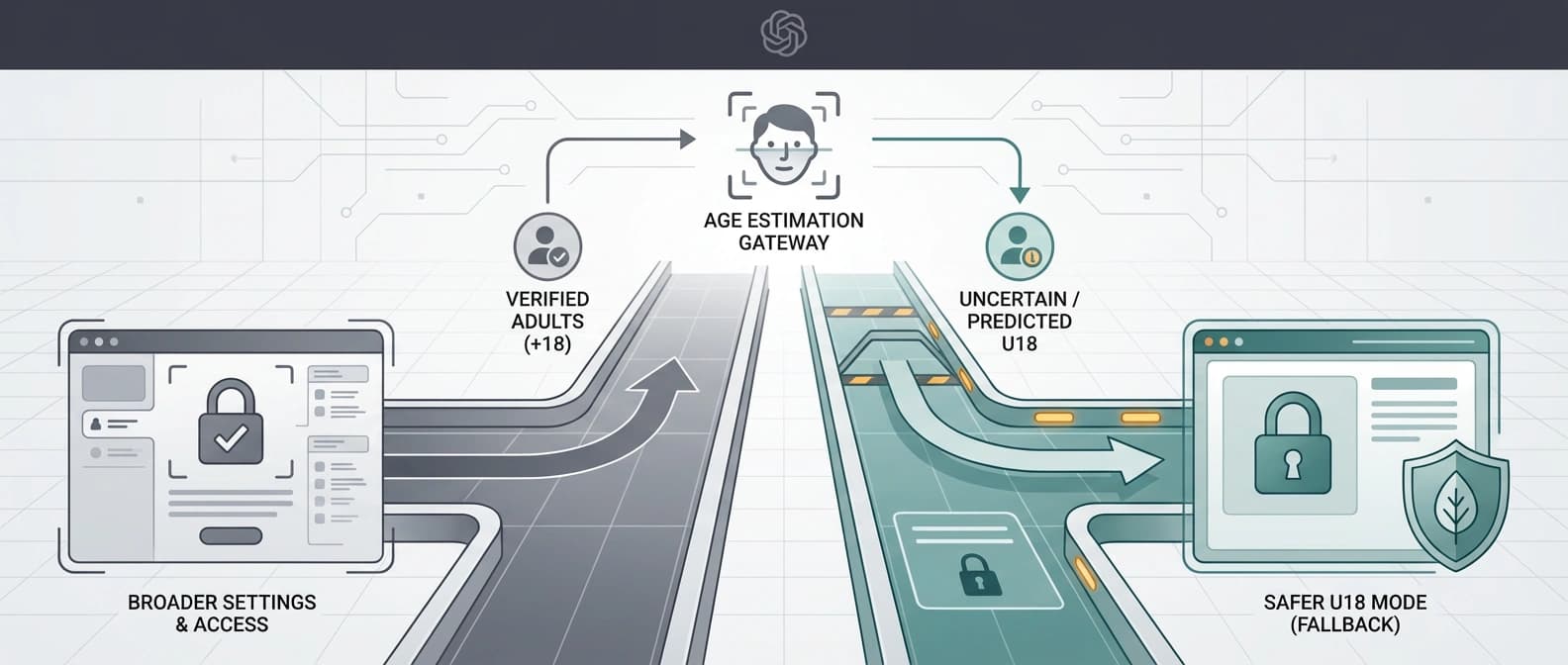

Age prediction is the real leverage point

If the blueprint is the philosophy, age prediction is the switch.

OpenAI's help documentation says the feature is rolling out globally on ChatGPT consumer plans, with EU rollout delayed for regional requirements. It says the system uses account-linked signals to predict whether someone may be under 18, including broad topic patterns, time-of-day usage, and account age. It also says age prediction can still run even if a user entered a date of birth at signup.

This is where the whole package gets sharper, and more controversial. OpenAI says that if it predicts an account belongs to someone under 18, extra safety settings turn on automatically. If the system is not confident, it still takes the safer route and defaults to the under-18 experience. Adults who get swept into that lane can verify their age to remove the extra settings.

That shifts the burden onto adults to prove they should have the broader experience.

In some countries that can mean a selfie, a government ID, or both through Persona, the third-party verifier OpenAI uses. OpenAI says it does not receive the ID or selfie itself, only age-confirmation information, and Persona deletes those materials within seven days. Privacy advocates will still have plenty to say about the friction and the false-positive risk.
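The defaulting rule OpenAI describes is easier to reason about as a small decision function. The sketch below is illustrative only: the names `AgeSignal`, `select_experience`, and the `0.9` threshold are assumptions made for this example, not anything OpenAI has published about how its age-prediction system is built or tuned. What it does capture is the asymmetry in the documentation, where only a confident adult prediction or a completed age check opens the adult experience, and everything else resolves to U18.

```python
from dataclasses import dataclass
from enum import Enum


class Experience(Enum):
    ADULT = "adult"
    U18 = "u18"


@dataclass
class AgeSignal:
    # Hypothetical fields: OpenAI does not publish its model's outputs.
    predicted_adult_probability: float  # 0.0 to 1.0
    verified_adult: bool = False  # True after a successful age check


# Illustrative threshold only; the real confidence bar is not public.
ADULT_CONFIDENCE_THRESHOLD = 0.9


def select_experience(signal: AgeSignal) -> Experience:
    """Resolve uncertainty toward the U18 experience."""
    # A completed age verification overrides the prediction.
    if signal.verified_adult:
        return Experience.ADULT
    # Only a confident adult prediction keeps the adult experience.
    if signal.predicted_adult_probability >= ADULT_CONFIDENCE_THRESHOLD:
        return Experience.ADULT
    # Likely minors and uncertain cases both land in U18 mode.
    return Experience.U18
```

Under these assumptions, an account scored at 0.6 gets the U18 experience until its owner verifies as an adult, which is the burden shift described above: the uncertain middle is not split evenly, it is assigned to the teen rulebook.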

The broader pattern is familiar. As with GitHub's Copilot data-usage default shift, the setting that matters most is often the one users never actively chose. Defaults are where platform policy quietly becomes platform power.

OpenAI is at least being unusually blunt about it. If it cannot tell whether you are 17 or 27, it would rather annoy an adult than under-protect a minor.

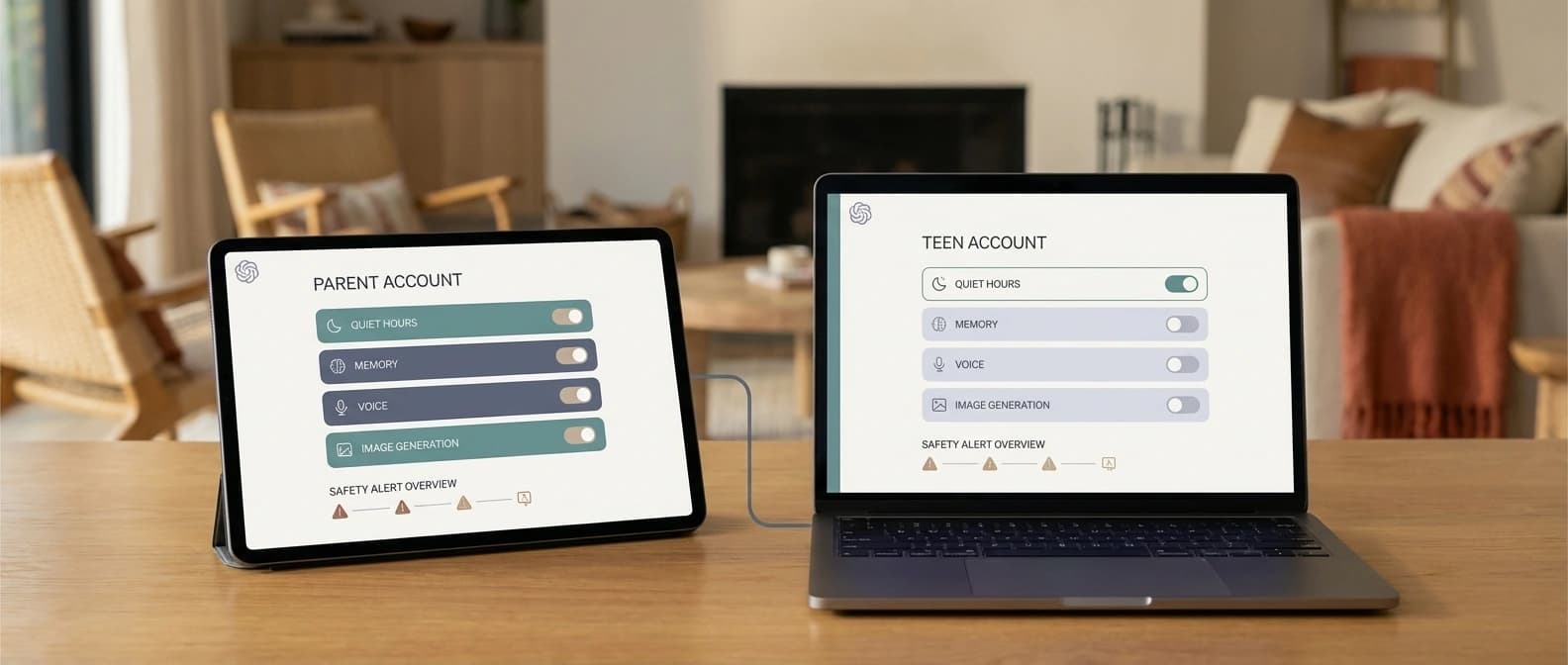

Parental controls turn ChatGPT into family software

The parental controls FAQ makes the blueprint feel even less theoretical. Parents and teens can link accounts by email or phone. Once linked, parents can manage settings such as reduced sensitive content, model-training participation, memory, voice mode, image generation, quiet hours, and some controls related to Sora and Atlas.

That last part matters. OpenAI is not fencing off teen safety inside one chat window. It is extending the logic across its broader consumer surface area. Even after the turbulence around Sora's Disney-deal collapse, Sora still appears inside OpenAI's teen-governance perimeter, which tells you the company is thinking at the platform level.

OpenAI says parents do not get access to their teen's ordinary conversations, and the controls do not provide real-time monitoring. Teens can also unlink accounts.

But it is not hands-off either. The FAQ says parents can receive safety notifications in certain limited situations involving serious self-harm concerns. OpenAI describes a two-step process in which automated systems flag activity and a trained review team decides whether the signs look like acute distress. If they do, parents receive an alert and support resources. In rare emergency scenarios, OpenAI says further action may be taken.
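The two-step notification process can be sketched as a gate followed by a human decision. This is a minimal illustration under stated assumptions: `route_safety_signal`, `Outcome`, and the callable reviewer are hypothetical names invented for the example, and the rare emergency path is left out because the FAQ does not describe what it involves.

```python
from enum import Enum
from typing import Callable


class Outcome(Enum):
    NO_ACTION = "no_action"
    PARENT_ALERT = "parent_alert_with_resources"


def route_safety_signal(
    auto_flagged: bool,
    reviewer_sees_acute_distress: Callable[[], bool],
) -> Outcome:
    """Hypothetical two-step flow: automated flag, then human review."""
    # Step 1: automated systems decide whether anything reaches a human.
    if not auto_flagged:
        return Outcome.NO_ACTION
    # Step 2: a trained reviewer decides whether it looks like acute distress.
    if reviewer_sees_acute_distress():
        return Outcome.PARENT_ALERT
    return Outcome.NO_ACTION
```

The point of the structure is that neither step alone triggers a parent alert. Unflagged conversations never reach a reviewer, and a flag without reviewer confirmation produces no notification.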

Silicon Valley eventually turns everything into a settings page. In this case, that settings page is carrying a lot of moral weight.

The practical implication is that ChatGPT is starting to behave less like a generic chatbot and more like family software, with schedules, feature gates, restricted modes, and a limited escalation path around safety risk.

Sam Altman says the tradeoff out loud

The most revealing document in the whole package is probably Altman's short teen safety, freedom, and privacy essay. Companies usually hide the uncomfortable hierarchy behind bland language about balance. Altman does the opposite. He spells out the conflict.

For adults, OpenAI says AI conversations should receive very strong privacy protection, potentially approaching the privileged status people expect from doctors or lawyers, while still allowing limited monitoring or review for threats to life, plans to harm others, or massive cybersecurity risks. It also says adults should get broad freedom within safety limits.

Then comes the carveout. For teens, OpenAI says safety comes ahead of privacy and freedom. It says the company is building age prediction to separate minors from adults, may ask for ID in some places, will train ChatGPT not to engage in flirtatious talk with under-18 users, and will not help teens with suicide-related creative-writing requests that might still be allowed for adults in some contexts. Altman also says that if an under-18 user appears to be in suicidal crisis, OpenAI will attempt to contact parents and, if necessary in imminent-harm situations, authorities.

What I keep coming back to is the hierarchy. OpenAI is explicitly defining a category of users for whom privacy and freedom are real values, but not top values.

That will make some people deeply uncomfortable, and not unreasonably. Age prediction can be wrong. Parents are not always safe confidants. Older teens sit in a grey zone where "treat them like teens" can mean protection or overreach, depending on the case. OpenAI is making a hard call anyway, and betting that many policymakers and parents will prefer a company that states the tradeoff plainly over one that pretends the tradeoff does not exist.

This will shape the market, even if it does not become the standard

None of this means OpenAI has invented the definitive model for youth safety in AI. It has not. The blueprint will attract criticism from privacy advocates, free-expression absolutists, teen-rights advocates, and anyone worried about false positives. It should. These are real tradeoffs, not a compliance coloring book.

Still, the move matters because it turns a fuzzy question into an operational one. If a major consumer AI platform can maintain a distinct U18 ruleset, default uncertain users into it, and bolt on family controls, regulators now have a more concrete object to point at. Rivals do too.

That is especially relevant in places where policy becomes buying behavior. As we argued in EU AI procurement may matter more than lab headlines, institutional markets often care less about the prettiest demo than about whether a system is documentable, governable, and easy to defend. A company that can say it already has age assurance, teen-specific model behavior, and a parent-support framework may look a lot more prepared when schools, public agencies, or app-store gatekeepers start asking hard questions.

The bottom line is simple. OpenAI's teen safety blueprint is not just a statement that teenagers need extra care. It is a product plan for a separate ChatGPT behavior layer, with age prediction as the switch, U18 model rules as the operating logic, and parental controls as the household interface. Once a company decides uncertainty should default to "treat this user as under 18," policy has officially left the PDF and walked into production.


Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Core announcement for the blueprint framing, product-safety claims, and the broader policy roadmap.

Source for the U18 Principles, the specific higher-risk categories, and OpenAI's explanation of how teen contexts change model behavior.

Sam Altman's explainer on why OpenAI says teen safety can override privacy and freedom defaults.

Most concrete source for rollout status, age-prediction signals, adult age verification, and regional rollout details.

Details the actual parent-teen linking flow, setting controls, quiet hours, and the limits on parent visibility.

Useful for confirming the freshness and clustering of OpenAI's April teen safety push.

About the author

Lena Ortiz

Lena tracks the economics and mechanics behind AI systems, from serving architecture and open-weight deployment to developer tooling, platform shifts, product decisions, and the operational tradeoffs that shape what teams actually run. Her reporting is aimed at builders and operators deciding what to trust, adopt, and maintain.

- 24

- Apr 10, 2026

- Berlin

Archive signal

Reporting lens: Operating leverage beats ideological posturing. Signature: If the cost curve moves, the product strategy moves with it.

Article details

- Category

- AI Policy

- Last updated

- April 11, 2026

- Public sources

- 6 linked source notes

Byline

Covers the economics, tooling, and operating realities that shape how AI gets built, shipped, and run.