NVIDIA OpenShell makes agent security infrastructure

OpenShell matters less as another framework than as a control plane that moves policy, sandboxing, and model routing outside the agent’s reach.

The interesting move is not the agent itself. It is the decision to put the rules somewhere the agent cannot rewrite.

I think NVIDIA OpenShell matters because it treats agent security as a placement problem, not a prompt-writing contest.

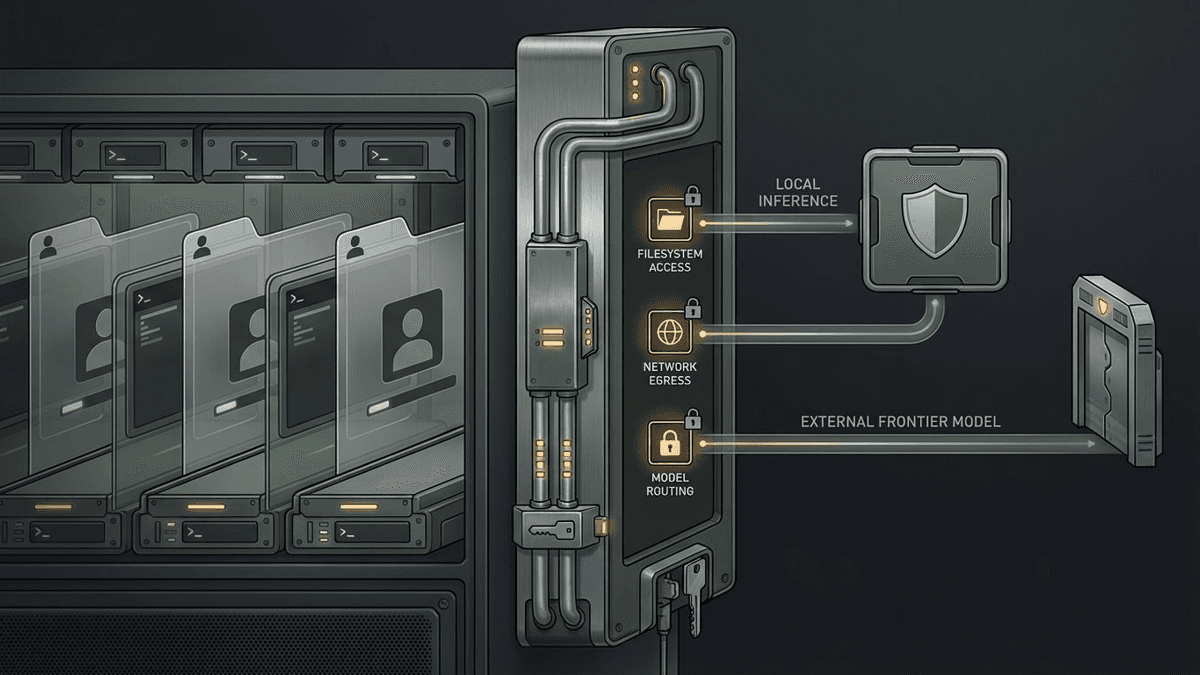

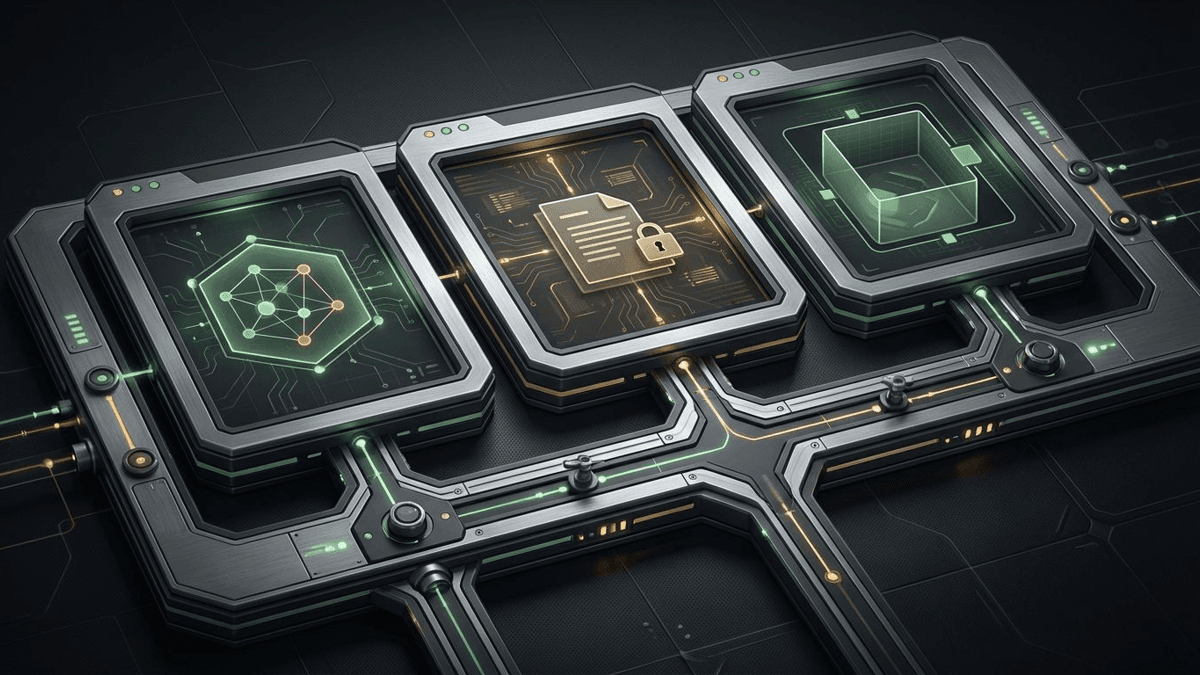

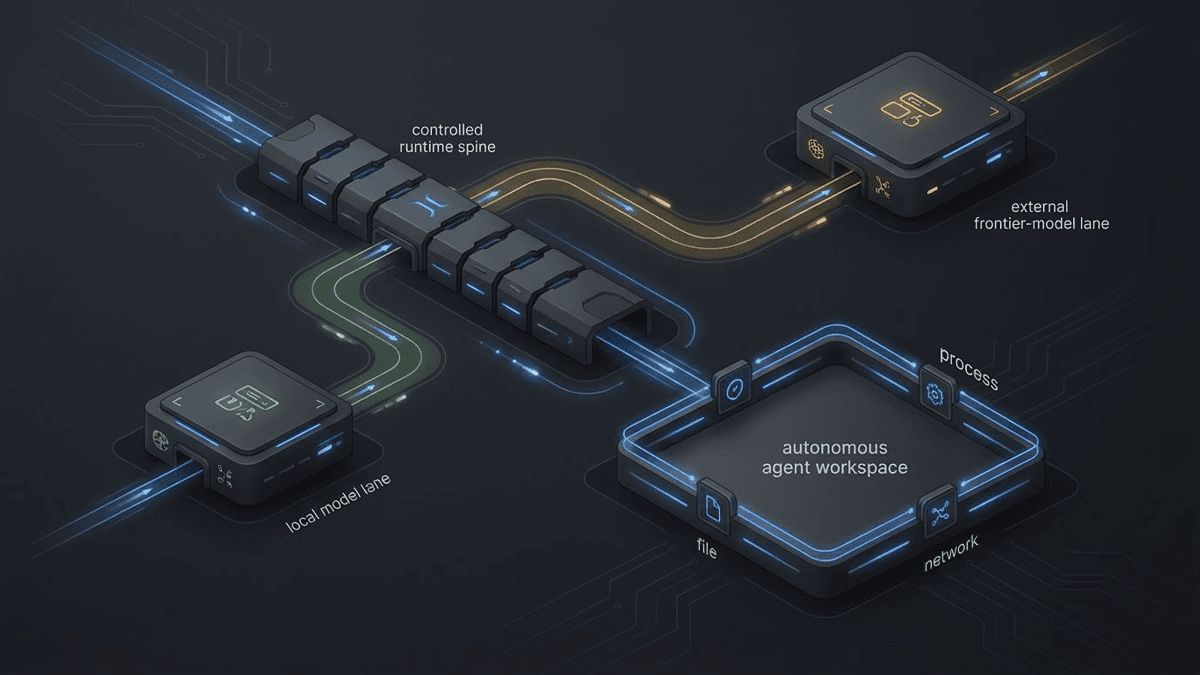

That distinction sounds dry until you look at what the project actually does. NVIDIA's developer write-up and GitHub README describe three core pieces: an isolated sandbox, a policy engine, and a privacy router. Sandboxes start with minimal outbound access. Policies are declarative YAML. Network and inference rules can be hot-reloaded. Filesystem and process constraints are fixed when the sandbox is created. In other words, the runtime gets the final word instead of the agent politely promising to behave.
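To make the static/dynamic split concrete, here is a minimal Python sketch. It assumes one policy document with two halves. The first half holds filesystem and process rules, which are locked at creation. The second half holds network and inference rules, which a running sandbox may reload. The names `StaticPolicy`, `DynamicPolicy`, `Sandbox`, and `reload` are hypothetical illustrations. They are not OpenShell's actual YAML schema or API.

```python
from dataclasses import dataclass
from typing import FrozenSet


# Hypothetical model of the policy split. Field names are illustrative
# assumptions, not OpenShell's actual YAML policy schema.
@dataclass(frozen=True)
class StaticPolicy:
    """Filesystem and process rules: locked when the sandbox is created."""
    writable_paths: FrozenSet[str]
    allowed_binaries: FrozenSet[str]


@dataclass(frozen=True)
class DynamicPolicy:
    """Network and inference rules: may be hot-reloaded."""
    # An empty allowlist models minimal outbound access: no hosts permitted.
    allowed_hosts: FrozenSet[str] = frozenset()
    inference_route: str = "local"


class Sandbox:
    def __init__(self, static: StaticPolicy, dynamic: DynamicPolicy = DynamicPolicy()):
        self._static = static
        self._dynamic = dynamic

    @property
    def dynamic(self) -> DynamicPolicy:
        return self._dynamic

    def reload(self, static: StaticPolicy, dynamic: DynamicPolicy) -> None:
        """Apply an updated policy document to a running sandbox."""
        if static != self._static:
            # Changing filesystem/process rules requires a new sandbox.
            raise PermissionError("static policy is fixed at sandbox creation")
        self._dynamic = dynamic
```

The sketch's `reload` takes the whole document, not a patch. Any edit to the locked half is refused, even when the network half would otherwise apply cleanly. That is one plausible way to enforce "fixed at creation." A real runtime might choose to reject or ignore such edits differently.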

That is a much more serious answer to agent risk than the usual launch-day incense.

OpenShell puts the rules outside the agent

A lot of current agent stacks still act as if the model, the harness, and the guardrails can all live in roughly the same place. The system prompt says "please do not," the tool wrapper waves a permission banner, and everyone hopes the reasoning loop remains in a cooperative mood. I do not love those odds.

NVIDIA is pretty direct about the failure mode: an agent that is effectively policing itself. Once you give that system shell access, live credentials, package installs, or permission to spawn subagents, the old safety story starts to feel very thin. It is the difference between telling a teenager not to raid the cookie jar and actually moving the jar to a locked cupboard.

That is why OpenShell's browser-tab analogy works for me. Each agent session gets its own isolated environment. Network access is not assumed. The policy layer decides what actions can run. The boundary lives outside the agent process.

Why out-of-process policy beats prompt policing

The strongest evidence is in the repo examples. In the README quickstart, a fresh sandbox cannot even curl the GitHub API. After a policy update, read-only GET requests are allowed while POST requests are still blocked at the proxy layer. The policy engine evaluates access at the level of binary, destination, method, and path. That is not hand-wavy guardrail talk. That is runtime policy with actual teeth.
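The quickstart behavior maps onto a simple default-deny matcher over those four dimensions. This is a hedged Python sketch, not OpenShell's engine. `Rule`, `Request`, and `evaluate` are hypothetical names. Glob matching on paths is an assumption about how path rules might be expressed.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase
from typing import FrozenSet, List


@dataclass(frozen=True)
class Rule:
    binary: str                # executable making the call, e.g. "/usr/bin/curl"
    host: str                  # destination host
    methods: FrozenSet[str]    # HTTP methods this rule permits
    path_glob: str             # glob pattern over the request path


@dataclass(frozen=True)
class Request:
    binary: str
    host: str
    method: str
    path: str


def evaluate(rules: List[Rule], req: Request) -> bool:
    """Default-deny: allow only if one rule matches binary, host, method, and path."""
    for rule in rules:
        if (rule.binary == req.binary
                and rule.host == req.host
                and req.method.upper() in rule.methods
                and fnmatchcase(req.path, rule.path_glob)):
            return True
    return False
```

With an empty rule list, every request is denied, like the fresh sandbox. A single hypothetical rule such as `Rule("/usr/bin/curl", "api.github.com", frozenset({"GET"}), "/repos/*")` lets read-only calls through. POSTs to the same host are still refused, and so are GETs issued by a different binary.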

The same pattern shows up in credential handling and model routing. OpenShell manages credential bundles as providers and injects them at runtime instead of storing them in the sandbox filesystem. Its privacy router is meant to keep sensitive context on local compute and route to outside frontier models only when policy allows. That is what makes the project feel more like an agent control plane than one more framework.
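A rough Python sketch of both ideas follows. It assumes a gateway process sitting outside the sandbox. The gateway decides where an inference request goes. It attaches the provider credential only at egress, so the token never exists inside the sandbox. `Gateway`, `InferenceRequest`, the `sensitive` flag, and the `"local"`/`"frontier"` backend labels are all hypothetical. This is not how OpenShell's privacy router or provider system is actually implemented.

```python
from dataclasses import dataclass
from typing import Dict, Mapping


@dataclass(frozen=True)
class InferenceRequest:
    prompt: str
    sensitive: bool  # hypothetical flag, e.g. set by policy tags or a classifier


class Gateway:
    """Runs outside the sandbox; the agent never sees its state."""

    def __init__(self, providers: Mapping[str, str], allow_external: bool):
        # Credentials are held in the gateway process, not the sandbox filesystem.
        self._providers = dict(providers)
        self.allow_external = allow_external

    def route(self, req: InferenceRequest) -> str:
        """Local by default; an external model only when policy allows it."""
        if req.sensitive or not self.allow_external:
            return "local"
        return "frontier"

    def outbound_headers(self, backend: str, headers: Mapping[str, str]) -> Dict[str, str]:
        """Copy the sandbox's headers and inject the credential at egress."""
        out = dict(headers)
        token = self._providers.get(backend)
        if token is not None:
            out["Authorization"] = f"Bearer {token}"
        return out
```

The design choice worth noticing is that `outbound_headers` returns a new dict. The headers the sandbox sent are never mutated, so nothing the agent can read ever contains the token. Any token string used with this sketch is a placeholder.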

I also think this is why OpenShell sits differently from things like OpenAI's agent stack or Google's Gemini tooling push. Those stories are about workflow ownership. OpenShell is about enforcement around the workflow. Different layer. Same race to become unavoidable.

This is real software, but it is still early

There is enough substance here to take it seriously. The repo is public under Apache 2.0. NVIDIA says OpenShell works with familiar agent surfaces like Claude Code, Codex, Copilot, OpenCode, and community sandboxes such as OpenClaw. Cisco has already said its DefenseClaw framework will hook into OpenShell as part of a broader push around agent identities, zero-trust access, and runtime guardrails.

At the same time, the README throws a bucket of cold water on the hype in a way I actually appreciate. It calls the current release "Alpha software — single-player mode" and describes it as proof-of-life: one developer, one environment, one gateway. Good. More launch posts should come with that level of adult supervision.

That means the honest reading is mixed in the useful way. The architecture is real. The repo is real. The enterprise category is real. The outcome is not settled.

My take on the agent control plane race

What OpenShell gets right is the core design move: put the sandbox outside the agent, put policy outside the agent, and put routing decisions outside the agent. Once agents can keep acting without constant human supervision, trust stops being a vibes problem and starts being an infrastructure problem.

That does not make runtime security the whole stack. Together AI's post-training work still matters, because a better-behaved agent is easier to contain and easier to trust. But model reliability and runtime enforcement solve different problems. One helps the agent act better. The other helps limit damage when acting better is not enough.

I do not know whether OpenShell itself becomes the standard. I do think the standard is going to have to look a lot like this. Once the agent asks for more reach, somebody other than the agent needs the last word. That is the part NVIDIA understands.

Source file

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

NVIDIA’s higher-level framing for OpenShell, including the browser-tab analogy and the argument for system-level policy enforcement.

Primary source for the architectural description: sandbox, policy engine, privacy router, and the out-of-process enforcement thesis.

Public repo source for the alpha-stage README, policy examples, supported agent integrations, and the project’s own maturity caveats.

Confirms repository activity, creation date, most recent push date, license, and public adoption signals such as stars and forks.

Useful as ecosystem signal that runtime guardrails and zero-trust controls for agents are becoming a live enterprise security category, not as proof of OpenShell adoption.

About the author

Lena Ortiz

Lena tracks the economics and mechanics behind AI systems, from serving architecture and open-weight deployment to developer tooling, platform shifts, product decisions, and the operational tradeoffs that shape what teams actually run. Her reporting is aimed at builders and operators deciding what to trust, adopt, and maintain.

- 24

- Apr 10, 2026

- Berlin

Archive signal

Reporting lens: Operating leverage beats ideological posturing. Signature: If the cost curve moves, the product strategy moves with it.

Article details

- Category

- Open Source AI

- Last updated

- April 11, 2026

- Lead illustration

- OpenShell’s pitch is simple: the last word on what an agent can touch should live outside the agent itself.

- Public sources

- 5 linked source notes

Byline

Covers the economics, tooling, and operating realities that shape how AI gets built, shipped, and run.