Safetensors joins PyTorch Foundation weight stack

Hugging Face has moved Safetensors into the PyTorch Foundation, turning the default open-model weight format into vendor-neutral infrastructure for the wider stack.

Safetensors is no longer just Hugging Face's safer file format. It is becoming shared plumbing for how the open model world passes weights around.

File formats do not usually get dramatic headlines. They sit in the background, do their job, and hope nobody notices them. That is the dream. The moment people start paying attention to a file format, something has usually gone wrong, or something much bigger is shifting underneath it.

That is why I keep coming back to Hugging Face moving Safetensors into the PyTorch Foundation. On paper, this can sound like one more governance post from the land of foundation logos and polite committee nouns. In practice, it is more interesting than that. Safetensors has already become the default way a huge share of open models get distributed. Now the format is being moved into a vendor-neutral home inside the same foundation stack that already houses projects like PyTorch, vLLM, DeepSpeed, Ray, and Helion.

That is not a cosmetic change. It is a clue.

The announcement from Hugging Face says Safetensors is joining the PyTorch Foundation as a foundation-hosted project under the Linux Foundation. The post also says the trademark, repository, and governance now sit with the Linux Foundation rather than any single company, even though Hugging Face's core maintainers will keep leading the project day to day. The PyTorch Foundation project page makes the technical case in plain English: this is a secure, fast format for model weights, designed to avoid arbitrary code execution while keeping zero-copy and lazy-loading behavior.

If model launches are the movie stars of open AI, Safetensors is the freight elevator. Nobody buys a ticket for the elevator. Everybody still depends on it.

The format just picked up a bigger political home

The cleanest way to read this move is to separate the confirmed present-tense facts from the more ambitious future-tense pitch.

| Detail | Status | Why it matters |
|---|---|---|
| Safetensors is now a PyTorch Foundation hosted project | Confirmed | The format moves into a broader open governance structure rather than living purely as a Hugging Face-origin project |
| The trademark, repo, and governance now sit with the Linux Foundation | Confirmed | That lowers the risk of one company being the sole long-term steward of a widely used format |
| Existing users should expect no breaking changes | Confirmed | This is a governance and roadmap move, not a forced migration event |
| The maintainer path is now formally documented in repo governance files | Confirmed | Contribution rules are clearer than informal "talk to the core team" culture |
| Safetensors may be used in PyTorch core as a serialization system | Planned, not complete | This is the part that could turn the move from symbolic into structural |
| Device-aware loading, TP/PP loading, and broader quantization support are on the roadmap | Planned, not complete | These features would make Safetensors more central to modern inference and training stacks |

The important part is that nothing breaks today. Models stored in Safetensors still work the way they worked yesterday. The format is the same. The APIs are the same. Hub behavior is the same. That matters, because the best infrastructure moves are usually the ones that do not force every downstream user into a weekend migration ritual.

But it would be a mistake to stop there. The story is not "nothing changes." The story is that ownership and trajectory change while the byte layout stays put.

Why Safetensors mattered before the Foundation move

Safetensors did not spread because the name sounded responsible. It spread because the old default was awkward in exactly the wrong way.

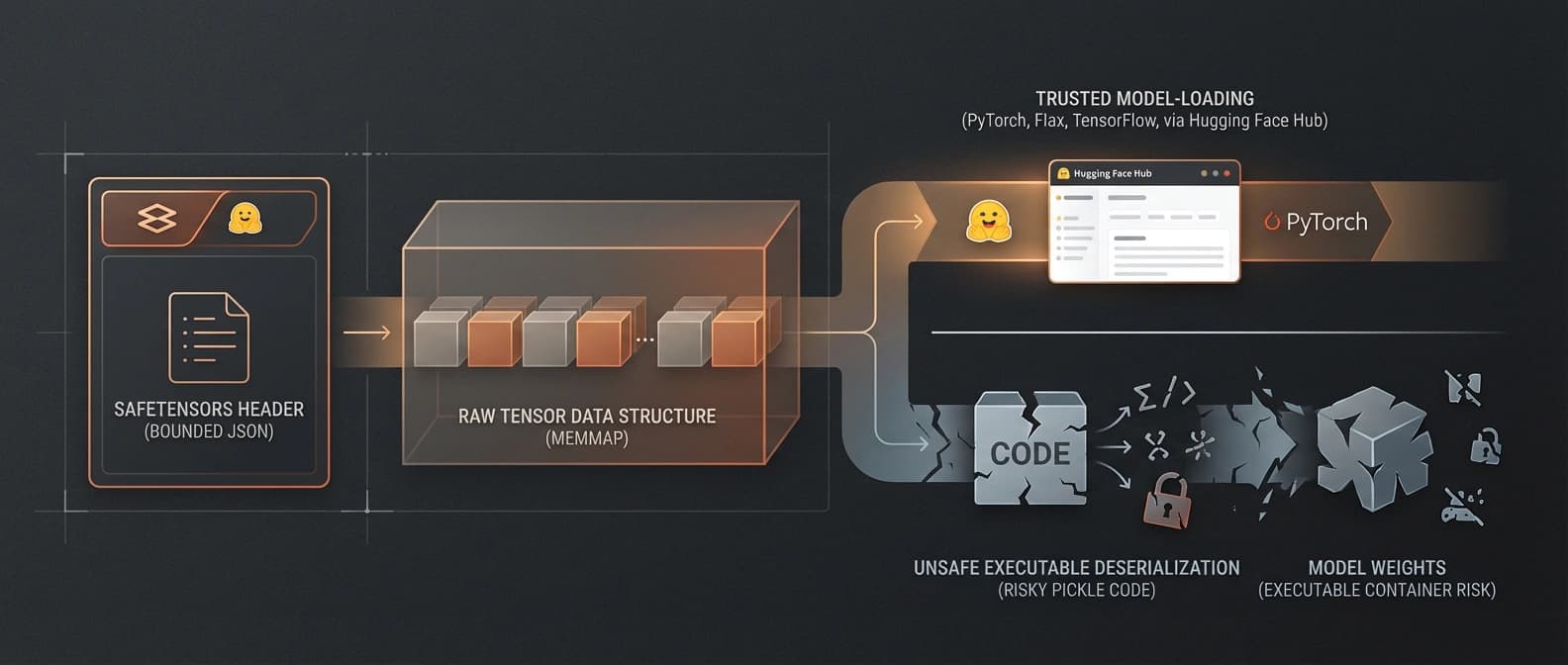

The problem was pickle. In the Python world, pickle-based model files came with a real code-execution risk during deserialization. That was tolerable when machine learning still felt like a smaller club and everyone more or less assumed the files came from people they trusted. It gets a lot less charming once open model sharing becomes a normal way the field works.
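For readers who have not seen the pickle problem up close, here is a minimal sketch using only the Python standard library. The `Weights` class is a hypothetical stand-in for a checkpoint object, and the payload is deliberately harmless; the point is only that the file, not the loader, decides which function runs.

```python
import os
import pickle


class Weights:
    """Hypothetical stand-in for a checkpoint object."""

    def __reduce__(self):
        # pickle records "call this function with these arguments" in the file.
        # os.getcwd is harmless; a hostile file could name any importable
        # callable instead.
        return (os.getcwd, ())


blob = pickle.dumps(Weights())

# Loading invokes the callable chosen by whoever produced the bytes.
result = pickle.loads(blob)

# The caller gets back whatever that callable returned (a str here),
# not a Weights instance.
print(type(result).__name__)
```

Nothing in the loading code asked for `os.getcwd` to run. That is the behavior a weights-only format is designed to rule out.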

Hugging Face's description of the format is blunt: Safetensors was built so model weights could be stored and shared without executing arbitrary code. The underlying structure is deliberately plain. The repo README describes a simple layout with a bounded JSON header and raw tensor data after it. The PyTorch Foundation page highlights the same point, along with a built-in 100 MB header limit for DoS protection, zero-copy reads, and lazy loading.
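To make that layout concrete, here is a rough sketch of it in plain Python: an 8-byte little-endian length, a JSON header of that length, then raw tensor bytes. The helper names (`write_toy_file`, `read_header`) and the exact value of `MAX_HEADER_BYTES` are my own assumptions for illustration, and the toy writer skips details a real serializer may handle, such as padding. It is not the library's implementation.

```python
import json
import os
import struct
import tempfile

# Assumed cap mirroring the 100 MB figure cited above; the real constant may differ.
MAX_HEADER_BYTES = 100 * 1000 * 1000


def write_toy_file(path, tensors):
    """Write {name: (dtype, shape, raw_bytes)} in a safetensors-style layout."""
    header, payload, offset = {}, b"", 0
    for name, (dtype, shape, raw) in tensors.items():
        header[name] = {
            "dtype": dtype,
            "shape": shape,
            "data_offsets": [offset, offset + len(raw)],
        }
        payload += raw
        offset += len(raw)
    header_bytes = json.dumps(header).encode("utf-8")
    with open(path, "wb") as f:
        f.write(struct.pack("<Q", len(header_bytes)))  # 8-byte header length
        f.write(header_bytes)                          # JSON description
        f.write(payload)                               # raw tensor data


def read_header(path):
    """Read only the header; nothing in the file is executed."""
    with open(path, "rb") as f:
        (header_len,) = struct.unpack("<Q", f.read(8))
        if header_len > MAX_HEADER_BYTES:
            # Refuse absurd headers before allocating memory for them.
            raise ValueError("header exceeds size limit")
        header = json.loads(f.read(header_len).decode("utf-8"))
    return header_len, header


with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "toy.safetensors")
    raw = struct.pack("<4f", 1.0, 2.0, 3.0, 4.0)  # a 2x2 float32 tensor
    write_toy_file(path, {"w": ("F32", [2, 2], raw)})
    header_len, header = read_header(path)

print(header["w"])
```

The reader learns every tensor's name, dtype, shape, and byte range from JSON alone. In this toy file the single tensor occupies bytes 0 to 16 of the data section.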

That combination ended up being unusually practical.

- It made the safety story easier to explain.

- It kept loading fast enough that people did not feel punished for doing the safer thing.

- It worked across multiple frameworks, not just one corner of the ecosystem.

That last point matters more than it sounds. Open models do not live inside one tidy software monoculture. They move through PyTorch, TensorFlow, Flax, inference servers, quantization pipelines, conversion scripts, local toolchains, and hosting layers. When we cover open model releases like Gemma 4's sprawling hardware tradeoffs, the flashy part is the model family or the benchmark.

The same goes for frontier open-weight pushes like Holo3. The dull but crucial part is still how those weights get packaged, loaded, and trusted.

That is where Safetensors quietly won.

The Hugging Face announcement says it is now the default format for model distribution across the Hugging Face Hub and others, used by tens of thousands of models across modalities. That line should not be read as empty chest-puffing. By this point, Safetensors has already graduated from niche safety patch into baseline ecosystem habit.

This is also why the Foundation move matters. You do not bother moving a format like this into neutral governance unless it has already crossed from "helpful project" into "shared dependency." Nobody forms a constitutional monarchy around a side script.

Why governance matters more than the press release makes it sound

Open source people love saying that code is what matters. Code does matter. Governance matters too, especially once a project becomes boring enough to be foundational.

That is the phase Safetensors is entering.

The Hugging Face post argues that moving into the PyTorch Foundation makes the project vendor-neutral and more community-owned. In the abstract, that is persuasive. In the concrete, the repo documents are even more revealing. The new GOVERNANCE.md spells out a consensus-based maintainer model, an appeal path, and a formal nomination route for future maintainers. The MAINTAINERS.md file then provides the useful reality check: the current formal list still names only Daniël de Kok and Luc Georges, both from Hugging Face.

I actually like that tension because it tells the truth. Safetensors has not magically become a sprawling multi-vendor parliament overnight. What changed is the container around the project. The path is now open for broader stewardship, but the day-to-day center of gravity has not instantly scattered to the winds.

That is healthier than pretending governance is already wider than it is.

The other reason this matters is political, not just procedural. Once a format becomes the way a big chunk of the ecosystem passes around model weights, control over that format starts to look like platform power. Not monopoly power in the cartoon sense. More like the quiet kind of leverage that comes from owning the default layer everyone else has built habits around.

Moving Safetensors into the PyTorch Foundation lowers the odds that the format will be treated as one company's house preference forever. It also makes coordination with adjacent stack projects a lot easier to justify. The Hugging Face post explicitly calls out collaboration with other Foundation-hosted projects rather than parallel work. That is not subtle. It is the whole strategy.

And that strategy lines up with where the rest of the open inference world is heading. The more projects like vLLM's serving stack turn model serving into serious infrastructure, the less tolerable it becomes for the weight format underneath them to feel like a semi-private implementation detail.

The same is true for vLLM's Triton backend work on AMD. Shared plumbing eventually wants shared rules.

This is really a model-distribution infrastructure story

The forward-looking part of the announcement is where the story stops being merely institutional and starts getting operational.

Hugging Face says the team is working with PyTorch so Safetensors may be used within PyTorch core as a serialization system for torch models. It also points to future work on device-aware loading and saving so tensors can load directly onto CUDA, ROCm, and other accelerators without unnecessary CPU staging. On top of that, the roadmap mentions first-class APIs for tensor parallel and pipeline parallel loading, plus broader formal support for formats such as FP8, GPTQ, AWQ, and sub-byte integer types.

That is a lot of plumbing language. Good. Plumbing language is the right language here.

Because if those roadmap items land, Safetensors stops being mainly a safer download format and starts becoming a deeper part of how big models move through real systems:

- weights arriving from a hub or registry

- weights loading onto specific accelerators with less waste

- only the needed slices loading for distributed inference or training

- quantized variants fitting into a cleaner common contract

That is a much bigger role.
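The "only the needed slices" idea follows from the layout itself: once a header gives byte offsets, a loader can seek straight to the rows it owns. The sketch below shows that idea with the standard library only. The function names, the row-wise split, and the assumption that rows divide evenly across ranks are all mine; this is not the roadmap API, which has not shipped.

```python
import json
import os
import struct
import tempfile


def write_toy_file(path, name, rows, cols, values):
    """Write one float32 tensor in a safetensors-style layout (toy writer)."""
    raw = struct.pack(f"<{rows * cols}f", *values)
    header = {name: {"dtype": "F32", "shape": [rows, cols],
                     "data_offsets": [0, len(raw)]}}
    header_bytes = json.dumps(header).encode("utf-8")
    with open(path, "wb") as f:
        f.write(struct.pack("<Q", len(header_bytes)) + header_bytes + raw)


def read_row_shard(path, name, rank, world_size):
    """Read only the rows owned by `rank`, skipping every other byte."""
    with open(path, "rb") as f:
        (header_len,) = struct.unpack("<Q", f.read(8))
        info = json.loads(f.read(header_len).decode("utf-8"))[name]
        rows, cols = info["shape"]
        begin, _end = info["data_offsets"]
        rows_per_rank = rows // world_size  # assumes an even split
        row_bytes = cols * 4                # 4 bytes per float32 value
        data_start = 8 + header_len         # tensor data follows the header
        f.seek(data_start + begin + rank * rows_per_rank * row_bytes)
        chunk = f.read(rows_per_rank * row_bytes)
    flat = struct.unpack(f"<{rows_per_rank * cols}f", chunk)
    return [list(flat[i * cols:(i + 1) * cols]) for i in range(rows_per_rank)]


with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "toy.safetensors")
    write_toy_file(path, "w", rows=4, cols=2, values=[float(i) for i in range(8)])
    shard = read_row_shard(path, "w", rank=1, world_size=2)

print(shard)
```

Rank 1 of 2 reads the last two rows and never touches the first half of the tensor. A first-class API would presumably handle dtypes, uneven splits, and device placement, which this sketch ignores.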

It would also fit the broader direction of the market. The open model world is becoming less about "can I download this checkpoint" and more about "can I load, shard, quantize, serve, and trust it without writing custom archaeology every time." The more that question dominates real deployments, the more value shifts toward the format and loading layer rather than the announcement banner sitting on top of it.

This is the part I would not overstate yet. The PyTorch core tie-in is still a stated ambition, not a finished integration. The distributed-loading and quantization pieces are roadmap items, not shipped facts. If you pretend those are already complete, you are writing fan fiction with better nouns.

Still, the direction is hard to miss. Safetensors is moving closer to the center of the open model runtime story.

What changes now for users and contributors

For normal users, almost nothing changes immediately, and that is probably the correct outcome.

If you pull a model from the Hub today and it uses Safetensors, your day should look boring. Same files. Same APIs. Same workflows. That is what the announcement promises, and nothing in the repo or Foundation materials suggests otherwise.

For contributors and companies building on top of the format, the change is bigger.

Now there is:

- a formal governance document

- a documented maintainer path

- a neutral foundation home

- a clearer argument for cross-project coordination with other PyTorch Foundation infrastructure

That does not guarantee healthy stewardship by itself. Foundations are not magic. They are just better containers for shared dependencies when the alternative is one vendor holding the keys forever while everyone else pretends not to notice.

The deeper shift is psychological. Safetensors used to read primarily as a Hugging Face answer to a concrete safety problem. It now reads as something closer to a common layer for the open ecosystem. That is a different identity, and identity matters because it changes what other projects feel comfortable depending on.

What will tell us this turned into real infrastructure power

Three things will tell us whether this turns into a real power shift or just a tidy governance upgrade.

First, watch whether PyTorch core actually adopts Safetensors more deeply as a serialization path. That is the fastest route from "widely used" to "hard to route around."

Second, watch whether the maintainer base broadens beyond Hugging Face. The current two-person list is not a scandal. It is just unfinished proof. If the project is truly becoming shared ecosystem infrastructure, the stewardship should eventually start to look shared too.

Third, watch whether the promised work around accelerator-aware loading, distributed loading, and quantization lands in a way other stack projects can use without drama. That is where the boring file format starts turning into the boring layer everyone is quietly grateful exists.

The bottom line for me is simple. Safetensors is no longer just the safer alternative to a risky old default. It is becoming part of the governance and runtime substrate under open AI model distribution. That may sound dry. It is also exactly the sort of dry change that ends up mattering for years.


Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Primary announcement for the move into the PyTorch Foundation, the vendor-neutral governance framing, the adoption claims, and the forward roadmap around PyTorch core, accelerator-aware loading, and quantization support.

Best primary source for the Foundation's plain-language framing of Safetensors as a secure, fast model-weight format with zero-copy, lazy-loading, cross-framework, and DoS-protection properties.

Shows the new formal governance model, consensus process, maintainer path, and appeal structure now attached to the project.

Important reality check on who formally maintains the project right now. It documents the current maintainer list rather than the aspiration.

Canonical repository for the format specification, implementation details, and the simple file-structure explanation used throughout the article.

Source for the developer-facing explanation of the format and its current role in model loading workflows across frameworks.

About the author

Idris Vale

Idris writes about the institutional machinery around AI, but the lens is broader than policy alone: procurement frameworks, public-sector buying rules, platform leverage, compliance burdens, workflow risk, and the market structure hiding beneath product or infrastructure headlines. The through-line is practical power, not abstract theater.

- 23

- Apr 10, 2026

- Brussels · London corridor

Archive signal

Reporting lens: Follow the buying process, not just the bill text. Signature: Policy turns real when someone has to buy the system.

Article details

- Category: Open Source AI

- Last updated: April 11, 2026

- Public sources: 6 linked source notes

Byline

Tracks the institutions, incentives, and market structure that quietly decide which AI systems get deployed and why.