NVIDIA Nemotron Coalition wants to train the open stack

NVIDIA's Nemotron Coalition is an attempt to turn DGX Cloud, post-training, and partner coordination into the shared foundation for frontier open AI.

The clever part of the NVIDIA Nemotron Coalition is not the word open. It is NVIDIA turning itself into the place where open frontier models get trained, tuned, and made usable.

The easy read on the NVIDIA Nemotron Coalition is that NVIDIA wants to look friendly to open models. That part is true. It is also the least interesting part.

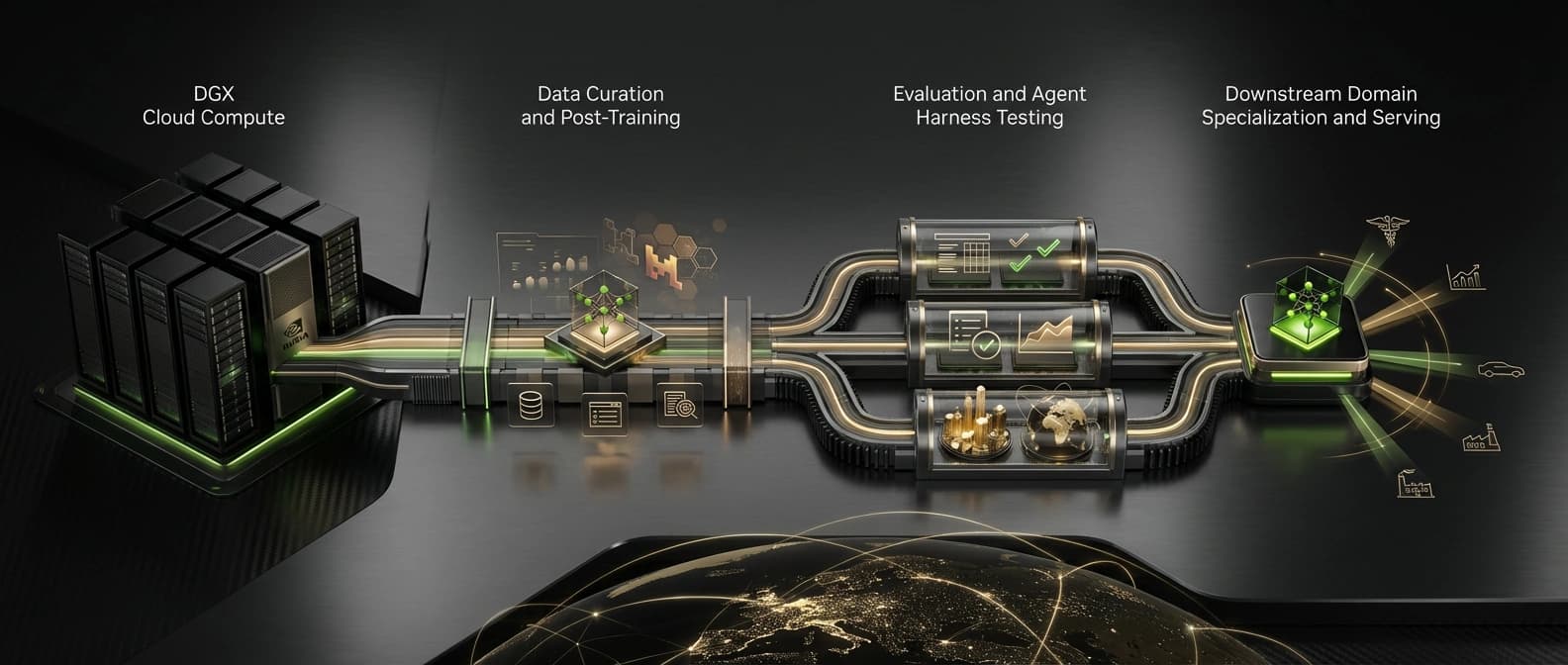

The more useful read is that NVIDIA is trying to become the shared training ground for frontier open models. Not just the chip vendor. Not just the host. The coalition puts NVIDIA in the middle of training, post-training, evaluation, and deployment, with Mistral and a small cluster of influential AI companies helping fill in the missing pieces.

This is infrastructure politics in open-source clothing.

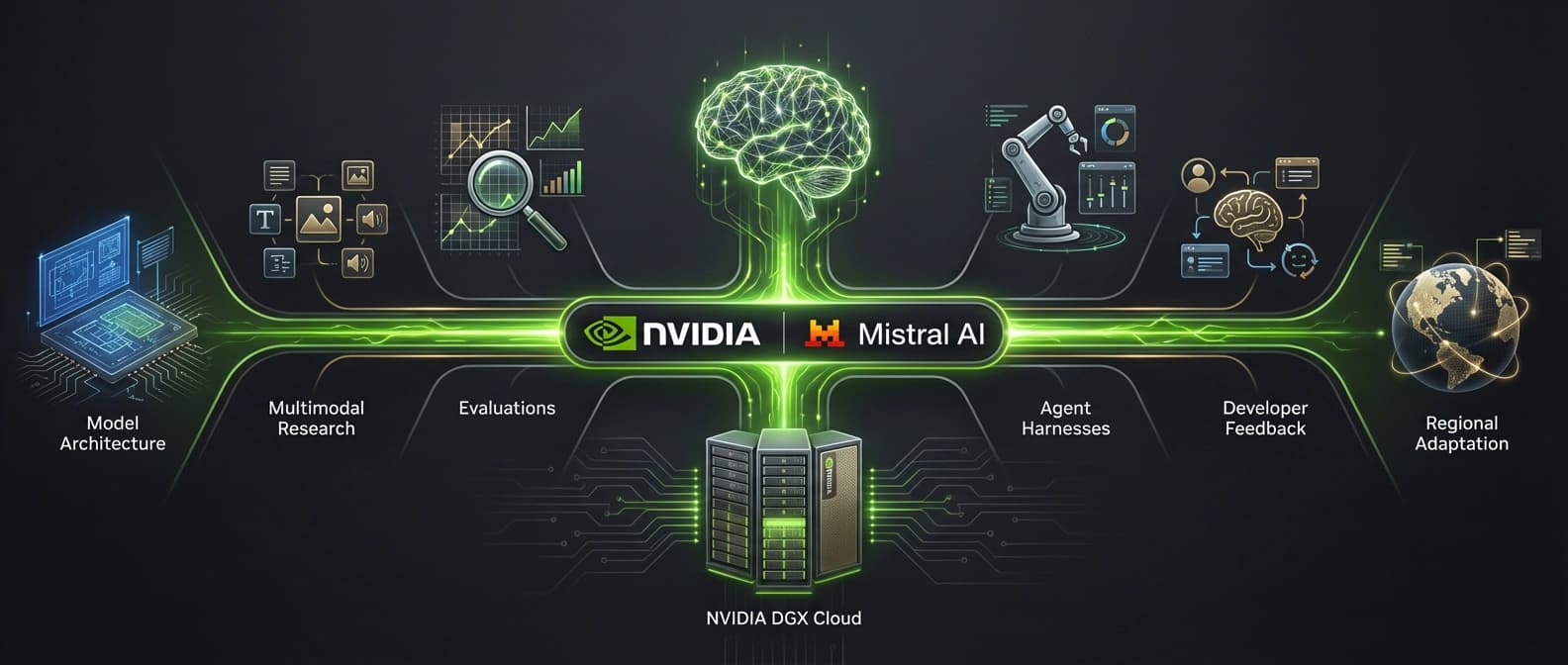

NVIDIA's announcement says the coalition brings together Black Forest Labs, Cursor, LangChain, Mistral AI, Perplexity, Reflection AI, Sarvam, and Thinking Machines Lab to build open frontier models through shared expertise, data, and compute. The first project is even more specific: a base model codeveloped by Mistral AI and NVIDIA, trained on NVIDIA DGX Cloud, with coalition members contributing data, evaluations, and domain expertise. NVIDIA says that model will be shared with the open ecosystem and will underpin the upcoming Nemotron 4 family.

That is not a book club with GPUs. It is a coordinated attempt to decide where open frontier models get made and what stack they grow up on.

Why the NVIDIA Nemotron Coalition matters now

Open models have momentum, but the ecosystem is still messy. Labs can release weights and benchmark charts. Builders still have to solve the boring expensive part: training access, post-training, evaluation, agent wiring, and infrastructure that survives real traffic.

That fragmentation is NVIDIA's opportunity.

If you read the coalition launch next to Jensen Huang's GTC framing in The Future of AI Is Open and Proprietary, the company's position is not subtle. NVIDIA does not think the market resolves into one winning closed lab and a pile of hobby projects. It thinks the future is a mix of open and proprietary models, plus a larger stack of orchestration and specialization around them.

That stack is where NVIDIA already makes itself hard to ignore. We have already seen part of that logic in NVIDIA Dynamo's role above vLLM, where raw inference is only one layer of the system NVIDIA wants to own. The Nemotron Coalition extends the same idea earlier in the pipeline. Instead of waiting for labs to release open models and selling the serving layer afterward, NVIDIA is trying to sit inside the creation process itself.

I keep coming back to that point because it changes how the announcement reads. This is not just NVIDIA blessing openness. It is NVIDIA trying to shape the operating system around open-model development.

What the NVIDIA Nemotron Coalition actually promises

The announcement gives enough detail to matter, but not enough detail to relax.

Here is the cleanest way to separate confirmed facts from the glow around them:

| Coalition claim | What is actually confirmed | What is still missing |
|---|---|---|
| The coalition will advance open frontier models | NVIDIA says members will contribute expertise, data, evaluations, and research toward shared model development | No public governance charter, voting process, or decision structure for the coalition |
| The first project is concrete | NVIDIA and Mistral say the first project is a base model codeveloped by them | No hard release date beyond saying it will underpin the upcoming Nemotron 4 family |
| The resulting model will be open | NVIDIA says the model will be shared with the open ecosystem, and Mistral says the models will be open-sourced | No public license yet, and no confirmed release plan for training data or full training recipes |
| Members help shape development | NVIDIA says partners will contribute data, evaluations, and domain expertise | No evidence that the coalition operates independently of NVIDIA or outside DGX Cloud |
| This helps developers specialize models | Both companies frame the model as a base for post-training and domain adaptation | No proof yet on benchmark quality, enterprise adoption, or how permissive downstream usage will be |

That middle column is strong enough for a real story. The right column is why nobody should talk about the coalition as if it were already a settled institution. Corporate coalitions often end up as ambition wearing a lanyard. NVIDIA has given more detail than that, but the missing pieces still matter.

NVIDIA wants to be the training ground, not just the sponsor

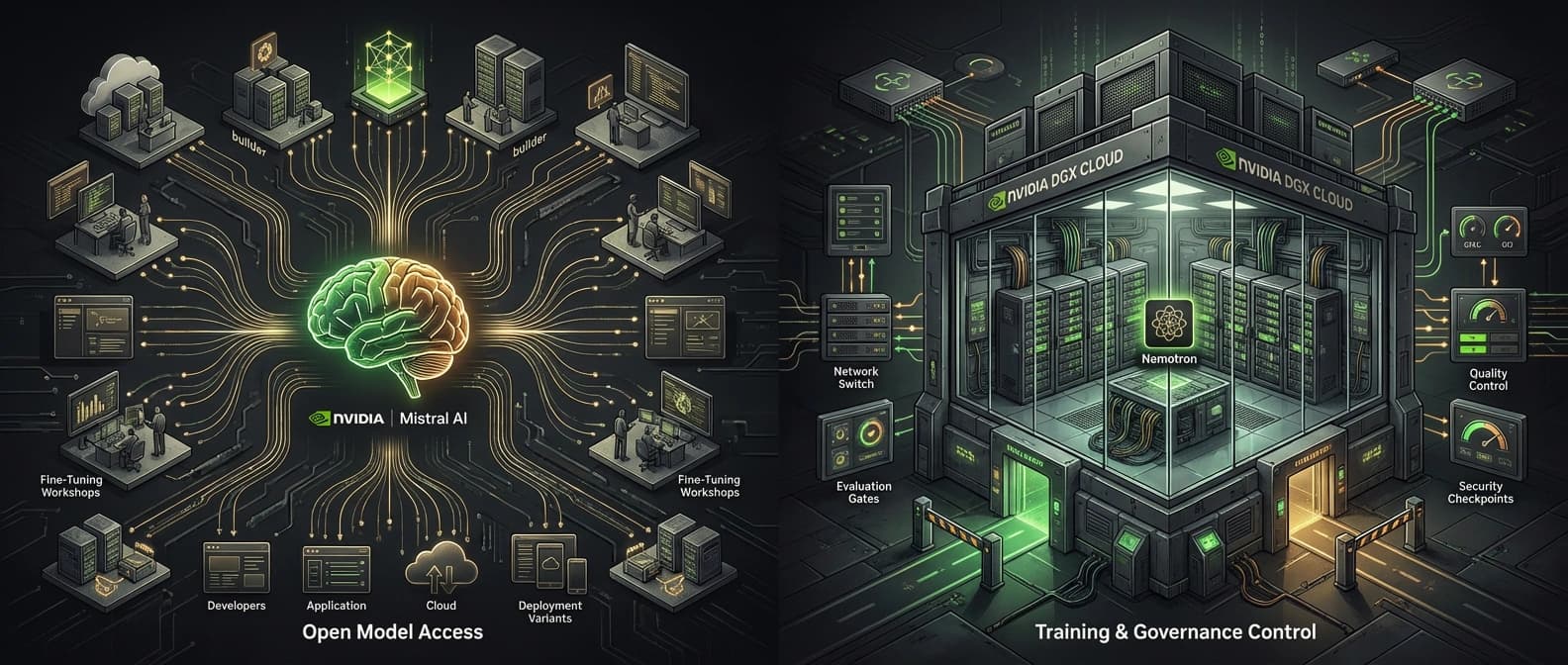

There are a few ways to profit from open models. You can release them. You can host them. You can sell the picks and shovels. Or you can do something more durable: become the place where serious labs train, refine, and operationalize them.

That last option is what makes the NVIDIA Nemotron Coalition strategically sharp.

Mistral's post is especially clear here. It says NVIDIA brings compute resources, model-development tools, and synthetic-data generation pipelines, while Mistral brings model architecture, multimodal capabilities, and fine-tuning know-how. NVIDIA's announcement adds DGX Cloud as the training venue for the first model. Put those together and the coalition starts to look less like a symbolic alliance and more like a managed campus.

That matters because open models do not become influential just by existing. They matter when teams can train them, adapt them, evaluate them, and ship them without rebuilding the whole pipeline from scratch. If NVIDIA becomes the easiest place for that work to happen, it gains leverage even when the resulting weights are open.

Open does not cancel dependency. It just moves the dependency around.

We have already seen this logic in open-weight inference economics. Cheap or available weights are only one piece of the bill. Compute, memory, tooling, latency, and operational complexity decide what actually gets used. The Nemotron Coalition applies the same lesson one step earlier. If the open ecosystem trains on NVIDIA, tunes on NVIDIA, and serves through NVIDIA-adjacent infrastructure, NVIDIA still sits in the money path.

That is the bet.

What “open frontier models” means here, and what it does not

This is the part that needs the most adult supervision.

NVIDIA uses phrases like "open models" and says the first coalition model will be shared with the open ecosystem. Mistral goes a step further and says the models will be "open-sourced." Those are encouraging signals if you want broad downstream access. They are not the same thing as full open governance, full training transparency, or a guarantee that every meaningful artifact will be public.

Right now, we do not have a public license. We do not have a public commitment to release the training corpus. We do not have a public governance model showing that coalition members can outvote NVIDIA, route around DGX Cloud, or steer the roadmap in any independent way.

That does not make the coalition fake. It means people should use the right level of precision.

The most defensible reading today is this: the coalition aims to produce a model that outside developers and organizations can post-train and specialize, with stronger openness than a closed API-only stack, while NVIDIA still anchors the training environment and the surrounding tooling. That is meaningful. It is also a long way from a neutral public commons.

I would be especially careful with the word "independent." Nothing in the source material says the coalition is independent from NVIDIA. In fact, the opposite is clearer. NVIDIA announced it. NVIDIA provides the compute environment. The first base model is codeveloped by NVIDIA and Mistral. Other members contribute, but the center of gravity is not hard to find.

Why Mistral, LangChain, and the rest of the logo wall matter

The member list matters, but not for the usual press-release reason.

A big partner wall often signals prestige first and substance second. Here, the member roles at least line up with real gaps in the open-model pipeline. NVIDIA's announcement does something useful by naming the kinds of contributions expected from different members.

| Member | Stated contribution | Why it matters to NVIDIA's hub strategy |
|---|---|---|
| Mistral AI | Codevelops the first base model with NVIDIA and brings frontier model-building expertise | Gives the coalition a credible model-building center instead of a pure infrastructure story |
| LangChain | Plans to build an agent harness, evaluate capabilities, and provide observability into agent behavior | Makes the models easier to test in real agent workflows, not just benchmark tables |
| Cursor | Brings real-world performance requirements and evaluation datasets | Pushes the work toward developer use cases instead of abstract leaderboard glory |
| Black Forest Labs | Contributes multimodal expertise | Helps the coalition argue that open frontier models should span more than text |
| Perplexity | Brings large-scale product and model-development perspective | Connects model quality to search and user-facing reliability at scale |
| Sarvam | Adds voice-first and language-inclusive experience | Strengthens the sovereignty and regional customization story NVIDIA likes to tell |

Mistral is the critical name because it gives the first model real authorship, not just endorsement. That also fits neatly with our earlier look at Mistral Forge and enterprise model ownership. Mistral has been pushing the idea that customers want more control than closed APIs give them. The coalition lets Mistral push that logic up into frontier training itself, while NVIDIA supplies the giant machine room.

LangChain is the other quietly important name. NVIDIA quotes Harrison Chase saying LangChain will help build the "best agent harness" for these models, along with observability into agent behavior. That sounds niche until you see where the market is heading. If open models want to matter in agent systems, coding tools, and long-horizon workflows, they need better harnesses and better evaluation loops. Raw weights do not tell you how a model behaves inside a messy product. Tooling does. That is the same pressure behind pieces like Qwen3.6-Plus and repo-scale coding agents, where the useful story is rarely just the model.

So yes, the logo wall matters. But mostly because it shows NVIDIA building a loop: training, evaluation, agent testing, product feedback, and downstream specialization in one orbit.

DGX Cloud is the quiet center of the story

If I had to pick the single phrase that matters most in the announcement, it would be this one: trained on NVIDIA DGX Cloud.

That is the sentence that turns the coalition from messaging into strategy.

DGX Cloud is not just rented compute. In this context, it is NVIDIA's way of turning hardware access into workflow gravity. The more open-model development happens inside that environment, the more NVIDIA becomes the default place where frontier open work gets coordinated. That has knock-on effects everywhere else, from model optimization to inference tooling to enterprise comfort with the stack.

It also gives NVIDIA a cleaner answer to a problem that keeps showing up across open AI: labs want openness and distribution, but they still need absurd amounts of compute, better post-training infrastructure, and someone to help absorb operational pain. NVIDIA can offer all of that without pretending to be a neutral charity. The company gets to sell compute and shape the stack. Its partners get training access, tooling, and a faster path to something deployable.

What could slow the NVIDIA Nemotron Coalition down

The biggest short-term risk is the timeline. NVIDIA says the first coalition model will underpin the upcoming Nemotron 4 family, but there is no hard public schedule in the announcement. "Upcoming" is one of those nice soft words companies use when they want credit now and scrutiny later.

The second risk is openness drift. If the coalition ships weights under a restrictive license, keeps too much of the recipe closed, or turns downstream usage into a maze of conditions, the "open frontier" branding will age badly.

The third risk is partner alignment. Every company in this group has its own incentives. Mistral wants distribution and control. LangChain wants the models to work in agent systems. Cursor wants developer-grade quality. Perplexity wants models that hold up under real product pressure. NVIDIA wants all of that, plus the infrastructure spend. Those goals overlap. They do not perfectly match.

Then there is the simplest risk of all: the model might just be fine. Not bad. Not category-defining. Just fine. Open-model history is full of launches that sounded historic for forty-eight hours and then settled into the middle of the shelf.

The practical read on NVIDIA's open-model strategy

The story here is not that NVIDIA suddenly discovered the moral beauty of open models. It is that NVIDIA sees a chance to become the shared venue where open frontier models are trained, tuned, and made useful.

That makes the NVIDIA Nemotron Coalition more interesting than a standard partnership post. The next competition may be less about who ships the best weights and more about who owns the workflow around those weights.

If the coalition produces a strong first model with broad downstream rights, NVIDIA will have done something clever. It will have helped the open ecosystem while also making itself harder to route around. If it stumbles, this turns into another well-lit GTC artifact with a memorable logo wall and a fuzzier legacy.

For now, I think the safest strong claim is this: NVIDIA is not just backing open models. It is trying to become the place where open frontier models get organized.

That is a much bigger ambition.

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Primary announcement for coalition members, DGX Cloud training, the first Mistral and NVIDIA base model, and the claim that the resulting model will underpin the upcoming Nemotron 4 family.

Jensen Huang's broader GTC framing for open and proprietary models, plus NVIDIA's own description of the coalition as part of a larger systems strategy.

Confirms Mistral as a founding member and codeveloper of the coalition's first base model, and uses stronger open-source language than NVIDIA's announcement.

Official product page for existing Nemotron positioning and NVIDIA's broader open-model framing. Use as product context, not as proof of unreleased coalition details.

About the author

Lena Ortiz

Lena tracks the economics and mechanics behind AI systems, from serving architecture and open-weight deployment to developer tooling, platform shifts, product decisions, and the operational tradeoffs that shape what teams actually run. Her reporting is aimed at builders and operators deciding what to trust, adopt, and maintain.

- Apr 10, 2026

- Berlin

Archive signal

Reporting lens: Operating leverage beats ideological posturing. Signature: If the cost curve moves, the product strategy moves with it.

Article details

- Category: Open Source AI

- Last updated: April 11, 2026

- Public sources: 4 linked source notes

Byline

Covers the economics, tooling, and operating realities that shape how AI gets built, shipped, and run.