Together AI fine-tuning becomes a reliability layer

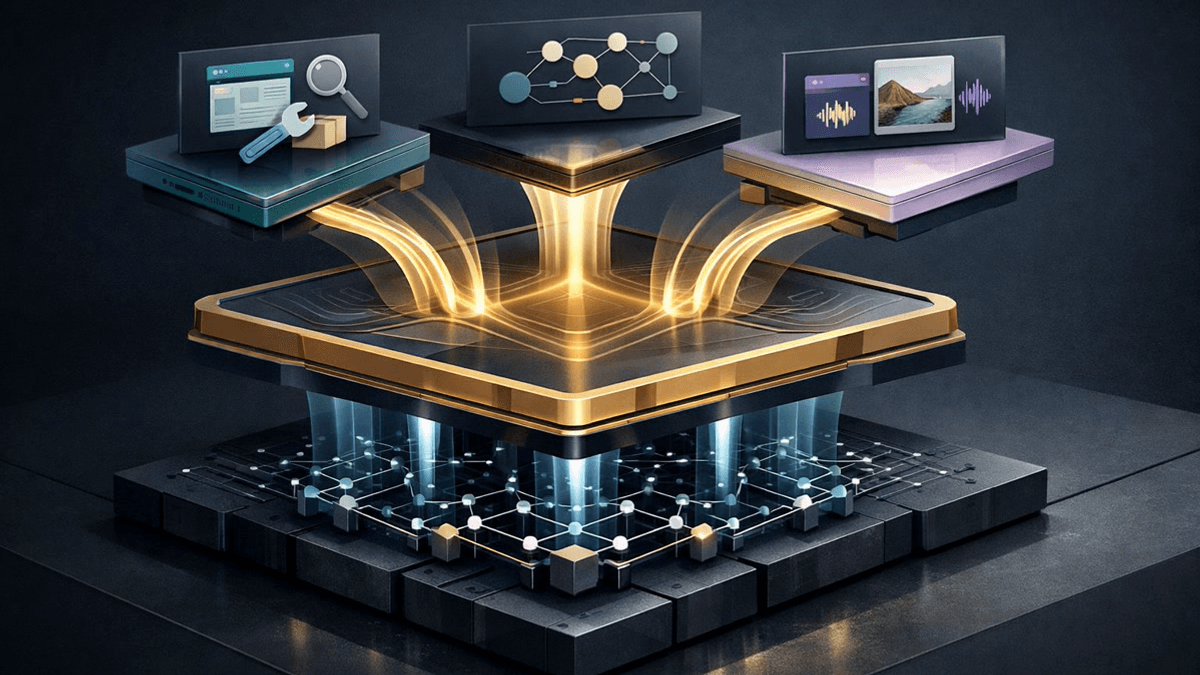

Together AI's fine-tuning expansion matters less as a feature list than as evidence that post-training is becoming the control point for reliable agent products.

The moat is moving from model access to the post-training loop that makes agents behave.

I think Together AI's March 18 fine-tuning update matters less as a feature bundle and more as a reliability pitch wearing a feature bundle as a hat.

The company added tool-calling fine-tuning, reasoning fine-tuning, and vision-language fine-tuning. It also said customers can train on datasets up to 100GB, see clearer cost and ETA estimates, and get up to 6x better throughput on 100B-plus models. That is a busy launch post. It reads like somebody emptied the whole product drawer onto the table and said, "See? We organized it."

What grabbed me is the layer Together chose to organize. Base-model access is getting cheaper, broader, and less magical by the week. The hard part now is making an agent behave like a colleague you would trust with a customer ticket, not like a brilliant intern who keeps mailing the wrong spreadsheet to the wrong person. That is a post-training problem.

Together AI fine-tuning is really a reliability product

I keep seeing the same agent failure pattern: the model itself is often good enough, but the behavior around it is flimsy. The agent picks the wrong tool, mangles the arguments, loses the thread halfway through a task, or misreads a screenshot that mattered more than the surrounding text. It is a bit like hiring a waiter with a perfect memory for menu poetry who still cannot remember which table ordered the soup.

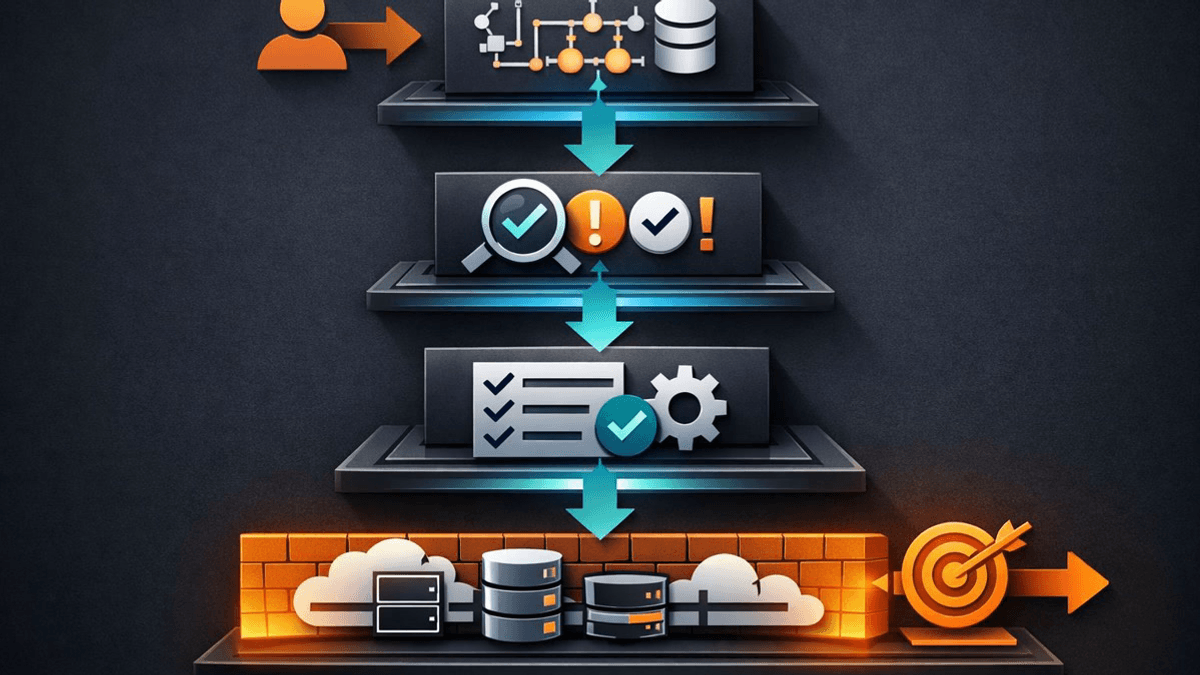

That is why Together's update matters. It is trying to move the control point upward, away from raw model access and toward the post-training loop where tool use, reasoning, and multimodal behavior get shaped into something teams can actually run. That lines up with what I saw in OpenAI's agent stack push and Google AI Studio's full-stack distribution play: the moat is drifting toward the operating layer wrapped around the model, not just the model itself.

Tool calling is where the expensive mistakes start

The most revealing piece is the tool-calling support. Together says developers can fine-tune against an OpenAI-style top-level tools array and assistant tool_calls, and its docs say the platform validates that declared calls match known tools before training starts. That is not glamorous. It is useful.
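To make that validation step concrete, here is a minimal sketch of the kind of check described above, run on a single training example. The field layout follows the widely used OpenAI-style chat format; the function name `undeclared_tool_calls` and the sample refund tool are my own illustrations, not Together's code, and the platform's real validator may well check more than this.

```python
import json


def undeclared_tool_calls(example: dict) -> list[str]:
    """Return names of assistant tool calls that are not declared in `tools`."""
    # Collect every function name declared in the top-level tools array.
    declared = {
        tool["function"]["name"]
        for tool in example.get("tools", [])
        if tool.get("type") == "function"
    }
    problems = []
    for message in example.get("messages", []):
        if message.get("role") != "assistant":
            continue
        # tool_calls may be absent or None on plain-text assistant turns.
        for call in message.get("tool_calls") or []:
            name = call["function"]["name"]
            if name not in declared:
                problems.append(name)
    return problems


# Hypothetical training example: one declared tool, two calls, one undeclared.
example = {
    "tools": [
        {
            "type": "function",
            "function": {
                "name": "issue_refund",
                "parameters": {
                    "type": "object",
                    "properties": {"order_id": {"type": "string"}},
                    "required": ["order_id"],
                },
            },
        }
    ],
    "messages": [
        {"role": "user", "content": "Refund order 1182, please."},
        {
            "role": "assistant",
            "tool_calls": [
                {
                    "id": "call_1",
                    "type": "function",
                    "function": {
                        "name": "issue_refund",
                        "arguments": json.dumps({"order_id": "1182"}),
                    },
                },
                {
                    "id": "call_2",
                    "type": "function",
                    "function": {"name": "send_email", "arguments": "{}"},
                },
            ],
        },
    ],
}

print(undeclared_tool_calls(example))
```

Running a check like this over a dataset before upload is cheap, and it catches the boring failure where the model is being taught to call a tool nobody declared.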

Once a product is allowed to do things instead of merely say things, slightly wrong stops being cute. A slightly wrong paragraph is annoying. A slightly wrong tool invocation can trigger a bad refund flow, a broken workflow, or an action that sends a human team off to clean up a mess. I would not call tool reliability the whole agent market, but it is certainly the part that makes finance people sit up straighter.

This is why I read the launch as a reliability move. Together is not just offering more fine-tuning knobs. It is trying to host the discipline around structured action.

Reasoning and vision tuning push the stack upward

The reasoning and vision pieces make the same argument from two other angles. Together's docs say reasoning models can be trained with explicit reasoning or reasoning_content fields, and they also warn that reasoning models should be tuned with reasoning data if you want to preserve that capability. That warning matters because it quietly admits something the market likes to gloss over: you cannot fix a reasoning workflow with vibes and a CSV.
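A rough way to act on that warning is to scan a dataset for assistant turns that carry no reasoning trace at all. The sketch below assumes the `reasoning` or `reasoning_content` field sits on each assistant message, which is my reading of the format rather than a confirmed schema; the helper name and the sample rows are hypothetical.

```python
# Field names mentioned in Together's docs; their placement on the
# assistant message is an assumption made for this sketch.
REASONING_KEYS = ("reasoning", "reasoning_content")


def assistant_turns_missing_reasoning(example: dict) -> list[int]:
    """Return message indexes of assistant turns with no non-empty reasoning field."""
    missing = []
    for index, message in enumerate(example.get("messages", [])):
        if message.get("role") != "assistant":
            continue
        has_trace = any(
            str(message.get(key) or "").strip() for key in REASONING_KEYS
        )
        if not has_trace:
            missing.append(index)
    return missing


# Hypothetical examples: the first keeps its trace, the second dropped it.
with_trace = {
    "messages": [
        {"role": "user", "content": "Is 91 prime?"},
        {
            "role": "assistant",
            "reasoning": "91 = 7 * 13, so it has divisors other than 1 and itself.",
            "content": "No, 91 is not prime.",
        },
    ]
}
without_trace = {
    "messages": [
        {"role": "user", "content": "Is 97 prime?"},
        {"role": "assistant", "content": "Yes, 97 is prime."},
    ]
}

print(assistant_turns_missing_reasoning(with_trace))
print(assistant_turns_missing_reasoning(without_trace))
```

If most of a dataset lands in the second bucket, that is a hint the fine-tune may teach a reasoning model to skip the part you were paying for.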

The VLM support is just as telling. Together documents hybrid image-text and text-only training, and notes that the vision encoder is frozen by default unless train_vision=true is enabled. In plain English, the company is not selling magic eyes. It is selling a configurable multimodal post-training surface.
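Before deciding whether unfreezing the vision encoder is worth it, it helps to know how much of a hybrid dataset actually contains images. This is a minimal sketch that assumes OpenAI-style content parts, where an image appears as a part with `"type": "image_url"`; that layout, the helper names, and the sample rows are assumptions for illustration, and the sketch does not show how `train_vision` is passed to the platform.

```python
from collections import Counter


def has_image(example: dict) -> bool:
    """True if any message carries an image part (assumed OpenAI-style layout)."""
    for message in example.get("messages", []):
        content = message.get("content")
        # Text-only turns use a plain string; multimodal turns use a list of parts.
        if isinstance(content, list) and any(
            isinstance(part, dict) and part.get("type") == "image_url"
            for part in content
        ):
            return True
    return False


def modality_mix(examples: list[dict]) -> dict[str, int]:
    """Count image-text versus text-only examples in a hybrid dataset."""
    counts = Counter(
        "image_text" if has_image(example) else "text_only" for example in examples
    )
    return {"image_text": counts["image_text"], "text_only": counts["text_only"]}


# Hypothetical two-row dataset: one screenshot question, one plain-text question.
dataset = [
    {
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": "What error does this screenshot show?"},
                    {"type": "image_url", "image_url": {"url": "https://example.com/shot.png"}},
                ],
            },
            {"role": "assistant", "content": "A 502 from the payments gateway."},
        ]
    },
    {
        "messages": [
            {"role": "user", "content": "Summarize the ticket."},
            {"role": "assistant", "content": "Customer was charged twice."},
        ]
    },
]

print(modality_mix(dataset))
```

A dataset that is almost entirely text-only is a reasonable argument for leaving the encoder frozen and spending the budget elsewhere.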

That pushes Together into the same strategic neighborhood as Mistral Forge. If model access keeps commoditizing, providers need a stronger answer to the question, "Fine, but where do I make the thing dependable?" Together wants that answer to live in its post-training loop.

My take on Together's real bet

I do not think this launch proves Together solved agent reliability. The throughput claims are still vendor claims. The dataset ceiling is only useful if the examples are good. Vision tuning still depends on whether your real failure cases are actually represented in the training data. Garbage in remains extremely committed to its profession.

Still, I think the direction is right. Product teams should treat tool calling, reasoning, and vision as separate reliability surfaces, audit where their agents already break, and only then decide whether post-training deserves a bigger role than prompts, evals, or orchestration. Very short version: do not fine-tune because it sounds serious. Fine-tune because you found the exact crack in the wall.
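The audit step does not need to be fancy. As a sketch, assume you already tag each logged agent failure with the surface it belongs to; the record shape, the `surface` field, and the `other` bucket for problems that prompts, evals, or orchestration would handle are all hypothetical choices made for this example.

```python
from collections import Counter

# The three surfaces named above, plus a catch-all for everything else.
SURFACES = ("tool_calling", "reasoning", "vision")


def failures_by_surface(failures: list[dict]) -> dict[str, int]:
    """Tally logged failures per reliability surface, bucketing the rest as 'other'."""
    counts = Counter(
        failure["surface"] if failure.get("surface") in SURFACES else "other"
        for failure in failures
    )
    return {surface: counts[surface] for surface in (*SURFACES, "other")}


# Hypothetical failure log pulled from agent traces.
failure_log = [
    {"ticket": "A-101", "surface": "tool_calling"},
    {"ticket": "A-102", "surface": "tool_calling"},
    {"ticket": "A-103", "surface": "reasoning"},
    {"ticket": "A-104", "surface": "tool_calling"},
    {"ticket": "A-105", "surface": "prompt_wording"},
]

print(failures_by_surface(failure_log))
```

A tally like this will not tell you whether fine-tuning fixes the problem, but it does show which crack in the wall is the widest before anyone spends a training budget on it.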

That is why Together AI fine-tuning matters now. The story is not "more model options." The story is that post-training is starting to look like the layer where agent products either grow up or stay stuck as demos. I have a hard time imagining that layer staying secondary for much longer.

Source file

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

- Official March 18 update announcing tool-calling, reasoning, and VLM fine-tuning, plus training-stack and planning changes.

- Documents the OpenAI-style tool schema, dataset expectations, and supported models for tool-call fine-tuning.

- Shows Together's reasoning-data format and its warning that reasoning models should be trained with reasoning traces.

- Details hybrid image-text training, supported VLMs, and the default behavior of freezing the vision encoder unless train_vision is enabled.

- Useful for showing that Together is stretching the service across a broad set of open and open-adjacent models rather than a single flagship family.

About the author

Talia Reed

Talia reports on product surfaces, developer tools, platform shifts, category shifts, and the distribution choices that determine whether AI features become durable workflows. She looks for the moment where a launch stops being a demo and becomes an ecosystem move.

- Apr 1, 2026

- New York

Archive signal

Reporting lens: Distribution is usually the story hiding inside the launch. Signature: A feature matters when it changes someone else’s roadmap.

Article details

- Category: AI Tools

- Last updated: April 11, 2026

- Lead illustration: The strategic move is not more model access. It is controlling how agent behavior gets tuned into something teams can trust.

- Public sources: 5 linked source notes
