Microsoft gives Foundry its own multimodal stack

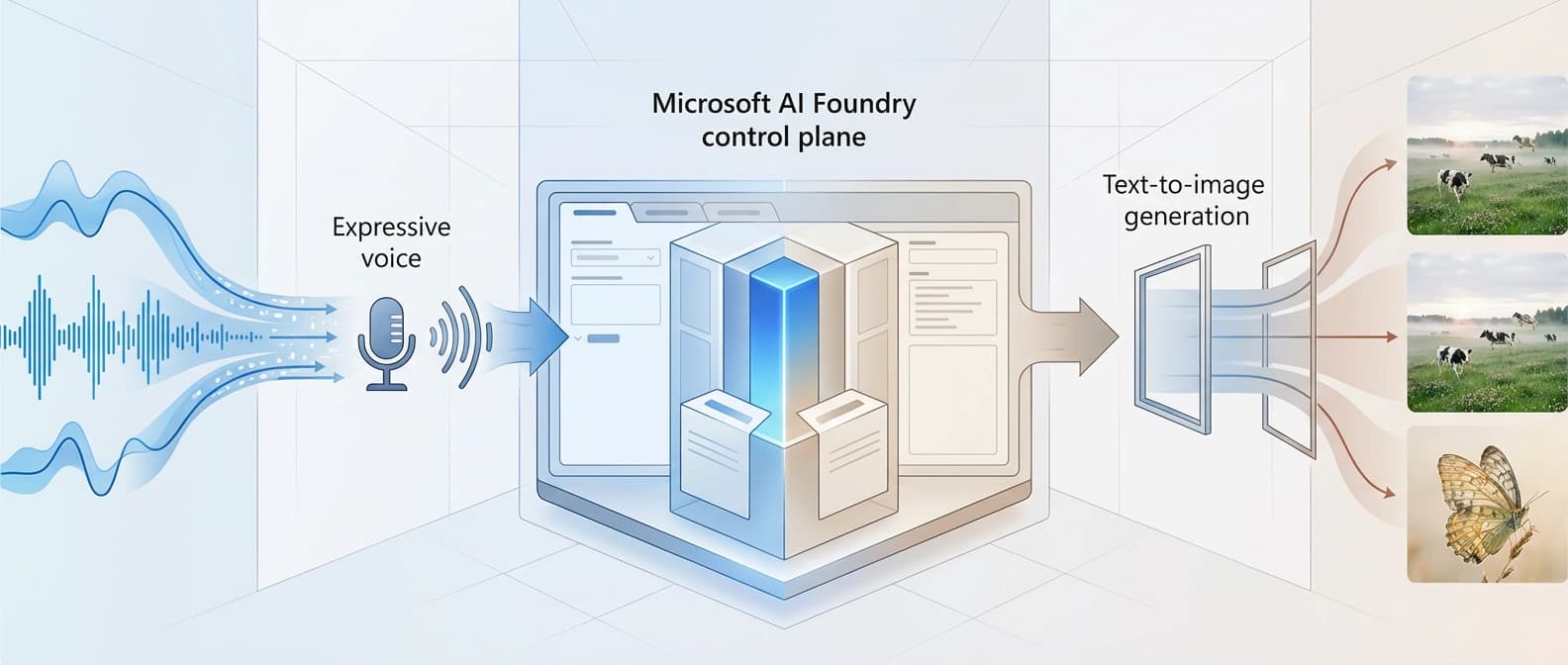

MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2 turn Foundry into more of a Microsoft-owned multimodal stack, not just a shelf for other labs' models.

The story is not that Microsoft launched three models. The story is that Foundry now looks a little less like a model mall and a little more like Microsoft's own kitchen.

Microsoft's April 2 MAI announcement looks, at first glance, like three model launches taped together with optimism. Look closer and the sharper story shows up: Microsoft is turning Foundry into more of a first-party multimodal stack.

That is the meaningful part.

MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2 matter on their own, sure. But the bigger signal is architectural. Foundry has spent a lot of time looking like the fanciest model mall in town: plenty of serious infrastructure, plenty of partner models, plenty of enterprise varnish. What it lacked was more Microsoft-owned substrate in the actual multimodal loop. Now Microsoft is putting its own speech recognition, speech generation, and image generation pieces much closer to the center.

I think that makes this launch more important than the benchmark tables do.

Why MAI-Transcribe-1 is the real signal

If I had to pick one model that explains the whole move, it is MAI-Transcribe-1.

Microsoft says the model supports 25 languages, starts at $0.36 per hour, and delivers approximately 50% lower GPU cost than leading alternatives while staying competitive on accuracy. It also says MAI-Transcribe-1 ranks first on the FLEURS benchmark in 11 core languages and beats Whisper large v3 on the remaining 14. Those are Microsoft's numbers, not holy scripture, but the direction is obvious: the company wants developers to treat transcription as a first-party Microsoft layer, not just a commodity bolt-on.

That matters because speech recognition is where a lot of voice products quietly live or die. A flashy voice demo can survive mediocre prose. It cannot survive bad listening. If Foundry wants to be the place where teams build assistants, copilots, and full agent workflows, owning the ear is a pretty nice place to start.

It also fits the broader Microsoft pattern we just saw in Microsoft Agent Framework ends Microsoft's agent split. The company keeps nudging its developer story away from parallel bets and toward one house narrative. Different layer, same instinct.

MAI-Voice-1 and MAI-Image-2 turn this into a stack

MAI-Voice-1 completes the other half of the audio loop. Microsoft says it can generate 60 seconds of expressive audio in under one second on a single GPU, with pricing starting at $22 per 1 million characters. The Foundry blog also ties it directly to Copilot's Audio Expressions and podcast features, while routing developer access through Azure Speech.

That pairing matters. This is not Microsoft tossing a TTS model over the wall and calling it a platform. It is assembling speech-to-text plus text-to-speech plus language-model orchestration into something developers can actually ship. If our recent Voxtral TTS story showed the appeal of open control in voice tooling, Microsoft's move shows the opposite bet: tighter first-party integration, tighter platform gravity, and a cleaner enterprise buying story.

Then there is MAI-Image-2. The timing matters here, because Microsoft announced it earlier on March 19. On that date, the company said MAI-Image-2 was rolling out to Copilot and Bing Image Creator, with API access available for select customers such as WPP and broader Foundry access coming soon. On April 2, Microsoft folded it into the bigger stack push and published Foundry-facing docs and pricing. That does not make MAI-Image-2 a brand-new April 2 model, and it definitely does not make it a video model. It makes it the image leg of a broader first-party multimodal story.

According to Microsoft, MAI-Image-2 now starts at $5 per 1 million text-input tokens and $33 per 1 million image-output tokens. The Microsoft Learn docs also show it as a preview model for global standard deployment in specific regions, including West Europe and East US. That is not vague platform poetry. That is real product surface area.

Where Microsoft Foundry and Microsoft AI Playground actually fit

The availability language is a little Microsoft-ish, which is my polite way of saying you have to read three pages to get one clean picture.

The combined April 2 announcement says the MAI models are available through Foundry and can also be tried in the MAI Playground, flagged there as US-only. The Foundry blog says MAI-Transcribe-1 and MAI-Voice-1 are available now through Azure Speech, and frames Foundry as the place developers deploy and build with them. The Learn docs get more concrete for MAI-Image-2, spelling out deployment type, API endpoint shape, authentication options, pixel limits, and region availability.

So the cleanest reading is this: Microsoft is spreading one MAI family across several connected surfaces. Foundry is the developer platform story. Azure Speech is part of the production path for the audio models. Microsoft AI Playground is the try-it-now surface. That spread is not a bug. It is the strategy.

We have seen a similar instinct elsewhere in Google AI Studio's full-stack distribution play, where the trick is not just model quality but controlling more of the path from experiment to deployment. Foundry is moving in that direction too.

This is a platform shift, not a declaration of independence from partners

The easy overreaction would be to say Microsoft is replacing everyone else in Foundry. That is not what happened.

Foundry still matters partly because it gives developers access to a wide mix of models and infrastructure options. Microsoft is not throwing the partner catalog into a lake. What it is doing is making sure some of the most important multimodal plumbing now carries a Microsoft label. That gives the company more control over pricing, roadmap timing, product integration, and the margin story underneath all of it.

It also makes Foundry easier to explain. The platform is no longer just where Microsoft hosts the AI industry. It is increasingly where Microsoft hosts the AI industry and inserts more of its own stack into the middle of the workflow. That lines up with the broader Foundry positioning we saw in Microsoft's local and sovereign AI stack push, and it rhymes with the broader industry move we called out in OpenAI's agents platform shift: platform companies want the whole loop, not just a seat in it.

That is why this launch matters. MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2 are useful products. More important, they make Foundry look less like a showroom for everybody else's breakthroughs and more like a Microsoft-built multimodal platform with its own serious internals. For Redmond, that is not a side quest. It is the point.

Source file

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Combined April 2 announcement with pricing, availability framing, and the broad first-party positioning for all three models.

Azure AI Foundry blog post with the strongest Foundry-specific framing, preview language, use cases, and audio-model availability details.

Gives the sharper transcription argument, price-to-performance claims, and product rollout notes around Copilot and Teams.

March 19 announcement that establishes MAI-Image-2's earlier debut and the more selective initial API-access language before the April 2 stack push.

Foundry documentation with MAI-Image-2 deployment mechanics, region list, API shape, and preview status.

About the author

Idris Vale

Idris writes about the institutional machinery around AI, but the lens is broader than policy alone: procurement frameworks, public-sector buying rules, platform leverage, compliance burdens, workflow risk, and the market structure hiding beneath product or infrastructure headlines. The through-line is practical power, not abstract theater.

- 23

- Apr 10, 2026

- Brussels · London corridor

Archive signal

Reporting lens: Follow the buying process, not just the bill text.. Signature: Policy turns real when someone has to buy the system.

Article details

- Category

- AI Tools

- Last updated

- April 11, 2026

- Public sources

- 5 linked source notes

Byline

Tracks the institutions, incentives, and market structure that quietly decide which AI systems get deployed and why.