Meta Muse Spark is really a distribution launch

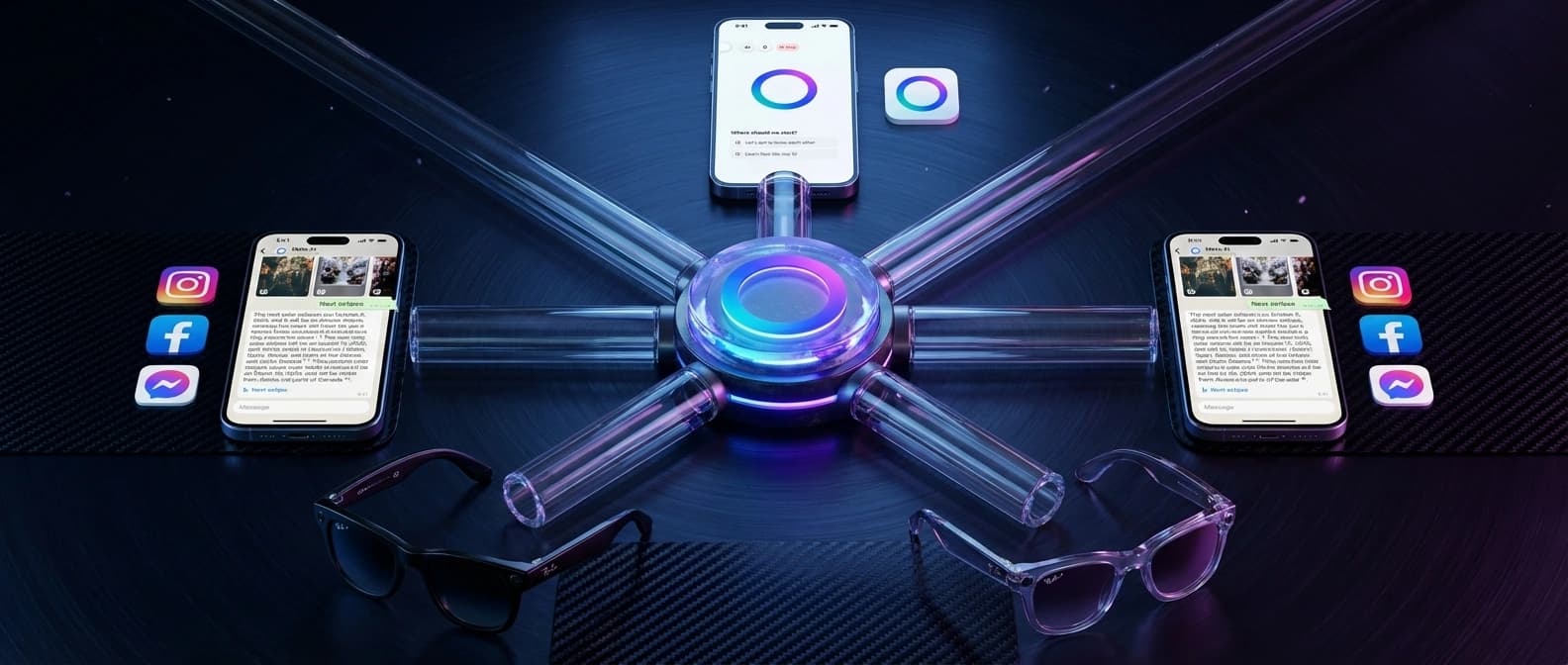

Meta is using Muse Spark to put a proprietary reasoning model in Meta AI now, then roll it into WhatsApp, Instagram, Facebook, Messenger, AI glasses, and an API preview.

Muse Spark matters less as a benchmark event than as a sign that Meta wants its next AI move to ride its own consumer surfaces first.

Meta wants you to look at Muse Spark and see a model launch. There is a benchmark story. There is a scaling story. There is a fresh lab label, Meta Superintelligence Labs, which sounds like the sort of name a company gives itself when it would like you to stop asking how the last cycle went.

But Muse Spark is more interesting as a distribution story.

The key fact is not that Meta announced a new reasoning model on April 8. The key fact is that Meta is putting that model into the Meta AI app and meta.ai now, then extending it to WhatsApp, Instagram, Facebook, Messenger, and AI glasses in the coming weeks. At the same time, Meta says it is opening a private API preview for select partners. That is not just a model release. It is Meta trying to turn one proprietary assistant brain into a cross-surface product system.

That shift matters because Meta spent years treating open-weight credibility as a major part of its AI identity. Llama helped Meta look generous, ambitious, and very online in the way only AI launch discourse can. Muse Spark points somewhere else. This time the company is keeping the flagship assistant model hosted, tightly integrated with its own surfaces, and paired with product behavior that sounds a lot like a consumer-friendly agent harness.

In other words, this is a model launch wearing product-distribution clothing. Or maybe the other way around.

Meta Muse Spark is live in Meta AI now, but the full rollout is staggered

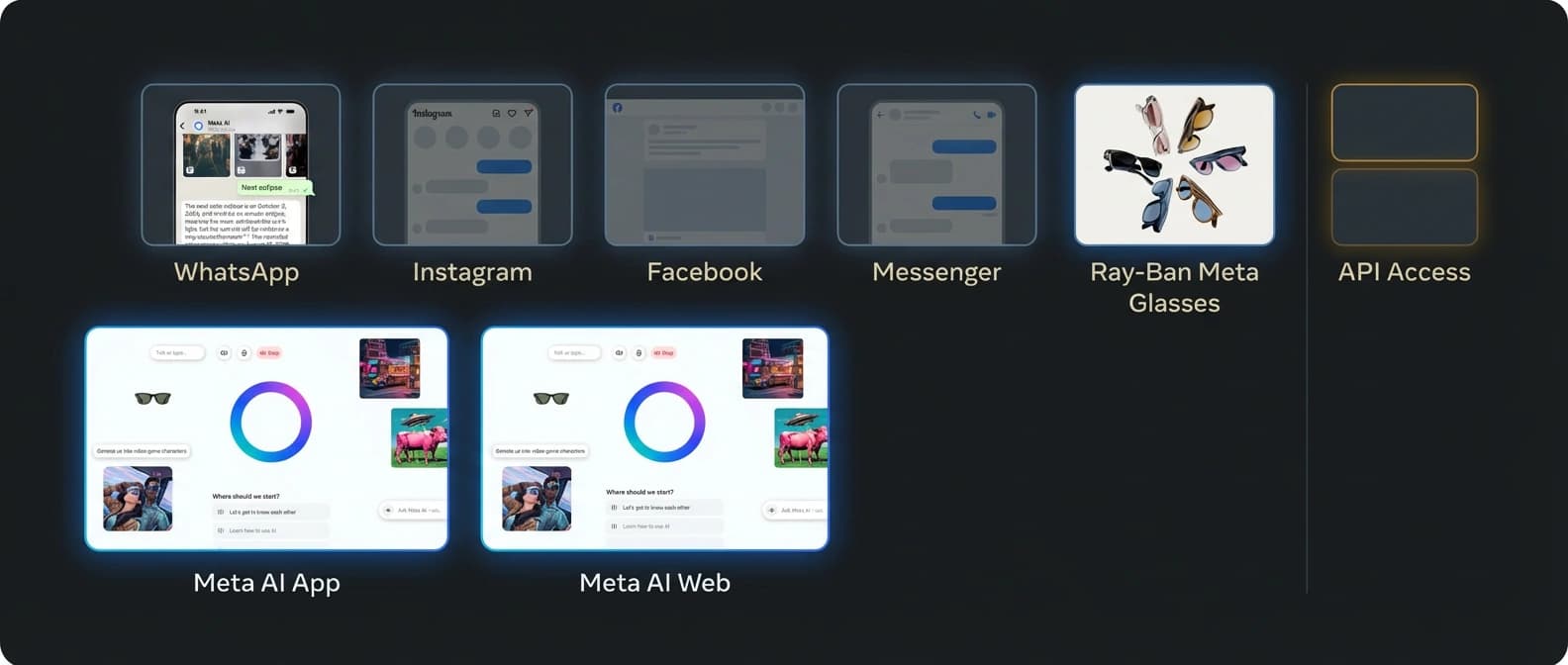

Meta's own launch post is quite clear about the immediate state of play. Muse Spark now powers Meta AI in the Meta AI app and on meta.ai. The broader rollout is not simultaneous. Meta says new modes and capabilities are starting in the US on those two surfaces first, then coming to more countries and to the other places people already use Meta AI.

That distinction matters because the launch copy is easy to misread on a quick skim. Readers want to know what exists today, what is promised, and what is still parked behind careful corporate phrasing. Here is the practical version.

| Surface or capability | Status on April 8 | What Meta says is changing |
|---|---|---|
| Meta AI app | Live now | Muse Spark powers the assistant, with Instant and Thinking modes available where Meta AI already operates |
| meta.ai website | Live now | Same upgraded assistant experience, with the broader visual and reasoning upgrade starting here first |
| Contemplating mode | Limited rollout | Meta says this parallel-agent reasoning mode will roll out gradually |
| WhatsApp, Instagram, Facebook, Messenger | Coming in weeks | Meta says the new modes and capabilities will expand into these surfaces |
| AI glasses | Coming in weeks | Meta says Muse Spark's perception abilities will become more useful when the assistant reaches its glasses |
| API access | Private preview only | Meta says select partners can access Muse Spark via API private preview |
| Open-source release | Not now | Meta says it hopes to open-source future versions |

That is a far more consequential chart than any benchmark slide, mostly because people can actually act on it.

A benchmark chart tells you who had the nicer afternoon in the lab. A rollout chart tells you who intends to be in front of users first.

Meta is shifting from open-weight bragging rights to proprietary distribution

This is the strategic change hiding in plain sight.

Muse Spark is not being introduced as the next open-weight banner for the internet to benchmark, fine-tune, quantize, and argue about until everyone forgets why they were arguing. It is being introduced as a proprietary model that powers Meta's own assistant surfaces and enters the API market through a private preview.

Meta does say it hopes to open-source future versions. That language is important, and also wonderfully non-committal. "We hope to" is not the same sentence as "we are releasing." It is best read as a signal that Meta does not want to abandon its open-model reputation entirely, even while its most important new assistant move is closed.

That makes Muse Spark a meaningful repositioning. Meta is effectively saying that open models may still matter, but the near-term battle for consumer assistant relevance will be fought through distribution it already controls. It has an app. It has messaging surfaces. It has social feeds. It has glasses. It has identity, graph data, and product real estate that rivals would need years and a small diplomatic incident to recreate.

This is also why Muse Spark feels closer to the logic behind platform consolidation than to the older Llama-era story. Meta is not merely asking developers to admire the model. It is asking users to encounter the model inside products they already open every day.

That puts it in a different lane from Google's Gemini 3 stack story, where the emphasis is on routing developers across a family of tools and surfaces, or OpenAI's GPT-5.2 and Codex cloud-coding push, where the core power still concentrates around a paid, hosted work surface. Meta's twist is simpler and more aggressive: it already owns the consumer doorways.

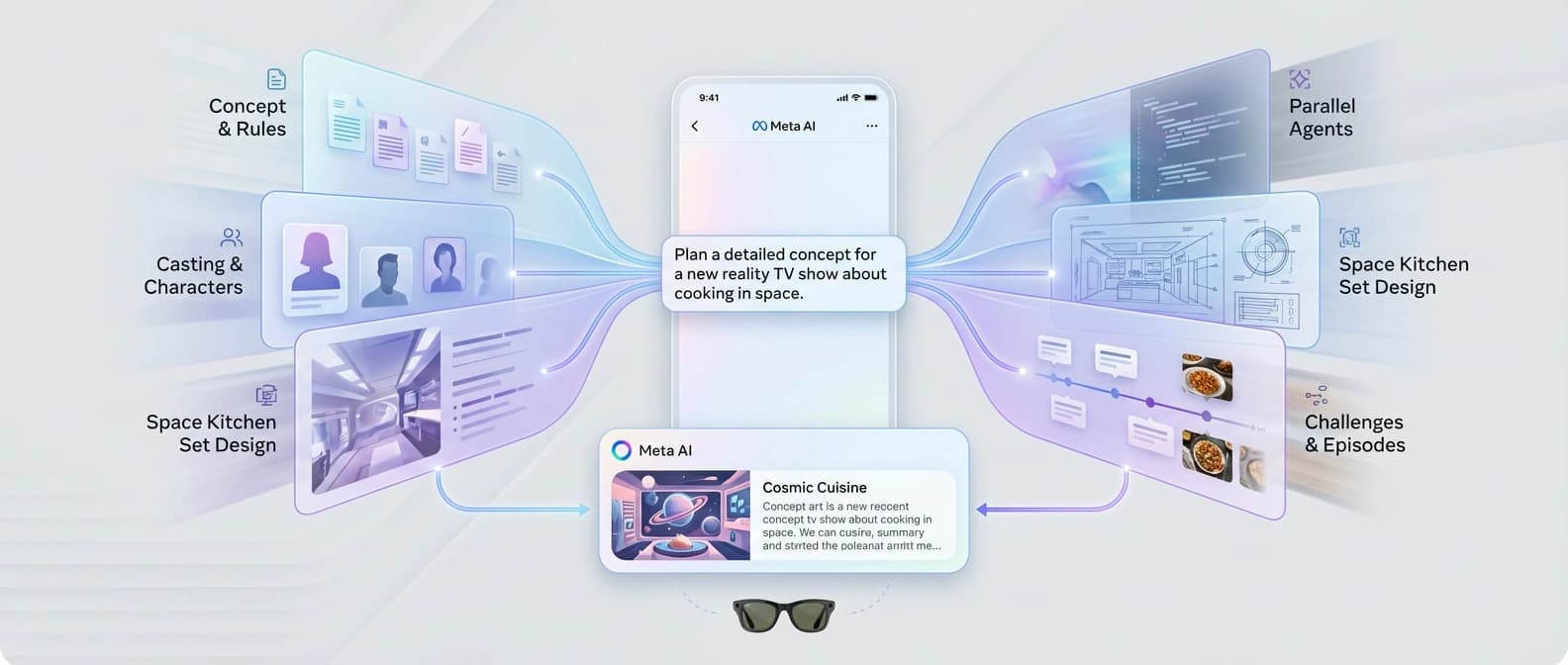

Parallel agents are the product trick, not just a research footnote

Meta's technical blog describes Muse Spark as a natively multimodal reasoning model with tool use, visual chain of thought, and multi-agent orchestration. The feature with the most product significance is not the one with the flashiest chart. It is the claim that Meta AI can launch multiple subagents in parallel and that the coming Contemplating mode orchestrates several agents reasoning at once.

Meta's public example is delightfully suburban: planning a family trip to Florida while separate agents split itinerary work, compare destinations, and collect kid-friendly activities. It is a polished example, but the idea underneath is bigger. Meta is trying to make agentic decomposition feel like a normal assistant behavior rather than a premium lab trick hidden behind a special button and a larger invoice.

The technical blog goes further, saying Contemplating mode uses multiple agents that reason in parallel so Muse Spark can spend more test-time compute without drastically increasing latency. That is worth paying attention to. The company is not only saying "our model thinks harder." It is saying "our product can divide work." Those are different claims.
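Why would parallel agents let a model spend more compute without a matching jump in latency? A toy model makes the arithmetic visible. This is a minimal Python sketch under loudly stated assumptions, not anything Meta has published about how Contemplating mode works: the subtask names borrow the Florida trip example, every timing is hypothetical, and the only orchestration cost modeled is a single assumed "merge" step.

```python
from dataclasses import dataclass


@dataclass
class SubagentRun:
    name: str
    seconds: float  # hypothetical wall-clock time this subagent spends reasoning


def total_compute(runs: list[SubagentRun]) -> float:
    # Compute spent is the same either way: every subagent still does its work.
    return sum(r.seconds for r in runs)


def sequential_latency(runs: list[SubagentRun]) -> float:
    # One agent doing every subtask in turn: the user waits for the sum.
    return sum(r.seconds for r in runs)


def parallel_latency(runs: list[SubagentRun], merge_seconds: float = 0.0) -> float:
    # Subagents run concurrently: the user waits for the slowest one,
    # plus a hypothetical step that merges their answers.
    return max(r.seconds for r in runs) + merge_seconds


# Hypothetical timings, loosely based on the family-trip example.
runs = [
    SubagentRun("itinerary", 8.0),
    SubagentRun("compare_destinations", 10.0),
    SubagentRun("kid_activities", 6.0),
]

print(total_compute(runs))                         # 24.0
print(sequential_latency(runs))                    # 24.0
print(parallel_latency(runs, merge_seconds=2.0))   # 12.0
```

The gap between the last two numbers is the point: the same 24 seconds of hypothetical compute reaches the user in roughly half the time when the subtasks overlap. Real orchestration would add coordination and hardware costs this sketch deliberately leaves out.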

And yes, the benchmarks arrive right on schedule, because no major AI launch is considered legally binding until several bar charts are wheeled onto the stage. Meta says Muse Spark offers competitive performance in multimodal perception, reasoning, health, and agentic tasks, and says Contemplating mode reaches 58% on Humanity's Last Exam and 38% on FrontierScience Research. Those are notable vendor-reported numbers. They are not a universal league table. They are evidence that Meta wants to be placed back in the frontier conversation, not proof that the market will instantly rearrange itself in gratitude.

What gives the agent claim more weight is that outside observers saw hints of the same architecture on the product side. Simon Willison's early testing of the Meta AI interface found a tool-rich harness with browser functions, Python execution, artifact-style outputs, and a subagents.spawn_agent tool exposed through the chat system. That does not validate every Meta claim, but it does suggest the company is shipping an assistant experience that is actually built around tools and delegation, not just borrowing the language because everyone else is doing it.

Muse Spark's health and multimodal pitch is ambitious, and it comes with familiar caveats

Meta is also pitching Muse Spark as a multimodal assistant that can understand images, answer visual questions, and perform especially well on health-related responses. In the technical post, Meta says it worked with more than 1,000 physicians to curate training data for better health reasoning. In the public launch post, the company says Muse Spark can answer common health questions in more detail, including questions involving images and charts.

That is a serious claim and it deserves to be read with two thoughts in mind at once.

The first is that it could be genuinely useful. Better visual understanding in a mainstream assistant is not trivial, especially once it reaches devices like AI glasses. You can see the adjacent logic in other product moves, including Google's push toward on-device and edge-aware AI experiences in areas like offline dictation and local assistance. Perception plus context is a real product direction.

The second is that health is where launch copy tends to get very inspirational right before everyone remembers that privacy, liability, and failure modes exist. TechCrunch was right to point out the tension here. Meta is asking users to trust a more personal assistant while tying access to existing Meta accounts and expanding the system across services where identity and social context already matter.

So yes, Muse Spark's health and perception story could make Meta AI more useful. It also raises the classic question every major assistant launch eventually rediscovers: how personal is too personal when the platform already knows a great deal about you?

The private API preview matters because Meta wants more than app engagement

It would be easy to treat the API note as a side dish. It is not.

Meta says Muse Spark will be available in private preview via API to select partners. CNBC adds that Meta plans eventual paid API access for a wider audience later on. Translate that from corporate into plain English and the message is straightforward: Meta does not only want Muse Spark to increase engagement inside its own apps. It wants the option to sell the model as infrastructure too.

That matters for two reasons.

First, it gives Meta a second path to relevance. If consumer assistant usage grows, good. If developers or partners want the same model behind outside products, even better. A successful API lane would make Muse Spark part app strategy and part platform revenue strategy.

Second, it lets Meta test the market without surrendering control. "Private preview to select partners" is one of those phrases that sounds modest but is actually a power move. It means Meta gets feedback, distribution experiments, and potential commercial upside while keeping the tap mostly closed. This is not a public developer free-for-all. It is a curated opening.

That is a notable contrast with the more openly developer-centered positioning you see from Google and OpenAI, or with the premium enterprise intrigue surrounding Anthropic's higher-end agentic pushes like Project Glasswing. Meta is starting from consumer surfaces, then extending outward. The others often start from developer or enterprise demand, then work inward toward broader habit formation.

How Muse Spark changes Meta's position against OpenAI, Anthropic, and Google

Muse Spark does not instantly put Meta in the lead. It does, however, make Meta more strategically legible.

Against OpenAI, the difference is distribution. OpenAI has stronger mindshare around the standalone assistant and premium reasoning tiers. Meta has social, messaging, and wearable surfaces that already sit inside ordinary routines.

Against Google, the difference is product terrain. Google can spread Gemini through search, Android, Workspace, developer tools, and cloud. Meta's advantage is narrower but more intimate: private messaging, social browsing, creator ecosystems, and glasses.

Against Anthropic, the difference is market starting point. Anthropic still feels strongest where careful, higher-trust, higher-value work happens. Meta is aiming at everyday assistant behavior at mass consumer scale.

This is why the closed-versus-open shift matters so much. Meta appears to have concluded that winning the next phase of assistant adoption is less about ideological purity and more about placing one capable model inside enough high-frequency surfaces that people stop thinking about the underlying model at all.

That is a very Meta move. The company has never needed the most romantic narrative. It needs reach, repetition, and the ability to make a feature feel ambient.

The part of the Muse Spark launch worth remembering

The most important sentence in the whole launch is probably not a benchmark number. It is the simple fact that Muse Spark powers Meta AI now and is heading toward the rest of Meta's consumer stack next.

That tells you how Meta wants this cycle to work.

First, rebuild the stack and re-enter the frontier conversation with a model that is, in Meta's words, small and fast by design. Second, package that model as an assistant with Instant, Thinking, and eventually Contemplating modes so the capability ladder maps onto understandable product behavior. Third, spread it through apps and devices that already have users. Fourth, open a private API lane once the company is ready to monetize or selectively externalize the same engine.

If that works, Muse Spark will matter less because people memorize its benchmark placement and more because Meta AI starts showing up everywhere Meta already has leverage. The real test is not whether one chart flatters the launch. The real test is whether users get used to asking Meta's assistant for harder tasks across the products they already inhabit.

That is why this announcement deserves more attention than a standard model launch and less reverence than benchmark discourse will inevitably give it. Meta did not just release a model. It picked a go-to-market shape.

And for once, the shape is the story.

Source file

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Canonical source for the public rollout framing, the claim that Muse Spark is the first Muse model from Meta Superintelligence Labs, the 'small and fast by design' line, current availability in the Meta AI app and meta.ai, the coming rollout to Instagram, Facebook, Messenger, WhatsApp, and AI glasses, plus the private API preview and future open-source hope.

Primary technical source for multimodal reasoning, tool use, visual chain of thought, multi-agent orchestration, Contemplating mode, parallel-agent reasoning, benchmark framing, health-response positioning, and efficiency claims relative to Llama 4 Maverick.

Useful connective reporting on Meta's strategy shift, the planned rollout path across consumer products, the private API preview, and how Meta is framing Muse Spark against rivals.

Useful for external confirmation that Muse Spark is live on web and app now, that Contemplating mode is still coming, and that the health angle raises obvious privacy questions.

Best independent early look at the hosted product surface, the Instant versus Thinking modes, the private API preview language, and the presence of tool and subagent behavior inside the Meta AI harness.

About the author

Maya Halberg

Maya writes across the AI field, from research claims and benchmark narratives to tools, products, institutional decisions, and market shifts. Her reporting stays focused on what changes once hype meets deployment, procurement, workflow reality, and human skepticism.

- Apr 11, 2026

- Stockholm · Remote

Archive signal

Reporting lens: Methodology over launch theater. Signature: A result only matters after the setup becomes legible.

Article details

- Category: AI Products
- Last updated: April 11, 2026
- Public sources: 5 linked source notes

Byline

Writes across the AI field with an eye for what survives contact with real users, real budgets, and real operating constraints.