Anthropic Project Glasswing and Claude Mythos Preview

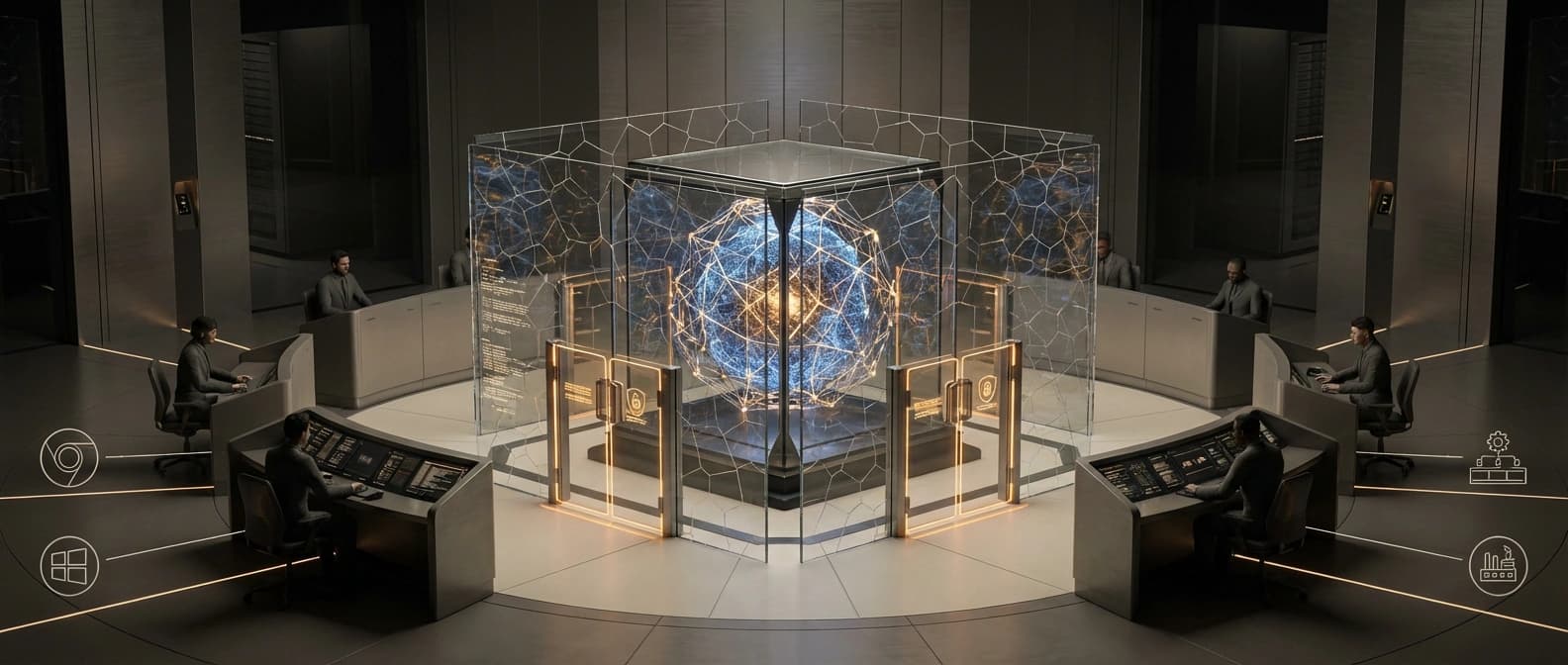

Anthropic is keeping Claude Mythos Preview inside Project Glasswing, giving a defender coalition first access to a cyber-capable model instead of a normal public release.

Project Glasswing matters because Anthropic is treating a top-tier cyber model like coalition infrastructure, not a cheerful new dropdown option.

Anthropic shipped two linked documents on April 7, and together they tell a much more interesting story than either one does alone. The first is Project Glasswing, a new security initiative with real funding, named partners, and a restricted opening cohort. The second is Claude Mythos Preview, the unreleased model Anthropic says pushed it into action.

Read side by side, this is not really a normal model launch. It is a distribution decision.

Anthropic says it does not plan to make Mythos Preview generally available. Instead, it is putting the model behind Project Glasswing and limiting early access to critical industry partners and open-source defenders. That is the part that matters. Frontier labs have spent the last few years training users to expect another dropdown, another API tier, another benchmark chart, and maybe a rate-limit apology by Friday. Glasswing is different. It treats a cyber-capable model as something to gate, coordinate, and hand to a coalition first.

I keep coming back to that distinction because it says a lot about where the frontier is moving. Once a model starts looking genuinely useful for high-end vulnerability discovery and exploit work, the access policy stops being a footnote. It becomes the product.

Project Glasswing looks like a coalition, not a launch

Anthropic says Project Glasswing begins with $100 million over five years, supports 25 organizations in its opening cohort, and aims to add 125 more organizations within 90 days. The named partner list is not subtle: Amazon Web Services, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks all appear in the launch material, alongside security institutions and researchers.

That lineup gives the game away. This is not framed like a public software release. It is framed like a defensive coalition around critical software.

Anthropic says the goal is to help important software projects improve security against AI-powered attacks, improve how vulnerabilities are found and fixed, and back the tools, processes, expertise, and recommendations needed for that work. That already sounds closer to a cyber-defense program than a tidy model-announcement blog post. The company also says this structure is meant to help defenders strengthen key systems before comparable capabilities are broadly available elsewhere.

That is an unusually direct statement. It implies Anthropic thinks the transition period matters, the capabilities are meaningfully dual-use, and first access should go to actors it considers defensive and strategically important.

A quick map of the package helps:

| Layer | Access posture | Anthropic's stated job | Why it matters |
|---|---|---|---|
| Project Glasswing | Restricted opening cohort, then selective expansion | Coordinate defenders around critical software hardening | The governance layer is part of the announcement, not an afterthought |
| Claude Mythos Preview | Not planned for general availability | Advanced vulnerability discovery, exploit work, and defensive preparation | The strongest cyber-capable model stays behind a gate |
| Public evidence so far | Mostly Anthropic-authored posts, plus same-day pickup | Explain urgency and establish the frame | The strongest evidence is still company-reported, so caution stays necessary |

Anthropic is drawing a line around cyber-capable models

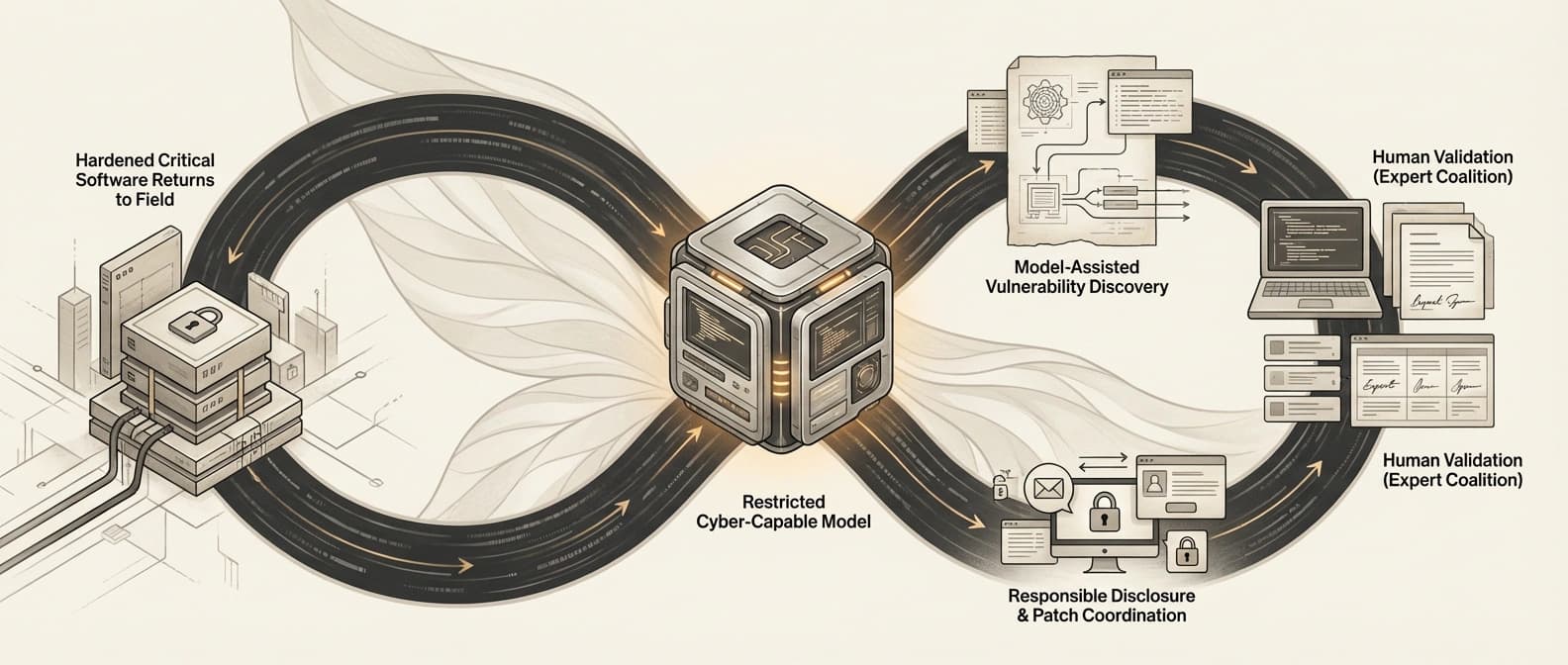

The Mythos write-up makes Anthropic's logic even plainer. The company says it is releasing the model first to a limited group of critical industry partners and open-source developers through Glasswing so defenders can secure important systems before similar models become broadly available. The Glasswing announcement separately says Anthropic does not plan to make Mythos Preview generally available.

That is not a cosmetic decision. It is a line around a class of capability.

There has been a wider drift in this direction for months. We have already seen companies inch toward heavier control surfaces in stories like AWS's frontier-agents push for security testing and cloud operations and OpenAI's safety bug bounty expansion around agentic risk.

The broader move toward open-source security funding and AI supply-chain defense points in the same direction.

We have also seen the operational side of the argument in pieces like NVIDIA OpenShell's security-control-plane design and the AI agent sandbox shift.

Glasswing pushes that logic one step further. It says, in effect: if a model is strong enough to materially change bug hunting and exploit development, then shipping it is not just a model-card question. It is an access-control question, a partner-selection question, and maybe a geopolitical question too.

To put it less politely, Mythos Preview is not being introduced like a cheerful new option beside Sonnet. It is being introduced like restricted infrastructure.

What Anthropic says Claude Mythos Preview can do

Here is the part that will get the headlines, and it deserves careful reading.

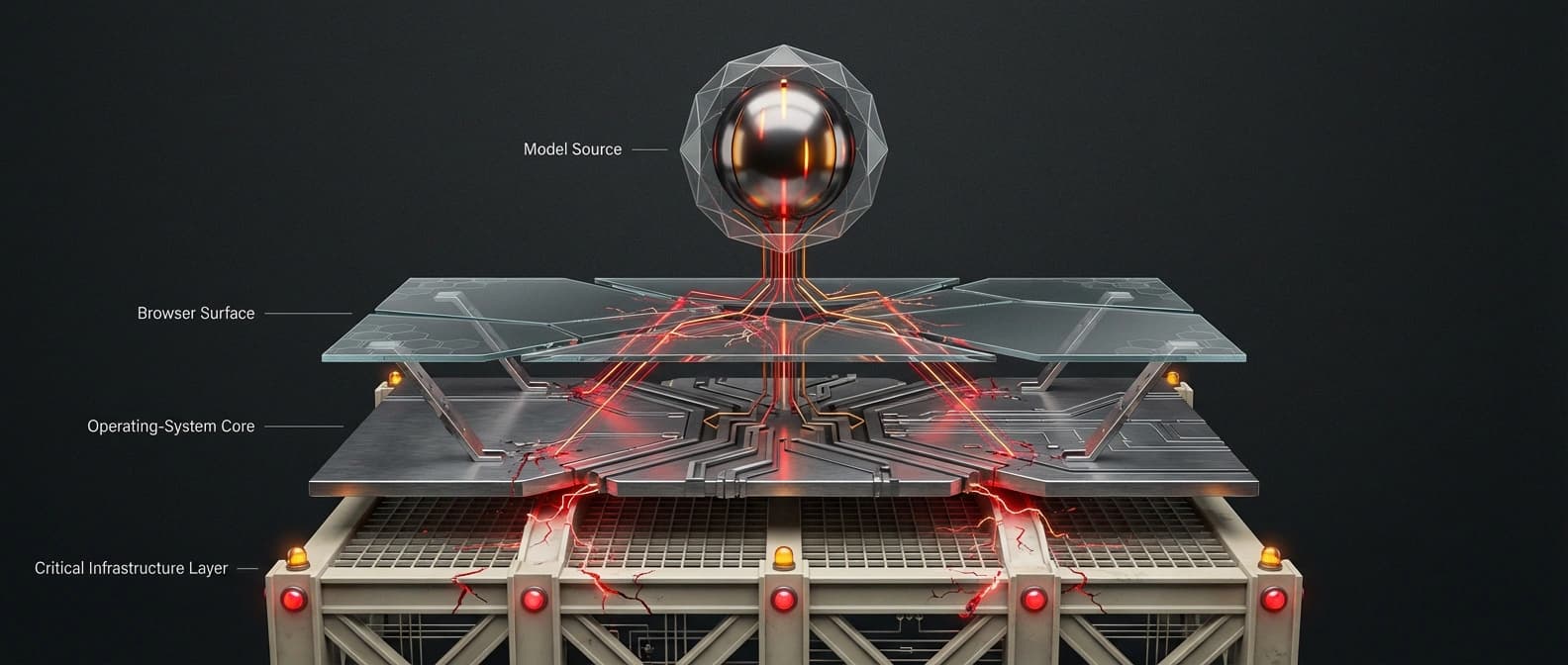

According to Anthropic, Mythos Preview can identify and exploit zero-day vulnerabilities in every major operating system and every major web browser when directed by a user to do so. The company says Anthropic engineers without formal security training used Mythos Preview to look for remote-code-execution bugs overnight and woke up to working exploits. It says the model wrote exploit chains across Linux, browser sandboxes, and other operating-system targets, and that it autonomously found and exploited a 17-year-old FreeBSD remote-code-execution vulnerability that became CVE-2026-4747.

Anthropic also offers benchmark-style evidence. In one Firefox exploitation comparison, it says Opus 4.6 produced working exploits twice across several hundred attempts, while Mythos Preview produced 181 working exploits and achieved register control 29 additional times. In Anthropic's internal OSS-Fuzz-style testing, the company says Mythos Preview reached full control-flow hijack on ten fully patched targets. In another case, Anthropic says a full exploit pipeline took under a day at a cost below $2,000.

Those are startling claims. If they hold up, Mythos is not just better autocomplete for security engineers. It is a system that compresses time, labor, and expertise in a part of cybersecurity where those things usually matter a lot.

But Anthropic also says two other things that are easy to miss when the scarier numbers start flying.

First, Mythos is not publicly available, so outside researchers cannot independently benchmark it yet.

Second, Anthropic does not claim the model universally beats top human experts across every category. In the technical post, the company says Mythos outperforms many existing benchmarks and some expert red-teamers in specific tasks, while also noting it does not believe the model beats the best human expert red-teamers in every area. That is a much more credible claim, and it is the one worth repeating.

The safest way to read the vulnerability claims

Anthropic's own write-up is unusually blunt about its evidence limits. It says over 99% of the vulnerabilities it has found are not yet patched, which is why the company withholds details on most of them. It also says the public examples are a lower bound on what it believes will be disclosed over the coming months. To create some accountability around those hidden findings, Anthropic says it is publishing SHA-3 commitments that it plans to replace with underlying materials after responsible disclosure windows expire.

That is better than pure hand-waving, but it is still not independent replication.
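For readers unfamiliar with hash commitments, here is a minimal sketch of the general idea: publish a digest now, reveal the underlying material later, and let anyone check that the two match. The article does not describe Anthropic's exact construction, so everything specific here is an assumption for illustration: the SHA3-256 variant, the random nonce prepended to the report, the function names `commit` and `verify`, and the placeholder report text.

```python
import hashlib
import hmac
import secrets


def commit(report: bytes, nonce: bytes) -> str:
    """Return a SHA3-256 commitment (hex) over nonce || report.

    The nonce is an assumption of this sketch; it keeps short or
    guessable reports from being brute-forced out of the digest.
    """
    return hashlib.sha3_256(nonce + report).hexdigest()


def verify(commitment: str, report: bytes, nonce: bytes) -> bool:
    """Check that a revealed report and nonce match a published commitment."""
    return hmac.compare_digest(commitment, commit(report, nonce))


# Hypothetical placeholder text, not a real finding.
report = b"placeholder vulnerability write-up"
nonce = secrets.token_bytes(32)

# Step 1: publish only the digest while the bug is unpatched.
published = commit(report, nonce)

# Step 2: after disclosure, reveal report and nonce so anyone can check them.
assert verify(published, report, nonce)

# A report edited after the fact no longer matches the published digest.
assert not verify(published, report + b" (edited)", nonce)
```

A scheme like this shows that the revealed write-up existed in that exact form when the digest was published. It says nothing about whether the finding is real or severe, which is why it falls short of independent replication.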

A second caveat matters just as much. Some of the examples are fully autonomous after an initial prompt. Some involve Anthropic later working with the model to increase severity or continue the chain. Some rely on Anthropic's own scaffolds, validation process, and contractor review. Again, that does not make the results fake. It just means the cleanest version of the story is not "the model solved security." It is "Anthropic says this model, inside Anthropic's own workflow, is capable enough that the old release pattern now looks irresponsible."

That is still a big story.

A simple way to read the technical post without swallowing the whole press release is this:

| Anthropic says | Why it matters | What it does not prove |
|---|---|---|
| Mythos can find or exploit zero-days across major browsers and operating systems | Suggests unusually broad offensive capability | Independent outside teams have not yet verified those full claims end to end |
| Thousands more vulnerabilities are in the disclosure pipeline | The scale could be much larger than the public examples | The totals are still Anthropic-reported and mostly undisclosed |
| Mythos can chain multiple bugs into working exploit paths | Reduces the advantage of purely tedious hardening steps | It does not mean all defenses are obsolete or that every environment falls easily |
| Mythos will stay gated behind Glasswing for now | Anthropic sees real dual-use and release-risk concerns | It does not prove Anthropic has solved the governance problem for everyone else |

There is also a subtler point buried in the Mythos post that I think matters a lot. Anthropic argues that some defense-in-depth measures work mostly by adding friction rather than creating hard barriers, and that large-scale model-assisted attackers can grind through that friction much faster. If that is right, then the pressure will not just land on better models. It will land on better security architecture, faster patch cycles, tighter sandboxes, and more explicit control layers.

Why Glasswing matters for the wider AI security market

This is where the story gets bigger than Anthropic.

Project Glasswing suggests frontier labs may be starting to split AI distribution into at least two tracks. One track is the familiar public one: general-purpose models, API access, enterprise seats, consumer UX, lots of benchmark marketing, and the usual scramble to win developer mindshare. The other track is more restricted: models with unusually strong cyber capability, distributed first through partnerships, security institutions, hyperscalers, major vendors, and selected open-source defenders.

That second track looks a lot less like SaaS and a lot more like strategic infrastructure. Not military hardware, exactly, but not normal software either. Think fewer launch livestream vibes, more closed-door briefing energy.

If that becomes standard, several things change.

The most obvious is that access itself becomes a strategic advantage. The security vendors, cloud operators, and platform companies inside these early coalitions may get a head start on finding and fixing bugs in critical systems. That is good if the coalition really helps harden browsers, kernels, libraries, and developer infrastructure before similar offensive capability spreads more widely.

But there is a concentration risk too. The same model that helps defenders can also reshape who counts as a trusted defender in the first place. Anthropic says Glasswing includes open-source projects and plans fast expansion, which is encouraging.

Still, the opening picture is not egalitarian. It is large institutions, major vendors, and critical infrastructure actors. If this model of rollout spreads, the frontier cyber stack may increasingly live inside alliances that look half security partnership, half access cartel.

That may be unavoidable. It may even be sensible in the short term. Anthropic itself argues that powerful language models should eventually benefit defenders more than attackers once the ecosystem reaches a new equilibrium. I can believe that and still think the transition period looks politically messy. Who gets in early matters. Who validates the claims matters. Which open-source maintainers get meaningful help, rather than a mention in a blog post, matters.

The real product here is controlled access

Glasswing is a useful reminder that sometimes the interesting thing in AI is not the chat interface, or even the benchmark table. It is the boundary around the model.

Anthropic is telling the market that Claude Mythos Preview is strong enough, and risky enough, that it should first live inside a governed access regime with named partners, funding, disclosure workflows, and an explicitly defensive mission. That does not prove every claim in the technical post. It does not prove Anthropic has already solved the governance puzzle. And it definitely does not prove AI now universally outperforms human security researchers. The company is more careful than that in its own write-up, and we should be too.

But it does show something important. Frontier labs are starting to treat top-tier cyber-capable AI less like a normal public release and more like restricted coalition infrastructure. If Glasswing is an early example rather than a one-off, the next phase of AI competition will not just be about whose model is smartest. It will also be about who controls access, who gets trusted first, and who gets to harden the software stack before everyone else realizes the old timeline is gone.

That is the real launch here.

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Primary source for the initiative's framing, partner list, funding, opening cohort, access posture, and Anthropic's statement that Claude Mythos Preview is not planned for general availability.

Primary technical source for Anthropic's capability claims, evaluation design, responsible-disclosure caveats, and repeated warnings that most findings remain undisclosed because they are not yet patched.

Used to confirm same-day publication timing for Project Glasswing and the Claude Mythos Preview materials.

Used as same-day pickup evidence during drafting. Not relied on for any unique factual claim in the public body.

About the author

Maya Halberg

Maya writes across the AI field, from research claims and benchmark narratives to tools, products, institutional decisions, and market shifts. Her reporting stays focused on what changes once hype meets deployment, procurement, workflow reality, and human skepticism.

- Apr 11, 2026

- Stockholm · Remote

Archive signal

Reporting lens: Methodology over launch theater. Signature: A result only matters after the setup becomes legible.

Article details

- Category: AI Products
- Last updated: April 11, 2026
- Public sources: 4 linked source notes

Byline

Writes across the AI field with an eye for what survives contact with real users, real budgets, and real operating constraints.