Granite 4.0 3B Vision is IBM's document AI wedge

IBM's Granite 4.0 3B Vision is a compact Apache 2.0 model for charts, tables, and form fields, giving document AI an open enterprise wedge.

Granite 4.0 3B Vision matters because it is not trying to be a universal multimodal celebrity. It is trying to be the adult in the document room.

This week IBM put Granite 4.0 3B Vision on Hugging Face, and the interesting part is not that it is multimodal. At this point every lab with a GPU budget and a pulse has one. The interesting part is that IBM picked a job.

Granite 4.0 3B Vision is aimed at enterprise document understanding: charts, tables, forms, and semantic key-value extraction. Not glamorous. Very useful. In a market full of general-purpose VLMs trying to describe everything, IBM is chasing the more lucrative fate of reading the annual report correctly.

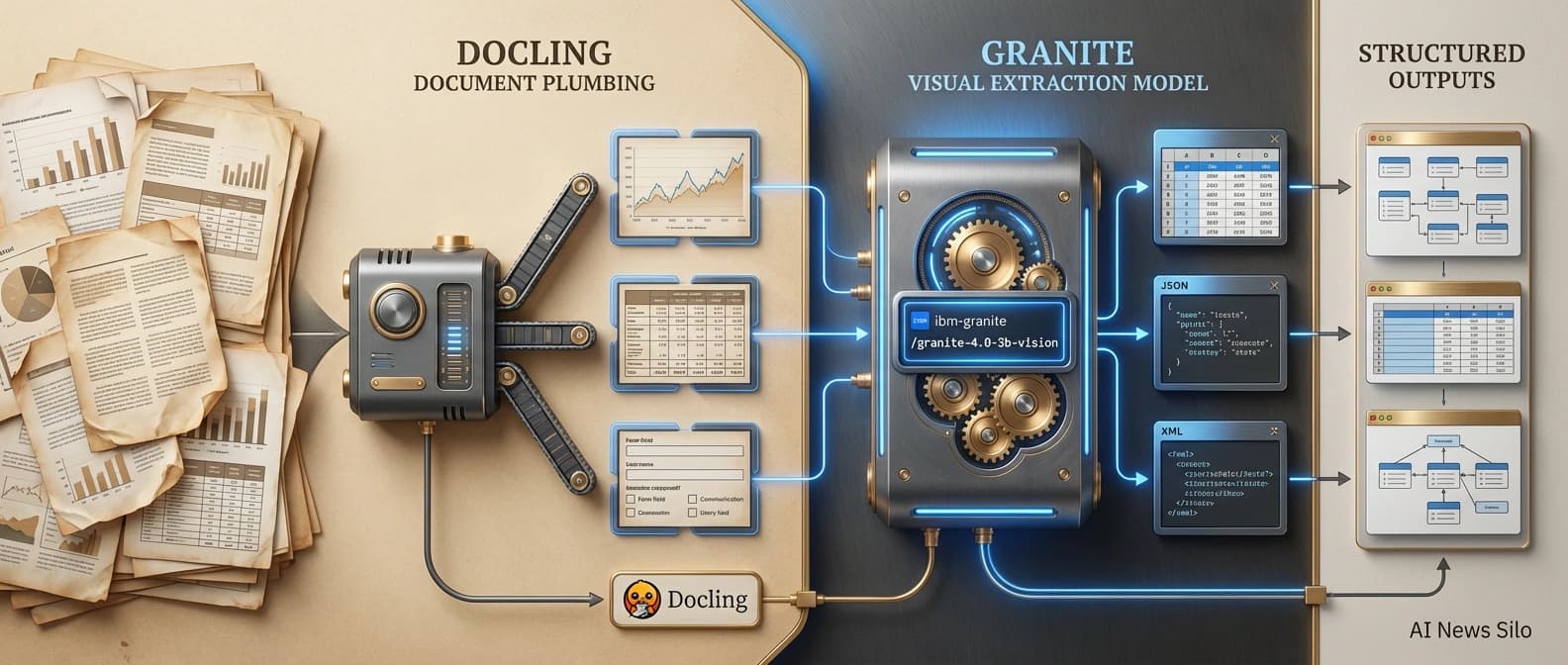

That is the wedge. IBM's launch post and model card describe a compact Apache 2.0 model, packaged as a LoRA adapter on top of Granite 4.0 Micro and built to slot into a document pipeline with Docling. The real question is not whether IBM shipped another vision model. It is whether IBM made open document AI a little more serious.

I think the answer is yes, with a few asterisks and one raised eyebrow around benchmarks.

Granite 4.0 3B Vision is built for paperwork, not party tricks

The easiest way to understand Granite 4.0 3B Vision is to notice what IBM is not claiming. It is not saying this compact model beats every general-purpose VLM at everything under the sun. It is saying the model is good at a narrow but commercially important cluster of tasks: chart extraction, table extraction, and semantic key-value pair (KVP) extraction across messy document layouts. That is saner than the usual multimodal pageant, where everyone arrives in a sequined benchmark chart and nobody wants to talk about invoices.

The model card is refreshingly blunt. Granite ships chart modes like chart2csv, chart2summary, and chart2code, table extraction modes like tables_html and tables_json, plus schema-based key-value extraction for forms. This is not just "look at image, say words." It is "look at document, return something a pipeline can use."
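To make "something a pipeline can use" concrete, here is a minimal sketch of the consuming side. It assumes a chart2csv reply arrives as plain CSV text with a header row, which is my assumption and not something the model card is quoted as promising. The function name and the sample reply are hypothetical.

```python
import csv
import io


def parse_chart_csv(raw: str) -> list[dict[str, str]]:
    """Parse CSV text (the assumed shape of a chart2csv reply) into row dicts."""
    reader = csv.DictReader(io.StringIO(raw.strip()))
    return [dict(row) for row in reader]


# Hypothetical model reply for a small two-series chart; not real model output.
sample_reply = """quarter,revenue,cost
Q1,120,80
Q2,135,90
"""

rows = parse_chart_csv(sample_reply)
for row in rows:
    # Values stay strings here; casting to numbers is a downstream decision.
    print(row["quarter"], row["revenue"], row["cost"])
```

The point is less the parsing than the contract: once the reply has a predictable shape, everything after it is ordinary data plumbing.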

That fits beside our earlier look at Mistral Forge and enterprise model ownership. The serious enterprise market keeps moving away from one giant model for everything and toward systems that know their job and can be held accountable to real workflows.

Why the Apache 2.0 license and LoRA packaging matter

The strongest part of the release may be the least cinematic part. IBM says Granite 4.0 3B Vision ships under Apache 2.0 and is delivered as a LoRA adapter on top of Granite 4.0 Micro, pairing a 3.5B-parameter base language model with 0.5B parameters of LoRA adapters. Nerdy, yes. Important, also yes.

Instead of forcing teams into a monolithic multimodal stack every time they want document extraction, IBM is treating vision as a modular add-on. The model card says text-only requests can use the base model without loading the vision adapter, while image prompts apply the LoRA at inference time. Same deployment, mixed workloads, less baggage.
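The routing idea is simple enough to sketch. What follows is a toy dispatcher, not IBM's serving code: the `Request` class, the function names, and the plan labels are all hypothetical, and a real deployment would hand this decision to whatever adapter mechanism its serving stack provides.

```python
from dataclasses import dataclass, field


@dataclass
class Request:
    """Hypothetical inference request: a prompt plus zero or more images."""
    prompt: str
    images: list[bytes] = field(default_factory=list)


def needs_vision_adapter(request: Request) -> bool:
    # Only image-bearing prompts need the vision LoRA in this sketch.
    return len(request.images) > 0


def plan(request: Request) -> str:
    """Return a label for which weights this request would run against."""
    return "base+vision_lora" if needs_vision_adapter(request) else "base_only"


text_only = Request(prompt="Summarize the attached policy in two lines.")
with_image = Request(prompt="Extract the table as JSON.", images=[b"fake-png-bytes"])

print(plan(text_only))
print(plan(with_image))
```

One deployment, two code paths, and the text-only path never pays for the adapter. That is the whole appeal.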

For teams that care about cost and control, that plugs directly into the logic we covered in open-weight inference economics. Smaller specialized systems can beat giant universal ones if the task boundary is clear. IBM also provides usage paths through both Transformers and vLLM, including a custom out-of-tree vLLM integration. That moves the release out of ceremonial open source and into the much more useful "yes, a competent team can actually run this" zone.

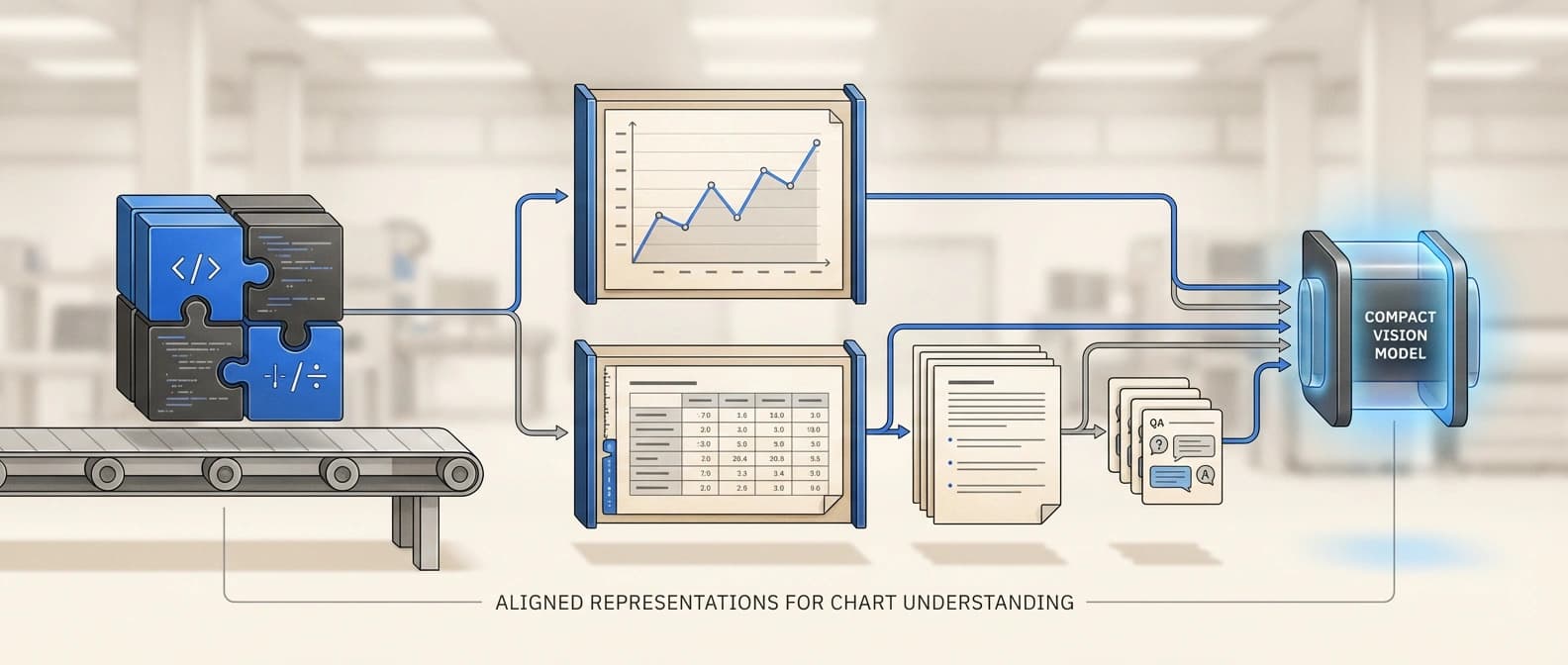

ChartNet gives Granite 4.0 3B Vision a real specialty, not a vague vibe

The release would be a lot less interesting without ChartNet. IBM's launch materials describe it as a million-scale chart-understanding dataset built with a code-guided synthesis pipeline, and the CVPR 2026 paper explains why that matters. Each sample includes plotting code, a rendered chart, the underlying data table, a natural-language summary, and QA with reasoning.
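Those five aligned pieces are easier to picture as a record. The sketch below is my own illustration of what one sample could look like in code. The field names and the `missing_parts` helper are hypothetical and are not ChartNet's published schema.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ChartSample:
    """One aligned sample; field names are illustrative, not ChartNet's schema."""
    plotting_code: str                 # code that renders the chart
    image_path: str                    # the rendered chart
    data_table: list[dict[str, float]] # the underlying data
    summary: str                       # natural-language summary
    qa: list[tuple[str, str, str]]     # (question, reasoning, answer)


def missing_parts(sample: ChartSample) -> list[str]:
    """List the names of any empty parts of a sample."""
    return [f.name for f in fields(sample) if not getattr(sample, f.name)]


sample = ChartSample(
    plotting_code="plt.plot([1, 2], [3, 5])",
    image_path="chart_0001.png",
    data_table=[{"x": 1.0, "y": 3.0}, {"x": 2.0, "y": 5.0}],
    summary="",  # deliberately left empty to show the check
    qa=[("What is y at x=2?", "Read the second point.", "5")],
)

print(missing_parts(sample))
```

Because every part derives from the same plotting code, the table, the image, and the summary can be checked against one another, which is presumably what makes code-guided synthesis attractive in the first place.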

The paper says ChartNet spans 24 chart types and six plotting libraries, with extra subsets for human annotations, real-world data, safety, and grounding. That matters because charts force a model to read spatial structure, numbers, labels, and trends at the same time. A model that can say "a blue line goes up" is not necessarily a model that can recover the values or summarize the change correctly.

What I like here is that the specialization is legible. As with Ai2's MolmoWeb open-stack release, the interesting move is not only that IBM shipped a model. It is that the surrounding dataset makes the job boundary obvious.

IBM's benchmark story looks promising, but it still needs adult supervision

IBM reports strong benchmark numbers across charts, tables, and semantic extraction. In the launch post, Granite 4.0 3B Vision scores 86.4 on Chart2Summary and 62.1 on Chart2CSV on the human-verified ChartNet benchmark, with the model card noting that chart evaluation uses GPT-4o as an LLM judge. IBM also reports leading table-extraction scores on PubTablesV2, OmniDocBench-tables, and TableVQA-Extract, plus 85.5 percent exact match zero-shot on VAREX for key-value extraction.

Interesting numbers. Three caveats. Most of the framing is still IBM-authored primary material. Some of the chart evaluation uses LLM-as-a-judge, which can be practical but is not the same thing as deterministic scoring. And the win condition here is specialized competence, not universal VLM supremacy. Granite matters if it handles enterprise paperwork better, faster, or more cheaply than bulkier alternatives.
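The difference between deterministic scoring and a judge model is worth seeing in miniature. Here is a sketch of field-level exact match for key-value extraction. It is my own simplified version, with whitespace stripping as the only normalization, and it should not be read as the actual VAREX scoring rule. The function name and the sample fields are hypothetical.

```python
def field_exact_match(predicted: dict[str, str], expected: dict[str, str]) -> float:
    """Fraction of expected fields whose predicted value matches exactly."""
    if not expected:
        return 0.0  # nothing to score; a convention chosen for this sketch
    hits = sum(
        1
        for key, value in expected.items()
        if key in predicted and predicted[key].strip() == value.strip()
    )
    return hits / len(expected)


# Hypothetical invoice fields, not real benchmark data.
expected = {"invoice_no": "A-17", "total": "410.00"}
predicted = {"invoice_no": "A-17", "total": "410"}

score = field_exact_match(predicted, expected)
print(score)  # 0.5: "410" is not "410.00" under exact match
```

Run it twice and you get the same number twice. That is the property an LLM judge cannot fully guarantee, and it is why the two kinds of score deserve separate labels.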

That is also why the release overlaps with what we wrote about Together AI fine-tuning and reliability. Real value often shows up when a model is tuned to fail less often on a narrow job, not when it collects the loudest launch-week adjectives.

Granite 4.0 3B Vision plus Docling looks like a real enterprise document pipeline

The detail that pushed this launch from "interesting model card" to "serious enterprise wedge" for me is the Docling angle. IBM says Granite 4.0 3B Vision can run standalone on images, but it can also plug into Docling for end-to-end document understanding.

Docling's public repo highlights support for PDF, DOCX, PPTX, XLSX, HTML, images, and more, plus layout understanding, OCR, table structure, and local execution for sensitive or air-gapped environments. So when IBM describes Docling segmenting a PDF, finding figures or tables, and routing clean crops to Granite Vision for deeper extraction, the pipeline stops sounding hypothetical and starts sounding like something an enterprise team might actually trial.
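The segment-then-route flow can be sketched without touching either library. Everything below is a stand-in: `Region`, `MODE_BY_KIND`, and `route` are hypothetical names, and this is not Docling's API. Only the mode names chart2csv and tables_json come from the model card, and pairing figures with chart2csv is my choice for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Region:
    """Hypothetical layout region produced by a document parser."""
    kind: str   # e.g. "figure", "table", "text"
    page: int


# Which extraction mode each region kind is sent to in this sketch.
MODE_BY_KIND = {"figure": "chart2csv", "table": "tables_json"}


def route(regions: list[Region]) -> list[tuple[Region, str]]:
    """Pair each figure or table crop with a mode; skip everything else."""
    return [(r, MODE_BY_KIND[r.kind]) for r in regions if r.kind in MODE_BY_KIND]


page_regions = [
    Region(kind="text", page=1),
    Region(kind="table", page=2),
    Region(kind="figure", page=3),
]

for region, mode in route(page_regions):
    print(f"page {region.page}: {region.kind} -> {mode}")
```

Plain text never reaches the vision model here, which is the cost argument in a few lines: the expensive step only runs on the crops that need it.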

That matters because the enterprise document problem is rarely "please look at this one image." It is "please process 400,000 ugly files created over 17 years, half of them scanned, and several of them clearly assembled by someone who hated future generations." A compact open model becomes much more useful when it slots into that larger machine cleanly.

IBM chose the boring layer on purpose. Good. The AI market does not need another multimodal demo that can identify a croissant from three angles while the accounts-payable pipeline still collapses on page two of a receipt. It needs more models that can survive contact with real business sludge.

The open-model wedge is narrow, but it is serious

So, is Granite 4.0 3B Vision truly open and deployable? On the evidence in the model card, yes, more than many launch-week "open" claims deserve. Apache 2.0 is real. The Hugging Face release is real. The deployment paths are real. The Docling integration route is real enough to take seriously.

Do the benchmarks matter? Yes, with attribution labels firmly attached. They show IBM targeted the right failure modes in document AI. They do not settle the question for everyone else. I want outside teams to push on the model, rerun the tasks, and see where it breaks. That is not hostility. That is how open releases graduate from promising to trusted.

And is this a serious enterprise document pipeline model or just another model-card flex? I land on serious, precisely because the ambition is smaller. Granite 4.0 3B Vision is trying to be a compact open component that does charts, tables, and semantic extraction well enough to earn a place in production pipelines.

That may not produce the loudest keynote. It may, however, produce the better business.

Paperwork wins.

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Primary launch post with IBM's framing, architecture summary, benchmark claims, and Docling integration pitch.

Model card confirming Apache 2.0 licensing, LoRA packaging on Granite 4.0 Micro, supported extraction tasks, and deployment paths.

Dataset page for IBM's chart-understanding training and evaluation story.

ChartNet paper describing the chart-data pipeline, aligned modalities, and the open-dataset claim.

Confirms Docling's document parsing scope, local execution angle, and why Granite can slot into a practical pipeline.

About the author

Maya Halberg

Maya writes across the AI field, from research claims and benchmark narratives to tools, products, institutional decisions, and market shifts. Her reporting stays focused on what changes once hype meets deployment, procurement, workflow reality, and human skepticism.

- Apr 11, 2026

- Stockholm · Remote

Archive signal

Reporting lens: Methodology over launch theater. Signature: A result only matters after the setup becomes legible.

Article details

- Category: Open Source AI

- Last updated: April 11, 2026

- Public sources: 5 linked source notes
