Ai2's MolmoWeb turns web agents into an open stack

Ai2's MolmoWeb ships open weights, open web-task data, and runnable tooling, giving developers a real shot at self-hosted browser agents instead of rented black boxes.

MolmoWeb matters because Ai2 is opening more of the recipe than the browser-agent market usually allows.

I keep seeing browser-agent launches treated like stage magic. The model clicks a button, the crowd gasps, and somehow nobody asks who gets the recipe after the confetti settles.

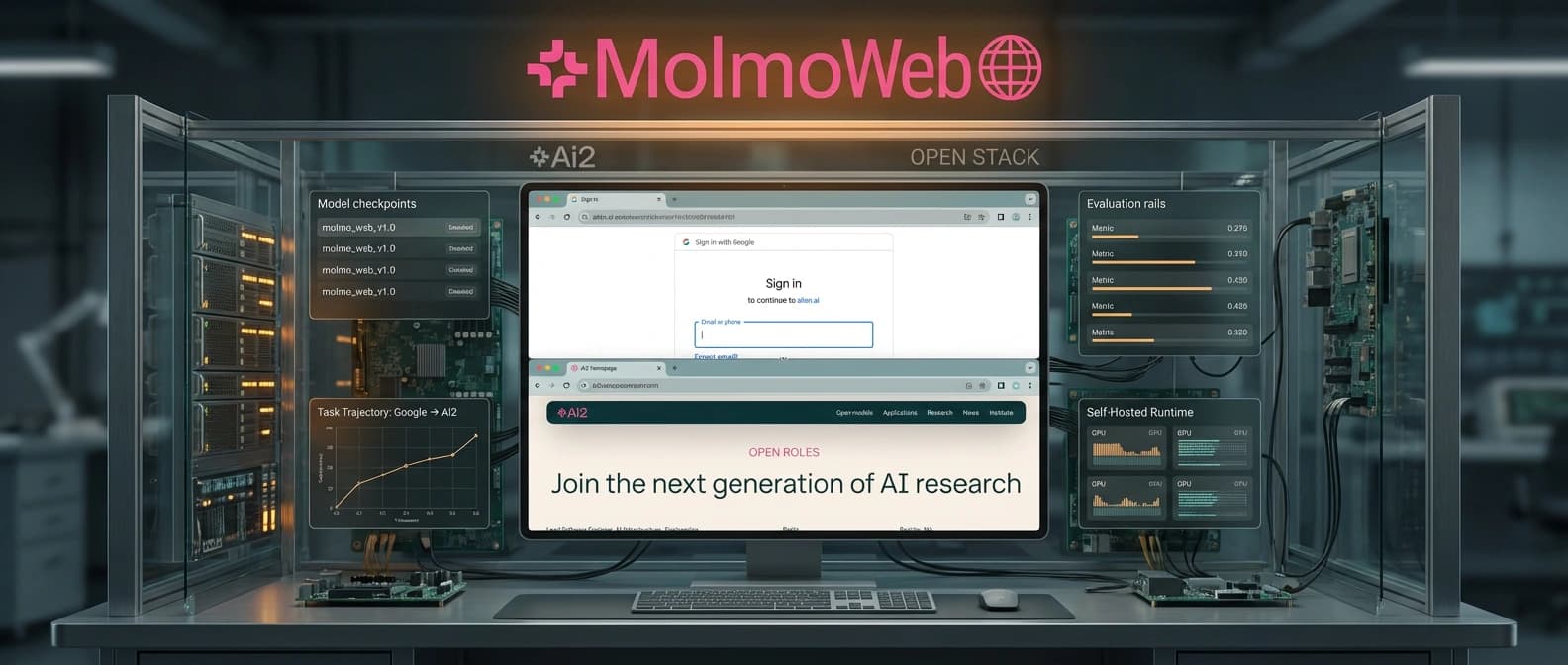

That is why Ai2's MolmoWeb launch caught my eye. The headline is not that Ai2 built another agent that can use a browser. Plenty of companies can already stage that trick. The headline is that Ai2 is opening more of the stack: model weights, web-task data, evaluation surfaces, and a self-hosting path you can actually inspect. In a market full of sealed lunch boxes, that matters.

No, this does not automatically make MolmoWeb the best browser agent on earth. It does make it easier to understand, test, and argue with. I trust systems more when I can see the plumbing.

What Ai2 actually opened up in MolmoWeb

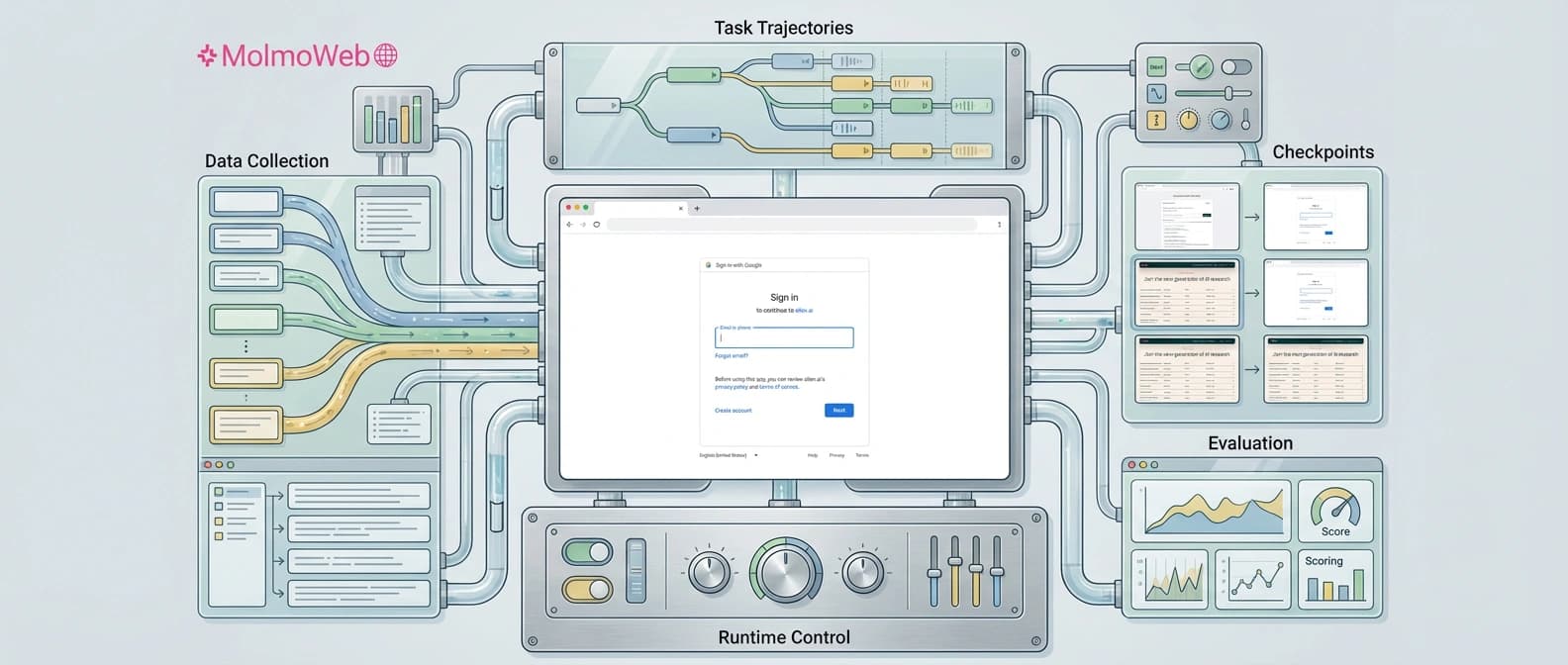

Ai2 says MolmoWeb ships in 4B and 8B variants built on the Molmo 2 family, with the models published on Hugging Face and runnable code published on GitHub. The interaction loop is familiar for this category: the agent takes a task instruction, a screenshot of the page, and its recent action history, then predicts the next browser action. That action can be a click, typed text, a scroll, a tab switch, a navigation, or a message to the user.
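For readers who think better in code, here is a minimal sketch of that observe, predict, act loop. It is not Ai2's implementation. The action names, the `Action` and `AgentState` classes, the `run_episode` function, and the rule that a user message ends the episode are all assumptions I made for illustration; the model call, the screenshot source, and the browser executor are passed in as plain callables so nothing here pretends to know the real API.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Action names mirror the categories listed in the article; the exact
# action schema MolmoWeb uses is an assumption here.
ACTION_TYPES = {"click", "type", "scroll", "switch_tab", "goto", "send_message"}


@dataclass
class Action:
    kind: str            # one of ACTION_TYPES
    argument: str = ""   # e.g. coordinates, text to type, or a URL


@dataclass
class AgentState:
    task: str
    history: List[Action] = field(default_factory=list)


def run_episode(
    task: str,
    take_screenshot: Callable[[], bytes],
    predict: Callable[[str, bytes, List[Action]], Action],
    execute: Callable[[Action], None],
    max_steps: int = 10,
    history_window: int = 5,
) -> List[Action]:
    """Observe, predict, act, and repeat until the agent messages the user."""
    state = AgentState(task=task)
    for _ in range(max_steps):
        screenshot = take_screenshot()
        # Only the most recent actions are handed to the model.
        recent = state.history[-history_window:] if history_window > 0 else []
        action = predict(state.task, screenshot, recent)
        if action.kind not in ACTION_TYPES:
            raise ValueError(f"unknown action type: {action.kind}")
        state.history.append(action)
        if action.kind == "send_message":
            break  # assumption: a user-facing message ends the episode
        execute(action)
    return state.history
```

Passing the model, screenshot source, and executor as callables keeps the loop testable with a scripted stand-in before any real checkpoint or browser is involved.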

The unusual part is the amount of surrounding material Ai2 is willing to show. In the launch post, the lab says MolmoWebMix includes 36,000 human task trajectories, more than 623,000 subtask demonstrations, coverage across more than 1,100 websites, and over 2.2 million screenshot question-answer pairs from nearly 400 sites. Ai2 also says its synthetic trajectories came from text-only accessibility-tree agents plus human demonstrations, rather than from distilling a closed vision agent and calling it a day. That last distinction sounds fussy. It is not. It goes straight to whether other teams can reproduce the work without quietly depending on a proprietary parent model.

I would not call this a perfect open lab notebook yet. Ai2 says the training code is still coming soon, which means one important drawer in the filing cabinet is still locked. But compared with the usual browser-agent release, where the public mostly gets a slick demo and a prayer, MolmoWeb is much closer to a real kit. It feels less like ordering takeout from a mystery kitchen and more like getting the ingredients, the pan, and at least most of the recipe card.

Why open browser-agent tooling matters more than a demo reel

The closed vendors still have the easier commercial story. As we argued in our pieces on OpenAI's agent platform shift and Anthropic's Dispatch move, the big platforms are selling convenience, orchestration, and distribution first. That works because most customers want the result now and the architecture explanation never.

But builders eventually hit harder questions. Can I run this inside my own environment? Can I inspect why it failed on one cursed enterprise dashboard? Can I fine-tune it for my own workflow instead of waiting for a vendor roadmap to smile upon me? Can I control where screenshots, traces, and browsing state live? With most headline browser agents, the answer is some polished variation of "please enjoy the demo."

MolmoWeb matters because it gives developers something more concrete than that. It is not just an open framework waiting for someone else to supply the brains, and it is not just an open-weight checkpoint tossed over the wall without the surrounding evidence. It sits in the more useful middle: trained models, visible data claims, runnable tooling, and a stated self-host path. That is a much better starting point if you care about control instead of pure convenience.

There is also a standards angle here. Once one credible lab opens more of the browser-agent stack, the sealed releases look a little more naked. Not doomed. Just harder to describe as the only serious option in town.

How much faith to put in the MolmoWeb benchmarks

Ai2 reports that MolmoWeb-8B scores 78.2% on WebVoyager, 42.3% on DeepShop, and 49.5% on WebTailBench, with stronger pass@4 results, where the agent gets four attempts at each task. Those are good-looking numbers for an open-weight system in this size range. They also deserve the same adult supervision every launch-day chart deserves.
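It helps to see why a pass@4 number sits above a single-try score. The sketch below uses the simplest reading of the metric: a task counts as solved if any of the first k attempts succeeds. Whether Ai2 computes its pass@4 exactly this way is an assumption on my part, and the task names and outcomes are made up purely to show the arithmetic.

```python
from typing import Dict, List


def pass_at_k(attempts: Dict[str, List[bool]], k: int) -> float:
    """Fraction of tasks solved by at least one of the first k attempts."""
    if k < 1:
        raise ValueError("k must be at least 1")
    if not attempts:
        raise ValueError("no tasks to score")
    solved = 0
    for task_id, outcomes in attempts.items():
        if len(outcomes) < k:
            raise ValueError(f"task {task_id!r} has fewer than {k} attempts")
        if any(outcomes[:k]):
            solved += 1
    return solved / len(attempts)


# Made-up outcomes for three hypothetical tasks, four attempts each.
results = {
    "book-flight": [False, True, False, False],
    "find-price": [True, True, True, True],
    "fill-form": [False, False, False, False],
}
print(pass_at_k(results, k=1))  # one of three tasks solved on the first try
print(pass_at_k(results, k=4))  # two of three solved within four tries
```

The gap between the two figures is a rough measure of how inconsistent the agent is from run to run, which is worth knowing before trusting it with anything unattended.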

Web-agent benchmarks are noisy. Live websites change. Evaluation setups drift. Scoring by a vision-language-model (VLM) judge is helpful, but it is not the same thing as several outside teams reproducing the results on their own hardware while muttering at broken page loads. I enjoy a benchmark chart as much as the next exhausted reporter, but only in the same way I enjoy movie trailers: they tell me what the publisher wants me to feel.

So I am not taking the tables as final truth. I am taking them as a stronger invitation to verify. That is the difference here. Because Ai2 exposed more of the stack, outside teams have a real shot at rerunning, disputing, or extending the work. In this category, that is healthier than another round of demo videos where everyone applauds and nobody gets to check the wiring.

Who can really self-host MolmoWeb today

A technically capable team probably can, and that is a more honest answer than most browser-agent launches allow. The repo points to a real setup path: Python 3.10+, uv, Playwright browser installs, downloadable checkpoints, a model server, and a Python client that can run locally or with Browserbase. That is not plug-and-play for ordinary humans. It is, however, recognizably real. Big difference.

Ai2 is also fairly plain about the rough edges. The launch materials call out screenshot-reading mistakes, timing problems around page loads, weaker handling for drag-and-drop, and trouble with ambiguous instructions. The system is not trained for login-heavy or financial tasks, and the hosted demo adds site allowlists, unsafe-query filtering, and blocks on password and credit-card fields. If you want to deploy something like this for real work, you still need strong permission boundaries and runtime controls of the kind we discussed in our piece on NVIDIA OpenShell's security-control-plane design.
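To make "permission boundaries" less abstract, here is a small sketch of the two simplest guards from that list: a host allowlist for navigation and a block on typing into password or credit-card fields. This is not the hosted demo's code. The hostnames, constant names, and function names are hypothetical, and a real deployment would need far more than this. The one thing borrowed from a standard is the `cc-` prefix, which is how HTML `autocomplete` tokens mark payment-card fields.

```python
from urllib.parse import urlparse

# Hypothetical policy values; a real deployment would load its own.
ALLOWED_HOSTS = {"example.com", "docs.example.com"}
BLOCKED_INPUT_TYPES = {"password"}
CARD_AUTOCOMPLETE_PREFIX = "cc-"  # e.g. cc-number, cc-exp, cc-csc


def is_navigation_allowed(url: str) -> bool:
    """Allow only http(s) URLs whose exact hostname is on the allowlist."""
    parsed = urlparse(url)
    if parsed.scheme not in {"http", "https"}:
        return False
    host = (parsed.hostname or "").lower()
    return host in ALLOWED_HOSTS


def is_typing_allowed(input_type: str, autocomplete: str = "") -> bool:
    """Refuse to type into password fields or payment-card fields."""
    if input_type.lower() in BLOCKED_INPUT_TYPES:
        return False
    tokens = autocomplete.lower().split()
    return not any(tok.startswith(CARD_AUTOCOMPLETE_PREFIX) for tok in tokens)
```

Matching the exact hostname, rather than checking whether the URL merely contains an allowed name, is deliberate: it keeps a lookalike such as `example.com.evil.io` from slipping through.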

That is why I land on a smaller, sturdier conclusion. MolmoWeb is not an open-source version of "let the agent run your life." It is a more inspectable base for browser automation and web-task research. Smaller claim. Better claim.

If Ai2 ships the remaining training code quickly, and if outside teams can meaningfully reproduce the results, MolmoWeb could become the release people point back to when browser agents started feeling less like rented magic and more like actual infrastructure.

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Primary launch post with the official framing, dataset counts, benchmark claims, limitations, and safety notes.

Confirms the public repo, model variants, self-hosting path, inference client, and current install surface.

Confirms the public model and data collection surfaces for the release.

Useful pickup for market framing and for showing why Ai2's official counts should take precedence where press coverage differs.

About the author

Lena Ortiz

Lena tracks the economics and mechanics behind AI systems, from serving architecture and open-weight deployment to developer tooling, platform shifts, product decisions, and the operational tradeoffs that shape what teams actually run. Her reporting is aimed at builders and operators deciding what to trust, adopt, and maintain.

- Apr 10, 2026

- Berlin

Article details

- Category: Open Source AI
- Last updated: April 11, 2026
- Public sources: 4 linked source notes
