Google Stitch 2.0 wants to own the agent handoff

Google Stitch 2.0 adds DESIGN.md, MCP, and SDK hooks so AI-generated UI can move from mockup theater into something coding agents can actually use.

The interesting part is not that Stitch can make another handsome dashboard. It is that Google wants the dashboard to arrive with machine-readable instructions attached.

Another AI design launch would usually earn a polite nod and maybe a small sigh from anyone who has watched too many “build me a landing page” demos before breakfast. Google Stitch 2.0 is more interesting than that. The important move is not that Stitch can generate high-fidelity UI from natural language. Plenty of tools can already make a handsome dashboard with rounded corners and a gradient that has clearly been to therapy.

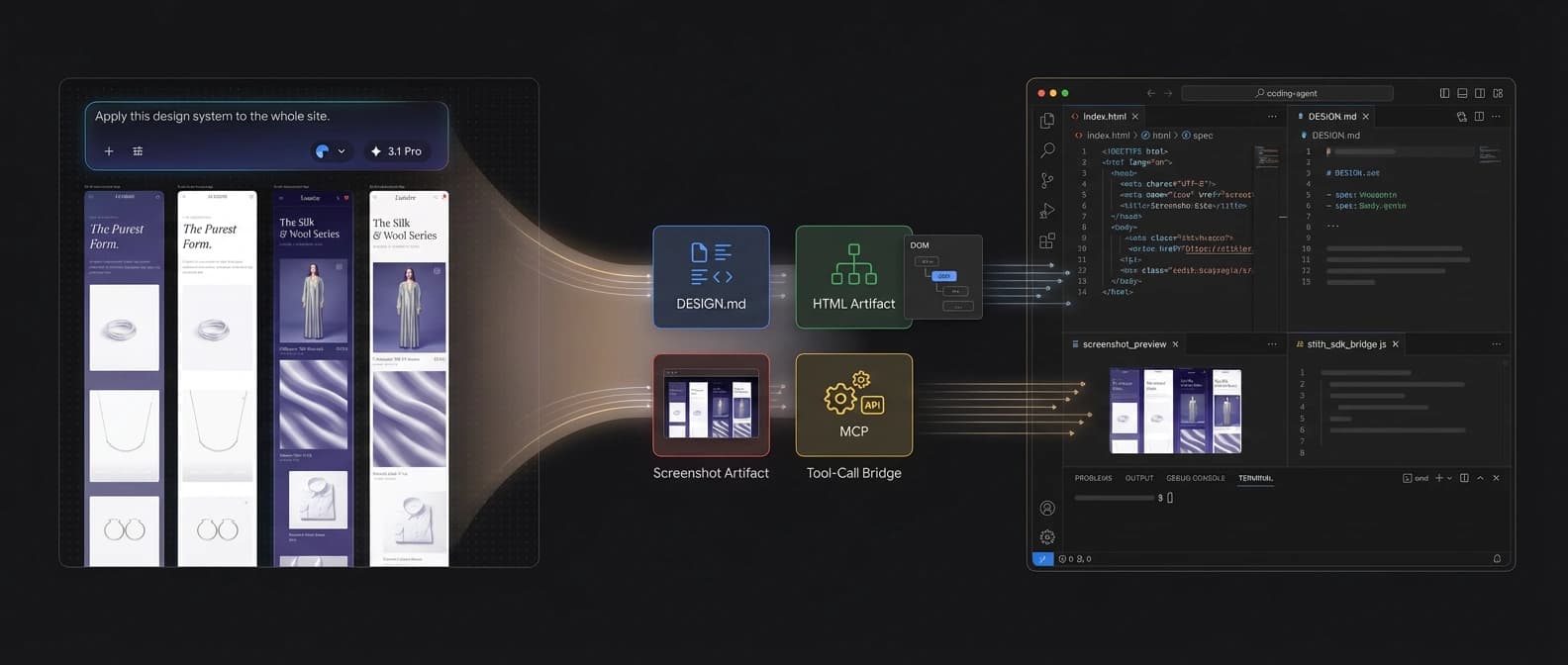

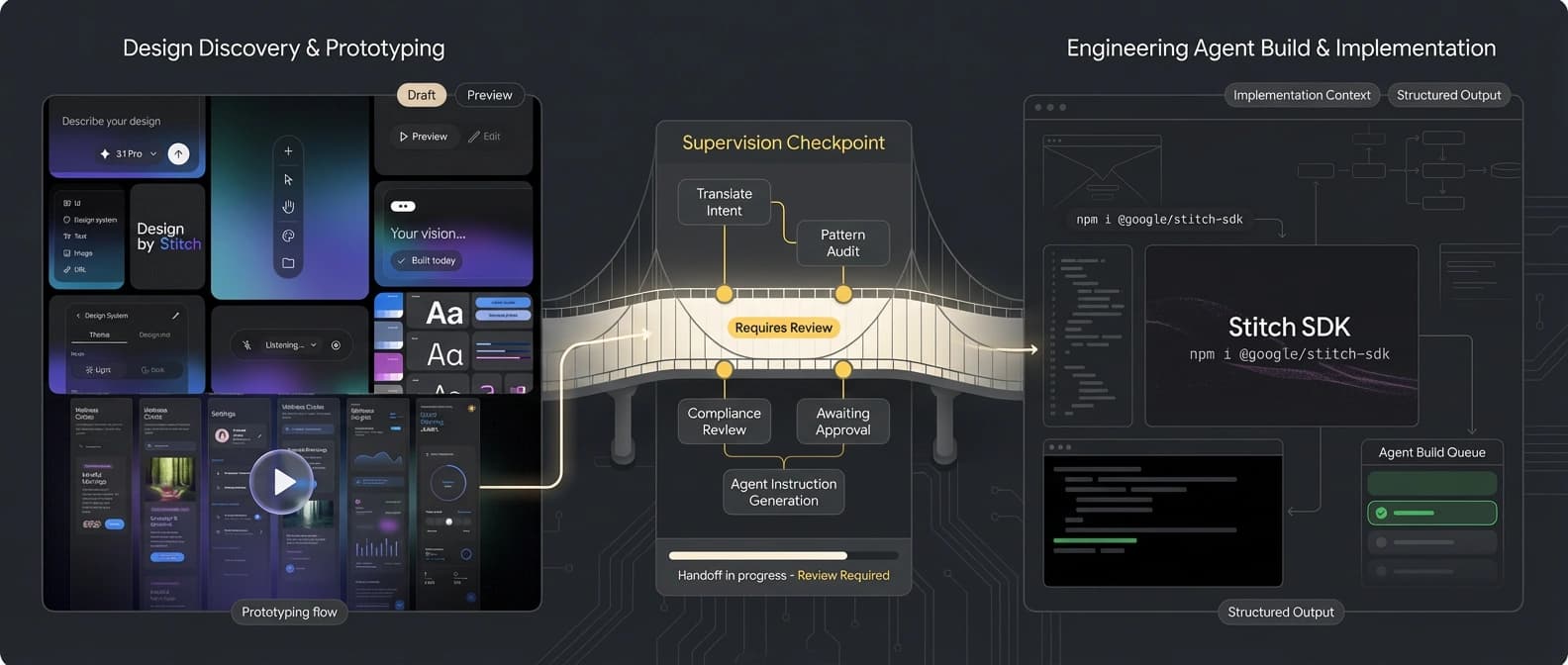

What matters is the handoff. In its March 18 update, Google said Stitch is becoming an AI-native software design canvas with a new design agent, an agent manager, instant prototyping, voice interaction, DESIGN.md import and export, and a direct bridge into code through its MCP server, SDK, and exports to tools such as AI Studio and Antigravity. The pattern is hard to miss: Google is trying to make AI design legible to the next machine in the workflow.

That is a much bigger deal than “AI makes mockups.” The painful part starts after the mockup, when the implementation agent receives a vague screenshot, some human adjectives, and a prayer. Stitch 2.0 is Google’s attempt to turn that hand-wave into a package: design rules, agent-readable context, tool access, HTML, screenshots, and enough structure that a coding agent does not have to play amateur detective.

Stitch stopped acting like a mockup toy

The official announcement is revealing if you read it less like product copy and more like an operations diagram. Stitch now has an AI-native canvas that can absorb images, text, or code as context. It also adds a design agent that can reason across the project’s evolution and an agent manager for exploring multiple directions in parallel.

The more important addition is the design-system layer. Google says Stitch can extract a design system from any URL and import or export that system through DESIGN.md, which it describes as an agent-friendly markdown file. A coding agent does not really care that your mockup looks “clean” or “premium.” It needs explicit rules: components, spacing, typography, color, interaction intent, and constraints. DESIGN.md is Google’s way of turning design taste into something another tool can carry around without inventing half the brief on the fly.
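
To make "explicit rules" concrete, here is a minimal sketch of how a downstream tool might read such a file. The sources behind this piece do not spell out the DESIGN.md schema, so the section names, the sample values, and the `parseDesignRules` helper are all hypothetical. The only assumption is that the file is ordinary markdown with headings and bullet lists.

```typescript
// Hypothetical DESIGN.md content; the real schema may differ.
const sampleDesignMd = `# Design system

## Color
- primary: #1a73e8
- surface: #ffffff

## Typography
- body: Inter 16px/24px

## Spacing
- base unit: 8px
`;

// Group bullet items under the nearest preceding "## " heading.
function parseDesignRules(markdown: string): Map<string, string[]> {
  const rulesBySection = new Map<string, string[]>();
  let current: string | null = null;
  for (const rawLine of markdown.split("\n")) {
    const line = rawLine.trim();
    if (line.startsWith("## ")) {
      // Section names are lowercased so lookups are case-insensitive.
      current = line.slice(3).trim().toLowerCase();
      rulesBySection.set(current, []);
    } else if (line.startsWith("- ") && current !== null) {
      rulesBySection.get(current)!.push(line.slice(2).trim());
    }
  }
  return rulesBySection;
}

const designRules = parseDesignRules(sampleDesignMd);
console.log(designRules.get("color")); // the two color rules from the sample
```

The point of the sketch is the shape of the result: a coding agent gets named sections with concrete values instead of adjectives.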

Then there is the SDK. The stitch-sdk repository exposes screen generation, editing, variants, and both getHtml() and getImage() outputs. For agents that want direct tool access, it also exposes Stitch MCP tools and a stitchTools() helper for the Vercel AI SDK. In plain English: the generated design can travel as both structure and evidence.

That is why Stitch suddenly looks less like a front-end novelty and more like workflow plumbing. HTML gives the build agent a structural reference. The screenshot gives it visual truth. DESIGN.md gives it reusable rules. MCP and SDK hooks make the system callable instead of merely exportable. This is not a finished production frontend. It is a stronger handoff packet.
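
One way to picture that packet is as a small typed bundle that a build agent can sanity-check before it starts work. The sketch below is illustrative only: `HandoffPacket` and `missingArtifacts` are hypothetical names, not part of stitch-sdk, and the field layout is an assumption about how a team might store whatever getHtml(), getImage(), and a DESIGN.md export hand back.

```typescript
// Hypothetical bundle of the three artifacts; not a stitch-sdk type.
interface HandoffPacket {
  html?: string;          // structural reference
  screenshotUrl?: string; // visual reference
  designMd?: string;      // reusable rules
}

// Report which artifacts are absent or blank so the agent can fail early
// instead of silently coding from an incomplete brief.
function missingArtifacts(packet: HandoffPacket): string[] {
  const missing: string[] = [];
  if (!packet.html || packet.html.trim() === "") missing.push("html");
  if (!packet.screenshotUrl) missing.push("screenshotUrl");
  if (!packet.designMd || packet.designMd.trim() === "") missing.push("designMd");
  return missing;
}

const partialPacket: HandoffPacket = { html: "<main><h1>Dashboard</h1></main>" };
console.log(missingArtifacts(partialPacket)); // lists "screenshotUrl" and "designMd"
```

A check like this is cheap, and it turns "the agent guessed" into "the agent refused to start without the rules."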

Google even says Stitch can export into AI Studio and Antigravity, which fits a broader pattern we have already seen in Google AI Studio’s full-stack push.

The more recent Gemini tool-combination update points in the same direction. Google keeps reducing the number of times a user has to leave Google-owned surfaces before an idea becomes an app. Stitch now looks like the design-side version of that same instinct.

The real product is the handoff layer

The industry keeps treating design-to-code as if the main problem were image generation quality. It is not. The main problem is that implementation tools inherit a pile of fuzzy intent. A founder says “make it feel premium.” A designer says “keep the hierarchy airy.” A coding agent stares into the abyss and returns with twelve shades of blue and a button that somehow looks both timid and overconfident.

Stitch 2.0 is trying to narrow that ambiguity. If you can hand an agent a screenshot, HTML, and a DESIGN.md file, the agent is no longer coding from vibes alone. It has something closer to a machine-readable brief. That is the substance in this launch.

The workflow chatter already reflects that. In a late-March Reddit thread aimed at Claude Code users, the appeal was not “wow, a robot made a pretty screen.” It was that Stitch exports a visual design plus HTML/CSS context, which can then be handed to Claude Code so it builds against a visible target instead of improvising every spacing and color decision from text alone. That workflow may be inelegant. It is also how real adoption begins: not with a clean standards story, but with whatever saves people forty minutes.

The HTML export matters even if it is not production-ready. Nobody should confuse a Stitch export with a hardened frontend. If you ship raw generated HTML straight to production, you are not moving fast. You are volunteering for an intimate relationship with layout bugs. But as a reference layer for an implementation agent, HTML carries spacing, structure, and ordering clues that a screenshot alone cannot.
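
A rough illustration of those clues: even a crude pass over exported markup recovers element order and grouping that pixels do not expose. This is a sketch under stated assumptions, not a description of how Stitch or any coding agent actually processes exports. The `outlineHtml` helper and the sample fragment with its class names are invented for illustration, and the regex approach will miscount markup whose attribute values contain `>`.

```typescript
// Rough outline of opening tags in source order. Regex-based on purpose:
// good enough for a sketch, not a substitute for a real HTML parser.
function outlineHtml(html: string): string[] {
  const openTag = /<([a-zA-Z][a-zA-Z0-9]*)\b([^>]*)>/g;
  const outline: string[] = [];
  let match: RegExpExecArray | null;
  while ((match = openTag.exec(html)) !== null) {
    const tag = match[1].toLowerCase();
    // Pull out double-quoted class names, if any, to hint at grouping.
    const classAttr = /class\s*=\s*"([^"]*)"/.exec(match[2]);
    const classes = classAttr ? classAttr[1].split(/\s+/).filter(Boolean) : [];
    outline.push([tag, ...classes].join("."));
  }
  return outline;
}

// Hypothetical export fragment; the class names are made up.
const exportedFragment =
  '<main class="page"><header class="bar sticky"><h1>Sales</h1></header>' +
  '<section class="grid"><article class="card"></article></section></main>';
console.log(outlineHtml(exportedFragment));
```

The output is an ordered list of tag-and-class labels, which is exactly the kind of ordering and nesting hint a screenshot cannot carry.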

That makes Stitch 2.0 a natural companion to the shift we described in AI’s action-versus-answer battlefront.

It also fits OpenAI’s agents platform shift. In both cases, the strategic value lies in moving work from one stage to the next without dropping the context on the floor.

DESIGN.md is useful, but it is not a standard

Now for the wet blanket, because every AI launch deserves one.

Google’s announcement makes DESIGN.md sound important, and I think it is. But it is not an open standard just because the filename looks pleasantly generic. Right now it is a Google-backed format inside the Stitch workflow.

The skepticism showed up immediately. One UXDesign thread captured the obvious reaction: for a brief moment, DESIGN.md looked like the portable design-system file people had been waiting for. Then the fine print arrived. At the moment, it is a Stitch artifact.

That matters. If DESIGN.md stays mostly inside Stitch, it is less “new layer for the industry” and more “Google found a tidy envelope for its own workflow.” Useful, yes. Universal, no.

The same caution applies to MCP. Stitch having an MCP server is substantive because it gives agent runtimes a real tool surface. It does not automatically mean the broader design ecosystem will rally around Stitch as the canonical protocol. Plenty of products now wave the letters M-C-P around like they are holy water.
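
For readers who have not looked under the hood, a "tool surface" is less mystical than the branding suggests. MCP is built on JSON-RPC 2.0, and a client invokes a tool by sending a `tools/call` request with a tool name and arguments. A minimal sketch of that envelope follows. The `buildToolCall` helper is hypothetical, and so are the tool name and its argument, since the sources here do not list the names Stitch's server actually exposes.

```typescript
// Shape of an MCP tool invocation (JSON-RPC 2.0, method "tools/call").
interface McpToolCall {
  jsonrpc: "2.0";
  id: number;
  method: "tools/call";
  params: { name: string; arguments: Record<string, unknown> };
}

// Build the request envelope; sending it over a transport is out of scope here.
function buildToolCall(
  id: number,
  toolName: string,
  args: Record<string, unknown>
): McpToolCall {
  return {
    jsonrpc: "2.0",
    id,
    method: "tools/call",
    params: { name: toolName, arguments: args },
  };
}

// "generate_screen" and "prompt" are placeholders, not confirmed Stitch tool names.
const exampleCall = buildToolCall(1, "generate_screen", {
  prompt: "Billing settings page",
});
console.log(JSON.stringify(exampleCall, null, 2));
```

Any product can emit this envelope. Whether other tools bother to call it is the part no protocol can settle.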

The good news for Stitch is that the SDK makes the value concrete. The handoff is not theoretical. There is a real callable interface there. The open question is whether anyone outside Google’s orbit treats that interface as infrastructure instead of a neat Labs demo.

This is where the Figma comparison gets sloppy

The lazy version of this story says Stitch 2.0 is coming for Figma. That misses the more interesting shift.

Figma’s core strength is still collaborative design work where humans review, discuss, and tweak details in the place they already use. Stitch’s sharper bet is different. It is trying to package design intent so an AI implementation tool can pick it up with less guesswork.

So when people ask about Stitch 2.0 vs Figma Make, the better lens is not “which one wins design?” It is “which one owns the transition from concept to implementation?” Figma has the advantage of being where designers already live. Stitch is building an advantage in how design output gets turned into something an agent can consume. If Google makes that handoff smooth, it does not need to replace Figma to matter.

That is also why Stitch should not be sold as designer replacement software. It may help teams move faster, but it does not remove the need for taste, judgment, review, or constraints. It lowers the friction between “this is what we meant” and “here is something a build system can start with.”

Will Stitch 2.0 become a real design-agent handoff layer?

The next question is simple: does Stitch become a real handoff layer, or does it remain a very Google-shaped file cabinet for generated UI?

A few signals matter more than anything else. Watch whether DESIGN.md gets read outside Stitch. Watch whether operators keep using the screenshot-plus-HTML bundle in practical workflows. Watch how aggressively Google routes Stitch into AI Studio, Antigravity, and the rest of its app-building stack. If all roads keep pointing inward, the strategy is less “open handoff standard” and more “own the default corridor.”

That would still be important. Google does not need Stitch to become the ISO standard for AI design handoff next quarter. It only needs Stitch to become the easiest place where generated design stops being pretty and starts being actionable.

That is why Stitch 2.0 matters. The internet is already drowning in AI tools that can produce a decent dashboard before lunch. Google is making a serious bid to standardize, or at least capture, the messy handoff between AI-generated design and AI implementation. In today’s agent stack, that seam is where a lot of the pain lives. It is also where a lot of the leverage will sit.

Source file

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Official March 18 update introducing the AI-native canvas, design agent, DESIGN.md import/export, voice features, and the MCP-plus-SDK handoff story.

Confirms the SDK methods, direct MCP tool access, stitchTools() integration, and HTML plus screenshot retrieval for generated screens.

Useful for live workflow chatter around HTML plus screenshot exports feeding coding agents. Treat adoption claims cautiously.

Captures immediate skepticism that DESIGN.md is useful only if it spreads beyond Stitch rather than staying a house format.

Use only as partial verification that the launch circulated through startup-tool discovery channels. Do not overstate traction from the listing alone.

Discussion signal only. Re-check live before citing points or comments.

Useful as a pointer that the handoff-file idea itself attracted discussion, but do not inflate it into broad consensus.

About the author

Talia Reed

Talia reports on product surfaces, developer tools, platform shifts, category shifts, and the distribution choices that determine whether AI features become durable workflows. She looks for the moment where a launch stops being a demo and becomes an ecosystem move.

- 34

- Apr 1, 2026

- New York

Archive signal

Reporting lens: Distribution is usually the story hiding inside the launch. Signature: A feature matters when it changes someone else’s roadmap.

Article details

- Category: AI Tools

- Last updated: April 11, 2026

- Public sources: 7 linked source notes
