AI's new battlefront is action, not answers

I think Google, OpenAI, and Meta are all moving past chatbot answers toward AI systems with context, tools, and permissioned actions that actually do work.

The next moat is not a prettier answer. It is permissioned action with context.

The lazy way to read this month's product launches is as another answer race. Google personalizes responses. OpenAI ships faster models. Meta improves support. Fine. But I think the deeper pattern is much clearer now.

The new battlefront is action.

Major vendors are moving beyond the chatbot frame and toward systems that can hold context, use tools, and take bounded actions on a person's behalf. The important competitive question is no longer just which model sounds smartest in a box. It is which platform can turn intelligence into reliable work without making the user nervous.

Google is turning context into something operational

Google's Personal Intelligence expansion matters because it is not pitched as a prettier answer layer. The company says Personal Intelligence is expanding across AI Mode in Search, the Gemini app, and Gemini in Chrome, pulling from connected services such as Gmail and Google Photos to produce more relevant responses.

That sounds modest until you look at the examples. Shopping recommendations tied to prior purchases. Tech troubleshooting linked to the actual device model from receipts. Travel help that uses timing, gates, and personal context. That is a lot closer to an operator than a search box.

The asset here is not only answer quality. It is account-level context already living inside Google's surfaces. That gives Google an obvious advantage in the part of agent products that turns out to matter most: memory, permissions, and proximity to the task.

Google is also careful to stress the trust boundary. Users choose which apps to connect and can turn those links on or off, and Google says Gemini and AI Mode do not train directly on Gmail inboxes or Google Photos libraries. That caveat is not boring legal trim. It is the whole point. Context without control is how a helpful feature turns into a product headache.

OpenAI is packaging the execution layer

OpenAI's recent pair of announcements fills in the second half of the picture. In the GPT-5.4 mini and nano launch, the company does not only talk about model quality. It emphasizes fast, lower-cost models for coding assistants, subagents, tool use, screenshot interpretation, and other latency-sensitive workloads where the system has to keep moving.

That matters because action-oriented systems cannot treat every step like a premium final-answer moment. If a product is calling tools, reading files, and handling supporting subtasks all day, cheap and responsive execution becomes strategic. As I argued in our earlier read on OpenAI's agent-platform shift, the real play is workflow capture.

The companion Responses API post makes that explicit. OpenAI describes a shell tool, hosted container workspace, filesystem, optional structured storage such as SQLite, restricted networking, domain-scoped secret injection, reusable skills, and native compaction. In other words, it is packaging the environment an agent needs to do work, not just the model needed to narrate work in a soothing tone.

That also connects to the cost story raised in our inference-economics coverage. Once AI products are judged by completed workflows instead of one-off prompts, tool calls, retries, and long-running loops stop being infra trivia. They become product strategy.
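A back-of-the-envelope sketch shows why. Assume, purely for illustration, that each step of a workflow is one tool call with a fixed cost, and that a failed step is retried until it succeeds. The function name and every number below are hypothetical, not vendor pricing.

```python
def expected_workflow_cost(steps: int, cost_per_call: float, retry_rate: float) -> float:
    """Expected cost of one completed workflow.

    Assumes each step fails independently with probability `retry_rate`
    and is retried until it succeeds, so a step takes 1 / (1 - retry_rate)
    calls on average (geometric distribution).
    """
    if not 0 <= retry_rate < 1:
        raise ValueError("retry_rate must be in [0, 1)")
    expected_calls_per_step = 1 / (1 - retry_rate)
    return steps * cost_per_call * expected_calls_per_step


# Hypothetical inputs, for illustration only.
single_prompt = expected_workflow_cost(steps=1, cost_per_call=0.002, retry_rate=0.0)
long_workflow = expected_workflow_cost(steps=40, cost_per_call=0.002, retry_rate=0.2)
print(f"{long_workflow / single_prompt:.0f}x the cost of a single call")
```

With these made-up inputs, a 40-step workflow with a 20 percent retry rate costs about 50 times as much as a single call. The exact figures do not matter. What matters is that step count and retry rate multiply, and that is why they end up in product decisions.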

Meta is showing what permissioned action actually looks like

Meta's support and safety announcement matters for a different reason. It shows action inside a large consumer platform where real accounts and real trust boundaries already exist.

The new Meta AI support assistant is designed to resolve account problems from start to finish. Meta says it can answer questions, but also take actions directly within Facebook and later Instagram, including reporting scams or impersonation accounts, managing privacy settings, resetting passwords, and updating profile settings.

That is not open-ended autonomy. Good.

It is more interesting than open-ended autonomy, because it is permissioned action inside a tightly bounded domain with clear user value and obvious reasons to keep humans in the loop. Meta says more advanced AI systems are also catching severe violations, scams, and impersonation attempts with fewer mistakes, while people remain responsible for the highest-risk decisions such as critical appeals and law-enforcement reporting.

That is the near-term shape of the market. AI does more operational work, but the win depends on where the permission line sits and how clearly the human backstop is defined.

The real contest is over trustworthy action loops

Put those launches together and the market looks less like a simple model leaderboard and more like a stack race.

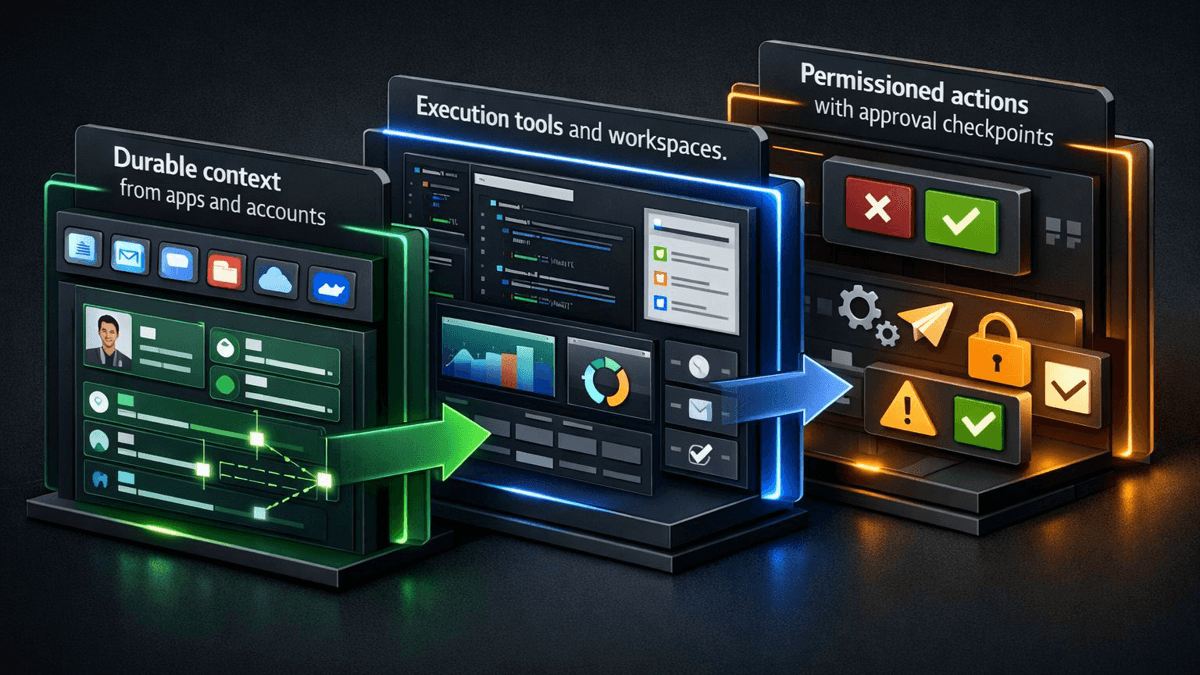

The winning products will need three things at the same time: durable context that makes the system relevant, execution surfaces that let it do work instead of merely suggesting work, and permission boundaries that make the action feel safe enough to trust.
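One way to picture those three layers working together is a small gate in front of every action. The sketch below is illustrative only: the `Agent` and `Action` names, the risk tiers, and the `approve` callback are all hypothetical and do not correspond to any vendor's API.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable


class Risk(Enum):
    LOW = 1     # e.g. reading context the user already connected
    MEDIUM = 2  # e.g. changing a setting; needs user approval
    HIGH = 3    # e.g. decisions reserved for a human reviewer


@dataclass
class Action:
    name: str
    risk: Risk
    run: Callable[[dict], str]  # the execution surface: does the work


@dataclass
class Agent:
    context: dict                      # durable context the user opted in to
    approve: Callable[[Action], bool]  # permission boundary: asks the user
    log: list = field(default_factory=list)

    def execute(self, action: Action) -> str:
        if action.risk is Risk.HIGH:
            # Highest-risk actions are never run by the agent itself.
            outcome = "escalated to human"
        elif action.risk is Risk.MEDIUM and not self.approve(action):
            outcome = "declined by user"
        else:
            outcome = action.run(self.context)
        self.log.append((action.name, outcome))  # audit trail of every attempt
        return outcome


if __name__ == "__main__":
    agent = Agent(context={"device": "example-phone"}, approve=lambda a: True)
    lookup = Action("lookup_device", Risk.LOW, lambda ctx: f"found {ctx['device']}")
    print(agent.execute(lookup))
```

The design choice worth noticing is that the permission check sits in the execution path rather than in the prompt. The model can propose anything, but only the gate decides what runs, what waits for approval, and what goes to a person.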

That is why answers are becoming the shallow layer. Helpful prose still matters. But prose alone is easier to commoditize than the surrounding loop of context, execution, and approval. A polished answer is nice. A system that actually finishes the task is nicer.

My read on where the moat is moving

This is the frame I would keep in mind: the moat is moving outward from the model toward the environment around it.

The product that wins will not be the one that simply sounds the smartest. It will be the one that can remember enough, act enough, and ask permission at the right moments without turning the whole experience into airport security.

The next wave of AI competition will not be decided by prettier answers. It will be decided by who can turn intelligence into dependable action without making the user flinch.

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Establishes Google's expansion of Personal Intelligence across AI Mode in Search, the Gemini app, and Gemini in Chrome, plus its emphasis on connected app context and user controls.

Shows OpenAI optimizing smaller models for tool use, subagents, coding workflows, computer use, and latency-sensitive production workloads.

Details the hosted shell, container workspace, networking controls, skills, and compaction primitives OpenAI is packaging around action-oriented agents.

Provides Meta's examples of AI taking bounded actions in support and safety workflows while keeping human oversight for the highest-risk decisions.

About the author

Talia Reed

Talia reports on product surfaces, developer tools, platform shifts, category shifts, and the distribution choices that determine whether AI features become durable workflows. She looks for the moment where a launch stops being a demo and becomes an ecosystem move.


Archive signal

Reporting lens: Distribution is usually the story hiding inside the launch. Signature: A feature matters when it changes someone else’s roadmap.

Article details

- Category: AI Products
- Last updated: April 11, 2026
- Public sources: 4 linked source notes
