Anthropic locks in Google TPU capacity with Broadcom

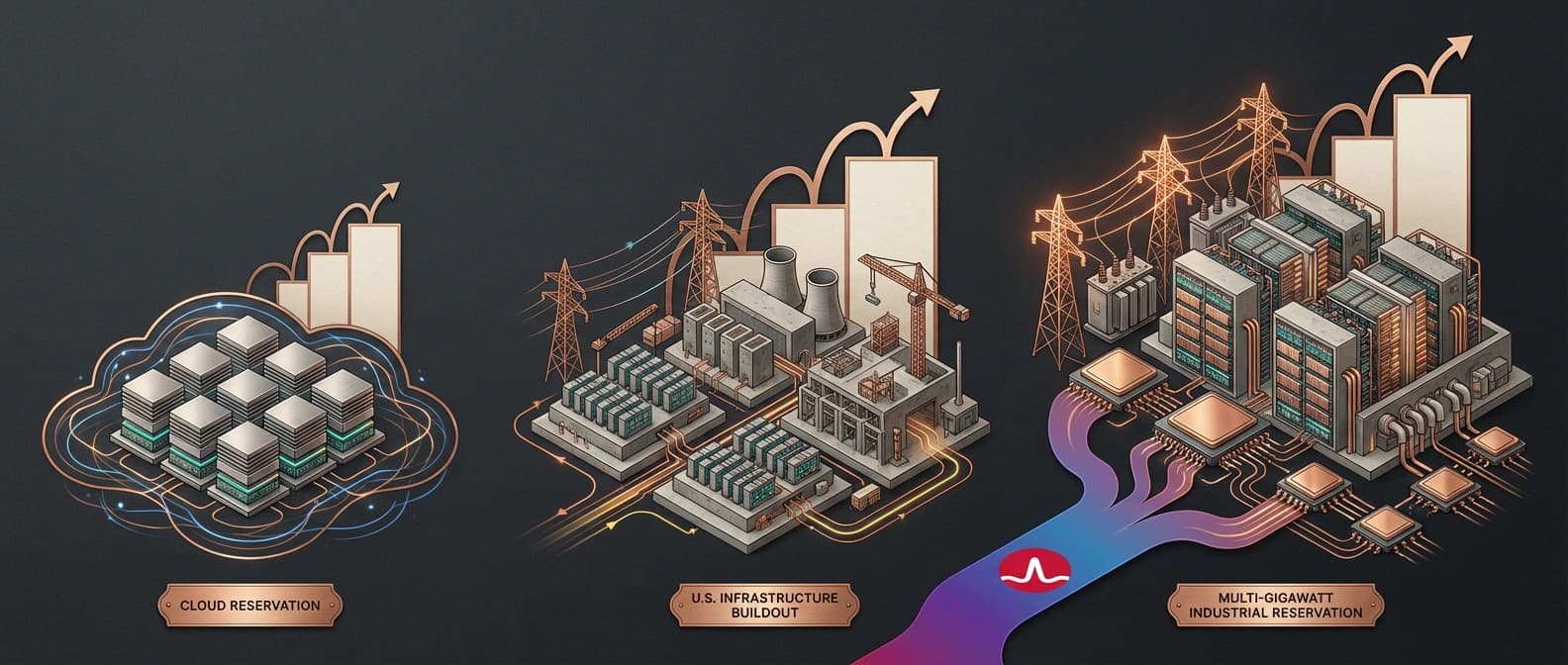

Anthropic's Google Cloud and Broadcom pact is a compute-capacity story, not a model launch. It shows frontier AI shifting toward power, silicon, and reserved compute.

Frontier AI now looks less like a software launch calendar and more like a queue for power, silicon, and construction slots.

Anthropic's new deal with Google Cloud and Broadcom is easy to misread if you skim it like a normal AI company announcement. There is no new public Claude model here. No benchmark chest-thumping. No carefully choreographed demo where a chatbot books a restaurant and then forgets your dietary restrictions.

What Anthropic actually announced on April 6 is more revealing than that. The company said it signed a new agreement with Google and Broadcom for multiple gigawatts of next-generation TPU capacity that it expects to start coming online in 2027. Most of that new compute will sit in the United States. Anthropic also went out of its way to say this is its largest compute commitment yet.

I keep coming back to that phrasing because it tells you where frontier competition is moving. The center of gravity is shifting away from who has the prettiest model launch this week and toward who can reserve power, chips, cloud fabric, and construction slots years ahead of demand. This is the point where AI starts sounding less like SaaS and more like a utility planning memo. Boring? A little. Important? Very much.

What Anthropic actually announced with Google and Broadcom

The cleanest way to read the news is as a step-up from Anthropic's October 2025 Google Cloud TPU expansion, not as a one-off surprise. Back then, Anthropic said it planned to expand its use of Google Cloud technologies, including up to one million TPUs, and expected well over one gigawatt of capacity to come online in 2026. The new agreement extends that trajectory into the next stage.

A quick snapshot helps separate confirmed facts from pickup reporting.

| Detail | Status | Source basis | Why it matters |
|---|---|---|---|
| Anthropic signed a new agreement with Google and Broadcom for multiple gigawatts of next-generation TPU capacity | Confirmed | Anthropic announcement | Locks the story into infrastructure, not product marketing |
| Capacity is expected to start coming online in 2027 | Confirmed | Anthropic announcement | This is future reserved capacity, not fully live supply today |
| Most of the new compute will be sited in the U.S. | Confirmed | Anthropic announcement | Ties the deal to domestic infrastructure and power buildout |
| Amazon remains Anthropic's primary cloud provider and training partner | Confirmed | Anthropic announcement | Important guardrail against reading the pact as exclusive |
| The new deal may amount to about 3.5 gigawatts | Pickup only | CNBC and TechCrunch citing Broadcom-linked filing detail | Big if accurate, but still not the number Anthropic itself published |

That last row matters because it changes the emotional scale of the story. Anthropic itself only says "multiple gigawatts." CNBC and TechCrunch, citing reporting around Broadcom's securities filing, point to roughly 3.5 gigawatts. That is plausible and consistent with the broader direction of travel, but it is still pickup territory. So I would write the scale this way: Anthropic confirmed a multi-gigawatt expansion, and outside reporting suggests the number may be around 3.5 gigawatts.

That distinction is not nitpicking. It is the difference between reporting the contract and freelancing the invoice.

What multiple gigawatts of compute actually means

Most AI coverage still treats compute as a vague cloud noun, which is convenient because the real numbers sound almost absurd. A gigawatt is not "a lot of GPUs." It is power-system language. When a company talks about compute in gigawatts, it is talking about data-center campuses, grid interconnection, long lead-time equipment, and a build schedule that has more in common with industrial infrastructure than app scaling.

Anthropic's own recent announcements make that shift explicit. In November 2025, it said it would invest $50 billion in American AI infrastructure with Fluidstack and described the challenge in terms of rapidly delivering gigawatts of power. In October 2025, it framed the Google Cloud TPU expansion as bringing well over one gigawatt online in 2026. Now the company is lining up the next multi-gigawatt layer for 2027 and beyond.

Short version: this is not spare capacity shopping. This is capacity reservation.
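For a rough sense of scale, the gigawatt figures can be turned into annual energy with one line of arithmetic. The sketch below is illustrative only: it assumes the quoted gigawatts refer to electrical power and that the capacity draws that power continuously, which makes the result an upper bound rather than a forecast. The function name `annual_energy_twh` and the `utilization` parameter are hypothetical, and the 3.5 figure is the pickup number from outside reporting, not one Anthropic published.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_twh(capacity_gw: float, utilization: float = 1.0) -> float:
    """Energy drawn over one year, in terawatt-hours, for a given power capacity.

    utilization is an assumed average fraction of full draw (1.0 = always at capacity).
    """
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    gwh = capacity_gw * utilization * HOURS_PER_YEAR
    return gwh / 1000.0  # convert GWh to TWh


if __name__ == "__main__":
    # 3.5 GW is the pickup-only figure; Anthropic itself says "multiple gigawatts".
    for label, gw in [("1 GW", 1.0), ("3.5 GW (pickup figure)", 3.5)]:
        print(f"{label}: up to {annual_energy_twh(gw):.2f} TWh per year")
```

At full, continuous draw, one gigawatt works out to 8.76 TWh a year. Real campuses will run below that ceiling, so treat the output as a scale check, not an estimate of actual consumption.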

A second table makes the progression easier to see.

| Timeframe | Anthropic's stated move | Infrastructure read |
|---|---|---|
| October 2025 | Expand Google Cloud use, including up to one million TPUs and well over one gigawatt in 2026 | Anthropic starts talking like a buyer of industrial-scale compute, not just cloud instances |
| November 2025 | Commit $50 billion to U.S. AI infrastructure with Fluidstack | Power delivery and siting become part of the company story |
| April 2026 | Sign new Google and Broadcom deal for multiple gigawatts starting in 2027 | Frontier AI supply is being locked in several years ahead |

That is why this announcement lands differently from a normal cloud partnership press release. It is part of a sequencing story. Anthropic is stacking infrastructure commitments across cloud, power, and dedicated buildout so Claude demand does not outrun physical supply.

If this sounds more like airline slot control than software product planning, yes. That is the point.

Why Google TPUs and Broadcom matter together

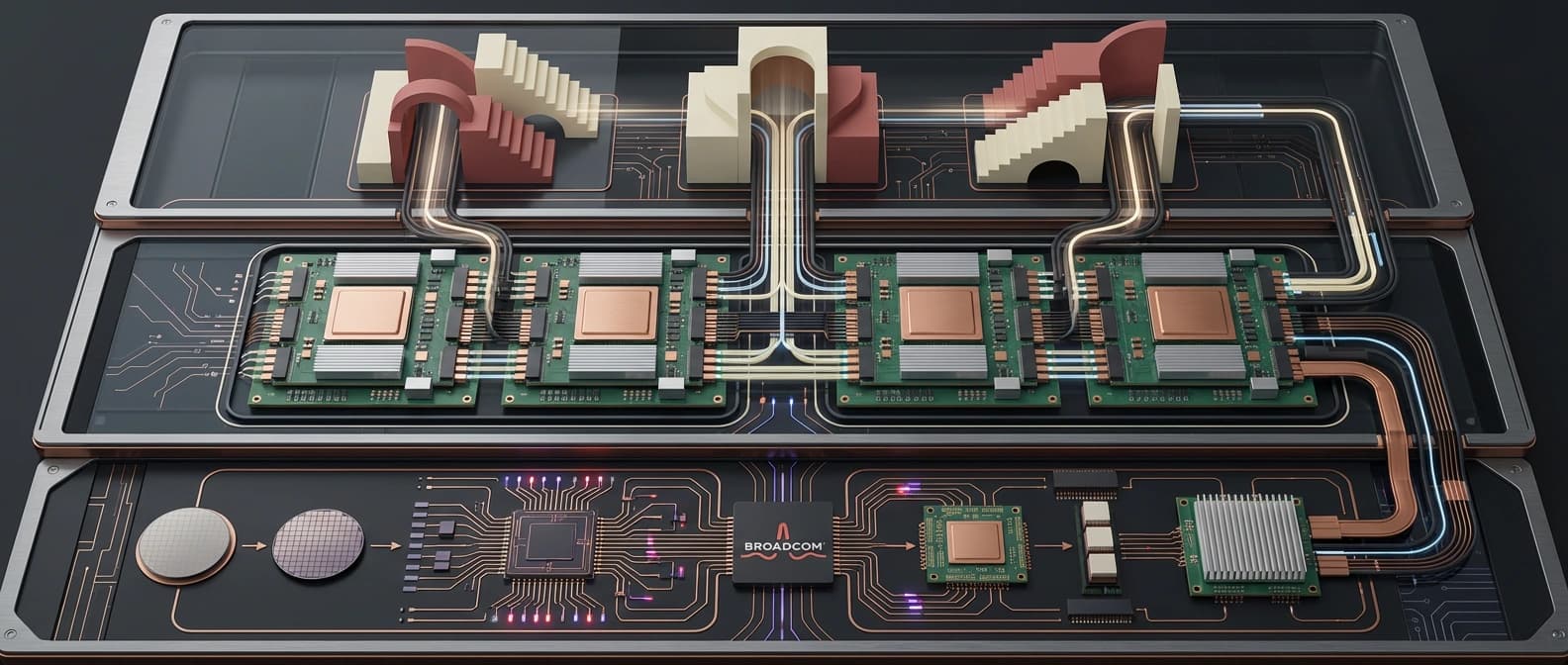

The interesting part of the deal is not just that Google Cloud is involved. It is that Google TPUs and Broadcom show up in the same sentence.

Anthropic already uses a mixed hardware strategy. In its April announcement, the company explicitly says it trains and runs Claude on AWS Trainium, Google TPUs, and NVIDIA GPUs. It also says Amazon remains its primary cloud provider and training partner, with Project Rainier still in play. That alone should stop anyone from turning this into a "Claude leaves AWS for Google" fairy tale.

What changes here is the depth of the Google lane. TPUs are not just rented compute the way a generic cloud VM is rented compute. They are a tightly integrated hardware-and-cloud stack that Google controls more directly. CNBC's reporting adds the crucial supply-chain detail: Broadcom helps Google make TPUs and has agreed to produce future versions of those AI chips for Google. So when Anthropic expands through Google TPUs with Broadcom in the middle, it is not only buying cloud access. It is aligning itself with a custom silicon pipeline.

That matters for three reasons.

First, it reduces the lazy habit of treating all frontier AI infrastructure as one big Nvidia-shaped blob. Nvidia still matters enormously, and Anthropic says outright that it continues to use Nvidia GPUs. But Google is trying to prove that its TPU stack can serve as more than an internal advantage. It wants TPUs to be a serious external platform for frontier labs. Broadcom's role strengthens that story because it links cloud access to silicon roadmap continuity.

Second, it gives Anthropic more bargaining power and workload flexibility. The company has spent months describing its compute strategy as diversified across three major chip platforms. That is not decorative wording. If one platform tightens, slips, or becomes too expensive for a certain class of workloads, Anthropic has more routing options. Our earlier piece on open-weight inference economics made a similar point at a smaller scale: the economics change when you have real routing choices instead of a single supplier dictating your reality.

Third, it tells us that custom silicon is no longer a side plot. We have already seen that in DeepSeek's Huawei chip stack story and in the increasingly specific optimization work around FlashAttention 4 and Blackwell economics. The frontier is not just model quality plus GPU count anymore. It is model quality plus silicon fit plus software fit plus power fit.

That is a more complicated story, but it is also the real one.

Why Anthropic is making this move now

The obvious answer is demand. Anthropic says its run-rate revenue has now surpassed $30 billion, up from about $9 billion at the end of 2025, and that the number of business customers spending more than $1 million annually has doubled from over 500 in February to more than 1,000 now. Those are company numbers, so they should be read as company numbers. Still, they explain the urgency.

Nobody announces a multi-gigawatt compute expansion because the dashboard looked slightly busy on Tuesday.

The deeper answer is that Anthropic is trying to keep three clocks aligned at once.

One clock is customer demand. Claude has to stay available and responsive for a much larger enterprise base.

Another clock is model development. Frontier labs cannot wait until demand fully arrives and then start shopping for power and chips. By then the lead times will own them.

The third clock is infrastructure politics. The April announcement stresses U.S. siting. The November infrastructure post stressed American jobs, domestic buildout, and rapid delivery of gigawatts. Anthropic's separate Australia MOU story showed the same pattern from another angle: compute is now wrapped up with geography, energy policy, and public legitimacy.

Put those together and the timing makes sense. Anthropic is not just scaling because Claude is popular. It is scaling because the companies that lock in industrial inputs early get to keep shipping while everyone else keeps refreshing lead-time spreadsheets and pretending that is a strategy.

Who this deal pressures across Nvidia and cloud rivals

This is where the broader market read gets interesting.

For Google Cloud, the announcement is a credibility gain. Anthropic is one of the few companies large enough for a multi-gigawatt TPU deal to mean something beyond marketing copy. If Google can turn TPUs into a long-horizon external platform for frontier labs, it becomes more than the company with smart in-house accelerators. It becomes a serious infrastructure landlord.

For Broadcom, the story matters even more, if more quietly. Broadcom does not need the public glamour here. It needs to be embedded where custom AI silicon gets planned, revised, and scaled. This deal reinforces that it is not just adjacent to AI demand. It is inside the supply chain that shapes it.

For Nvidia, the pressure is not that Anthropic is abandoning GPUs. It is that the infrastructure map is getting more plural. Frontier labs are still using Nvidia heavily, but the strategic goal is clearly broader than "buy more Nvidia and hope." Once Google TPUs, AWS Trainium, and custom-silicon partnerships start taking larger shares of serious workloads, Nvidia's position stays powerful but stops being the automatic default.

For Amazon and Microsoft, the pressure is different. Anthropic says Amazon remains its main cloud and training partner, and Claude remains available across Bedrock, Vertex AI, and Azure Foundry. So the real issue is not exclusivity. The issue is whether every major cloud now needs a more durable answer to the same customer question: can you guarantee frontier-scale capacity years out, or are you still basically selling access to an expensive queue?

That is also why this piece belongs next to our earlier coverage of OpenAI's infrastructure flywheel. Different company, same lesson. The strongest labs are trying to reserve the future before they need it.

The part I would not overread

A deal like this invites two bad takes.

The first bad take is that Anthropic has somehow picked one permanent winner. It has not. Anthropic itself says the opposite. AWS remains its primary training partner. Nvidia GPUs remain part of the stack. Claude is still distributed across the three largest clouds. This is expansion, not monogamy.

The second bad take is that all this capacity is suddenly online now. Also no. Anthropic says the new TPU capacity is expected to start coming online in 2027. That wording is future-facing for a reason. The contract matters now because the reservation matters now. The physical capacity matters later when the data centers, chips, and power show up in enough quantity to carry real workloads.

There is one more thing not to overread: cost claims. Anthropic has spent months emphasizing price-performance and efficiency advantages in parts of its TPU story, and those statements are useful as company framing. They are not the same thing as independent proof that the whole stack suddenly beats every alternative on total cost of ownership. Keep the press release and the spreadsheet in separate drawers.

The bottom line is simpler. Anthropic's Google Cloud and Broadcom pact shows how frontier AI is being reorganized around locked-in compute supply. The labs still care about models. Of course they do. But model quality is starting to look like the visible tip of a much larger industrial system underneath.

And that system runs on power, silicon, data centers, and time.


Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Primary source for the new agreement, the 2027 starting window, U.S. siting, revenue run-rate update, customer-growth figures, and Anthropic's non-exclusive multi-platform framing across AWS Trainium, Google TPUs, and NVIDIA GPUs.

Primary source for the October 2025 TPU expansion, the up-to-one-million-TPU framing, and Anthropic's statement that well over one gigawatt of capacity was expected in 2026.

Primary source for Anthropic's U.S. infrastructure buildout framing, Fluidstack partnership, and the company's argument that frontier growth is now tied to rapid delivery of gigawatts of power.

Secondary reporting used for the pickup-only 3.5 gigawatt detail, the linkage to Broadcom's securities filing, and the reminder that Broadcom helps Google build TPUs.

Secondary reporting used to confirm how outside coverage is interpreting the filing-backed capacity scale and the step-up from the October 2025 TPU expansion.

About the author

Idris Vale

Idris writes about the institutional machinery around AI, but the lens is broader than policy alone: procurement frameworks, public-sector buying rules, platform leverage, compliance burdens, workflow risk, and the market structure hiding beneath product or infrastructure headlines. The through-line is practical power, not abstract theater.


- Apr 10, 2026

- Brussels · London corridor

Archive signal

Reporting lens: Follow the buying process, not just the bill text. Signature: Policy turns real when someone has to buy the system.

Article details

- Category: AI Infrastructure

- Last updated: April 11, 2026

- Public sources: 5 linked source notes

Byline

Tracks the institutions, incentives, and market structure that quietly decide which AI systems get deployed and why.