Physical AI is leaving the lab, and NVIDIA wants in

NVIDIA's National Robotics Week roundup tied household-robotics research, a startup pipeline, and a solar-field deployment into one bid to own the platform layer under physical AI.

Physical AI starts to feel real when the proof points stop living on keynote stages and start showing up in kitchens, solar fields, and startup customer pipelines.

NVIDIA did not spend National Robotics Week trying to sell one magic robot. I think that is exactly why the story matters.

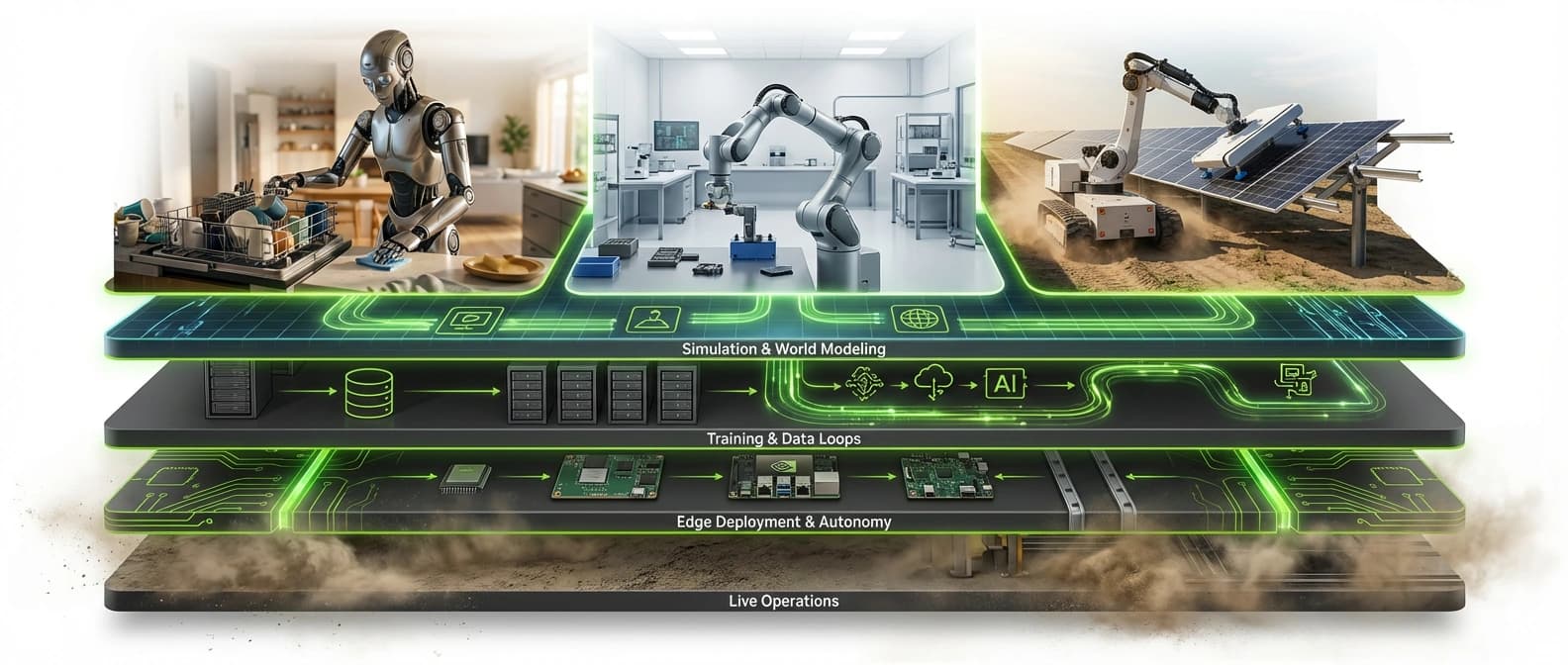

The company used its Apr. 2 roundup, later updated as the week rolled on, to do something more strategic. It lined up a household-robotics research push from the University of Maryland, a startup-commercialization signal from MassRobotics, and a field-deployment example from Maximo's solar-installation business, then wrapped the whole thing in NVIDIA's preferred stack language: simulation, synthetic data, accelerated compute, robot learning, and edge deployment.

On its face, that sounds tidy. Maybe too tidy. But the cluster is real.

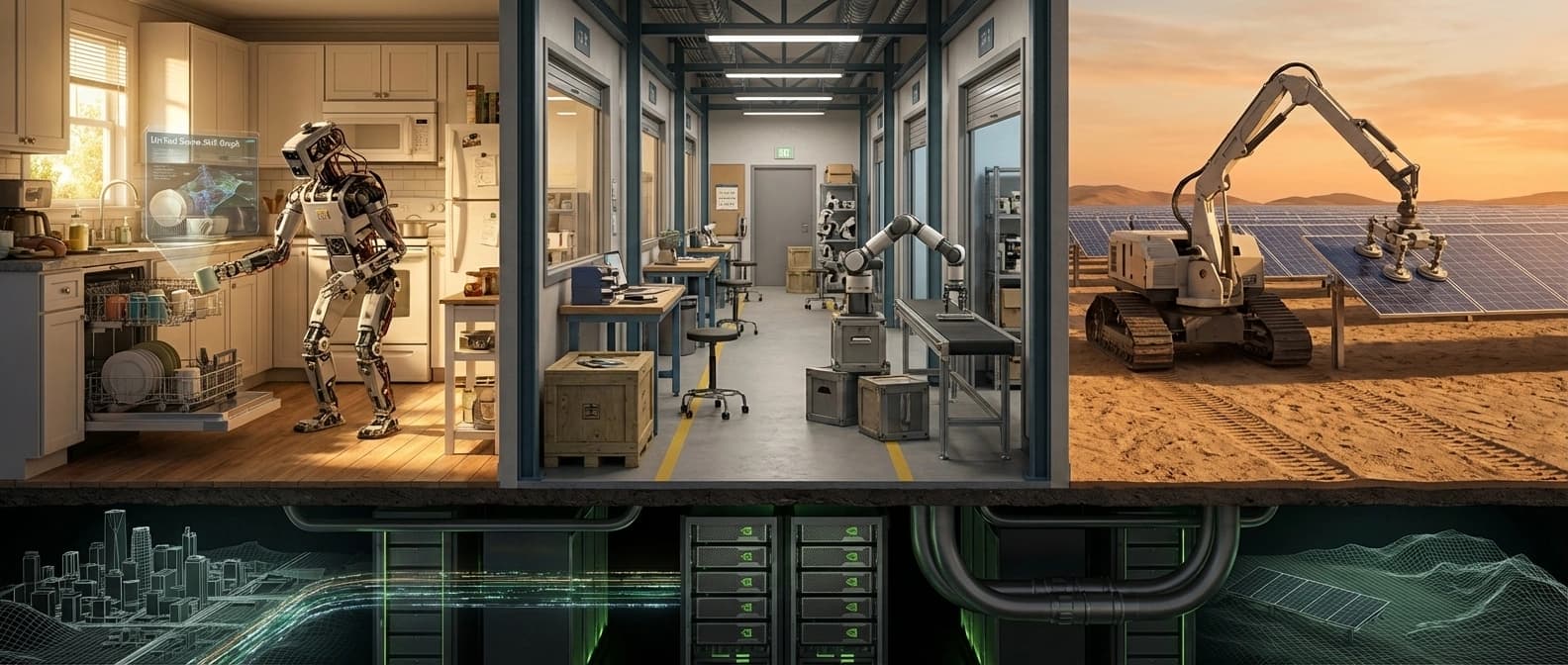

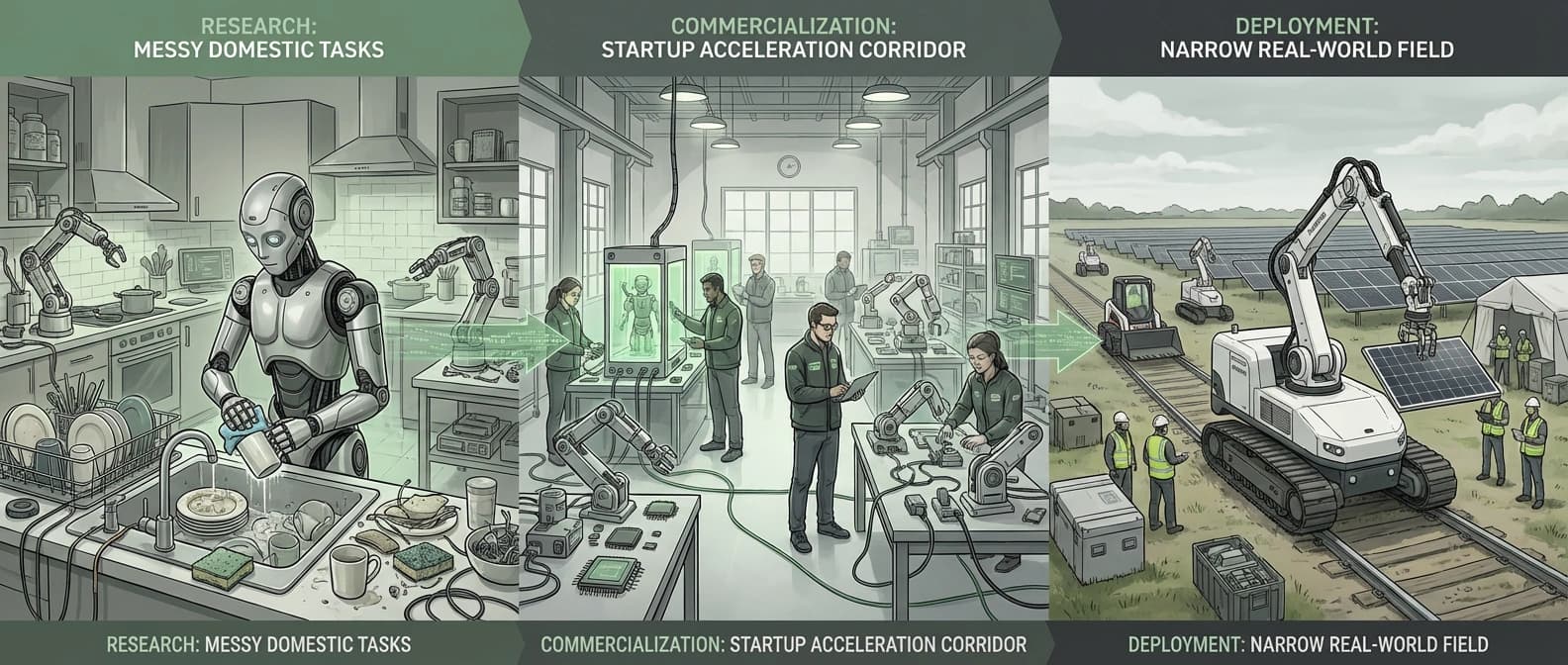

Physical AI has been one of those phrases that can mean almost anything, which is usually a sign that marketing got to the microphone first. This week felt a little different. Not because we suddenly got Rosie the Robot. We absolutely did not. The kitchen is still safe from a general-purpose home android with opinions about your dishwasher technique. What changed is that the proof points started landing in a more believable order: research first, commercialization support next, narrow deployment after that, platform owner everywhere in the background.

That is a much sturdier story than another stage-managed robot demo.

Why physical AI is back in the headlines right now

I keep coming back to the timing. None of these signals alone would have been enough. Together, they start to look like a category taking itself seriously.

NVIDIA's roundup matters less as a news event than as an editorial choice. The company could have spent the week flexing one internal demo. Instead, it curated evidence from university labs, startup programs, and field deployments that physical AI is moving from lab environments toward workflows with budgets, timetables, and customers. That is not the same thing as mass adoption, but it is a real step up from benchmark theater.

The University of Maryland update is the research anchor. The team says it is building robot foundation models that unify perception, planning, and control, alongside a HomeGraph framework for modeling cluttered domestic spaces and multistep tasks. That is still a research program. It is not a shopping page for a robot butler. Yet it addresses the right hard problem: homes are messy, ambiguous, and rude to brittle automation.

MassRobotics provides the commercialization layer. The second cohort of its Physical AI Fellowship, announced in March 2026 with AWS and NVIDIA, names nine startups spanning agriculture, manufacturing, solar, haptics, teleoperation, robotics-data infrastructure, and humanoids. That does not prove a mature market on its own. It does show there is now a recognizable support pipeline for companies trying to turn physical AI into something more durable than demo footage and optimistic fundraising decks.

Then there is Maximo, which is the most useful kind of deployment example: narrow, repetitive, expensive work in the real world. That is where robotics usually becomes believable. Not in a humanoid making coffee for a keynote audience, but in a solar field where somebody cares about throughput, safety, labor constraints, and whether the project actually finishes on time.

The physical AI signals that mattered this week

A quick map helps separate real movement from narrative seasoning:

| Signal | Source | Date | What changed | Why it matters |
|---|---|---|---|---|
| NVIDIA National Robotics Week roundup | NVIDIA Blog | Published Apr. 2, 2026, later updated during the week | NVIDIA packaged research, startup, and deployment examples into one physical-AI stack story | Shows NVIDIA is trying to own the category framing, not just supply chips |
| Household robotics research push | Maryland Today / UMD | Apr. 6, 2026 | UMD detailed a foundation-model program for complex household tasks using NVIDIA-backed simulation and compute | Gives the category a credible research proof point without pretending home robots are product-ready |
| Physical AI Fellowship cohort 2 | MassRobotics, AWS, NVIDIA | Mar. 12, 2026 | Nine startups were selected for technical support, credits, training, and go-to-market visibility | Suggests a real commercialization pipeline is forming around robotics startups |
| Solar-installation robot deployment claims | Maximo official site plus NVIDIA roundup | Official site (current); NVIDIA roundup, Apr. 2, 2026 | Maximo is framed as a live field system for faster solar installation rather than a lab concept | Points to the kind of narrow, revenue-linked deployment where physical AI can become boring enough to be real |

The pattern is what matters. Research. Startup enablement. Narrow deployment. Platform integration. That looks a lot more like a market category than a one-off spectacle.

Household robot AI is getting more credible, but not market-ready

The UMD story is probably the easiest one to overread, so it is worth slowing down.

The researchers are not saying they solved general household robotics. They are saying they are building the ingredients that might make that problem less impossible: foundation models for robotics, better scene understanding, better long-horizon planning, large-scale simulation, and natural-language instruction following. Their proposed HomeGraph framework is especially interesting because it tries to encode the relationships that make homes annoying for robots, things like what is inside what, what sits next to what, and what counts as a tool versus an obstacle.
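To make the HomeGraph idea a little more concrete, here is a minimal, hypothetical sketch of a household scene graph. It is not UMD's HomeGraph code: the `SceneGraph` class, the relation names (`inside`, `on_top_of`, `next_to`), and the `role` labels are illustrative assumptions. It only shows why encoding containment and roles helps a planner answer questions like "where is the bowl, and what is in the way?"

```python
from dataclasses import dataclass, field


@dataclass
class SceneGraph:
    # Hypothetical sketch: nodes are objects, edges are typed spatial relations.
    roles: dict = field(default_factory=dict)    # object -> "tool" | "obstacle" | "target" | "container"
    relations: set = field(default_factory=set)  # (subject, relation, object) triples

    def add(self, subject: str, relation: str, obj: str) -> None:
        self.relations.add((subject, relation, obj))

    def containers_of(self, item: str) -> list:
        """Follow 'inside' edges outward, e.g. bowl -> sink -> kitchen."""
        chain = []
        current = item
        while True:
            parents = [o for (s, r, o) in self.relations if s == current and r == "inside"]
            # Stop at the outermost container, or if bad data creates a cycle.
            if not parents or parents[0] in chain:
                return chain
            current = parents[0]
            chain.append(current)

    def blockers(self, item: str) -> list:
        """Objects labeled as obstacles that sit on top of or next to the item."""
        return sorted(
            s for (s, r, o) in self.relations
            if o == item and r in ("on_top_of", "next_to") and self.roles.get(s) == "obstacle"
        )


if __name__ == "__main__":
    g = SceneGraph()
    g.roles.update({"bowl": "target", "towel": "obstacle", "sponge": "tool", "sink": "container"})
    g.add("bowl", "inside", "sink")
    g.add("sink", "inside", "kitchen")
    g.add("towel", "on_top_of", "bowl")
    g.add("sponge", "next_to", "bowl")
    print(g.containers_of("bowl"))  # ['sink', 'kitchen']
    print(g.blockers("bowl"))       # ['towel']
```

The point of the role labels is the tool-versus-obstacle distinction the researchers describe: the sponge next to the bowl is something to use, while the towel on top of it is something to move first.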

That matters because domestic environments are basically a stress test for every weak spot in robotics. Lighting changes. Objects are partially hidden. People interrupt. The bowl is in the sink until it is not. A robot that looks smart in a neatly staged lab can fall apart the moment a towel is draped over the wrong chair.

UMD's project uses NVIDIA Isaac to generate photorealistic virtual home environments so robots can practice millions of task variations. That is a big part of the physical-AI pitch. If generative AI needed giant internet-scale corpora, physical AI needs giant world-scale practice grounds, many of them synthetic, because real-world data collection is painfully slow and occasionally destructive.
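One common way to get that kind of variety out of a simulator is domain randomization: each training episode samples a different lighting level, clutter layout, and object placement so a policy cannot overfit to one neatly staged room. The sketch below is a hypothetical, library-free illustration of that sampling step. It does not use Isaac Sim's API, and the `EpisodeConfig` fields, room names, and value ranges are assumptions chosen for readability.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class EpisodeConfig:
    # Hypothetical knobs; real simulators expose far richer scene parameters.
    light_intensity: float  # relative brightness, 0.2 (dim) to 1.5 (bright)
    clutter_count: int      # number of distractor objects scattered in the scene
    target_room: str        # room where the task object starts
    target_occluded: bool   # whether something partially hides the target


ROOMS = ("kitchen", "living_room", "bathroom", "bedroom")


def sample_episode(rng: random.Random) -> EpisodeConfig:
    """Draw one randomized scene configuration for a training episode."""
    return EpisodeConfig(
        light_intensity=round(rng.uniform(0.2, 1.5), 2),
        clutter_count=rng.randint(0, 30),
        target_room=rng.choice(ROOMS),
        target_occluded=rng.random() < 0.3,  # assume roughly 30% of episodes hide the target
    )


def sample_batch(n: int, seed: int = 0) -> list:
    """Seeded so any failing episode can be regenerated exactly for debugging."""
    rng = random.Random(seed)
    return [sample_episode(rng) for _ in range(n)]


if __name__ == "__main__":
    batch = sample_batch(10_000, seed=42)
    occluded = sum(cfg.target_occluded for cfg in batch)
    print(f"{len(batch)} episodes, {occluded} with an occluded target")
```

In a real pipeline each sampled config would be handed to the simulator to build the scene; that step is left out here because it depends entirely on the simulator's own interface.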

Still, I would keep the brakes on. A university announcement about household autonomy is not a sign that general-purpose home robots are around the corner. It is a sign that the tooling stack is getting serious enough for reputable labs to take the problem apart in more systematic ways. That is progress. It is not a checkout button.

Maximo shows why physical AI will probably go narrow before it goes broad

If UMD is the long-range research signal, Maximo is the near-term business signal.

Maximo, incubated within AES and marketed through Maximo Robotics, focuses on solar installation. That niche matters. Solar projects have heavy repetitive tasks, tight schedules, labor constraints, harsh field conditions, and a clean economic reason to care about speed. In other words, they have almost none of the ambiguity that makes a household robot cry in the shower. Fitting a system to a well-defined deployment envelope is also a recurring theme on the edge side of AI infrastructure, including in our Intel Arc Pro B70 analysis.

The official Maximo site says the service can install modules twice as fast as standard processes, operate over extended shifts, and work alongside construction crews. The site also pitches Maximo and Max IQ as an end-to-end service for mechanical solar module installation. NVIDIA's roundup goes further, saying Maximo recently completed a 100-megawatt solar installation using its robot fleet and highlighting its use of NVIDIA accelerated computing, Omniverse libraries, and Isaac Sim.

Here, I would be careful. The exact megawatt totals and deployment figures vary across official and affiliated pages, so the cleanest claim is not "this number is settled." It is that Maximo is now being marketed as a live utility-scale field system, not as a concept render with good lighting.

That distinction matters more than people think. Physical AI will not become real because a robot can do everything. It will become real because enough robots can do one costly thing well enough, often enough, for somebody to sign a budget. Solar installation is exactly that kind of job. It is less glamorous than a household humanoid folding laundry, but glamour has never paid for a construction schedule.

MassRobotics shows the commercialization pipeline is thickening

The MassRobotics fellowship announcement is easy to dismiss as ecosystem PR. It is also useful PR, which is rarer than it should be.

The second cohort is not just one startup with a cute robot and a brave founder on LinkedIn. It is a spread of companies working on agricultural robots, manufacturing intelligence, teleoperation, haptic interfaces, robotics-data infrastructure, solar-robotics support, and humanoid or wearable systems. That mix tells you something about where physical AI is maturing.

It is not maturing as one product category. It is maturing as a tooling and deployment layer that can show up across several verticals at once.

MassRobotics, AWS, and NVIDIA are explicit about the model: give startups technical guidance, cloud credits, NVIDIA training and tooling, and access to a broader robotics ecosystem, then help them move from promising prototypes to enterprise-grade deployments. That last phrase is the whole ballgame. Enterprise-grade deployments. Not viral demos. Not cute GIFs. Not another biped clip set to cinematic music while everyone politely ignores the safety tether.

The startups in the cohort are also revealing. Luminous Robotics is pointed directly at solar installation. Roboto AI is focused on analytics for multimodal robotics logs. Config is building data infrastructure and foundation models for bimanual tasks. Telexistence talks about physical AI and autonomous robots in commercial-scale retail production. This is what market formation looks like when it leaves the pitch deck and starts filling in the supply chain.

Why NVIDIA wants to be the platform under physical AI

This is the part that makes the story fit AI News Silo's infrastructure beat instead of a generic robotics column.

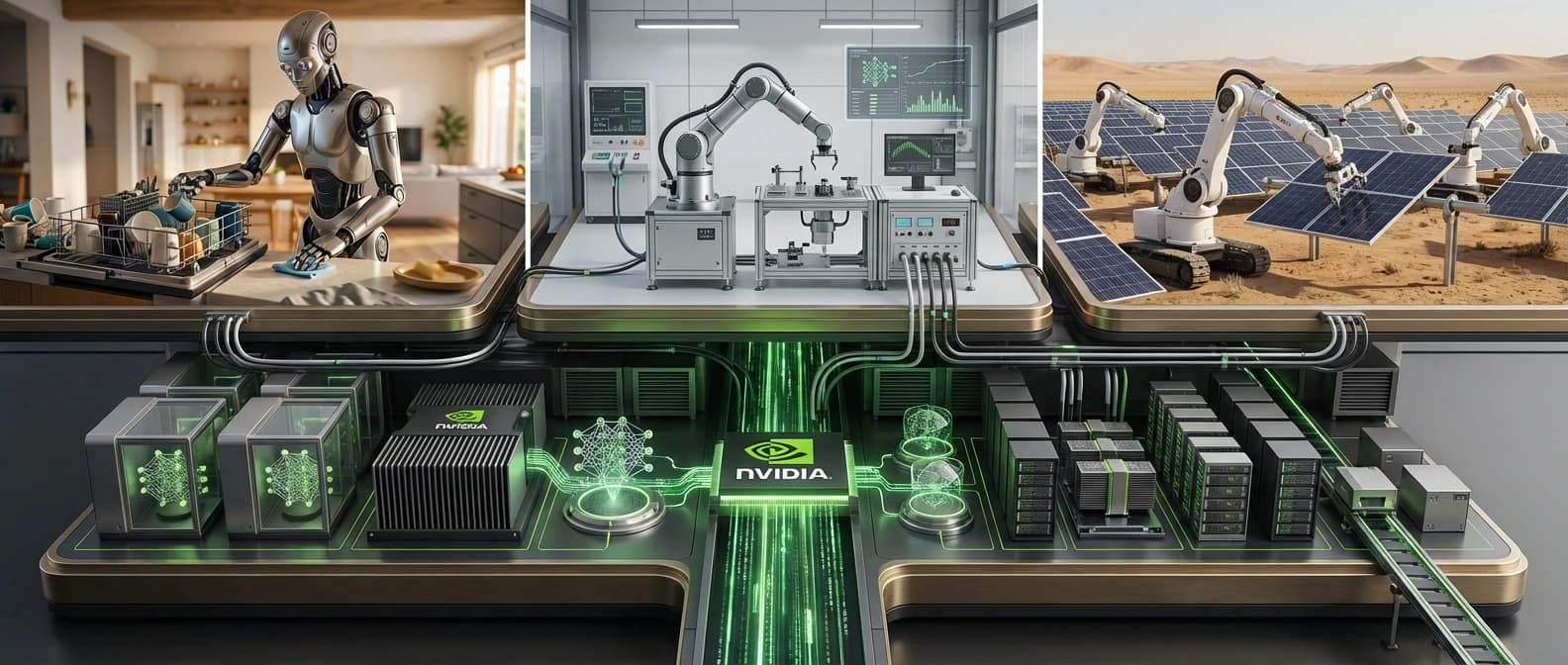

NVIDIA is trying to do for physical AI what it already did for generative AI: become the layer everyone else builds on, even when the applications look wildly different on the surface.

The components are familiar by now. Isaac and Isaac Sim handle development and simulation. Omniverse libraries help with digital-world modeling and synthetic environments. RTX PRO 6000 Blackwell GPUs show up for large-model training. Jetson AGX Thor and related edge hardware show up for deployment on real machines. Cosmos and other world-model assets expand the training-data story. Even when the robot maker gets the spotlight, NVIDIA wants to be the thing underneath the spotlight rig.

That is a smart strategy because robotics is messy. No single robot category is guaranteed to dominate. Humanoids might stay niche for longer than investors want. Agricultural robotics could fragment by crop. Industrial autonomy could split across vision, teleoperation, and task-specific hardware. The platform vendor does not need to win every end application. It needs enough of the stack to rent itself to all of them.

That logic rhymes with what we have already seen in adjacent infrastructure stories on this site. In our Dynamo piece, the company's real move was not just faster inference, but the orchestration layer above the engines. In our AI grids piece, the move was to turn distributed telecom infrastructure into inference capacity.

A similar platform-under-applications instinct shows up in Meta's custom silicon push. Physical AI is that instinct in a different costume. Own the coordination layer, the simulation layer, the hardware layer, and the training loop, then let everyone else argue about which robot shape wins.

It also helps explain why NVIDIA is leaning so hard into category framing. If physical AI becomes a widely used term for robots that learn, perceive, plan, and act in real environments, NVIDIA wants that phrase to quietly imply its own tooling stack. That is branding, yes. It is also channel strategy.

The part I would not shrug off

I do not think physical AI has suddenly crossed into everyday normalcy. The home-robotics side is still research-heavy. Many startup claims are still early. Some deployment numbers, especially around Maximo, need careful attribution. And NVIDIA is obviously curating this story to flatter its own position.

But the underlying shift looks real.

The category is starting to produce the kind of evidence that matters more than a single viral clip. There is serious academic work on hard environments. There is commercialization infrastructure around startups. There are narrow field deployments tied to revenue and labor constraints. And there is now a major platform company trying to connect all of those dots into one stack narrative.

That is when a market starts to leave the demo stage. Not when everything is solved, but when the evidence gets boring in the best possible way. Budgets. Toolchains. Deployment stories. Customer pipelines. The plumbing.

Physical AI is still early. It is also, for the first time in a while, early in a way that feels organized.

Source file

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Central source for NVIDIA's April 2026 aggregation strategy, plus the Maximo, UMD, and MassRobotics proof points it chose to emphasize.

Best source for the household-robotics research details, including HomeGraph, foundation-model framing, simulation plans, and the fact that this remains a research program rather than a market-ready home robot.

Most concrete commercialization signal in the source pack, naming the startups, support structure, and enterprise-deployment framing around the 2026 cohort.

Program page used to confirm the fellowship's role as an ongoing ecosystem-building mechanism, not a one-off press blast.

Official product site used for live deployment language, 2x-speed marketing, and current positioning of Maximo as a solar-installation service working alongside human crews.

Parent-company product page used to cross-check Maximo's official claims and keep field-deployment language tied to company wording.

About the author

Idris Vale

Idris writes about the institutional machinery around AI, but the lens is broader than policy alone: procurement frameworks, public-sector buying rules, platform leverage, compliance burdens, workflow risk, and the market structure hiding beneath product or infrastructure headlines. The through-line is practical power, not abstract theater.

- Apr 10, 2026

- Brussels · London corridor

Archive signal

Reporting lens: Follow the buying process, not just the bill text. Signature: Policy turns real when someone has to buy the system.

Article details

- Category: AI Infrastructure

- Last updated: April 11, 2026

- Public sources: 6 linked source notes

Byline

Tracks the institutions, incentives, and market structure that quietly decide which AI systems get deployed and why.