NVIDIA AI grids turn telcos into inference resellers

NVIDIA's AI grid pitch is a bet that telecom networks can sell distributed inference, but only if operators package it as a product instead of a committee project.

The pitch only works if telcos sell a usable inference product, not prettier rack talk.

Most GTC infrastructure stories eventually collapse into the same template: bigger racks, faster chips, louder slogans, and somebody trying to say "AI factory" like they absolutely mean it. The more interesting telecom story is narrower.

NVIDIA wants telecom operators to do more than carry AI traffic. It wants them to host inference and sell it.

That is the real point of the company's AI-grid push. In NVIDIA's framing, operators become geographically distributed AI infrastructure providers that can run workloads closer to users, devices, and data. Strip away the keynote polish and the pitch is pretty direct: your network footprint could become a compute product.

Why NVIDIA’s telecom AI grid pitch is really about inference sales

"Edge AI" has been a fuzzy promise for years. AI grids make a tighter claim. They assume there is now a meaningful class of latency-sensitive, locality-sensitive, or regulation-sensitive workloads that should run nearer to where the data lives.

That class of workloads is real enough. Vision systems, industrial monitoring, transportation software, real-time translation, and physical AI applications all get clumsier when every request has to do a full road trip back to a distant cloud region. If the app needs a fast answer, shipping the job across the country starts to feel like taking the highway to visit the neighbor next door.
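
To put rough numbers on that road trip, here is a minimal Python sketch of fiber propagation delay alone. It assumes light travels through optical fiber at about two-thirds of its vacuum speed, roughly 200 km per millisecond, and the route lengths are hypothetical. Real paths add routing detours, queuing, and model compute time on top, so treat these figures as a floor rather than a forecast.

```python
# Assumption: light in optical fiber covers roughly 200 km per millisecond
# (about two-thirds of its speed in a vacuum).
FIBER_KM_PER_MS = 200.0


def propagation_rtt_ms(route_km: float) -> float:
    """Round-trip fiber propagation delay only.

    Ignores routing detours, queuing, serialization, and inference compute time.
    """
    return 2 * route_km / FIBER_KM_PER_MS


# Hypothetical route lengths, chosen for illustration only.
for label, km in [("metro edge site", 50), ("regional cloud", 800), ("cross-country region", 4000)]:
    print(f"{label:>22}: {propagation_rtt_ms(km):5.1f} ms round trip before any compute")
```

Even in this idealized model, a cross-country hop spends about 40 ms just moving bits, while a metro site spends well under a millisecond. That gap is the locality argument in its simplest form.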

AT&T's announcement with Cisco and NVIDIA leans into exactly that logic: localized compute, zero-trust security, and inference near where data is generated. Cisco's companion post spells out the commercial angle even more plainly: service-provider networks are being positioned as a real-time AI execution fabric, not just transport.

That matters because it gives operators a workload somebody might actually pay for, not just another edge-computing theory deck.

AI-RAN is the bridge from towers to AI capacity

The acronym doing the heavy lifting here is AI-RAN. NVIDIA's AI-RAN definition frames it as combining AI workloads with the radio access network so operators can improve performance and unlock new monetization paths.

The strategic move is not the acronym itself. It is the idea of a shared accelerated platform that can support both network functions and AI work. If that works, the telco footprint stops looking like a pile of single-purpose spend and starts looking more like a distributed cloud with stronger locality advantages.

The T-Mobile physical-AI example is useful because it is not sold as a prettier radio demo. It is sold as a way to host vision and robotics-adjacent workloads closer to the environments where they operate. The SoftBank announcement pushes the same idea further by describing AI and 5G workloads running concurrently and external inference jobs being dispatched when spare capacity exists.
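
The spare-capacity idea can be sketched as a simple admission rule. This is a hypothetical illustration, not SoftBank's or NVIDIA's actual scheduler: the capacity "units", the safety reserve, the `InferenceJob` type, and the `dispatch_spare_capacity` function are all assumptions made for the example. Radio work keeps priority, and queued external inference jobs are admitted only into whatever headroom remains.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class InferenceJob:
    name: str
    units: int  # hypothetical accelerator capacity units the job needs


def dispatch_spare_capacity(total_units: int, ran_units: int,
                            reserve_units: int, pending: deque) -> list[str]:
    """Admit queued external inference jobs only into capacity the RAN is not using.

    RAN work always has priority; a reserve is held back so a traffic spike
    does not immediately collide with admitted inference jobs.
    """
    spare = total_units - ran_units - reserve_units
    admitted = []
    # Single first-fit pass in queue order; jobs that do not fit are
    # re-queued, keeping their relative order for the next pass.
    for _ in range(len(pending)):
        job = pending.popleft()
        if job.units <= spare:
            spare -= job.units
            admitted.append(job.name)
        else:
            pending.append(job)
    return admitted


# Hypothetical node: 10 units total, 4 busy with RAN work, 1 held in reserve.
jobs = deque([InferenceJob("vision", 3), InferenceJob("translation", 2), InferenceJob("batch-embed", 4)])
print(dispatch_spare_capacity(total_units=10, ran_units=4, reserve_units=1, pending=jobs))
# → ['vision', 'translation']
```

Here the first two jobs fit into the 5 spare units and `batch-embed` waits for the next pass. A real deployment would also need preemption for when RAN load rises after jobs are admitted, which this sketch leaves out.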

That is the dream in one sentence: use existing network-adjacent infrastructure to sell inference in places where the central cloud is not the best answer.

Why this edge pitch sounds more credible now

Earlier edge waves often failed because the workload story was fuzzy. Telcos had sites, power, and network reach, but not a clean answer to the question every buyer asks: yes, but what do I run there?

AI helps because the unit of value is easier to explain. A buyer can understand lower latency, local execution, data-residency benefits, and better physical-world responsiveness. And because operators already manage broad geographic footprints, they can plausibly claim an advantage when locality matters.

This is where the story overlaps with our earlier piece on open-weight inference economics. As model serving gets more flexible, placement starts to matter more. Some workloads belong in giant centralized regions. Some really do not.

The obvious problem is product friction

Here is the hard part: telcos are not the default AI buying channel.

Even if the infrastructure works, developers may still prefer the cleanest API, the easiest toolchain, and the most familiar deployment path. That is the same workflow-gravity problem I pointed to in the OpenAI platform analysis. The stack that is easiest to build on usually captures more demand than the one with the noblest architecture.

So for telecom AI grids to matter, operators need to sell more than rack space with a fresh name badge. They need packaging, APIs, support, and pricing that feel like software infrastructure, not a telecom procurement exercise that requires three steering committees and a ceremonial PDF.

Utilization is the brutal test underneath the stage show

There is also a very ordinary economic question hiding under all the strategy talk: can operators keep this capacity busy?

Distributed inference sounds great until the hardware sits around admiring the weather. Centralized cloud regions still win on utilization smoothing, ecosystem familiarity, and sheer buyer habit. A lot of workloads do not care enough about locality to justify moving out toward the network edge.
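
The utilization problem is mostly arithmetic. In a simplified model where an accelerator's hourly cost is fixed whether or not it is busy, the effective cost of each hour actually sold is the hourly cost divided by utilization. The sketch below uses a made-up $2.00 hourly figure purely for illustration; it is not a real telco or cloud price, and the fixed-cost assumption ignores that idle power draw is lower than busy power draw.

```python
def cost_per_busy_hour(hourly_cost: float, utilization: float) -> float:
    """Effective cost of each accelerator hour that is actually sold.

    Simplifying assumption: the hourly cost (depreciation, site rent, idle
    power) is incurred whether or not the hardware is busy, so it is spread
    over only the busy fraction of hours.
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return hourly_cost / utilization


# Hypothetical figure for illustration only; not a real price.
HOURLY_COST = 2.00
for util in (0.25, 0.50, 0.80):
    print(f"utilization {util:.0%}: ${cost_per_busy_hour(HOURLY_COST, util):.2f} per busy hour")
```

Halving utilization doubles the effective cost of every sold hour. That is why smoother demand in centralized regions is a structural advantage, and why edge capacity needs workloads that keep it busy.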

That means telco AI grids do not need to replace hyperscalers to matter. They only need to win the workloads where locality, regulation, bundled connectivity, or physical-world response times change the buying decision in a real way.

What I want to see after the keynote cycle

The next proof points should be practical. Can a developer deploy onto this infrastructure without a heroic integration project? Can an operator offer a regional inference product with pricing and support that look sane to software buyers? Can the footprint produce better economics for a specific workload, not just a prettier slide?

Those are the questions that will matter in the AI Infrastructure category. If NVIDIA and its partners can answer them, telecom AI grids could become a meaningful second lane for distributed inference. If they cannot, this will join the large museum of edge-computing ideas that sounded inevitable on stage and awkward everywhere else.

That is why I think the telecom story matters. NVIDIA is not merely pitching faster networks. It is trying to give operators a new job in the AI stack: inference resellers with locality as the sales hook. The market will judge that idea by the product, not the slogan.

Source file

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Core framing for AI grids as geographically distributed infrastructure that operators can monetize with inference workloads.

Useful definition of AI-RAN and the shared infrastructure logic behind the telecom pitch.

Supports the claim that the target workload is physical AI and edge inference near devices and sensors.

Helps ground the concurrent AI-plus-5G capacity story and the idea of dispatching inference jobs when excess capacity exists.

Supports the operator-side sell around localized compute, security, and real-time inference where data is generated.

Helpful for the market framing around service-provider monetization and the shift from pilot to production at the network edge.

About the author

Lena Ortiz

Lena tracks the economics and mechanics behind AI systems, from serving architecture and open-weight deployment to developer tooling, platform shifts, product decisions, and the operational tradeoffs that shape what teams actually run. Her reporting is aimed at builders and operators deciding what to trust, adopt, and maintain.

- Apr 10, 2026

- Berlin

Archive signal

Reporting lens: Operating leverage beats ideological posturing. Signature: If the cost curve moves, the product strategy moves with it.

Article details

- Category: AI Infrastructure

- Last updated: April 11, 2026

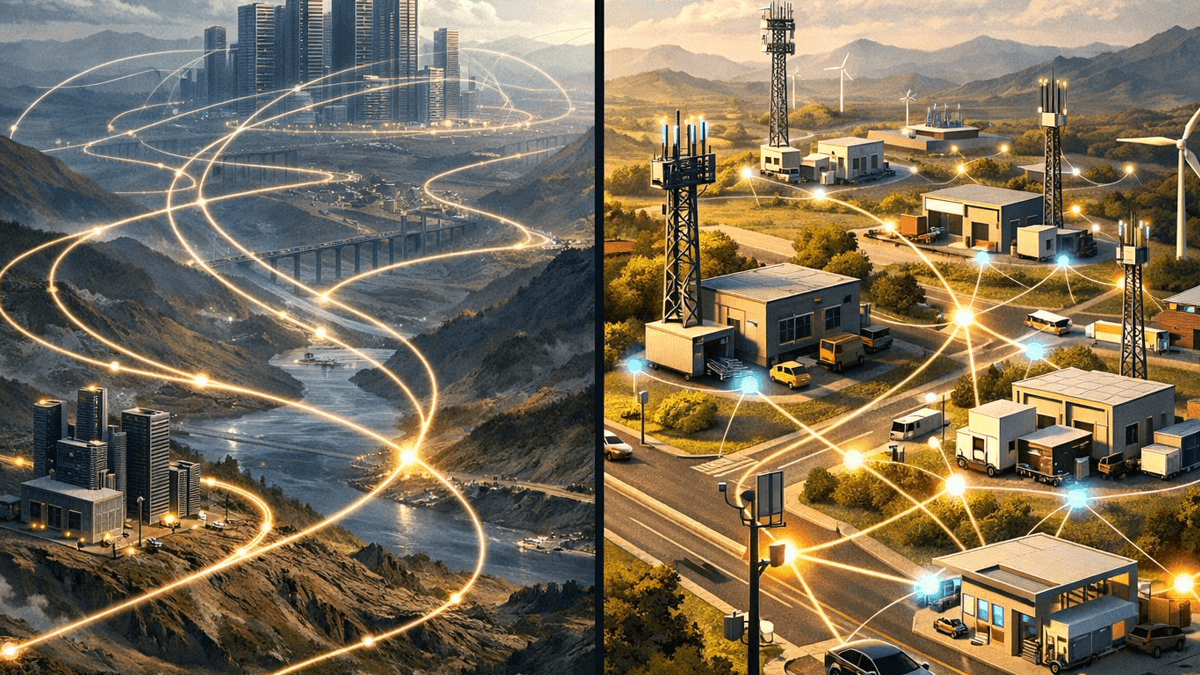

- Lead illustration: The AI-grid pitch is really a plan to turn the telecom footprint into sellable inference capacity.

- Public sources: 6 linked source notes

Byline

Covers the economics, tooling, and operating realities that shape how AI gets built, shipped, and run.