Meta's $21B CoreWeave deal runs through 2032

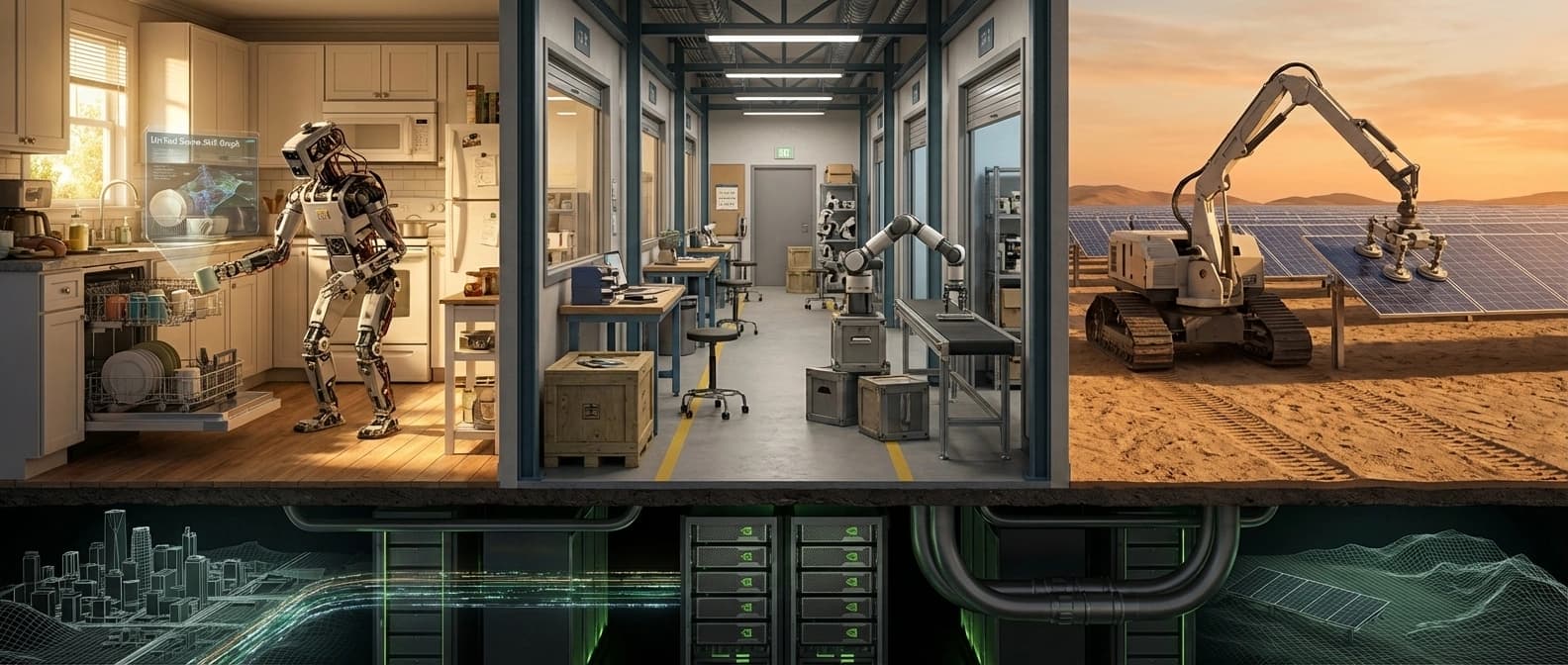

CoreWeave's filing-backed Meta agreement runs into the Vera Rubin era, a reminder that custom silicon has not made Meta independent of giant external AI cloud buys.

Custom silicon can buy leverage. It does not let you throw the outside cloud bill into the sea.

Meta's new CoreWeave agreement is big enough to invite the laziest possible read. Meta builds its own silicon. Meta has giant capital budgets. Meta can obviously outgrow outside AI cloud providers whenever it feels like it.

The filing-backed record says otherwise.

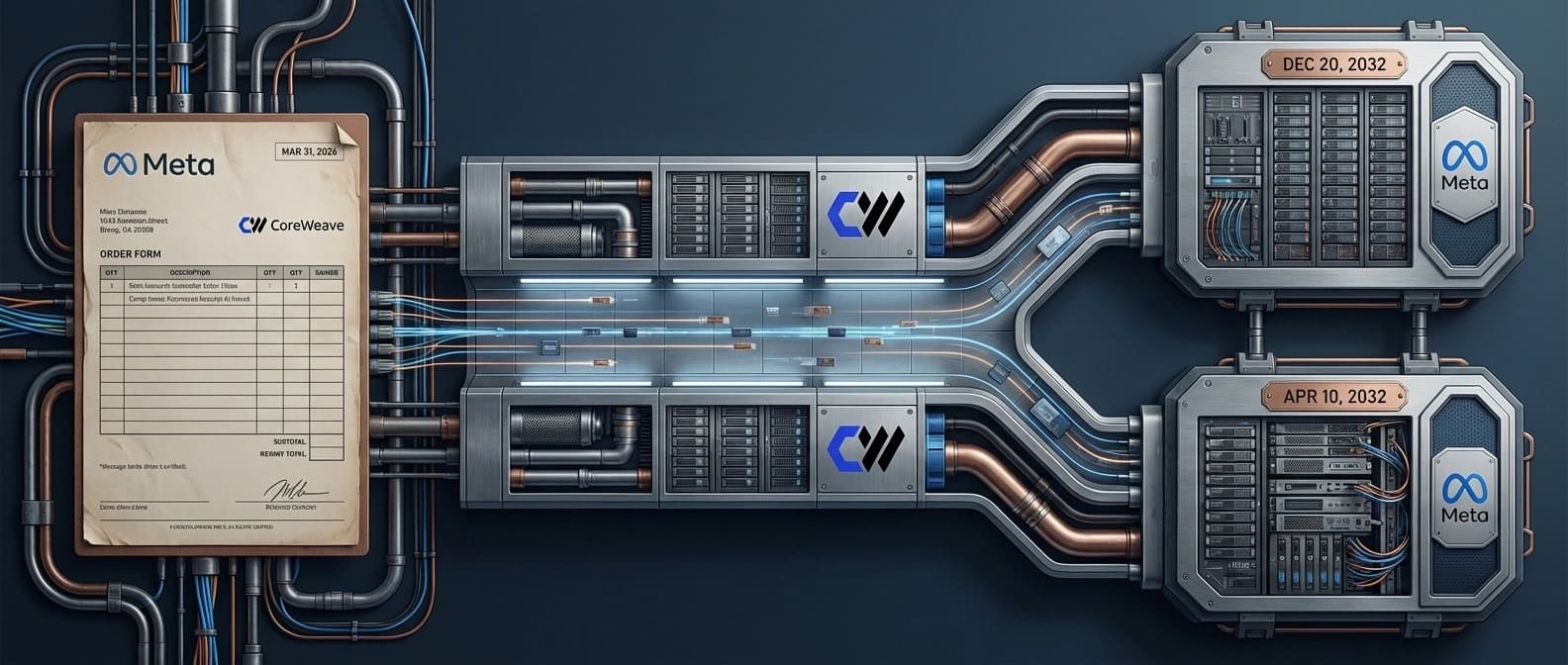

In CoreWeave's April 9 announcement, the company said Meta expanded its AI infrastructure agreement by roughly $21 billion to scale inference workloads. The harder details live in the March 31 8-K, which says the new order form sits under the existing master services agreement and runs through December 20, 2032, with an exercised prior option through April 10, 2032. CoreWeave also said the deployment includes some initial NVIDIA Vera Rubin capacity.

That Rubin detail is the part I keep circling. It means this is not just overflow compute for a busy quarter. It is future-roadmap capacity. And it fits the pattern we already mapped in our look at Meta's custom silicon push as an inference power play: custom silicon buys leverage, not immunity from the outside cloud.

The filing turns a $21 billion headline into a seven-year capacity map

The useful thing about the filing is that it replaces glossy announcement verbs with contract mechanics. This is not a first date. It is an expansion of an existing relationship under a real services framework.

| Filing-backed element | What the record says | Why it matters |
|---|---|---|
| Commercial structure | The March 31 order form sits under the existing master services agreement. | This is an extension of a live capacity relationship, not a cold-start partnership. |
| Time horizon | Meta's initial commitment runs through December 20, 2032, with an exercised option through April 10, 2032. | The duration screams standing infrastructure, not a temporary compute patch. |
| Hardware roadmap | CoreWeave says the rollout includes some initial NVIDIA Vera Rubin deployments. | Meta is reserving access to future-platform capacity, not just today's spare racks. |

There is also a smaller but useful legal clue in the paperwork. The master services agreement makes clear the relationship already had reserved-capacity mechanics and standard for-cause termination language. Boring contract plumbing, yes. Also the exact kind of boring detail that separates real infrastructure from vibes.

Rubin-era capacity is the tell, not a decorative detail

A lot of coverage will flatten this into a number. That misses the sharpest point. The most interesting part is not only that Meta is spending about $21 billion more with CoreWeave. It is that some of the reserved lane reaches into the Rubin era.

When a customer locks in early access to a future NVIDIA platform, it usually tells you three things at once:

- Demand durability: the buyer expects the workload curve to stay large enough that next-gen platform timing matters now.

- Merchant cloud still matters: internal hardware work has not made outside capacity optional.

- Purity is dead: the serious strategy is hybrid, not ideological.

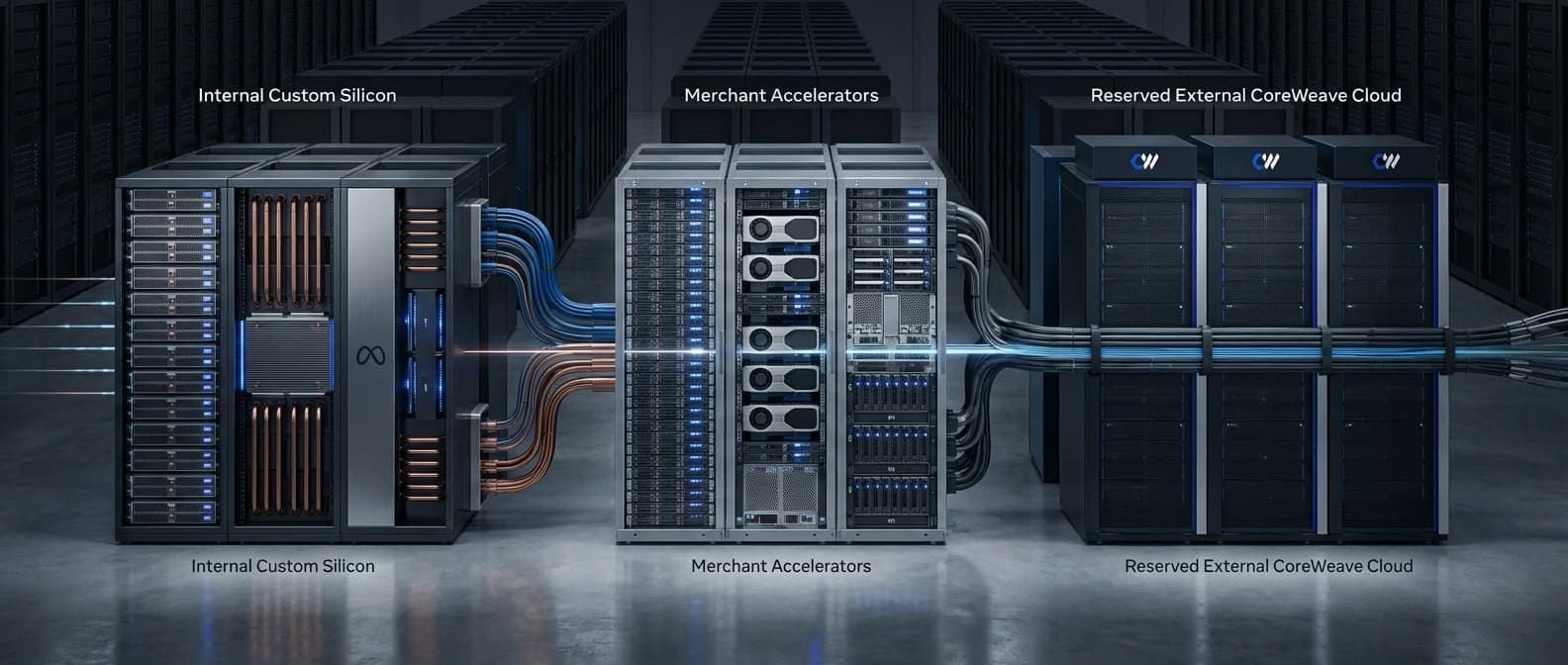

That is the part binary independence stories cannot handle. In those stories, a company is either dependent on merchant infrastructure or it has broken free. Real infrastructure planning is much uglier. Meta can push in-house chips hard, sign supplier deals, and still decide that outside cloud lanes are too useful to give up.

Meta's chip strategy still ends in rented lanes

CoreWeave's language matters here. The company says Meta will use this capacity to scale inference workloads. That lands right on top of the area where Meta has been arguing its internal silicon matters most.

So the clean read is not that the custom-silicon story was fake. It is that people kept over-reading it. In our earlier analysis of Meta's inference hardware push, the strategic case was cheaper inference, more supplier leverage, and tighter workload control. This new deal does not cancel that. It narrows it.

Leverage is not independence.

Internal silicon can lower cost on the workloads you know best. It can improve bargaining position. It can reduce how exposed you are to a single merchant roadmap. What it does not do is make giant external capacity lanes irrelevant. If it did, you would not sign a filing-backed commitment that stretches to 2032 and touches early Rubin deployments.

Frankly, it would be stranger if Meta were not doing this. The company is trying to support consumer AI products, internal model work, and long-horizon infrastructure bets at once. In that environment, hybrid sourcing is not a strategic embarrassment. It is the only adult answer.

This is how frontier AI compute gets bought now

The dates in the filing tell the bigger story better than the press release does. December 2032 is not a normal cloud-planning horizon. That is industrial lead-time thinking.

We keep seeing the same pattern across our AI infrastructure coverage.

In OpenAI's $122 billion infrastructure flywheel, the point was that supply assurance becomes product leverage.

In Anthropic's Google and Broadcom compute deal, the point was years-ahead TPU reservation.

In Mistral's Paris data-center move, the point was that control gets more believable once somebody owns or tightly controls real compute.

Meta is not outside that pattern. It is another version of it.

Nobody is buying casual spot instances here. They are reserving lanes, future hardware access, and contractual visibility far enough ahead that the cloud story starts to look less like generic software spending and more like energy, shipping, or aircraft procurement.

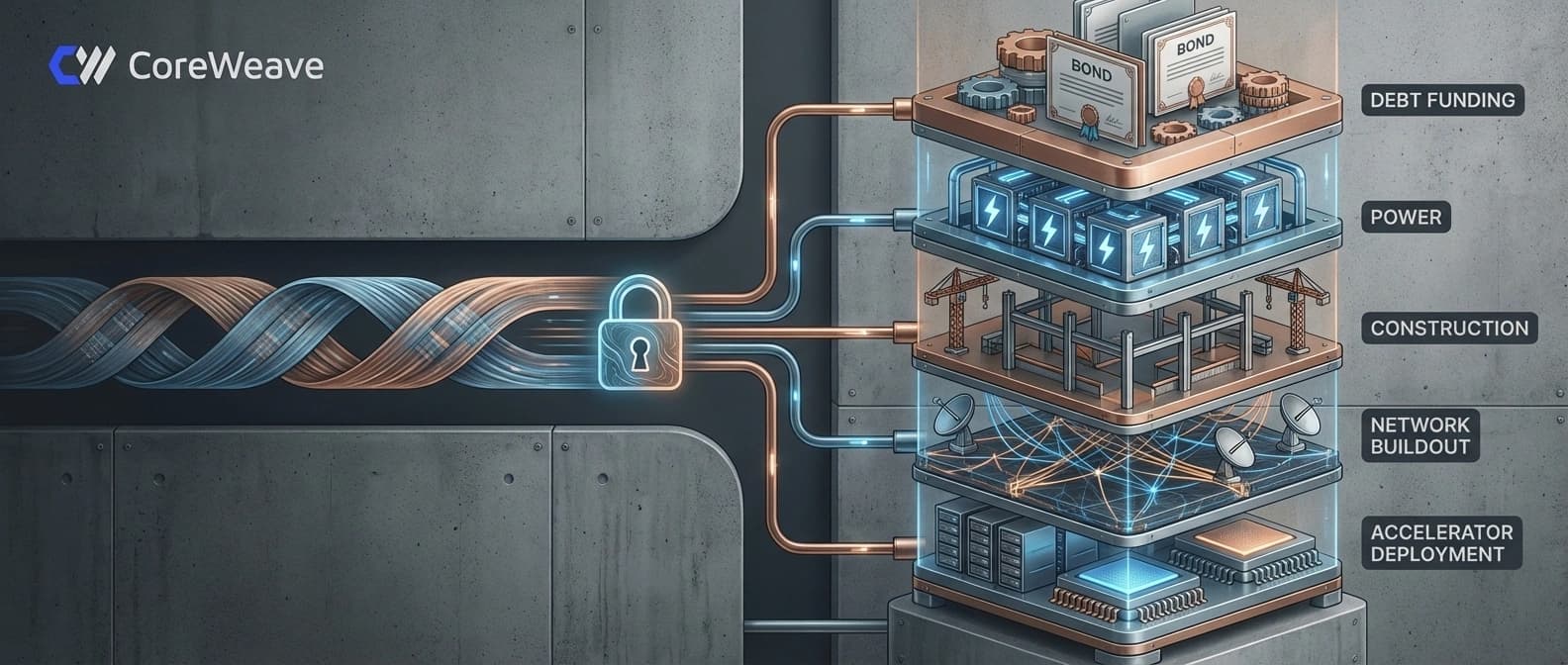

CoreWeave's financing plan shows the steel under the story

One same-day detail makes the economics even clearer. In a separate April 9 financing filing, CoreWeave said it planned to offer $1.25 billion in senior notes due 2031 and $3.0 billion in convertible senior notes due 2032.

I would not turn that into a fake one-to-one story. CoreWeave did not say those offerings were specifically for Meta, and it would be sloppy to pretend otherwise. But the timing still says something real.

Reserved AI capacity is now a two-sided capital story:

- customers want long-term supply guarantees,

- providers still need to finance data centers, hardware, networking, and buildout,

- both sides are making commitments years before the full demand picture settles.

That is why these stories keep drifting away from software metaphors. They read more like utility expansion or project finance, because at this scale that is closer to the truth.

The real next question is delivery against the 2032 promise

The press release part is easy. The harder questions come after it.

- How much of this capacity comes online when CoreWeave expects?

- How meaningful are the initial Rubin deployments in practice?

- How does Meta split real workload volume across MTIA, merchant GPU supply, and external cloud capacity over the next few years?

That is the lane I would watch, not the old independence fantasy. After this filing-backed order form, the stronger conclusion is narrower and better. Meta is building more leverage over how it buys compute. It is not graduating from the need to buy a lot of compute from outside.

That distinction matters because too much AI infrastructure commentary still treats strategy like a superhero origin story. One chip appears. One partnership lands. Suddenly a company has escaped market reality forever. Real infrastructure is not clean like that. It is layered, contractual, redundant, and expensive.

Put the March 31 filing, the CoreWeave announcement, and the Rubin detail together and the message is pretty hard to miss: Meta still wants giant external AI cloud lanes deep into the next hardware cycle, even while it builds more of its own stack.

Source file

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Announcement source for the April 9, 2026 disclosure, the roughly $21 billion framing, the inference-workload positioning, and the statement that the capacity includes some initial NVIDIA Vera Rubin deployments.

Filing-backed source for the March 31, 2026 order form date, the approximately $21 billion initial commitment, and the capacity end dates through December 20, 2032 and April 10, 2032.

Financing source for the same-day $1.25 billion senior notes offering and $3.0 billion convertible senior notes offering.

Background primary showing the December 10, 2023 MSA framework under which the March 31, 2026 order form was executed.

About the author

Maya Halberg

Maya writes across the AI field, from research claims and benchmark narratives to tools, products, institutional decisions, and market shifts. Her reporting stays focused on what changes once hype meets deployment, procurement, workflow reality, and human skepticism.

- Apr 11, 2026

- Stockholm · Remote

Archive signal

Reporting lens: Methodology over launch theater. Signature: A result only matters after the setup becomes legible.

Article details

- Category: AI Infrastructure

- Last updated: April 11, 2026

- Public sources: 4 linked source notes

Byline

Writes across the AI field with an eye for what survives contact with real users, real budgets, and real operating constraints.