Gemma 4 is really Google's Apache 2.0 local agent stack

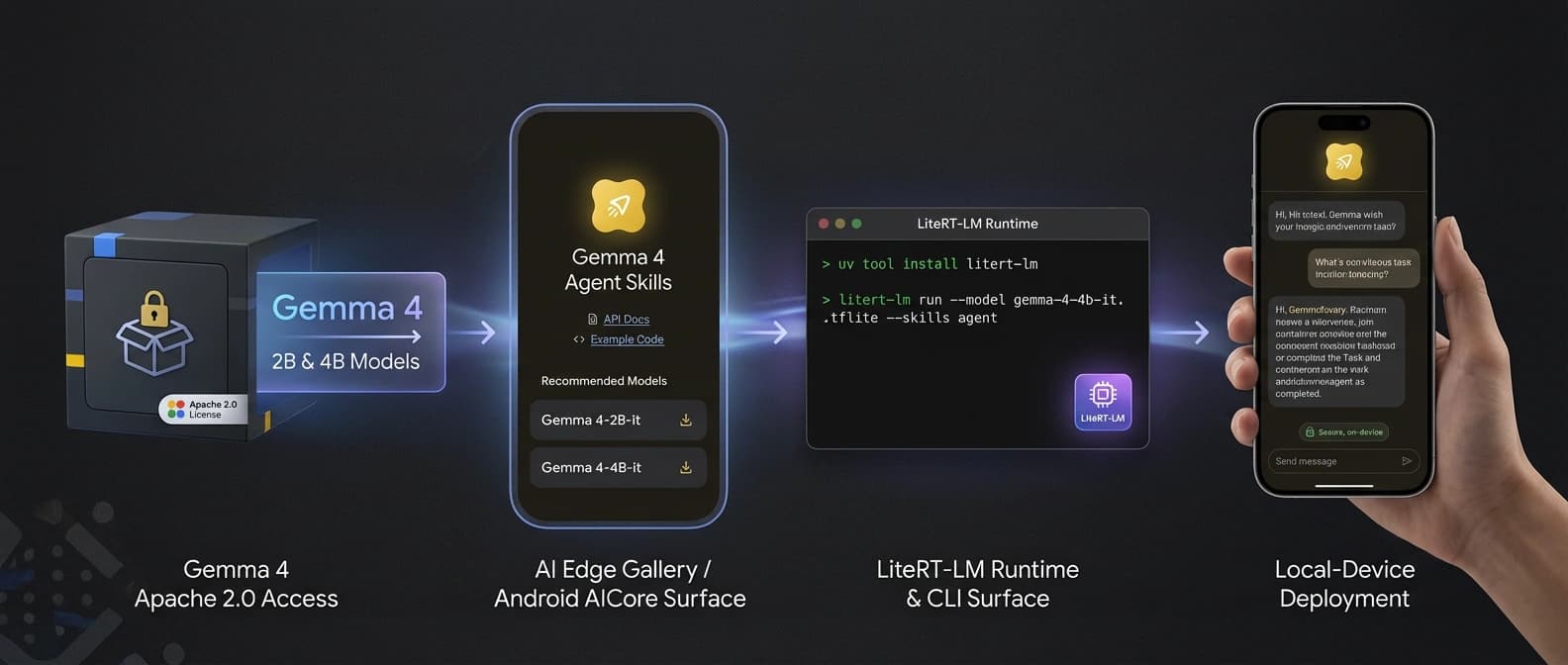

Gemma 4's real launch is the stack around it: Apache 2.0 weights, AICore, AI Edge Gallery, LiteRT-LM, and day-one local-agent support.

Google did not just open Gemma 4. It shipped the plumbing that lets a local agent leave the demo table.

Most model launches arrive as a benchmark parade with one downloadable checkpoint taped to the back. Gemma 4 is more interesting than that, and I say that as someone who usually develops a mild eye twitch when a launch post starts doing byte-for-byte calisthenics.

The real move is not the leaderboard boast. It is that Google paired Gemma 4's Apache 2.0 license with an immediate local-agent deployment path: AICore on Android, AI Edge Gallery for agent skills, LiteRT-LM for app and CLI execution, and day-one support from Hugging Face, Arm, and NVIDIA. That is not just an open-model release. That is a stack.

Benchmarks are decorative.

Google's main post makes the licensing point unusually explicit. Gemma 4 ships under Apache 2.0, with Google framing it as a route to developer flexibility, control over data and infrastructure, and deployment across on-prem or cloud environments. That matters because the local-agent story gets much more serious once the legal permission slip is boring. If you want the broader business case for that, it lines up neatly with our earlier look at open-weight inference economics and the sovereignty logic behind Microsoft's local sovereign AI stack.

Gemma 4's Apache 2.0 license matters because the stack can actually travel

A lot of "open" launches still feel like someone handing you flour and calling it dinner. Nice ingredient. Still not a meal. Google's bet with Gemma 4 is more operational. The company is saying the models support multi-step planning, function calling, structured JSON output, native system instructions, long context, and multimodal inputs, then immediately showing where those capabilities land in real local surfaces.

That is the key distinction. An Apache 2.0 model card by itself is useful. An Apache 2.0 model card tied to built-in Android access and ready runtimes is much harder to ignore.

This is also why the launch reads as a distribution play, just in a more local form than Google AI Studio's full-stack distribution push. Google is not only asking developers to admire Gemma 4. It is putting the model where developers already live: phones, local terminals, edge runtimes, and familiar OSS tooling.

AI Edge Gallery and Android AICore turn Gemma 4 into an on-device agent path

The developer post is where the story stops looking theoretical. Google says developers can access Android's built-in Gemma 4 model through the new AICore Developer Preview, while AI Edge Gallery now ships "Agent Skills" for multi-step workflows that run entirely on-device.

Those examples are not world-changing on their own, and thank heavens for that. The useful ones are boring in the exact right way: query Wikipedia, turn speech into summaries or graphs, connect to text-to-speech or image generation, and build end-to-end conversational app flows. This is local-agent plumbing, not AGI cosplay.

I think that is the smart move. Most developers do not need another sermon about frontier potential. They need to know whether the model can sit inside an app, call a tool, touch local context, and keep working when the network behaves like an offended house cat. Google's answer here is yes, or at least yes enough to start building.

LiteRT-LM gives Gemma 4 a local-agent CLI instead of a vague promise

LiteRT-LM is the part I suspect practitioners will remember after the benchmark screenshots have drifted into the usual compost heap. Google positions it as the runtime layer for deploying Gemma 4 across mobile, desktop, web, IoT, and robotics, building on LiteRT plus XNNPack and ML Drift.

The details matter. Google says LiteRT-LM can process 4,000 input tokens across two skills in under three seconds, that Gemma 4 E2B hits 133 tokens per second prefill and 7.6 tokens per second decode on Raspberry Pi 5, and that the new litert-lm CLI runs on Linux, macOS, and Raspberry Pi. The CLI also supports tool calling, which means the same agent-skills idea is not trapped inside a demo app.

That last part is huge. Plenty of companies show a clever agent surface and quietly leave the runtime story to archaeology. Google is doing the opposite. It is offering a terminal path, a Python path, an Android path, and an app-surface path on day one. If Google's Gemini API tool-combination push was about making tool use easier in the hosted stack, Gemma 4 looks like the local version of that ambition.

Hugging Face, Arm, and NVIDIA make Gemma 4 look deployable on arrival

The ecosystem support is what pushes this beyond launch-day chest puffing. Hugging Face says Gemma 4 landed with support across Transformers, llama.cpp, MLX, WebGPU paths, Rust tooling, fine-tuning libraries, and local-agent-friendly runtimes. That is not ornamental. It means the gap between "Google announced a thing" and "I can run the thing in my own setup" is much smaller than usual.

Arm's note makes the Android-scale case even plainer. The company says early engineering tests on Gemma 4 E2B show average 5.5x prefill speedups and up to 1.6x faster decode on SME2-enabled Arm CPUs, then uses Envision as an example of why this matters: offline scene description for blind and low-vision users without shipping sensitive data back to the cloud. That is a much more adult story than benchmark jousting.

NVIDIA is pushing the same idea from the other side of the hardware map. Its post says Gemma 4 is optimized across RTX PCs, workstations, DGX Spark, and Jetson Orin Nano, which effectively turns the launch into a continuum from phone to edge box to local workstation. Even Holo3's open-weight foothold in computer-use AI did not arrive with this kind of immediate deployment ramp.

Why Gemma 4's real launch is Google's local agent stack

The cleanest way to say it is this: Google did not just release an open model family. It released a route. License, runtime, app entry point, CLI, and ecosystem support all showed up at once.

That matters because local agents are not blocked by missing intelligence alone. They are blocked by the tedious middle layers: legal friction, runtime gaps, weak tool use, missing device paths, and launch-day ecosystem excuses. Gemma 4 does not solve every part of that. It does, however, remove enough of the usual excuses that developers can start treating local-agent deployment as an engineering choice instead of a mood board.

And yes, the launch copy still contains the usual benchmark flexing. We are not abolishing marketing this week. But the practical story sits somewhere less glamorous and more important. Google opened the model under Apache 2.0 and shipped enough surrounding infrastructure that the local-agent idea can leave the keynote and go bother an actual device.

That is the launch.

Source file

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Core launch source for Apache 2.0 licensing, model family positioning, function calling, JSON output, AICore, AI Edge Gallery, LiteRT-LM, and the day-one ecosystem support list.

Most important deployment-path source. Details AICore Developer Preview, AI Edge Gallery Agent Skills, LiteRT-LM, the new CLI, and on-device tool-calling support.

Confirms day-one support across major libraries and runtimes including Transformers, llama.cpp, MLX, WebGPU paths, and local-agent-friendly deployment tooling.

Documents Arm's day-one optimization framing, SME2 performance claims, and the Android-scale argument for local Gemma 4 deployment.

Shows launch-day optimization support across RTX PCs, DGX Spark, and Jetson-class edge hardware for local agentic workloads.

About the author

Maya Halberg

Maya writes across the AI field, from research claims and benchmark narratives to tools, products, institutional decisions, and market shifts. Her reporting stays focused on what changes once hype meets deployment, procurement, workflow reality, and human skepticism.

- 24

- Apr 11, 2026

- Stockholm · Remote

Archive signal

Reporting lens: Methodology over launch theater.. Signature: A result only matters after the setup becomes legible.

Article details

- Category

- Open Source AI

- Last updated

- April 11, 2026

- Public sources

- 5 linked source notes

Byline

Writes across the AI field with an eye for what survives contact with real users, real budgets, and real operating constraints.