OpenClaw beta gateway becomes OpenAI-compatible

OpenClaw 2026.3.24-beta.1 adds /v1/models and /v1/embeddings, nudging its gateway toward a local control plane for evals, RAG, and OpenAI-shaped clients.

The clever part of this beta is not just another endpoint. It is the decision to expose agents as a model-shaped surface that existing clients already know how to talk to.

I think the most important OpenClaw beta feature this week is the one almost nobody would put on a T-shirt.

In 2026.3.24-beta.1, the gateway added GET /v1/models and POST /v1/embeddings, while also forwarding explicit model overrides through /v1/chat/completions and /v1/responses. That reads like pure plumbing because it is pure plumbing. It is also a strategic shift.

OpenClaw already had an always-on gateway, a control UI, tool invocation, WebSocket control, and OpenAI-shaped chat and responses endpoints. What it lacked was the minimum compatibility surface that makes outside software stop squinting and just connect. In infrastructure, that kind of missing endpoint can be the difference between “interesting” and “actually used.”

Why /v1/models changes how outside clients see OpenClaw

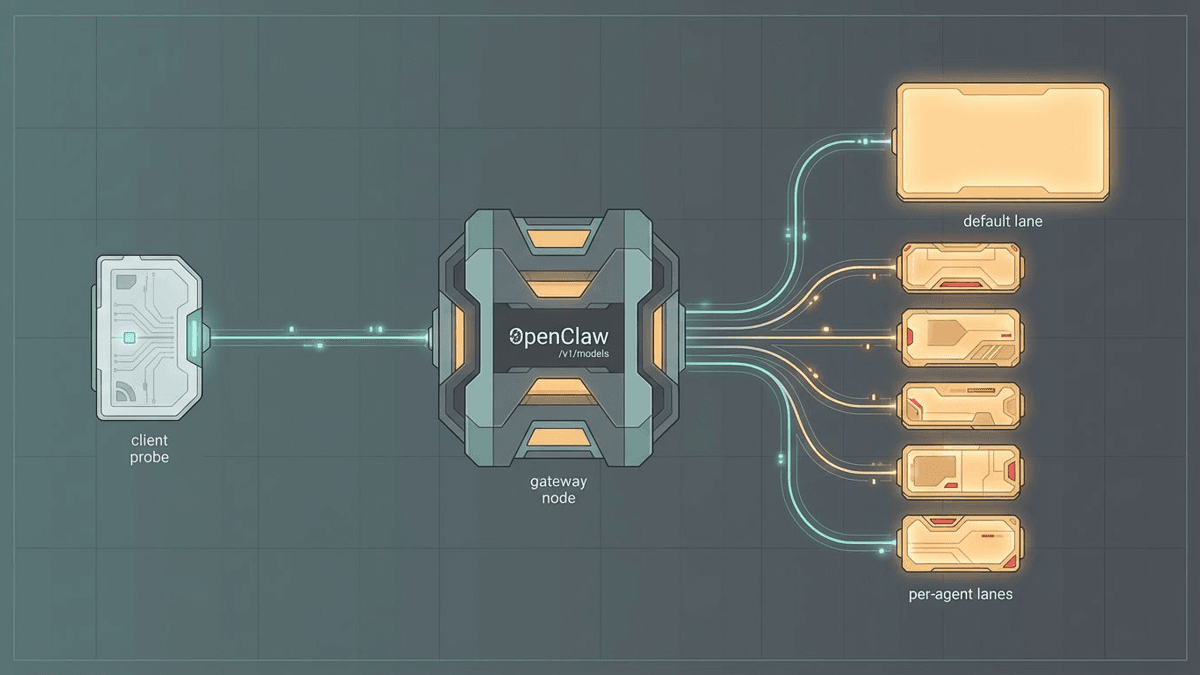

The clever part is that OpenClaw does not treat models the way a normal hosted-model catalog would. The gateway docs say the endpoint returns agent-shaped identities such as openclaw, openclaw/default, and openclaw/<agentId>. So the thing being discovered is not just a backend model SKU. It is an addressable agent surface with tools, policy, workspace context, and runtime behavior attached.

That matters because lots of clients probe /v1/models before they do anything else. If the endpoint is missing, the integration conversation often dies right there. The software does not admire your architecture. It just leaves.
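To make that probing behavior concrete, here is a minimal sketch of what a generic client might do before anything else. The gateway address, the bearer-token auth scheme, and the OpenAI-style list payload (`{"object": "list", "data": [{"id": ...}]}`) are all assumptions for illustration; the article's sources only confirm that the endpoint exists and returns agent-shaped identities.

```python
import json
import urllib.error
import urllib.request
from typing import Optional

# Placeholder address and token (assumptions); substitute your gateway's values.
GATEWAY_URL = "http://127.0.0.1:8080"
API_TOKEN = "replace-me"


def parse_model_ids(body: bytes) -> list[str]:
    """Extract model ids from an OpenAI-style list payload.

    Assumes the shape {"object": "list", "data": [{"id": "..."}]}.
    """
    payload = json.loads(body)
    if not isinstance(payload, dict):
        return []
    return [entry["id"] for entry in payload.get("data", []) if "id" in entry]


def probe_models(base_url: str = GATEWAY_URL,
                 token: str = API_TOKEN) -> Optional[list[str]]:
    """Return the advertised model ids, or None if the endpoint is unusable."""
    request = urllib.request.Request(
        f"{base_url}/v1/models",
        headers={"Authorization": f"Bearer {token}"},
    )
    try:
        with urllib.request.urlopen(request, timeout=5) as response:
            return parse_model_ids(response.read())
    except (urllib.error.URLError, TimeoutError, ValueError):
        # A missing endpoint, a refused connection, or a non-JSON body all
        # look the same to a generic client: not compatible, move on.
        return None
```

The `None` branch is the whole point. A client written this way never reports why it gave up; it simply treats the server as absent.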

Once /v1/models exists, OpenClaw can meet a much bigger slice of the ecosystem on familiar terms. And I like the alias design here. openclaw/default gives operators a stable target, while openclaw/<agentId> keeps per-agent routing explicit. It is compatibility, yes, but compatibility with opinion. That is usually the sweet spot.
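The alias scheme is simple enough to sketch from the client side. The helper below is hypothetical and only sorts ids into the three shapes the gateway docs are said to return (`openclaw`, `openclaw/default`, `openclaw/<agentId>`). How the gateway itself resolves the bare `openclaw` id is not covered here, so the sketch just labels it rather than guessing.

```python
from typing import NamedTuple, Optional

PREFIX = "openclaw"


class AgentTarget(NamedTuple):
    kind: str                # "root", "default", or "agent"
    agent_id: Optional[str]  # set only when kind == "agent"


def parse_agent_model(model_id: str) -> Optional[AgentTarget]:
    """Classify an agent-shaped model id; return None for anything else."""
    if model_id == PREFIX:
        return AgentTarget("root", None)
    head, sep, tail = model_id.partition("/")
    if head != PREFIX or not sep or not tail:
        return None
    if tail == "default":
        return AgentTarget("default", None)
    return AgentTarget("agent", tail)
```

For example, `parse_agent_model("openclaw/research")` yields an `agent` target with `agent_id="research"`, where `research` is a made-up agent name, and an unrelated id such as `some-other-model` yields `None`.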

/v1/embeddings is the part retrieval and memory tooling needed

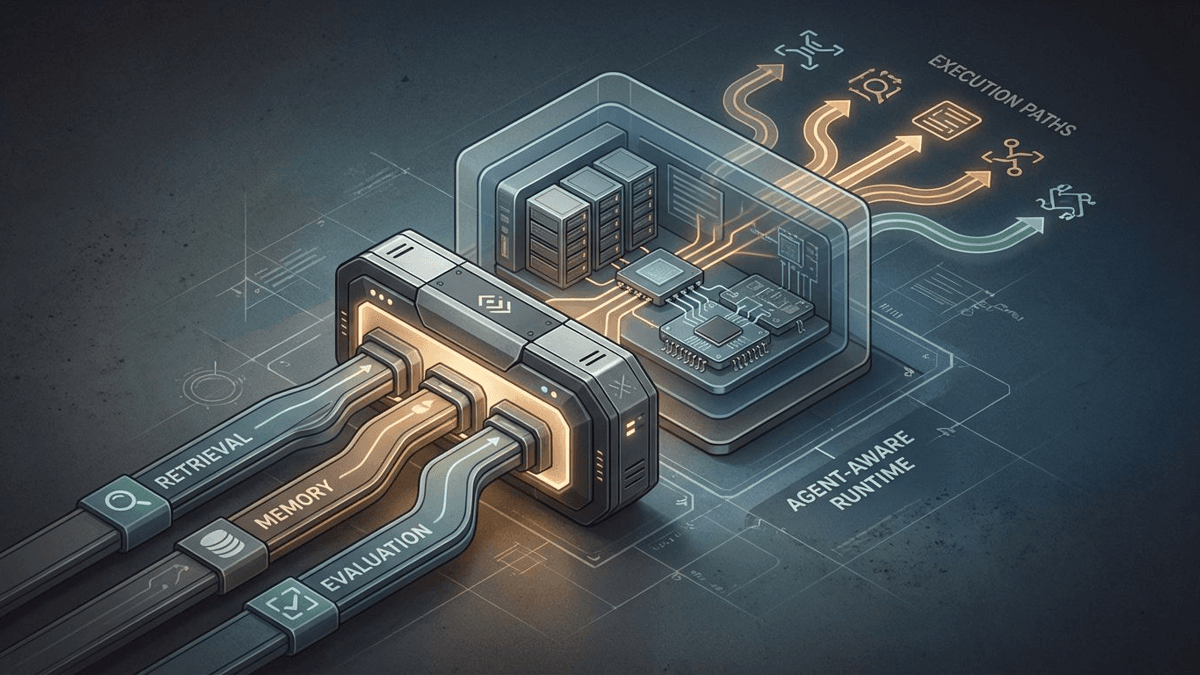

The embeddings endpoint may be even more important in practice. Lots of retrieval, memory, and evaluation workflows assume embeddings are table stakes. Without that surface, OpenClaw could already handle chats and native agent sessions, but it still looked awkward to surrounding software that wanted to plug it into RAG or persistent-context pipelines without special pleading.

That surrounding software already exists. Promptfoo’s OpenClaw provider docs treat OpenClaw as a provider surface across chat, responses, WebSocket agent access, and direct tool invocation. ByteRover’s OpenClaw integration docs make the memory angle even clearer. Adding /v1/embeddings shortens the path from “nice local agent runtime” to “thing I can slot into the workflow I already have.”
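For a sense of what that slotting-in looks like, here is a rough sketch of the retrieval-side glue: build a POST /v1/embeddings body, parse the reply, and rank documents by cosine similarity. It assumes the gateway follows the OpenAI embeddings request and response shapes (`model` and `input` in, a `data` list of `{"index", "embedding"}` rows out) and that an agent-shaped id such as `openclaw/default` is accepted as the `model` value. Neither detail is confirmed by the release notes as summarized here.

```python
import json
import math


def build_embeddings_body(texts: list[str],
                          model: str = "openclaw/default") -> bytes:
    """Serialize a request body in the OpenAI embeddings shape."""
    return json.dumps({"model": model, "input": texts}).encode("utf-8")


def parse_embeddings(body: bytes) -> list[list[float]]:
    """Return vectors ordered by their 'index' field, matching input order."""
    payload = json.loads(body)
    rows = sorted(payload["data"], key=lambda row: row["index"])
    return [row["embedding"] for row in rows]


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; defined as 0.0 when either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def rank(query_vec: list[float], doc_vecs: list[list[float]]) -> list[int]:
    """Return document indices sorted from most to least similar."""
    scores = [cosine(query_vec, vec) for vec in doc_vecs]
    return sorted(range(len(doc_vecs)), key=lambda i: scores[i], reverse=True)
```

Nothing in this glue is OpenClaw-specific, which is rather the argument: once the endpoint speaks the expected shape, the existing retrieval code does not need to know what is behind it.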

That is the kind of change people routinely underrate because it lacks launch sparkle. Nobody throws confetti for embeddings support. Then six months later half the ecosystem quietly assumes it is there. Infrastructure is sneaky like that.

This compatibility layer still keeps agent identity in charge

The forwarded model-override behavior is another clue about what OpenClaw is trying to be. The release notes say explicit model overrides now pass through /v1/chat/completions and /v1/responses. The gateway docs add that operators can use x-openclaw-model when they want a backend override and otherwise keep the selected agent’s normal model and embedding configuration in charge.
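A small sketch shows how the two layers could sit side by side in one request. The `model` field carries the agent identity, and the `x-openclaw-model` header is added only when the caller wants a backend override. The function name, the bearer-token auth, and the header's value format are assumptions for illustration; the docs as described here confirm only the header name and its purpose.

```python
import json
from typing import Optional


def build_chat_request(base_url: str, token: str, agent_model: str, prompt: str,
                       backend_model: Optional[str] = None
                       ) -> tuple[str, dict[str, str], bytes]:
    """Return (url, headers, body) for a POST to /v1/chat/completions."""
    headers = {
        "Authorization": f"Bearer {token}",  # auth scheme is an assumption
        "Content-Type": "application/json",
    }
    if backend_model is not None:
        # Explicit backend override; without it the selected agent's own
        # model configuration stays in charge.
        headers["x-openclaw-model"] = backend_model
    body = json.dumps({
        "model": agent_model,  # agent identity, e.g. "openclaw/default"
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    return f"{base_url.rstrip('/')}/v1/chat/completions", headers, body
```

Keeping the override in a header rather than overloading the `model` field means an unmodified OpenAI-shaped client still addresses an agent, and only operators who opt in ever touch the backend choice.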

That is important because OpenClaw is not trying to become a generic model host. It is trying to make an agent runtime legible through interfaces the rest of the software world already speaks. Think translator, not clone.

There are still obvious caveats. The development-channel docs are clear that beta builds are under test. /v1/models returning agent aliases is useful, but it is not the same thing as mirroring every provider-native capability perfectly. /v1/embeddings helps a lot, but it does not erase auth, configuration, or policy differences. Good compatibility work narrows friction. It does not repeal reality.

My read on the gateway shift

I keep reading this beta as a control-plane move. OpenClaw wants the gateway to become the stable place other software expects to meet the system, the same way ClawHub’s install-path change and managed hosting offers keep nudging the project toward broader operational use.

If more tools start shipping explicit OpenClaw presets, or if more teams reach for the gateway before they reach for the native protocol, this line item will stop looking like a changelog footnote. It will look like one of those unglamorous API decisions that quietly rewired a product’s future.

That is why I think it matters. /v1/models and /v1/embeddings are not exciting in the cinematic sense. They are exciting in the “the door finally has a normal handle” sense. For infrastructure, that is often the better kind of excitement.

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Primary source for the beta release date and the new gateway compatibility changes, including /v1/models and /v1/embeddings.

Defines the gateway runtime model, OpenAI-compatible endpoint surface, and the agent-first model alias behavior.

Confirms beta is an under-test release channel rather than a stable production commitment.

Shows that eval tooling already treats OpenClaw as a provider surface across chat, responses, WebSocket agent, and tool invocation paths.

Supports the operator-side claim that memory and long-term context tooling already exist around OpenClaw.

Useful ecosystem evidence that OpenClaw is being packaged and operationalized beyond the core repo.

About the author

Lena Ortiz

Lena tracks the economics and mechanics behind AI systems, from serving architecture and open-weight deployment to developer tooling, platform shifts, product decisions, and the operational tradeoffs that shape what teams actually run. Her reporting is aimed at builders and operators deciding what to trust, adopt, and maintain.

- Apr 10, 2026

- Berlin

Archive signal

Reporting lens: Operating leverage beats ideological posturing. Signature: If the cost curve moves, the product strategy moves with it.

Article details

- Category: AI Infrastructure

- Last updated: April 11, 2026

- Public sources: 6 linked source notes
