Why AI benchmark wins feel less trustworthy

I still care about benchmarks, but not in the old way. A score matters only if it holds up under reproducibility checks, fits the task, and survives deployment reality.

A benchmark is still useful. I just no longer trust it alone.

Every benchmark cycle starts with the same little ritual. A chart drops. A model moves up a few rows. Half the internet acts like the matter is settled by lunchtime. I still read those charts, but I trust them less than I used to.

That is not because benchmarks stopped mattering. It is because more of us now understand how many design choices sit underneath the number. Once you see the machinery, the scoreboard stops looking like divine truth and starts looking like a recipe card with the interesting ingredients left off.

Why AI benchmark wins feel thinner now

Part of the trust problem is volume. There are more lab posts, more vendor dashboards, more custom graders, and more benchmark-adjacent demos than there were even a year ago. A result can be technically real and still feel editorially flimsy because readers know a lot of invisible setup work went into making that number happen.

That skepticism is healthy. Modern evaluation is built from choices: task selection, prompt framing, tool access, grader rules, refusal handling, and cost tolerances. OpenAI's own guide to working with evals reads like exactly what it is: evidence that evaluation is a design discipline, not a holy scoreboard that descended from the cloud.
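To make that list of choices concrete, here is a minimal Python sketch of an evaluation setup written down as data. The `EvalSetup` class, its field names, and the example values are all hypothetical and are not taken from any vendor's tooling. The only point is that two runs reporting the same metric can differ on several of these axes, and a plain diff makes that visible.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class EvalSetup:
    """Hypothetical record of the choices behind one benchmark number."""
    task_selection: str
    prompt_framing: str
    tool_access: str
    grader_rules: str
    refusal_handling: str
    cost_tolerance_usd: float


def changed_choices(a: EvalSetup, b: EvalSetup) -> dict:
    """Return {field_name: (value_in_a, value_in_b)} for every field that differs."""
    return {
        f.name: (getattr(a, f.name), getattr(b, f.name))
        for f in fields(EvalSetup)
        if getattr(a, f.name) != getattr(b, f.name)
    }


# Illustrative values only; neither run describes a real published result.
run_a = EvalSetup("full task set", "zero-shot", "none",
                  "exact match", "refusal counts as wrong", 50.0)
run_b = EvalSetup("full task set", "few-shot", "code interpreter",
                  "exact match", "refusal counts as wrong", 50.0)

diff = changed_choices(run_a, run_b)
for name, (old, new) in diff.items():
    print(f"{name}: {old!r} -> {new!r}")
```

In this made-up pair, the weights could be identical and the score could still move, because prompt framing and tool access both changed. That is the kind of detail a headline number tends to leave out.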

Once you understand that, the old headline format starts to wobble. "Model X beats model Y" is not false, exactly. It is just incomplete in the way a restaurant menu is incomplete about what is happening in the kitchen.

A leaderboard score is only the top layer

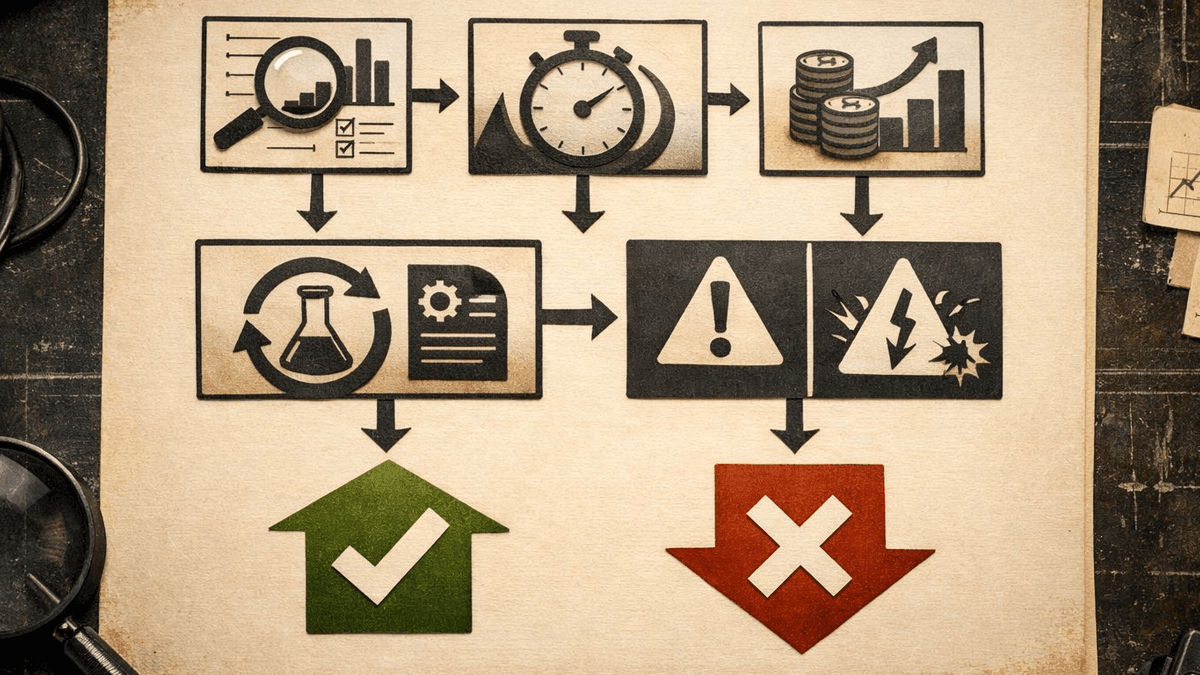

A benchmark is still a signal. It can tell me that something changed, that a model family improved, or that a new setup deserves a closer look. What it cannot do by itself is settle whether that improvement matters for an actual workflow.

That is why the strongest evaluation work now looks less like a victory lap and more like a methodology packet. A score without context feels suspicious. A score with task definitions, grader logic, baselines, failure modes, and caveats feels like someone is trying to inform me rather than sell me.

Projects such as Stanford CRFM's HELM matter for exactly that reason. They force a broader view of model assessment. The same goes for the open lm-evaluation-harness, which keeps more of the machinery visible. When the setup is inspectable, the result earns more trust. Funny how that works.

Reproducibility is now part of the headline

A benchmark story used to end with the chart. Now the chart is only the first paragraph. The second paragraph is whether somebody else can run something close to it and land in the same neighborhood.
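One rough way to put a number on "the same neighborhood" is to rerun the benchmark, build a confidence interval around the rerun's pass rate, and ask whether the reported score falls inside it. The sketch below uses the Wilson score interval for a binomial proportion. The function names and the example counts are my own assumptions, and the check treats every benchmark item as independent, which real test sets often are not.

```python
import math


def wilson_interval(correct: int, total: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a pass rate; z=1.96 gives roughly 95% coverage."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = correct / total
    denom = 1 + z**2 / total
    center = (p + z**2 / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return center - half, center + half


def same_neighborhood(reported: float, correct: int, total: int) -> bool:
    """True if the reported score lies inside the rerun's Wilson interval."""
    low, high = wilson_interval(correct, total)
    return low <= reported <= high


# Hypothetical rerun: 412 of 500 items passed (82.4%).
low, high = wilson_interval(412, 500)
print(f"rerun interval: {low:.3f} to {high:.3f}")
print("reported 0.84 plausible:", same_neighborhood(0.84, 412, 500))
print("reported 0.90 plausible:", same_neighborhood(0.90, 412, 500))
```

This only accounts for sampling noise in the rerun. It says nothing about differences in prompts, tools, or graders, which is why a result that fails the check is a reason to inspect the setup rather than proof that anyone misreported.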

That is not academic nagging. Operators need to know whether a gain came from the model, the workflow, or a carefully staged environment that disappears the second they try it on their own stack. A model that wins only inside a custom pipeline may still be impressive research. It is weaker buying guidance.

This is one reason the benchmark debate overlaps with OpenAI's workflow-capture push. As runtime, tooling, and evals live deeper inside vendor-controlled environments, it gets harder to separate a model improvement from a platform-packaged improvement. The win may be real. The source of the win gets blurrier.

The same caution applies to infrastructure. If a benchmark ignores latency penalties, serving complexity, or cost, it tells you less than it pretends to. Our piece on open-weight inference economics lands on the same point from a different angle: once the system goes live, operations are part of quality.
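A small worked example shows how the ranking can flip once operations enter the picture. The sketch below is an assumption-heavy illustration: the `Candidate` fields, the latency budget, and all three sets of numbers are invented. It drops any candidate that misses a latency budget, then sorts the rest by serving cost per 1,000 correct answers instead of by raw accuracy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """Hypothetical summary of one model as deployed, not as benchmarked."""
    name: str
    accuracy: float         # fraction of tasks solved, between 0 and 1
    p95_latency_s: float    # 95th percentile response time in seconds
    cost_per_1k_usd: float  # serving cost per 1,000 requests


def cost_per_1k_correct(c: Candidate) -> float:
    """Serving cost in USD per 1,000 correct answers."""
    if c.accuracy <= 0:
        raise ValueError(f"{c.name}: accuracy must be positive")
    return c.cost_per_1k_usd / c.accuracy


def rank_within_budget(candidates, max_p95_latency_s: float):
    """Drop candidates over the latency budget, then sort cheapest-per-correct first."""
    eligible = [c for c in candidates if c.p95_latency_s <= max_p95_latency_s]
    return sorted(eligible, key=cost_per_1k_correct)


# Invented numbers for illustration only.
candidates = [
    Candidate("model-a", accuracy=0.90, p95_latency_s=6.0, cost_per_1k_usd=40.0),
    Candidate("model-b", accuracy=0.85, p95_latency_s=1.5, cost_per_1k_usd=8.5),
    Candidate("model-c", accuracy=0.80, p95_latency_s=1.0, cost_per_1k_usd=12.0),
]

ranked = rank_within_budget(candidates, max_p95_latency_s=2.0)
for c in ranked:
    print(f"{c.name}: ${cost_per_1k_correct(c):.2f} per 1k correct answers")
```

Here the leaderboard winner, `model-a`, never makes the shortlist because it misses the two-second budget. Whether that budget is the right one is a product decision, which is the point: the chart cannot make it for you.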

What I ask before I believe a benchmark chart

I am not arguing for cynicism. I am arguing for inspection.

Before I treat a benchmark claim as decision-grade, I want a few uncomfortable questions answered. What actually changed between runs: weights, prompts, tools, grader logic, or all of the above? Does the task resemble the workflow I care about, or only the benchmark author's favorite framing? Are costs, latency, and failure cases disclosed clearly enough to compare tradeoffs? Could another team rerun something similar and validate the claim's direction?

That is not anti-benchmark behavior. It is the only way to keep benchmarks useful once the market matures.

It also explains why deeper explainers will outperform simple chart recaps in the AI Research category. Readers do not just want the score anymore. They want translation. They want to know whether the result is broad, brittle, expensive, or mostly a very polished magic trick.

The trust gap is really about incentives

A lot of benchmark anxiety is an incentives story in disguise. Labs want attention. Vendors want proof points. Researchers want clean tasks. Buyers want deployment relevance. Those priorities overlap, but they do not line up perfectly.

Once I admit that, the whole mood becomes easier to understand. Benchmark claims do not feel weaker because everyone is cheating. They feel weaker because the audience now understands that every evaluation is built from choices, and those choices are shaped by incentives.

That does not make benchmarks disposable. It makes source trails, methodology, and editorial framing much more important.

What a trustworthy benchmark story looks like now

The best benchmark coverage in 2026 does four things well. It says what the metric captures. It says what the metric leaves out. It explains which decisions the result can safely inform. And it names the likely failure modes before the reader discovers them the hard way.

That is more demanding than a one-card win graphic. Good. The lazy version stopped working.

Benchmarks are not dead. I still use them. I just refuse to let them travel alone.

Source file

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

OpenAI's guide to working with evals: explains how formal evaluation setups are defined, run, and iterated in practice rather than treated as one-off scorecards.

Stanford CRFM's HELM: useful reference point for broader evaluation framing beyond a single vendor leaderboard.

lm-evaluation-harness: shows how open evaluation tooling keeps benchmark execution and comparison methods inspectable.

About the author

Maya Halberg

Maya writes across the AI field, from research claims and benchmark narratives to tools, products, institutional decisions, and market shifts. Her reporting stays focused on what changes once hype meets deployment, procurement, workflow reality, and human skepticism.

- Apr 11, 2026

- Stockholm · Remote

Archive signal

Reporting lens: Methodology over launch theater. Signature: A result only matters after the setup becomes legible.

Article details

- Category: AI Research

- Last updated: April 11, 2026

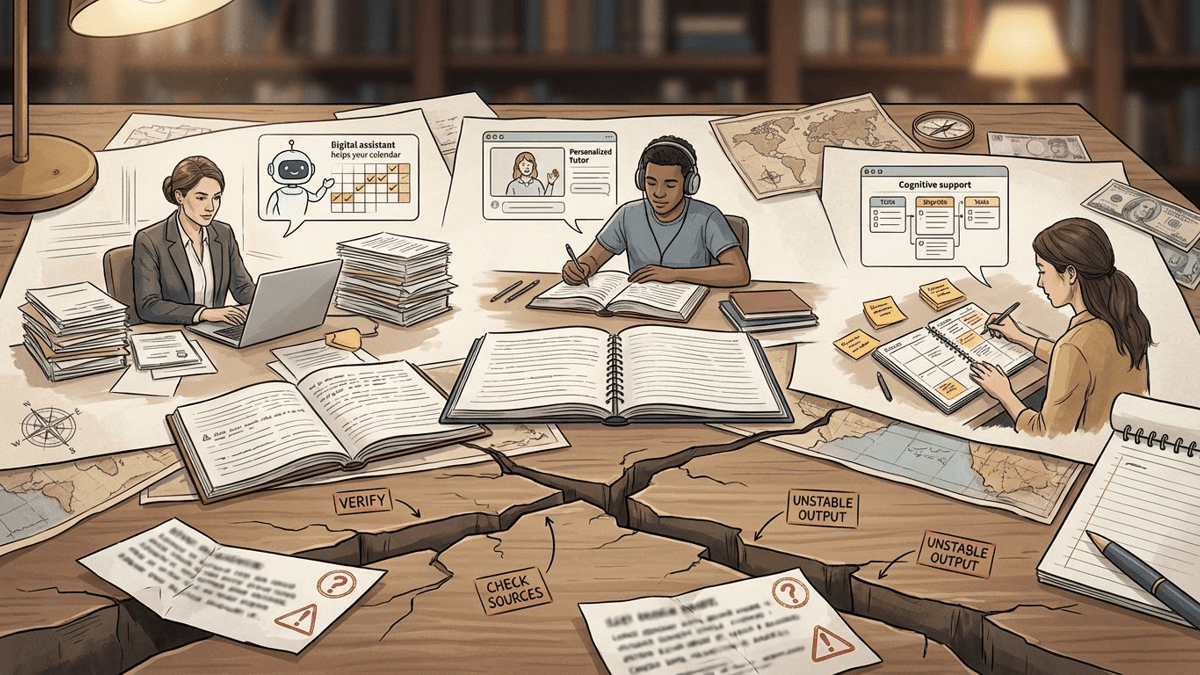

- Lead illustration: Benchmark wins travel fastest when they fit on one card. Trust usually depends on everything left off that card.

- Public sources: 3 linked source notes

Byline

Writes across the AI field with an eye for what survives contact with real users, real budgets, and real operating constraints.