Google TurboQuant turns KV cache into a cost story

Google says TurboQuant can slash KV-cache memory use and accelerate H100 attention. The bigger story is that long-context AI costs now hinge on memory compression.

In long-context serving, the next cost win may come from shrinking memory pressure rather than buying yet another bigger model.

Google is giving TurboQuant a fresh public push, but I think the most useful way to read the announcement is not as “Google found a neat new compression trick.” The more important read is that inference economics are starting to look like a memory story as much as a compute story.

That distinction matters because the paper behind TurboQuant is not a surprise this week. The arXiv version of Online Vector Quantization with Near-optimal Distortion Rate went up in April 2025, and Google is now using a March 25, 2026 Research blog post to translate the work into a practical operator message. That message is blunt: Google says TurboQuant can cut KV-cache memory by at least 6x on its tested long-context workloads, speed attention-logit computation up to 8x on H100s at 4 bits, and do it without retraining or accuracy loss in those evaluations.

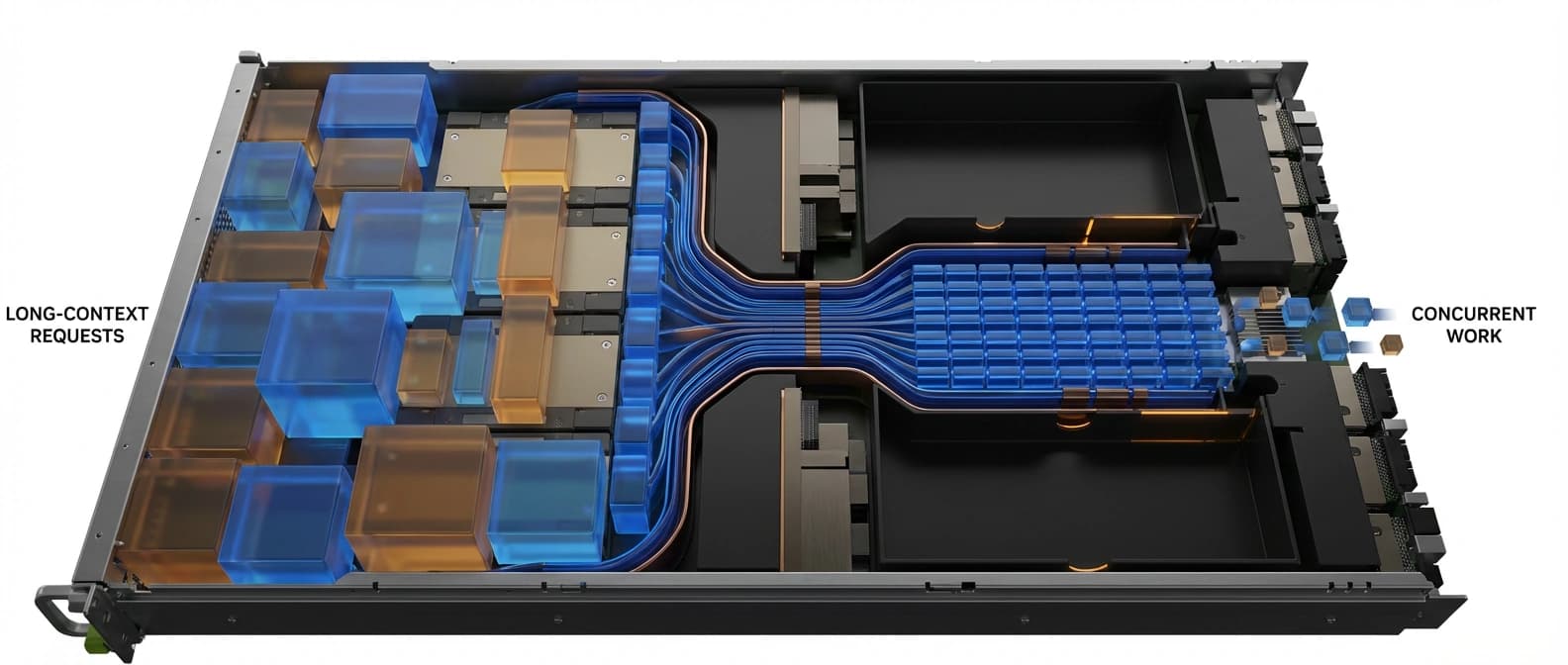

If those gains travel well into real serving stacks, they matter for a simple reason. Long-context inference is increasingly constrained by what fits in memory, not just by how many FLOPs are advertised on the box. GPU memory is a very expensive place to store an unfinished thought.

The bottleneck is the cache, not the headline model

KV cache sounds like a detail until you have to pay for it. During generation, a transformer stores key and value tensors from earlier tokens so it does not have to recompute them on every next-token step. That cache is what makes long contexts feasible, but it is also what turns context length into a memory bill.

The bill shows up in a few ways. Bigger caches mean fewer concurrent requests per GPU. They can force more aggressive sharding or offload strategies. They can turn a model that looks fine on paper into a serving problem once real users start asking for longer context windows. That is why the underlying question is economic before it is aesthetic. A smaller KV footprint can mean more usable context, better concurrency, or less hardware for the same work.

This fits the pattern we have already seen in pieces like open-weight inference economics and FlashAttention-4's Blackwell kernel economics. The glamorous part of the stack gets the headline. The bill usually arrives from the quieter constraint sitting next to it.

Google's framing is useful precisely because it treats compression as a way to relieve that quieter constraint. In the blog post, the company says it evaluated TurboQuant, PolarQuant, and QJL on long-context benchmarks including LongBench, Needle in a Haystack, ZeroSCROLLS, RULER, and L-Eval using Gemma and Mistral models. TurboQuant is the flagship result because it combines the strongest memory reduction with what Google describes as lossless downstream performance on those tests.

That does not automatically mean every serving team gets a clean 6x cost improvement. But it does mean the right unit of attention is not “can compression be elegant?” It is “can compression reclaim enough resident memory to change what the fleet can do?”

What TurboQuant is actually doing

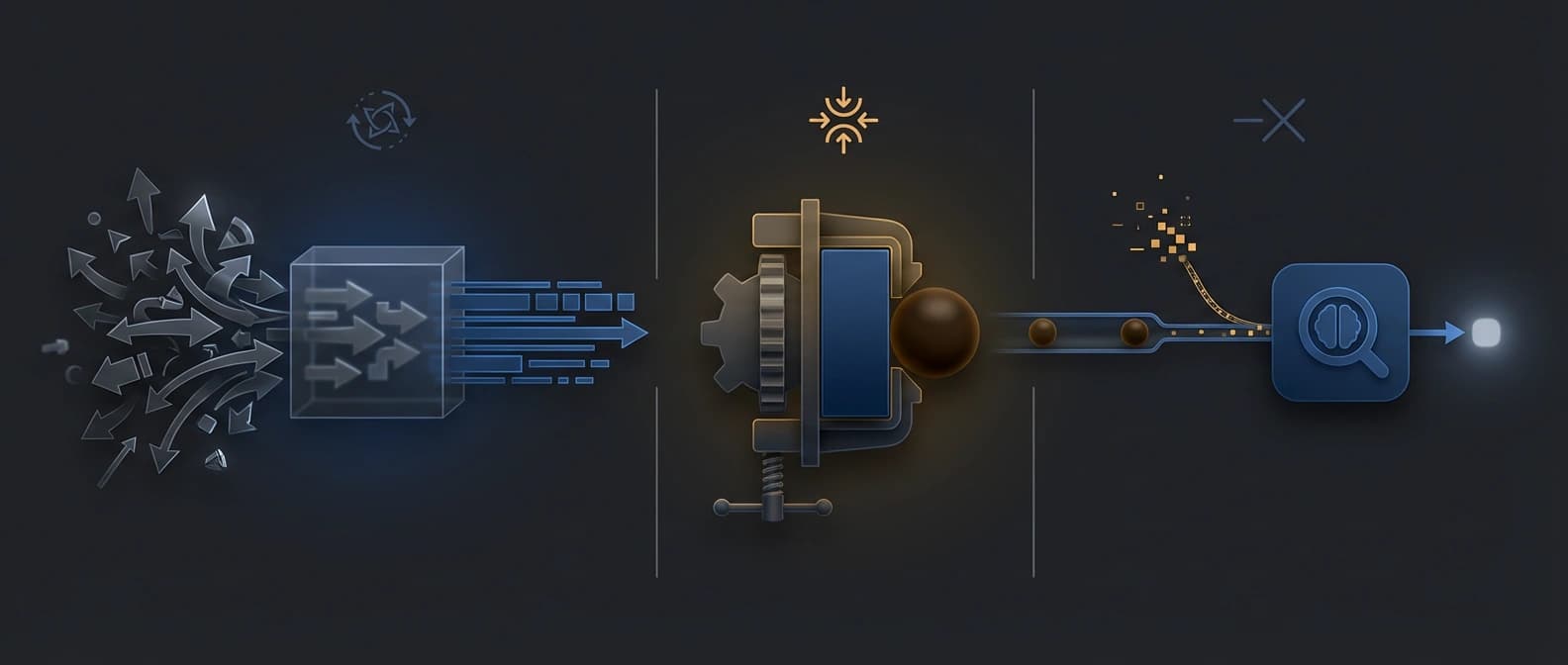

The short version is that TurboQuant is a two-stage quantization method designed to compress vectors hard without wrecking the inner-product calculations attention depends on.

According to Google and the paper, the first stage does most of the heavy lifting through PolarQuant. TurboQuant randomly rotates the vector, then uses PolarQuant to map it into a representation whose structure is easier to compress efficiently. The point is not just fewer bits. It is fewer bits without the usual overhead that traditional vector-quantization schemes pay when they need extra constants and metadata to reconstruct the result cleanly.

Then comes the second stage. The authors say plain mean-squared-error quantization can introduce bias in inner-product estimation, which is bad news when attention scores depend on those inner products staying trustworthy. So TurboQuant adds a residual correction step using a 1-bit Quantized Johnson-Lindenstrauss transform, or QJL. In plain English, PolarQuant does most of the compression, and QJL helps clean up the distortion that would otherwise make the attention math drift.

The paper's abstract makes the tradeoff even sharper. It says TurboQuant achieves absolute quality neutrality at 3.5 bits per channel and only marginal degradation at 2.5 bits per channel for KV-cache quantization. That is the kind of detail operators should care about because it makes clear that the work is not merely “smaller numbers good.” It is a claim about where the compression frontier sits before quality starts to slip.

Google's public blog post leans on the same practical selling points. The company says TurboQuant can quantize KV cache to 3 bits without training or fine-tuning and still run faster than the original Gemma and Mistral setups in its tests. That no-retraining detail is a big part of why the story is relevant now. An elegant method that demands model-specific retraining is academically interesting. A method that promises drop-in compression starts to sound like something infra teams might actually try.

Why this matters now for serving stacks

The deeper reason TurboQuant matters is that it reframes where inference leverage may come from over the next cycle. The last year of infrastructure talk has been full of giant-model releases, bigger GPUs, and orchestration layers such as NVIDIA Dynamo. Those things matter. But they do not erase the memory-pressure problem. They often expose it more clearly.

Long-context serving is one of the places where that pressure becomes painfully concrete. If a compression method preserves quality while shrinking KV cache drastically, the same hardware can potentially hold more sessions, tolerate longer contexts, or postpone some capex. That is not a rounding error. It is product strategy disguised as quantization.

There is also a stack-level point here. Even strong compression results do not become savings on their own. They have to survive batching behavior, scheduler quirks, mixed request shapes, integration work, and the peculiar habits of real serving runtimes. That is why implementation work in systems like vLLM's Triton attention backend on AMD still matters. Compression wins on paper; serving stacks decide whether those wins make it to production.

So the right response is neither breathless excitement nor bored dismissal. Google may be right that memory-efficient quantization is becoming a decisive lever for long-context AI. The company has at least made a credible case that the economics are shifting in that direction. But the operational proof still needs to show up in mainstream inference stacks, in mixed workloads, and in the ugly ordinary traffic patterns that ruin pretty charts.

What to watch after the blog-post glow fades

The next signal is not another dramatic percentage. It is whether TurboQuant-like methods start appearing as normal options in the serving software people actually use, and whether they move fleet-planning assumptions in practice.

Watch for three things. First, whether the technique lands beyond Google's own framing and shows up in widely used inference stacks without heroic custom integration. Second, whether the gains hold under real deployment conditions instead of only benchmark-style long-context tests. Third, whether more infrastructure teams start treating KV-cache compression as a standard economic lever alongside batching, routing, and kernel optimization.

If that happens, this story will age as an early marker of where inference margins are going. Not toward one miraculous new model, but toward better control of the memory system wrapped around the model. That is a less cinematic headline. It is also the kind that tends to survive contact with the budget.

Source file

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Primary source for Google's March 25, 2026 public framing, the at-least-6x KV-cache memory claim, the up-to-8x H100 attention-speed claim, and the no- retraining positioning.

Primary technical source establishing that the paper predates this week's publicity cycle, describing TurboQuant's two-stage method, and reporting quality neutrality at 3.5 bits per channel with marginal degradation at 2.5 bits per channel.

Useful outside framing for how the broader tech press is translating the research into an inference-cost and on-device possibility story.

About the author

Lena Ortiz

Lena tracks the economics and mechanics behind AI systems, from serving architecture and open-weight deployment to developer tooling, platform shifts, product decisions, and the operational tradeoffs that shape what teams actually run. Her reporting is aimed at builders and operators deciding what to trust, adopt, and maintain.

- 24

- Apr 10, 2026

- Berlin

Archive signal

Reporting lens: Operating leverage beats ideological posturing.. Signature: If the cost curve moves, the product strategy moves with it.

Article details

- Category

- AI Infrastructure

- Last updated

- April 11, 2026

- Lead illustration

- TurboQuant matters if compression changes how much useful long-context work a fixed GPU budget can keep resident.

- Public sources

- 3 linked source notes

Byline

Covers the economics, tooling, and operating realities that shape how AI gets built, shipped, and run.