Anthropic study: AI users want help, not autonomy

Anthropic’s 80,508-interview Claude user study suggests the market wants productivity, learning, and cognitive support more than full AI autonomy.

The loudest user signal in Anthropic’s data is not “replace me.” It is “help me, but make it dependable.”

I think Anthropic's new 81,000-user interview project is useful for exactly one thing, and that one thing matters a lot.

It is not a referendum on what humanity wants from AI. It is not a neutral public-opinion poll. Anthropic invited Claude users to talk with Anthropic Interviewer, its Claude-powered interviewing system, over one week in December 2025. The appendix says 112,846 interviews came in, 80,508 survived the quality filter, and respondents wrote from 159 countries in 70 languages. That is a large sample. It is also a very specific sample.

So I read this as demand research from engaged AI users, not a universal mood ring. And honestly, that is still extremely valuable.

This is a power-user survey, not a referendum on humanity

Anthropic's appendix is unusually candid about the caveats. Respondents were existing Claude users who opted in. Occupational categories were inferred from self-descriptions. The interview order may have nudged some people to tie hopes and fears together more tightly than they otherwise would. Good. I would rather have a biased instrument that labels its bias than a fake neutral one pretending to speak for the planet.

That framing matters because active users are the people shaping the near-term market. Product teams are not building first for an abstract global public. They are building for the people willing to try, pay for, and keep using these systems now.

Users mostly want relief, not robot management

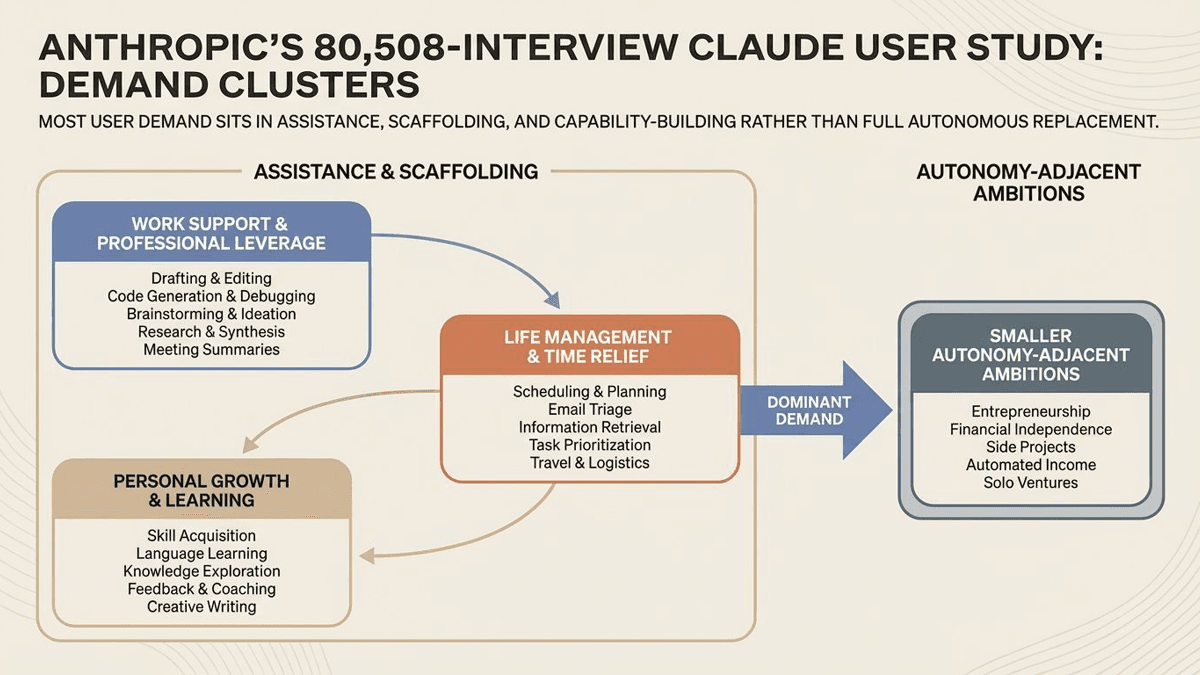

The category breakdown is the part I cannot stop staring at. Anthropic says the largest hope bucket was professional excellence at 18.8%, followed by personal transformation at 13.7%, life management at 13.5%, and time freedom at 11.1%. Financial independence came in at 9.7%, entrepreneurship at 8.7%, and learning and growth at 8.4%.

That does not sound like a population begging for a sovereign robot manager. It sounds like people asking for leverage, relief, and help with the boring parts of being alive. A good sous-chef, not a self-appointed emperor.

The delivery categories tell the same story. Productivity led at 32%. Cognitive partnership followed at 17.2%. Learning came in at 9.9%, technical accessibility at 8.7%, research synthesis at 7.2%, and emotional support at 6.1%. The center of gravity is assistance. Not disappearance of the human.

That is a useful correction to the current agent hype cycle. The industry loves to talk about ever-more-autonomous systems. Users, at least in this sample, mostly sound like they want a very capable helper that saves time and reduces mental clutter without turning into an overconfident houseguest.

Reliability is still the commercial choke point

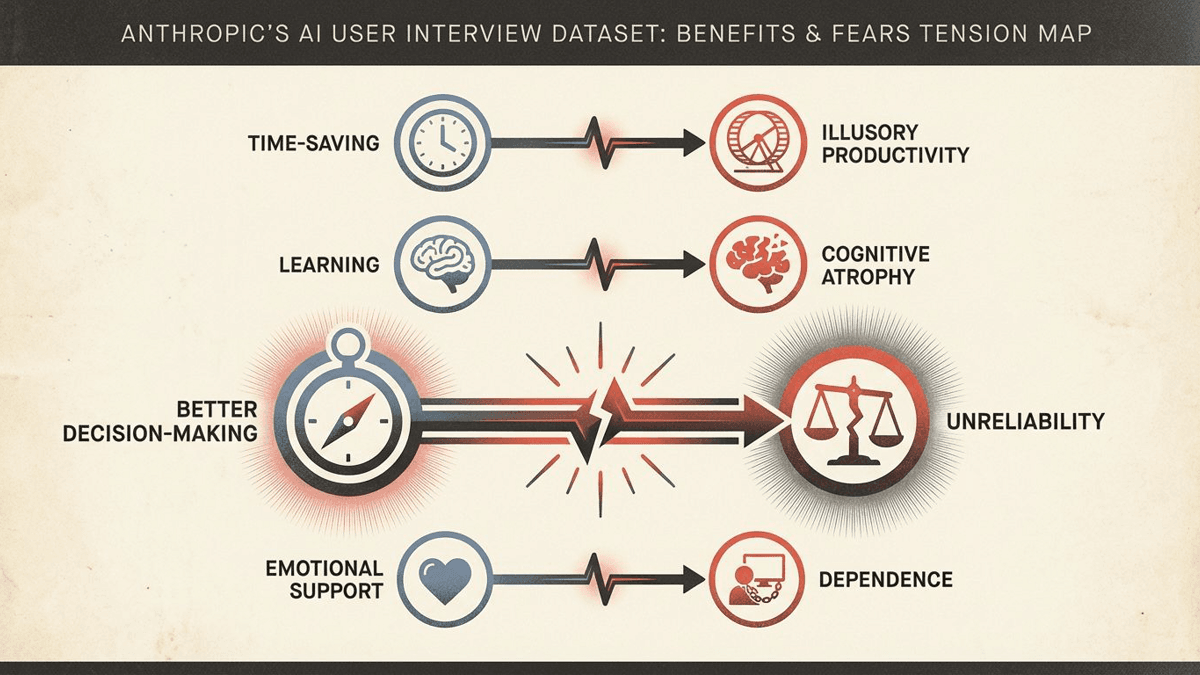

The fear rankings are the most commercially important part of the whole package. Anthropic says unreliability topped the concern list at 26.7%, followed by jobs and the economy at 22.3%, autonomy and agency at 21.9%, cognitive atrophy at 16.3%, and governance at 14.7%.

That ordering says a lot. The market can imagine ambitious futures for AI, but users are still getting hung up on a much plainer complaint: the system is wrong, flaky, overconfident, or so verification-heavy that the time savings disappear. I keep connecting this back to Together AI's reliability pitch. Reliability is not polish. It is the bottleneck.

Anthropic's appendix sharpens the point by showing how positive use cases often travel with matching worries: better decision-making alongside unreliability, learning alongside cognitive atrophy, emotional support alongside dependence. The helpful thing and the worrying thing are often the same mechanism wearing different shoes.

My read on what people actually want from AI

The lazy responses here are to oversell the study or dismiss it. I think both are wrong. Overselling it turns a vendor-run sample into a fake global poll. Dismissing it ignores the fact that engaged users are the people already shaping budgets, norms, and product direction.

The direction I see is pretty clear. Users closest to the tools are not mainly asking for maximum autonomy at any cost. They are asking for systems that make them more capable, less overloaded, and better supported. They also want those systems to stop flaking out.

People want help. Full stop.

If that holds, the near-term winners probably will not be the companies that promise the wildest autonomy. They will be the ones that deliver dependable leverage without making users feel replaced, misled, or managed. That is a much less cinematic story. I suspect it is also the one that will actually sell.

Source file

Public source trail

These links anchor the package to the underlying reporting trail. They are not a substitute for judgment, but they do show where the reporting starts.

Core source for the headline findings, category breakdowns, representative quotes, and the study’s stated framing.

Explains how Anthropic Interviewer works and why Anthropic sees the tool as a scalable qualitative-research method.

Provides the sample size, filtering details, classifier methodology, and explicit limitations that matter for honest framing.

Useful secondary synthesis of the main user-demand and concern patterns for comparison against Anthropic’s own framing.

Helpful outside summary of the study’s adoption framing and business-angle takeaways.

About the author

Maya Halberg

Maya writes across the AI field, from research claims and benchmark narratives to tools, products, institutional decisions, and market shifts. Her reporting stays focused on what changes once hype meets deployment, procurement, workflow reality, and human skepticism.

- Apr 11, 2026

- Stockholm · Remote

Archive signal

Reporting lens: Methodology over launch theater. Signature: A result only matters after the setup becomes legible.

Article details

- Category: AI Research
- Last updated: April 11, 2026
- Lead illustration: Anthropic’s interviews point to a market that wants useful assistance first. The trust bottleneck is still whether the system reliably holds up.
- Public sources: 5 linked source notes

Byline

Writes across the AI field with an eye for what survives contact with real users, real budgets, and real operating constraints.